Laptops with Nvidia 2070 or 2080 Max-Q graphics are often sold with either 80 watt or 90 watt power limits, but how much of a difference does the power limit actually make to performance, thermals, clock speeds and power draw? The difference is honestly not what I expected.

I’m testing with the Gigabyte Aero 17 XB laptop, so we’ve got RTX 2070 Super Max-Q graphics. I’ve chosen this laptop because the Gigabyte control center software lets us swap between running the GPU at 80 or 90 watts, a feature that not many laptops actually have. Most laptops you buy come stuck at either 80 or 90 watts and you can’t change it. More power will generally equal more performance, but when you see laptops with Max-Q graphics for sale they don’t advertise the power limits, so unless you check reviews like mine and find out the limits, it’s basically Russian roulette. We’ll start out looking at the games, then compare thermals, clock speed, power draw and professional 3D applications afterwards.

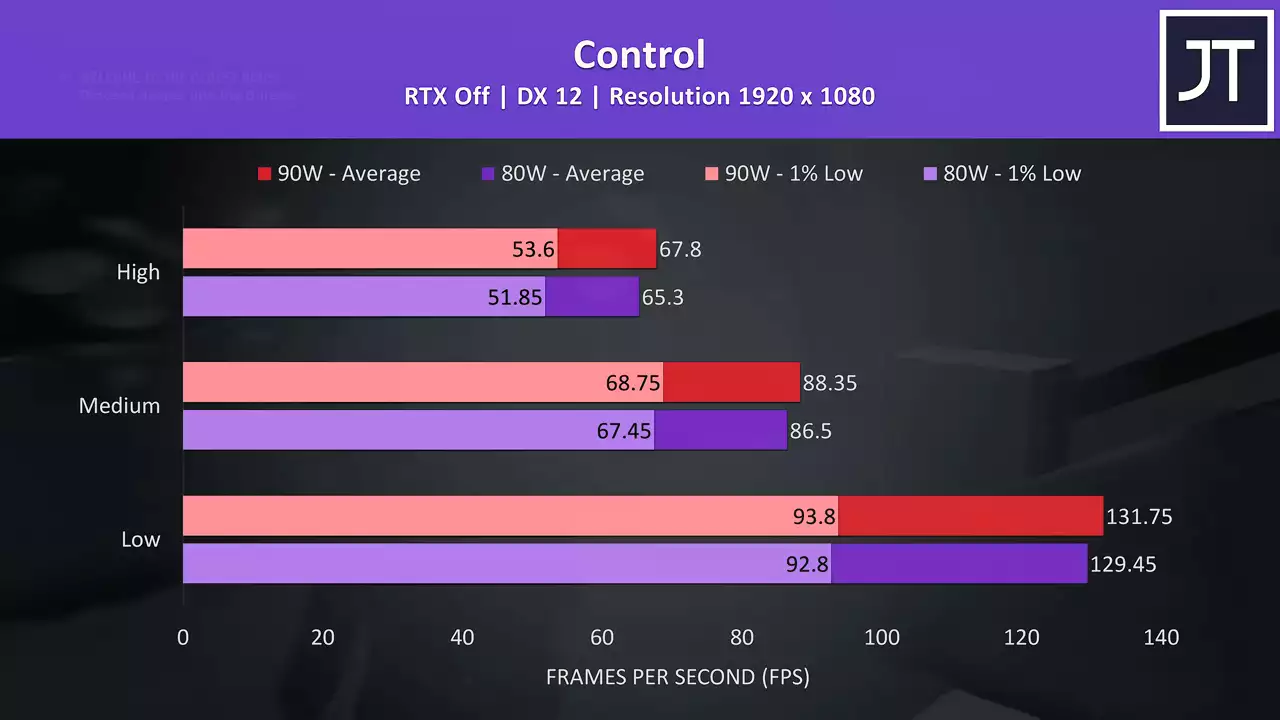

I’ve tested Control by walking through the same section of the game. The 90 watt power limit is shown by the red bars, and the lower 80 watt limit by the purple bars. In this game the difference appears quite minimal, with the 90 watt config just 3.8% faster at the high setting preset. I’ve also tested with RTX on, and again the 90 watt configuration performed better, this time almost 5% faster in average frame rate at high settings.
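For anyone wanting to reproduce the "X% faster" figures used throughout, here is a minimal sketch of the arithmetic, assuming the percentage is the 90 watt average FPS relative to the 80 watt average FPS. The function name and the frame rates are hypothetical placeholders, not measurements from the charts.

```python
def percent_faster(fps_a: float, fps_b: float) -> float:
    """Return how much faster fps_a is than fps_b, as a percentage of fps_b."""
    if fps_b <= 0:
        raise ValueError("baseline frame rate must be positive")
    return (fps_a / fps_b - 1.0) * 100.0


# Hypothetical frame rates for illustration only (NOT values from the charts).
fps_90w = 105.0
fps_80w = 100.0

print(f"{percent_faster(fps_90w, fps_80w):.1f}% faster")  # prints "5.0% faster"
```

The 80 watt result is the baseline here, so a negative value would mean the 90 watt run was behind.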

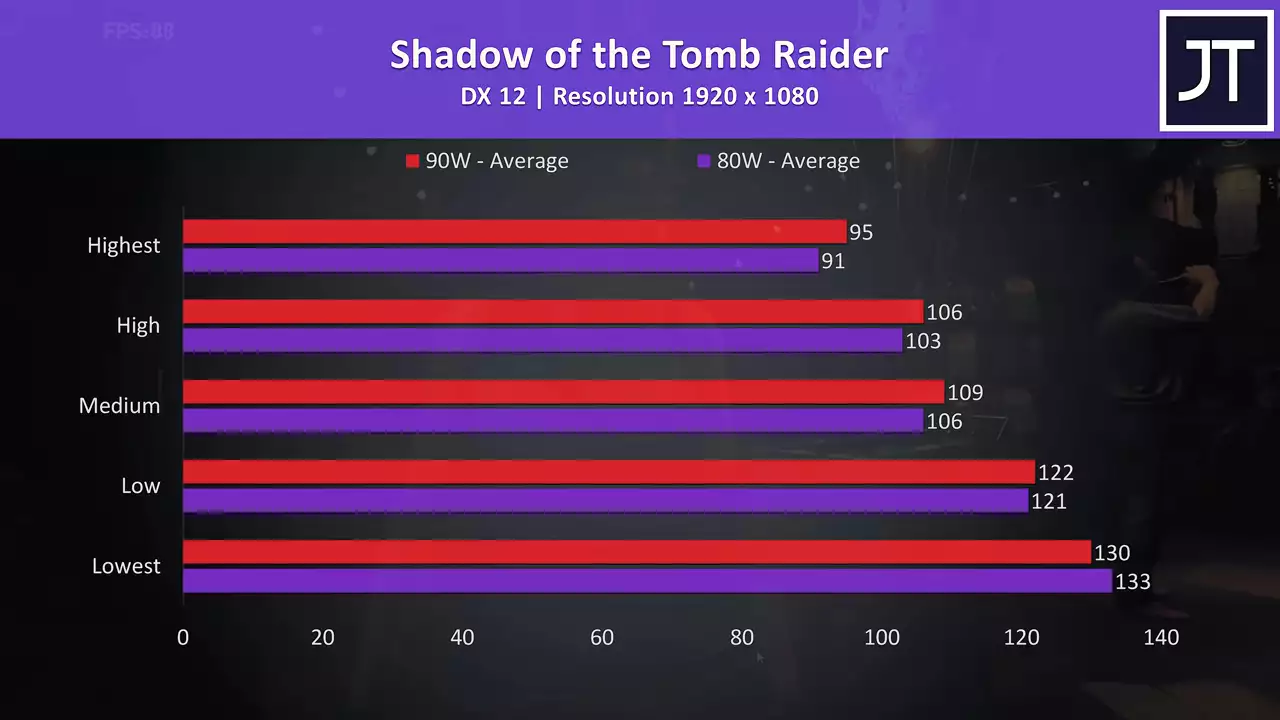

Shadow of the Tomb Raider was tested using the game’s built-in benchmark tool. The 90 watt config was ahead at all setting levels, though not by much, with just a 4.4% lead at highest settings.

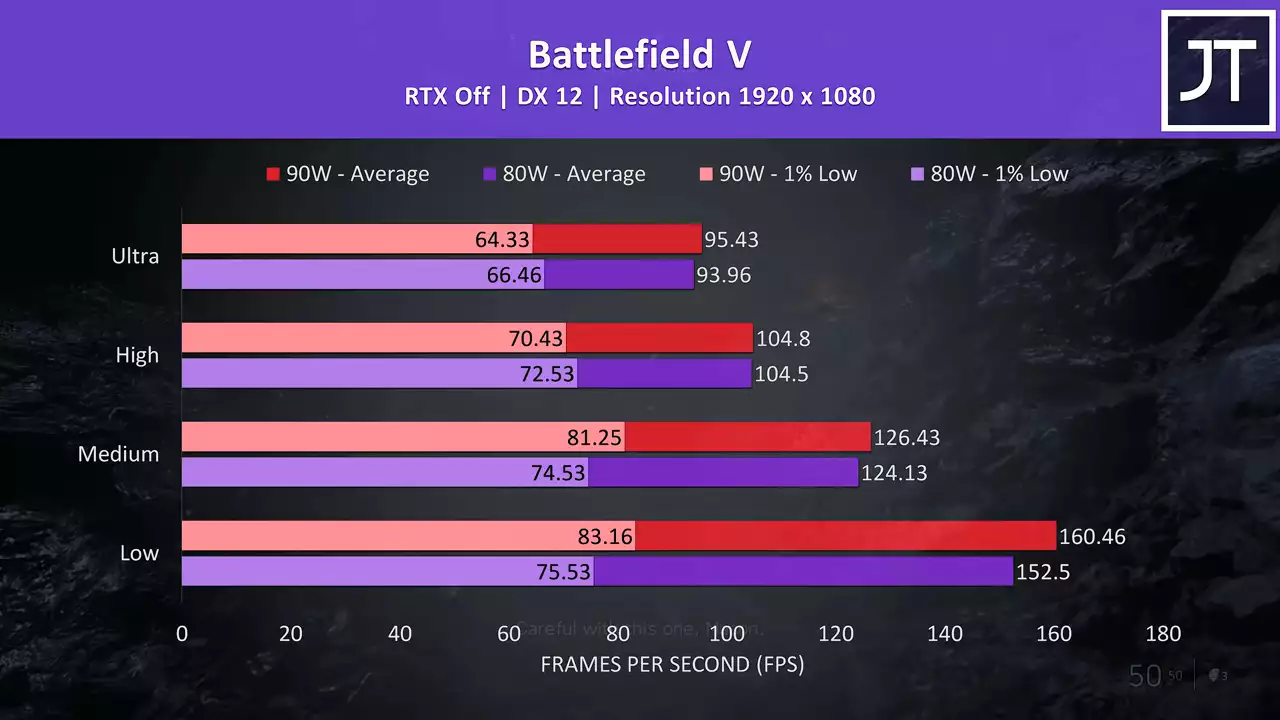

Battlefield V was tested in campaign mode, and interestingly the difference seems larger at lower setting levels. The 1% low was actually a little ahead with the 80 watt limit at high and ultra, though 1% low consistency in this test isn’t the best.

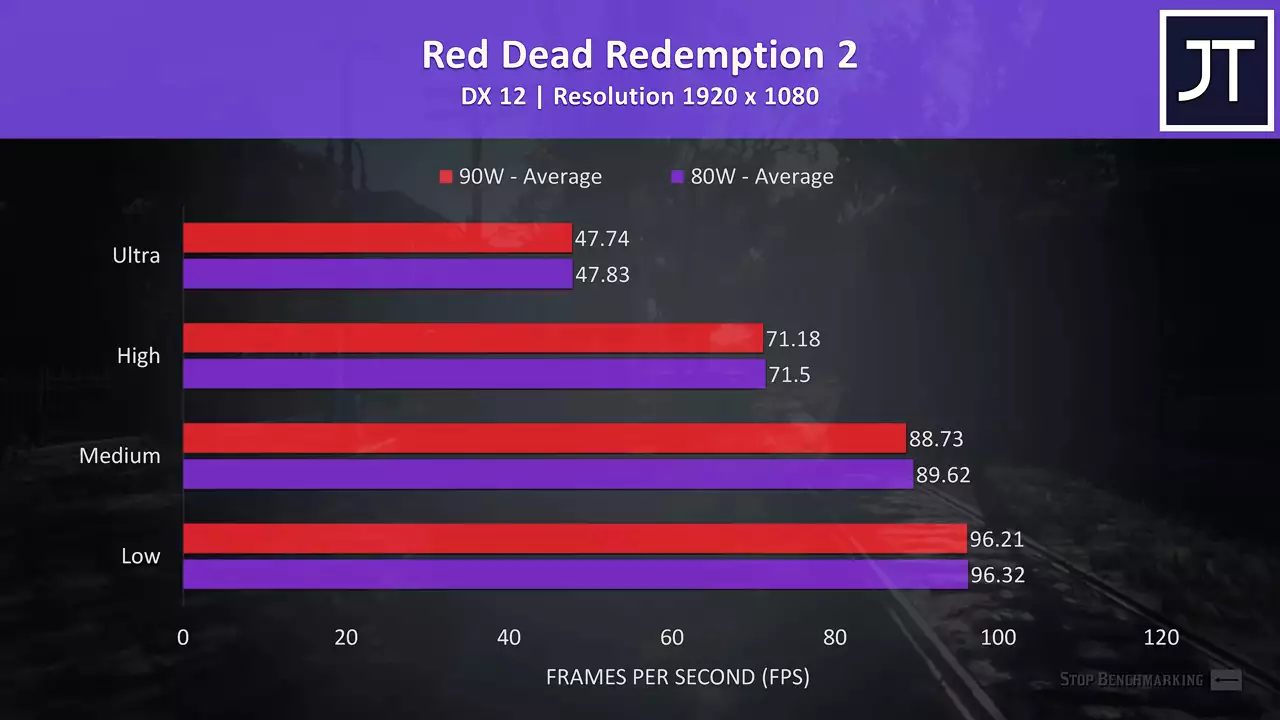

Red Dead Redemption 2 was tested using the game’s benchmark, and there’s essentially no difference between the two here. Technically the 90 watt configuration was behind at all setting levels, but it’s so close that it’s well within the margin of error.

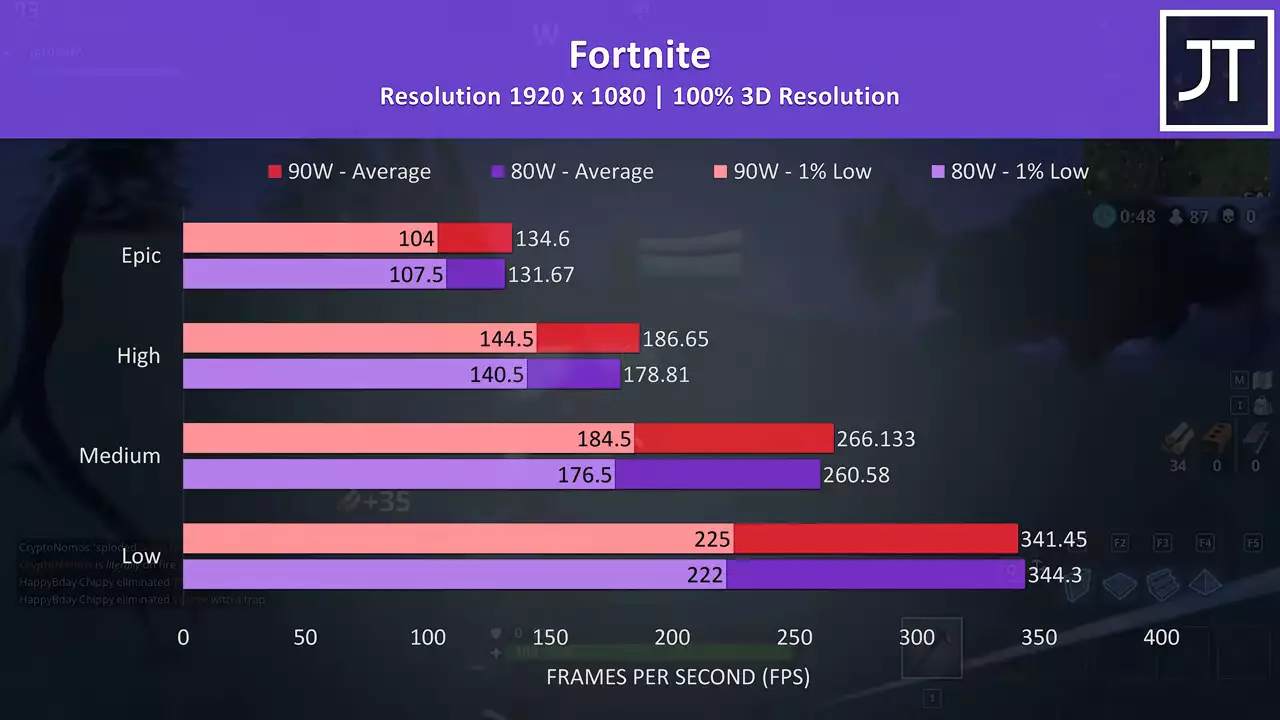

Fortnite was tested with the replay feature using the exact same replay for both 80 and 90 watt configurations. The results were quite close, with epic settings just 2% faster in average frame rate at 90 watts.

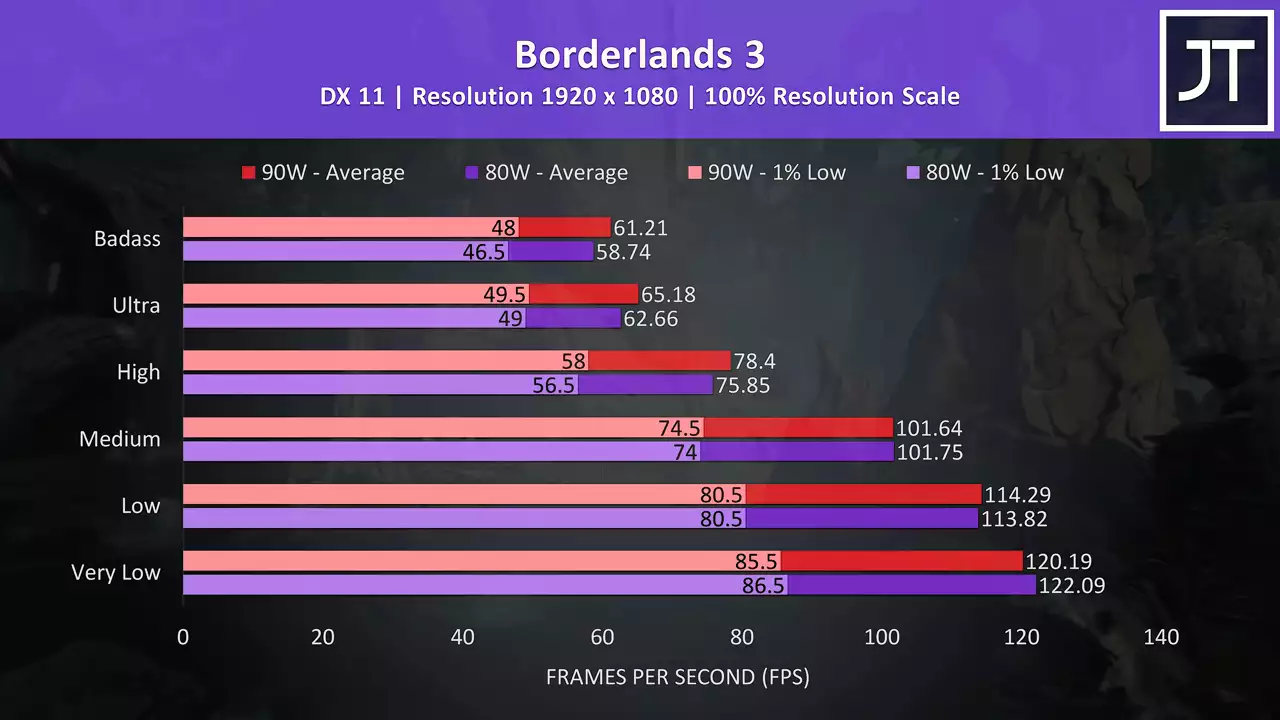

Borderlands 3 was tested with the built-in benchmark tool, and at the highest setting level here the 90 watt config was 4.20% faster, so some blazing speeds there.

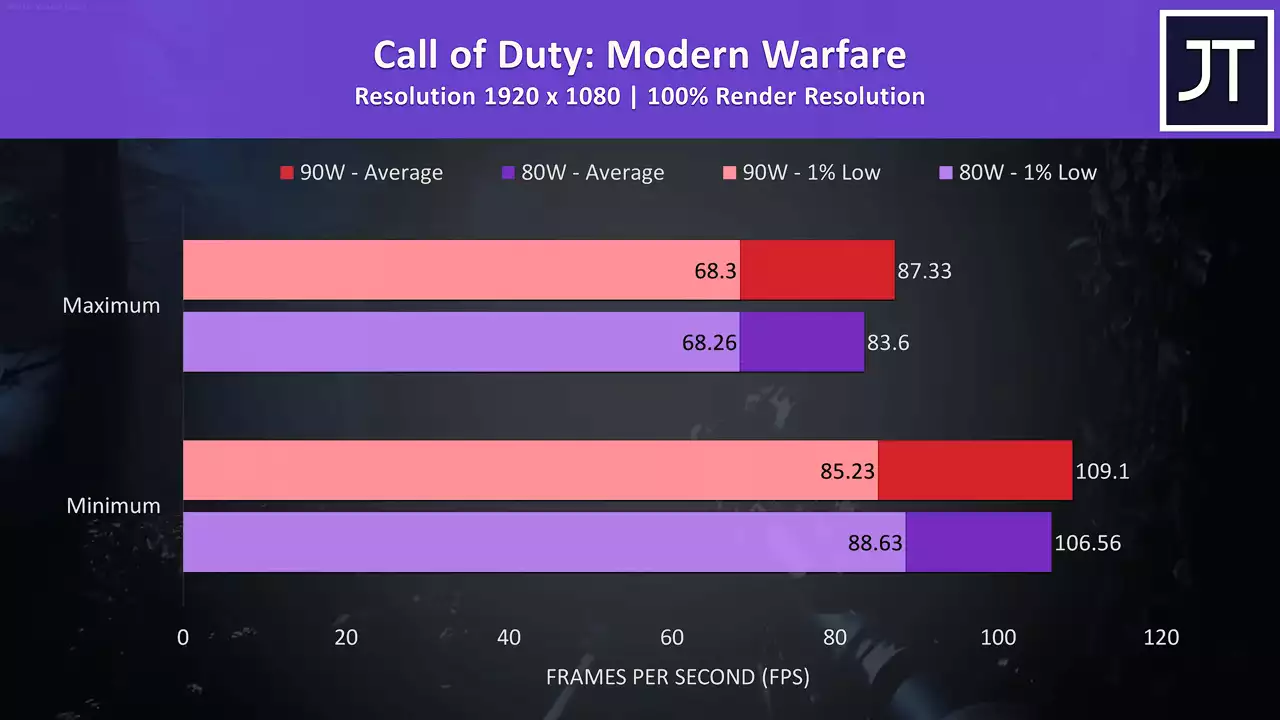

Call of Duty Modern Warfare was tested with either max or min settings as it doesn’t have built-in presets. At max settings the average FPS from the 90 watt config was around 4.5% faster.

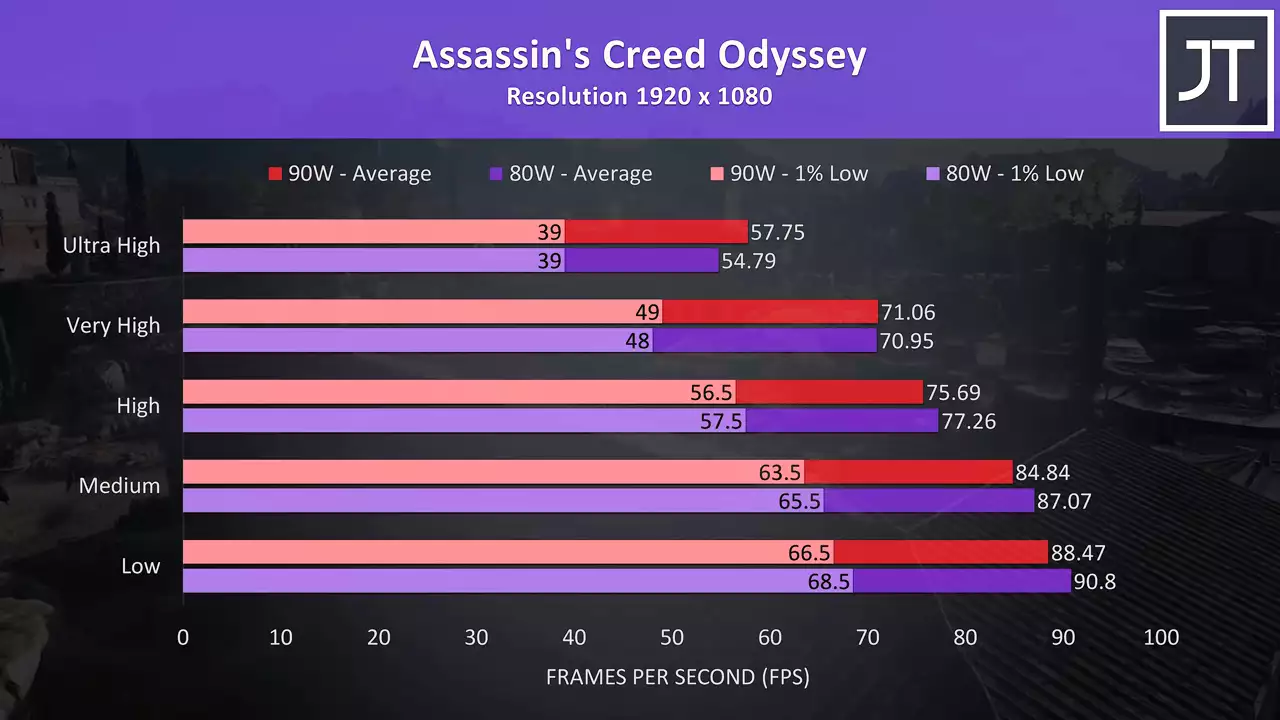

Assassin’s Creed Odyssey was tested with the game’s benchmark tool. Interestingly the 90 watt results were behind between low and high settings, similar to what we saw in some other games, but then when we’re presumably most GPU bound at max settings the 90 watt config was 5% faster in average FPS.

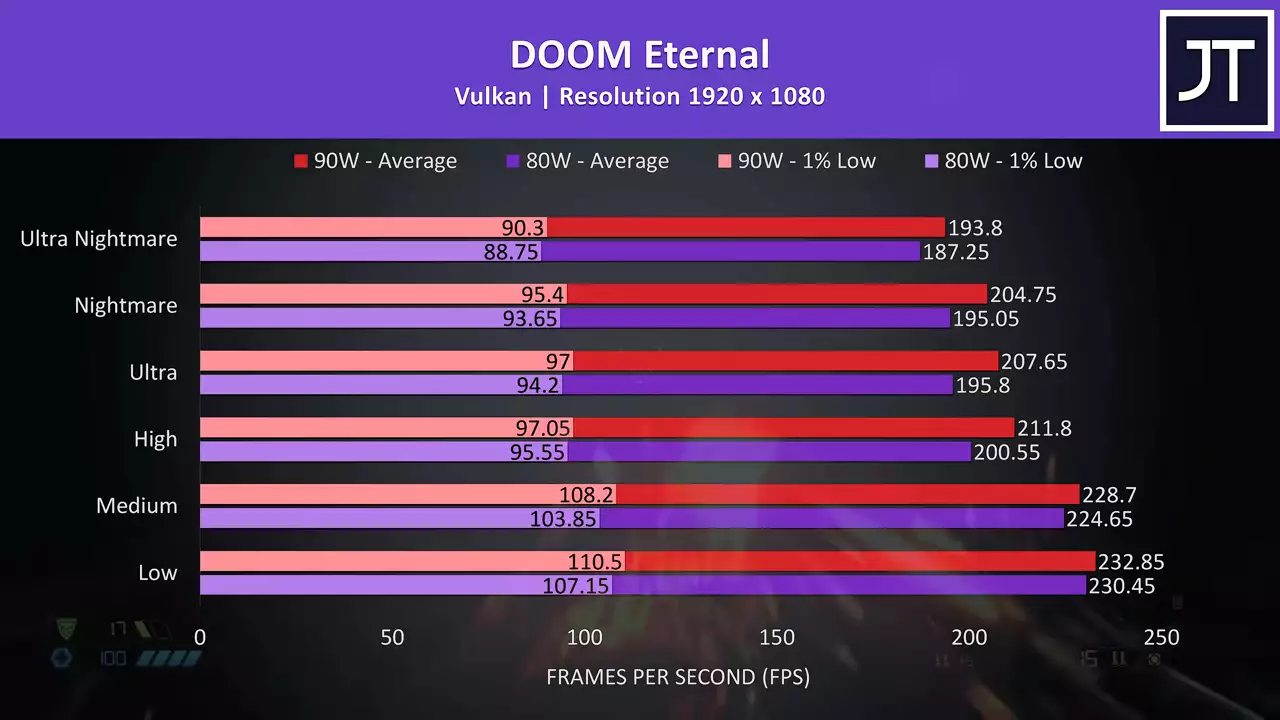

DOOM Eternal was tested with Vulkan, and the 90 watt config was ahead at all setting levels for both average FPS and 1% lows, though it wasn’t by much. At ultra nightmare settings the 90 watt average FPS is just 3.5% faster.

CS:GO was tested using the Ulletical FPS benchmark, and the difference was basically nothing at max setting levels, with only a small gain with everything at minimum using the 90 watt configuration.

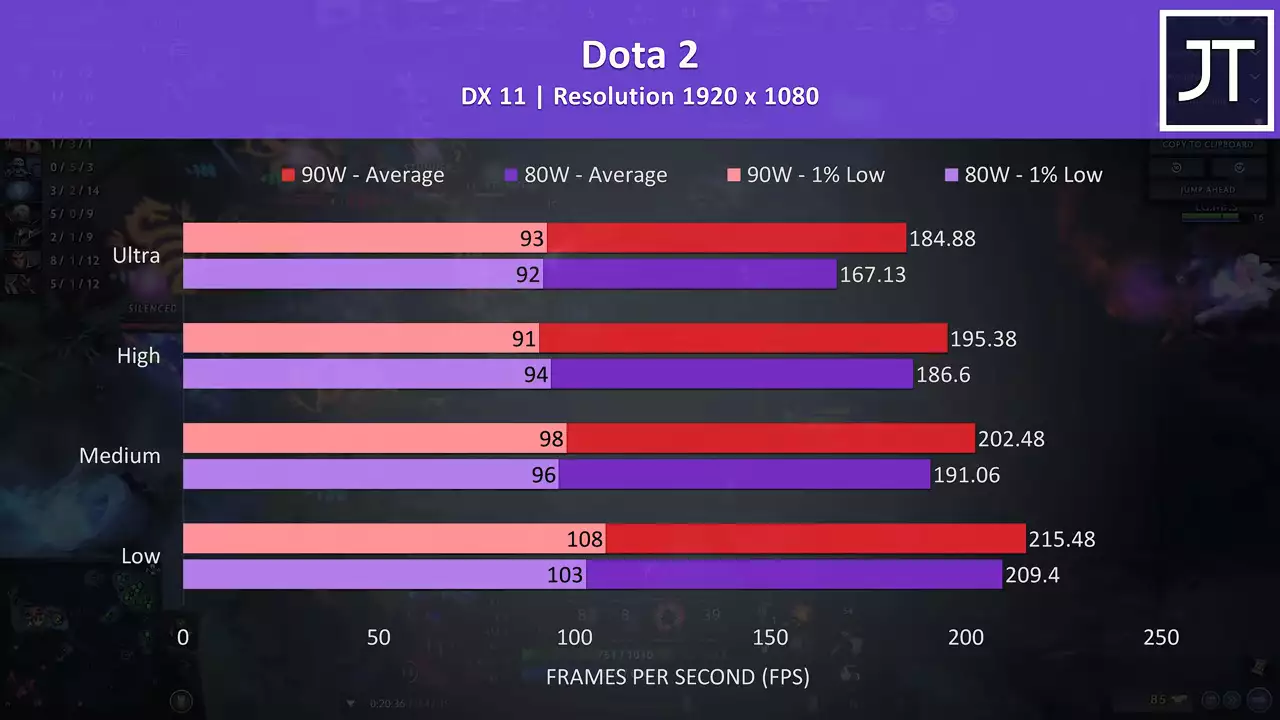

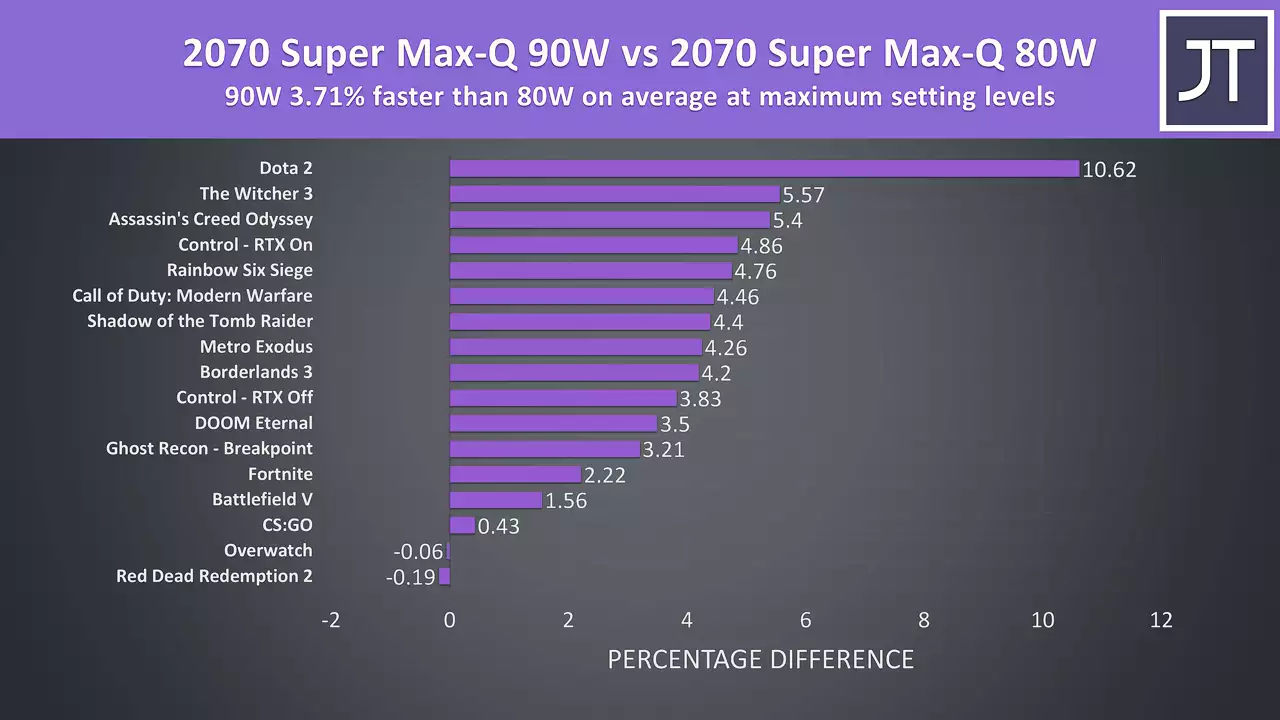

Dota 2 was tested playing in the middle lane, and though it may not look like much, this game saw the largest difference at ultra settings out of all games tested, where the 90 watt test was almost 11% faster.

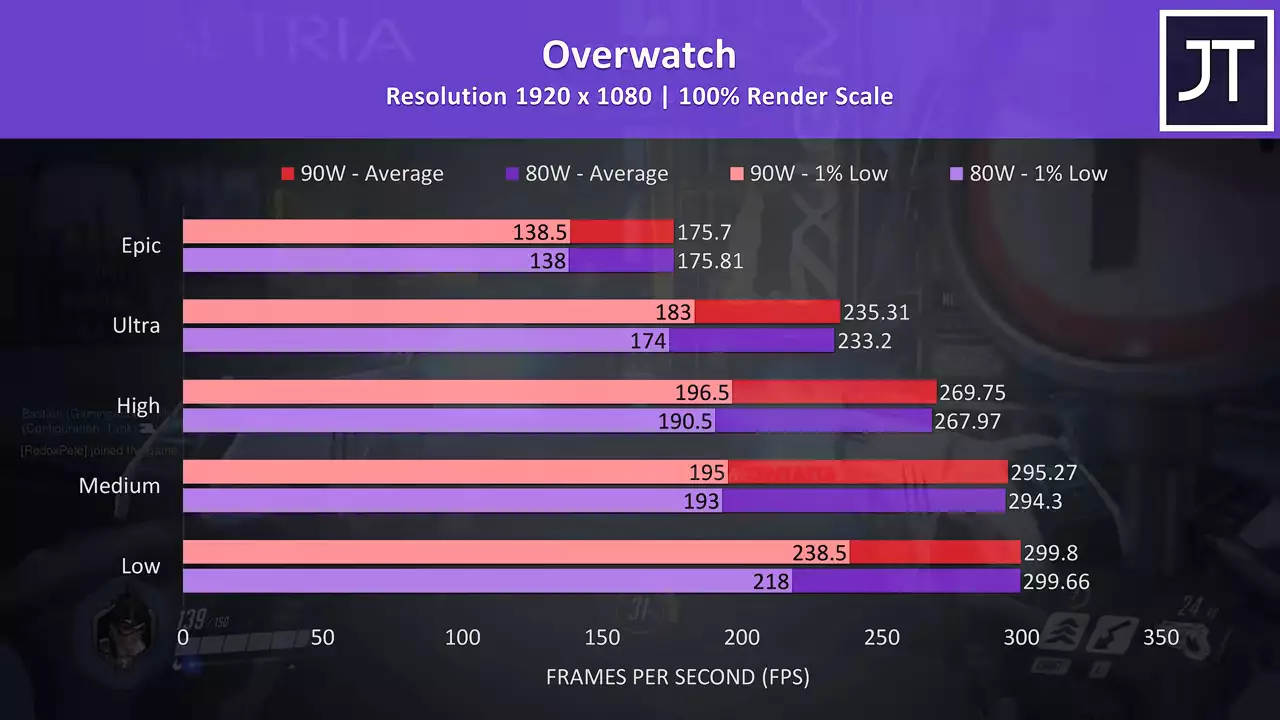

Overwatch was tested in the practice range as it allows me to do the exact same test pass, perfect for a comparison like this. There was a larger boost to 1% low performance in this game at 90 watts, though the average frame rates were extremely close at all setting levels.

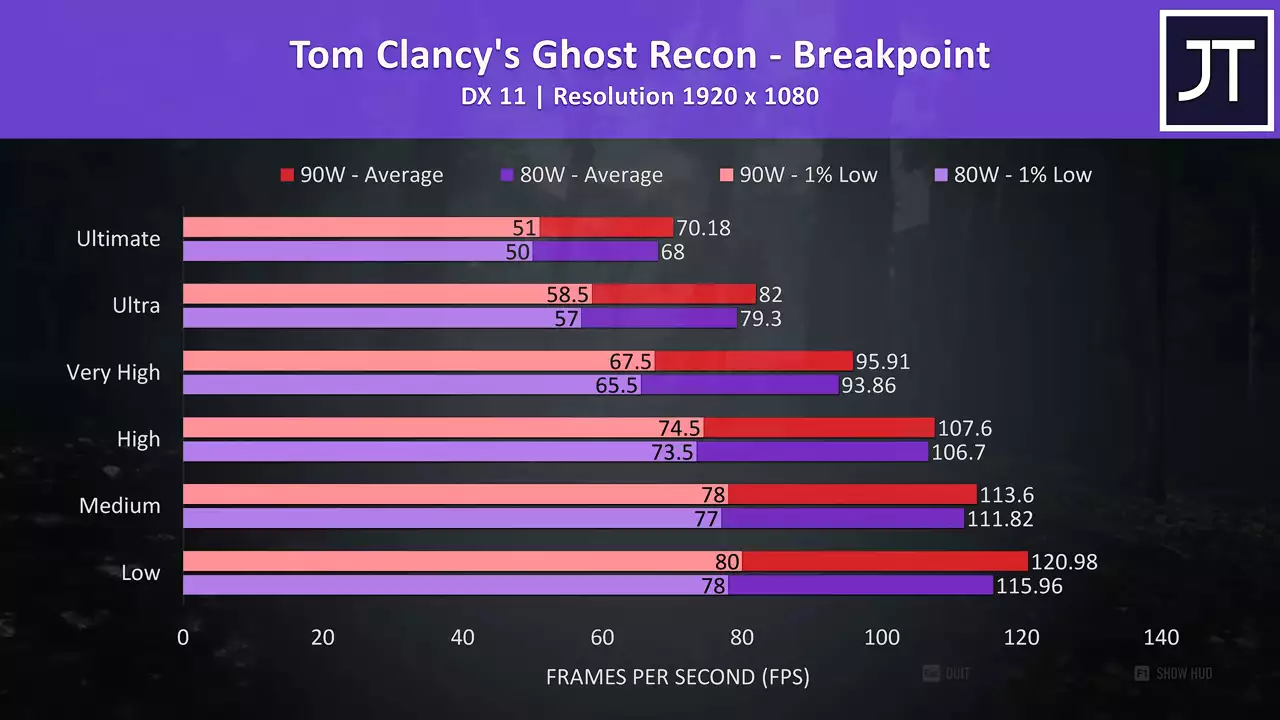

Ghost Recon Breakpoint was tested using the game’s benchmark, and there was just a 3.2% boost to average FPS at 90 watts here with the ultimate setting preset.

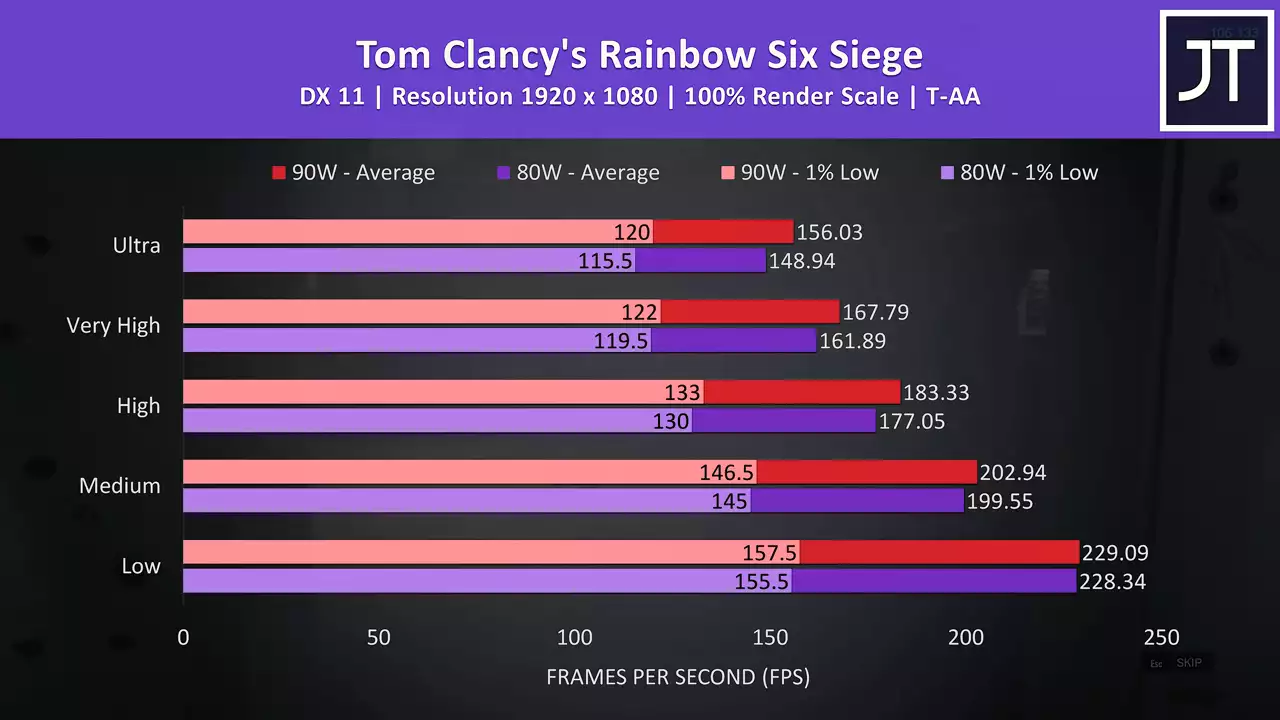

Rainbow Six Siege was also tested with the game’s benchmark, but with Vulkan this time. The 90 watt tests were in front at all setting levels, and at ultra settings this resulted in an almost 4.8% higher average frame rate.

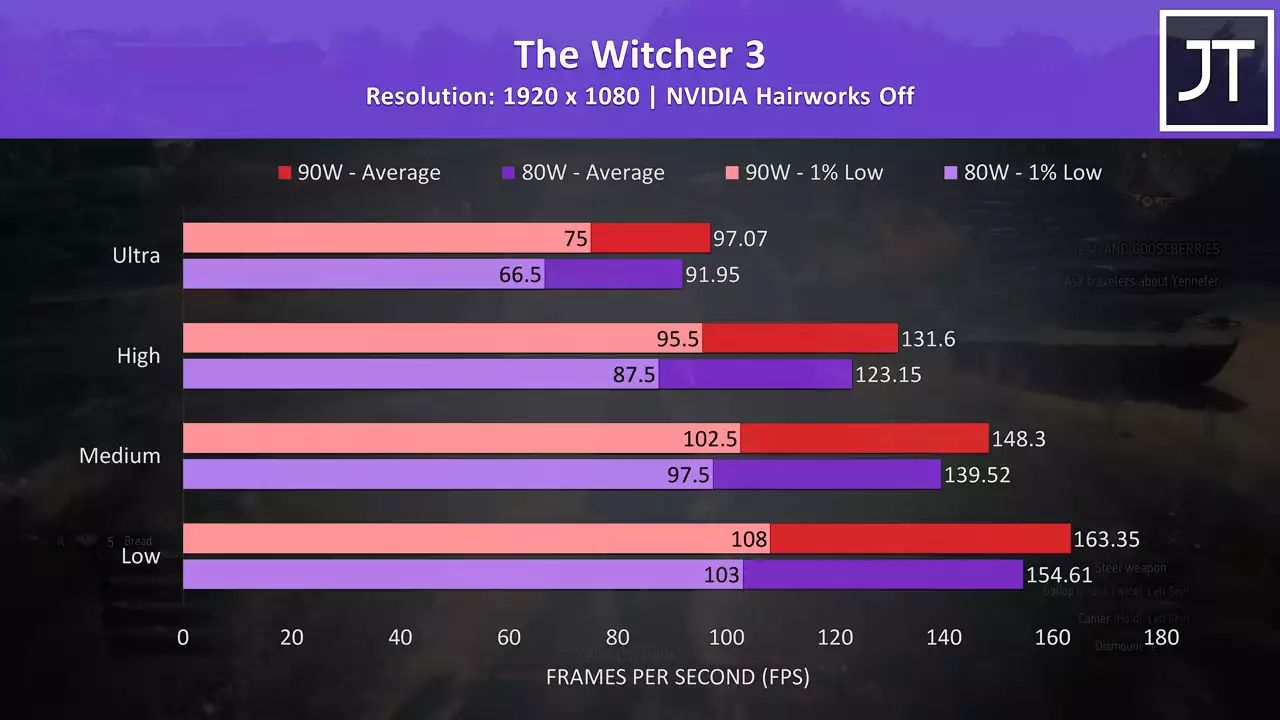

The Witcher 3 tends to be one of the more GPU bound games tested here, which may explain the above average improvement seen with the 90 watt configuration. At ultra settings the 1% low was almost 13% faster at 90 watts, while the average FPS was 5.6% faster. That might not sound like much, but it puts it in second place out of all titles tested.

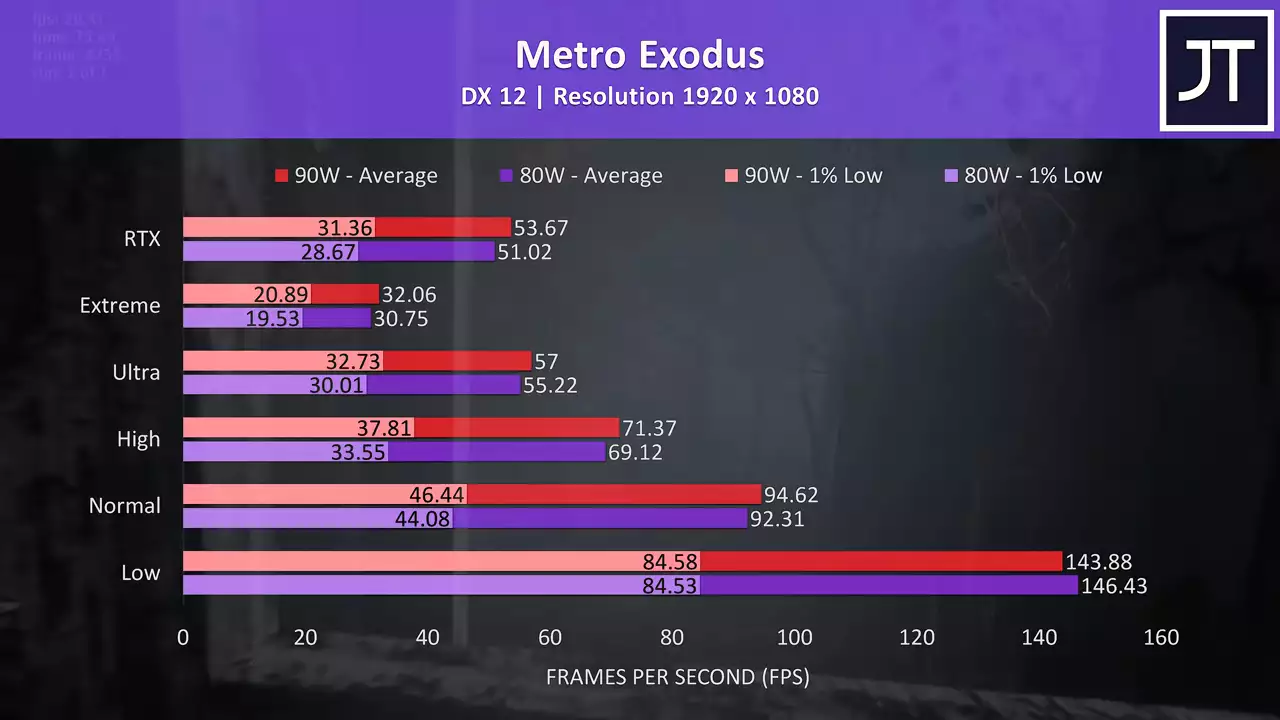

Metro Exodus was tested with the game’s benchmark, and the 90 watt config was ahead at all setting levels except for low, presumably as the GPU matters less and less at lower setting levels. In any case, at extreme the 90 watt test was just 4% faster than the 80 watt result.

On average across all of the games tested, the 90 watt configuration was just 3.7% faster than the 80 watt configuration in terms of average frame rate. As we can see, results vary from essentially no change in Red Dead Redemption 2 and Overwatch to almost 11% in Dota 2, granted that was more of an outlier in the results. Other games still only saw around a 5% performance improvement best case, which at the end of the day may not sound like much, but when you consider that the RTX 2080 Super Max-Q is around 8% faster than the 2070 Super Max-Q on average, in a way it’s not far off stepping up to a whole new tier of GPU.
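As a rough cross-check of that average, here is a sketch that averages the rounded percentages quoted in the text above at each game’s highest setting level, then compares the gain against the roughly 8% gap to the 2080 Super Max-Q. Several things here are assumptions rather than chart data: games described as essentially unchanged are entered as 0.0, "almost 11%" is entered as 11.0, Battlefield V is left out because no percentage was quoted for it, and `fraction_of_tier_gap` is a hypothetical helper name. The published 3.7% comes from the full results, so this only approximates it.

```python
from statistics import mean

# Average-FPS gains (%) for 90 W over 80 W, as quoted in the text.
# ASSUMPTIONS: "essentially no difference" games are entered as 0.0,
# "almost 11%" as 11.0, and Battlefield V is omitted (no figure quoted).
quoted_gain_pct = {
    "Control": 3.8,
    "Shadow of the Tomb Raider": 4.4,
    "Red Dead Redemption 2": 0.0,  # assumed
    "Fortnite": 2.0,
    "Borderlands 3": 4.2,
    "Call of Duty Modern Warfare": 4.5,
    "Assassin's Creed Odyssey": 5.0,
    "DOOM Eternal": 3.5,
    "CS:GO": 0.0,  # assumed
    "Dota 2": 11.0,  # "almost 11%"
    "Overwatch": 0.0,  # assumed
    "Ghost Recon Breakpoint": 3.2,
    "Rainbow Six Siege": 4.8,
    "The Witcher 3": 5.6,
    "Metro Exodus": 4.0,
}


def fraction_of_tier_gap(gain_pct: float, tier_gap_pct: float = 8.0) -> float:
    """Express a gain as a share of the 2070 Super -> 2080 Super Max-Q gap."""
    return gain_pct / tier_gap_pct


avg = mean(quoted_gain_pct.values())
print(f"approximate average gain: {avg:.1f}%")
print(f"3.7% as a share of the 8% tier gap: {fraction_of_tier_gap(3.7):.0%}")
print(f"5.0% as a share of the 8% tier gap: {fraction_of_tier_gap(5.0):.1%}")
```

With these inputs the average lands within rounding of the 3.7% figure, and the 3.7% average works out to a little under half of the gap between the two GPU tiers.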

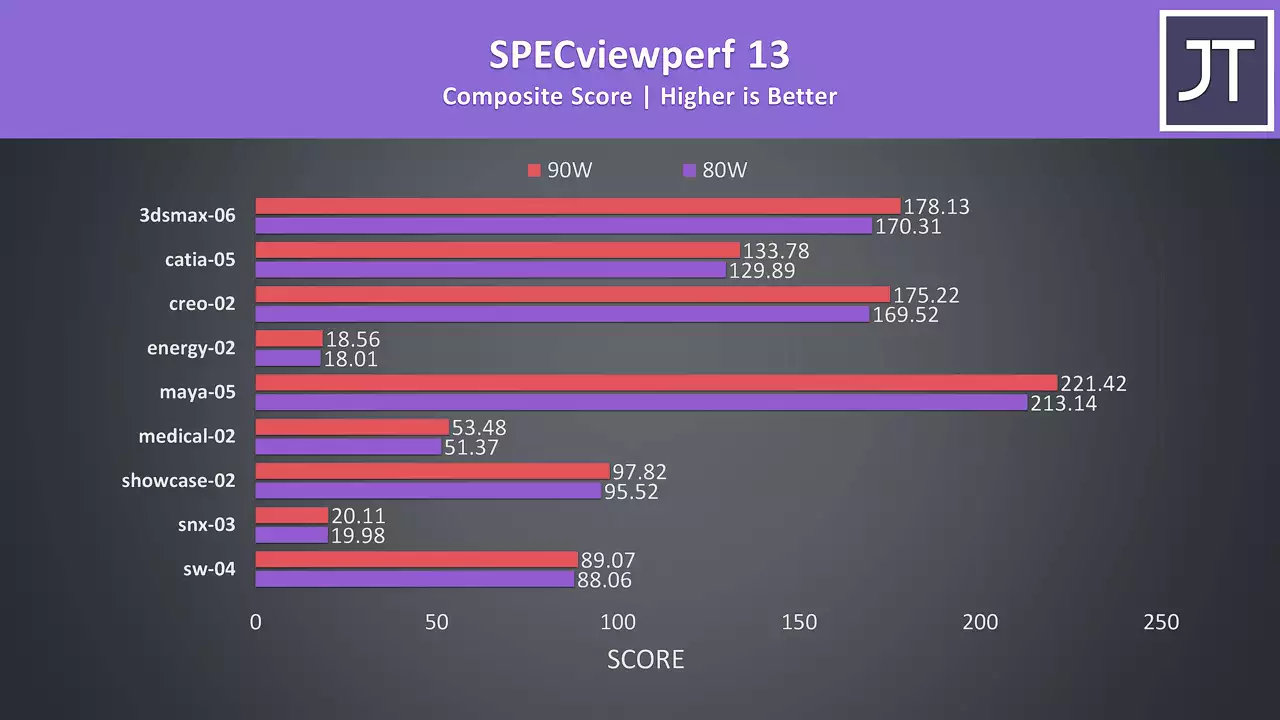

I’ve run some 3DMark tests, and the 90 watt configuration was 5% faster in the Fire Strike graphics score, 6% faster in the Time Spy graphics score, and 5% faster in the Port Royal test, which uses ray tracing.

I’ve also tested SPECviewperf which tests professional 3D workloads. The 90 watt configuration was ahead in all cases, however depending on the specific test the difference was often not very much.

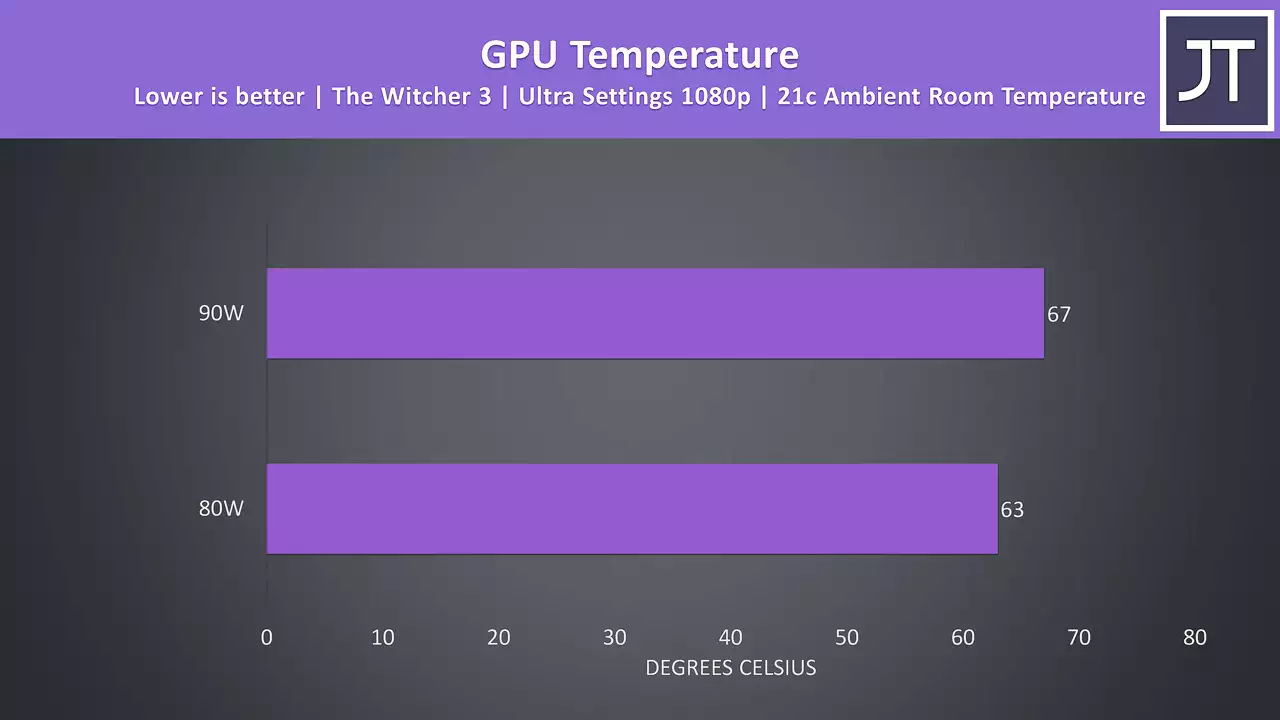

These are the GPU temperatures while playing The Witcher 3 at ultra settings at 1080p. The 90 watt configuration was just 4 degrees warmer, so not a big difference, but as expected adding more power does equal more heat.

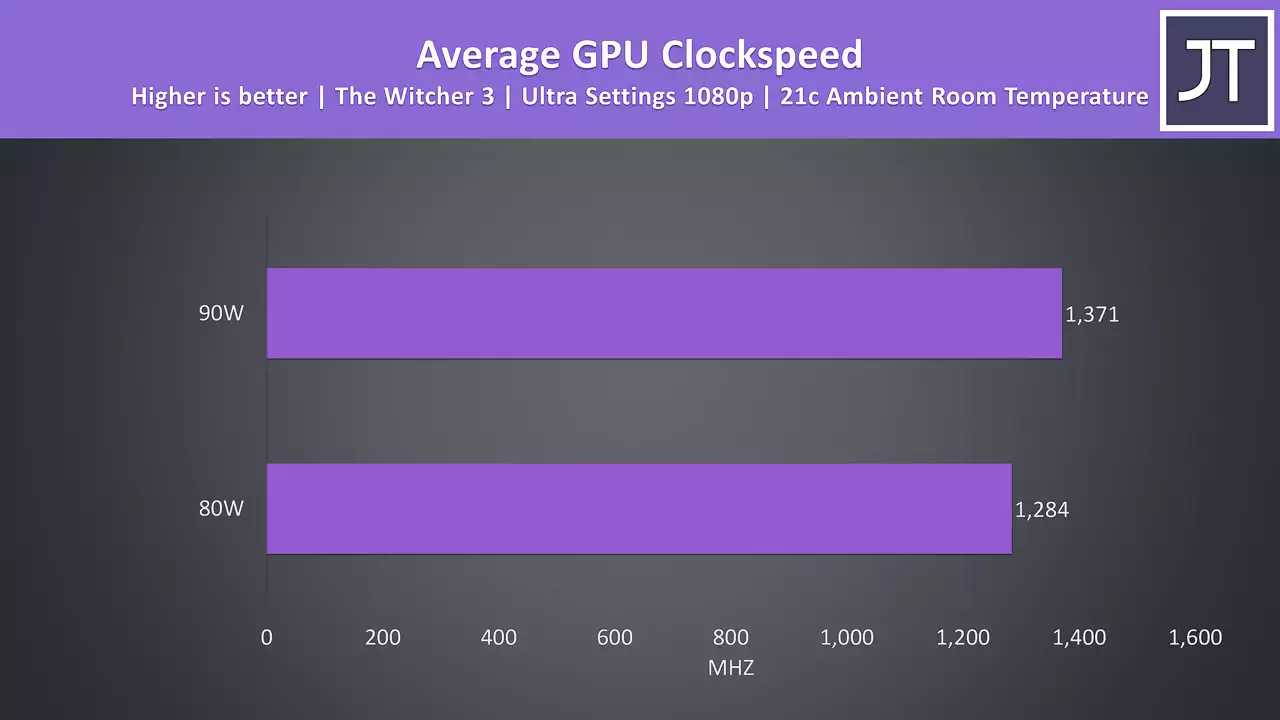

When we look at clock speed differences, the 90 watt configuration is almost 7% faster than the 80 watt configuration.

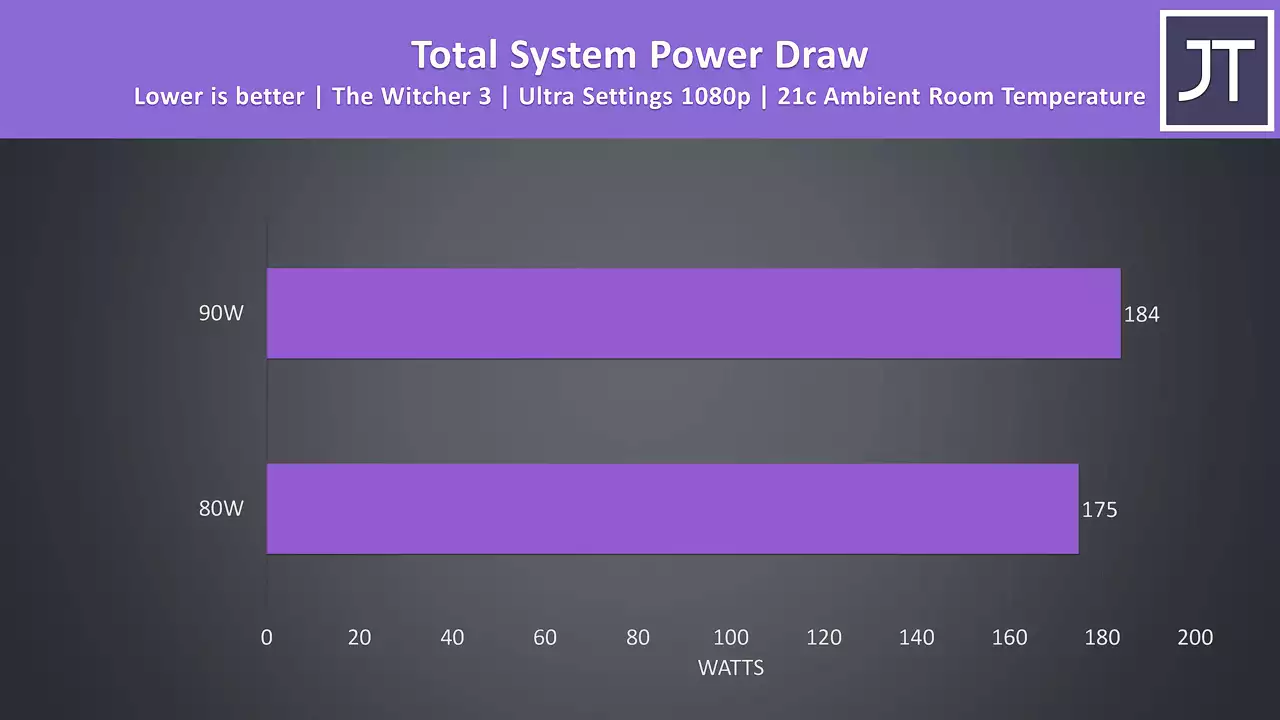

This is while drawing around 5% more power from the wall in this same game.
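To put the clock speed and power numbers side by side, here is a small sketch of the arithmetic using only the figures quoted above: the 80 and 90 watt GPU limits, the almost 7% clock speed increase, and the roughly 5% higher draw at the wall. The helper name `pct_increase` is hypothetical. Keep in mind the wall measurement covers the whole laptop rather than just the GPU, which is presumably why a 12.5% higher GPU limit shows up as a smaller percentage there.

```python
def pct_increase(new: float, old: float) -> float:
    """Percentage increase going from old to new."""
    return (new / old - 1.0) * 100.0


# GPU power limits from the article.
gpu_limit_increase = pct_increase(90.0, 80.0)

# Rounded figures quoted in the text for The Witcher 3 test.
clock_gain_pct = 7.0       # "almost 7%"
wall_power_gain_pct = 5.0  # "around 5%"

# Relative GPU clock per unit of total system power (1.0 = unchanged).
clock_per_wall_watt = (1 + clock_gain_pct / 100) / (1 + wall_power_gain_pct / 100)

print(f"GPU power limit increase: {gpu_limit_increase:.1f}%")  # prints 12.5%
print(f"clock speed per wall watt, 90 W vs 80 W: {clock_per_wall_watt:.3f}")
```

A ratio slightly above 1.0 means the clock speed rose a little more than total system power did in this one test.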

As we can see, there is definitely a difference between the 80 and 90 watt configurations, however I was quite surprised that the differences were as small as they were. I honestly thought it would matter more.

At the same time though, I think this is still pretty crap from Nvidia, as companies advertising laptops with Max-Q graphics never specify the power limit they run at. You have no idea which level of performance to expect from your hardware unless you check reviews like mine that actually tell you the power limits the hardware runs at.

This is basically the only issue I have with Max-Q personally: you just don’t know what you’re getting, as performance depends on wattage and that’s unclear on a per laptop basis.

Other people seem to complain that Max-Q exists at all, but I disagree with that. If you want the same GPU chip in a thinner machine, a lower power limit is required so that thermals remain in check. If you want a higher power limit, then consider buying a slightly thicker machine, or accept that your thinner machine will run blazingly hot like the ASUS Zephyrus GX502.

I have no problem with that difference existing. What I do have a problem with is different companies running their GPUs at who knows what limits, as the customer can’t easily see what they’re buying.

Anyway, let me know what you thought of the performance differences between the 80 and 90 watt Max-Q configurations down in the comments. I’m keen to hear your thoughts about customers basically having no idea which variant they’re getting when buying a new laptop.