Although multi-GPU setups aren't quite as common as they used to be, they're still one of the hallmarks of a high-end computer, because, yeah, using more of them is a pretty intuitive solution to the need for more power. But how far back does the multi-GPU timeline go?

Even though the practice of using multiple GPUs arguably had its heyday in the late 2000s and the early 2010s, its roots actually go back to 1998 and a company that doesn't even exist anymore. The 3dfx Voodoo II introduced us to SLI, which, back then, stood for scan-line interleave, an incredibly sexy name, even if the card's design wouldn't necessarily catch our eye today.

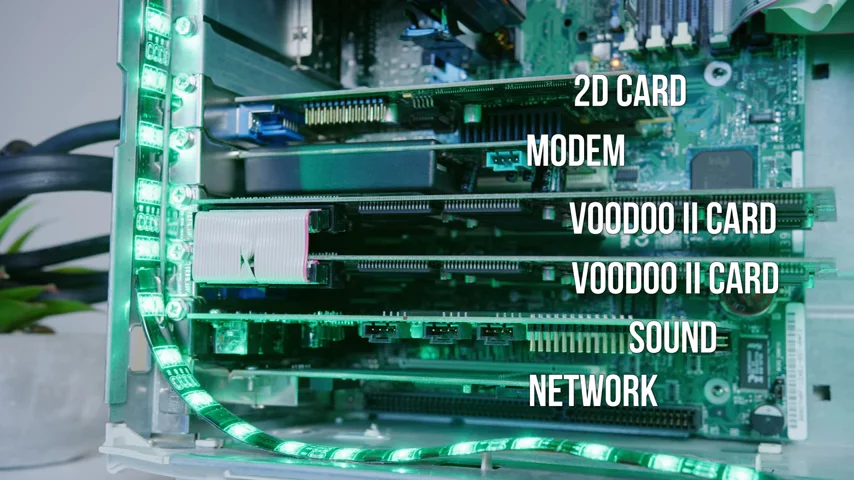

But looks aren't everything, as the Voodoo II actually had three processing chips on one card, meaning two cards in SLI packed a really serious punch, for the time anyway.

The max resolution was a whopping 1024 by 768, and you actually had to pair your Voodoos with a dedicated 2D card, meaning the setup would take up lots of room in your rig and also hit your bank account pretty hard. But of course, 3dfx didn't last much longer, and Nvidia ended up buying their SLI technology and renaming it Scalable Link Interface, even sexier.

The first SLI consumer cards from Nvidia hit the market in 2004. The earliest Nvidia SLI GPUs were part of the GeForce 6 series and sped up performance by using schemes called alternate frame rendering (AFR) and split frame rendering (SFR). In alternate frame rendering, one card would render odd-numbered frames while the other would render even-numbered frames, whereas in split frame rendering, each card would render part of the same frame.

SFR was particularly interesting since it used an algorithm to determine the most efficient way to split a frame between two cards, with varying degrees of success. Ultimately, whether AFR or SFR was used depended on which one was best for a specific game, as determined by Nvidia's engineers.
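To make the difference between the two schemes a bit more concrete, here is a minimal Python sketch. It is only an illustration, not Nvidia's actual driver logic: `afr_assign`, `SfrBalancer`, the `step` size, and the feedback rule that nudges the split line toward the slower card are all assumptions made up for this example.

```python
from dataclasses import dataclass


def afr_assign(frame_index: int, num_gpus: int = 2) -> int:
    """Alternate frame rendering: deal whole frames out round-robin."""
    return frame_index % num_gpus


@dataclass
class SfrBalancer:
    """Split frame rendering with a hypothetical feedback-based split line.

    GPU 0 renders rows [0, split) and GPU 1 renders rows [split, height).
    After each frame, the split line moves so the slower GPU gets fewer rows.
    """

    height: int
    split: int
    step: int = 8  # rows shifted per adjustment (arbitrary choice)

    def regions(self):
        """Return the (start_row, end_row) range each GPU renders."""
        return (0, self.split), (self.split, self.height)

    def rebalance(self, time_gpu0: float, time_gpu1: float) -> None:
        """Shift the split line based on the last frame's render times."""
        if time_gpu0 > time_gpu1:
            # GPU 0 was slower, so shrink its region.
            self.split = max(self.step, self.split - self.step)
        elif time_gpu1 > time_gpu0:
            # GPU 1 was slower, so shrink its region.
            self.split = min(self.height - self.step, self.split + self.step)


# AFR: frames 0..3 alternate between the two cards.
frame_owners = [afr_assign(i) for i in range(4)]

# SFR: start with an even split, then rebalance after GPU 0 runs slow.
balancer = SfrBalancer(height=768, split=384)
balancer.rebalance(time_gpu0=10.0, time_gpu1=5.0)
```

In this toy version, a frame whose top half is more expensive to draw (lots of geometry, say) would gradually push the split line upward, which hints at why a real balancing algorithm could only succeed "with varying degrees of success" when scene complexity changes from frame to frame.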

But you couldn't just grab these cards, plop them in your system, and head off to frag all the single-GPU peasants you could headshot in Unreal. Early SLI required specialized equipment, like hefty power supplies and an SLI bridge, which connected the frame buffers on the two cards. It was also more challenging than it is today to find SLI-compatible motherboards, which originally required Nvidia chipsets, and you needed both identical cards and matching video BIOS versions. Although you could try mixing cards, you were much more likely to run into stability issues.

But if you could pull that all together, the gains from SLI were seriously impressive in hot titles of the day, such as Unreal Tournament 2004 and the venerable Half-Life 2.

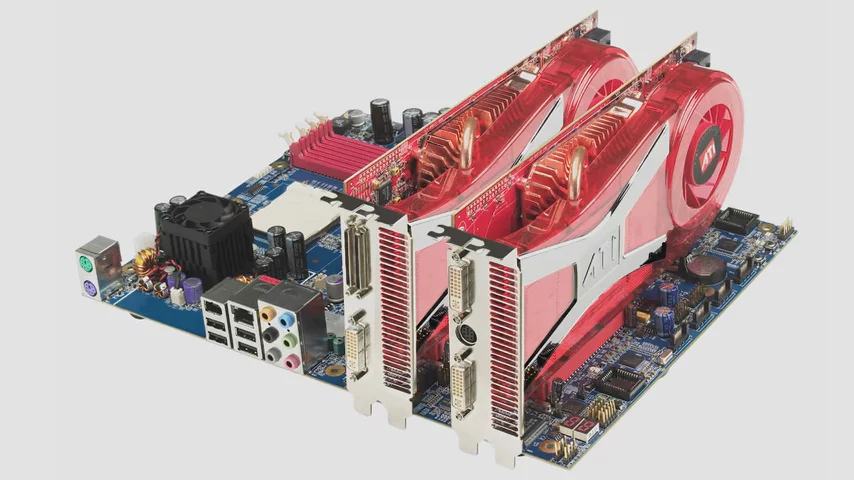

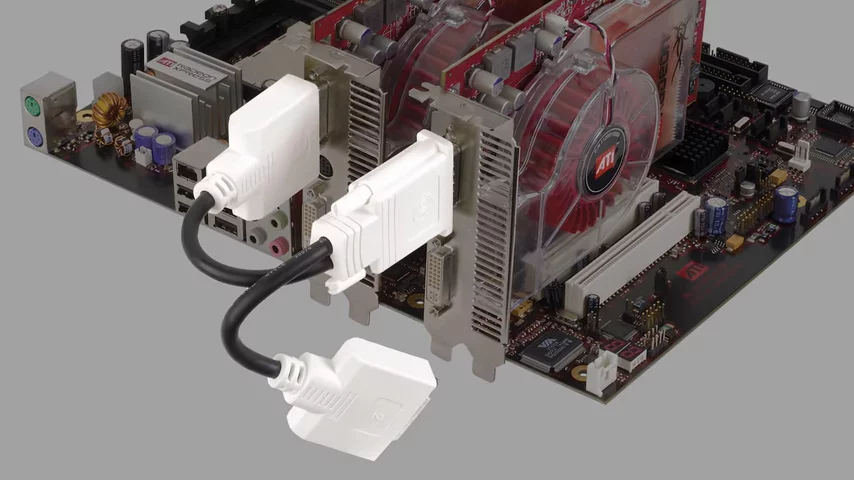

Meanwhile, ATI was responding with its competing CrossFire solution for its R400 series GPUs, which was released in 2005, one year before ATI was acquired by AMD. Unlike SLI, you could actually mix different GPU models in CrossFire with some limitations, but the first couple of generations actually required a clunky Y-shaped DVI dongle that plugged your monitor into both of your graphics cards instead of just one.

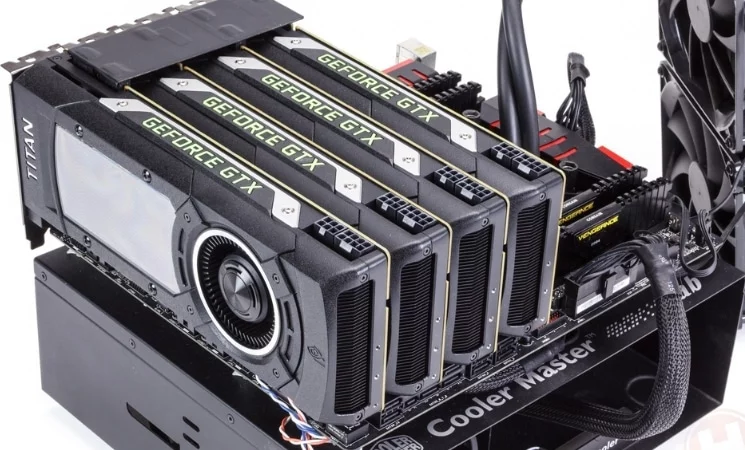

But as time went on, both teams, red and green, made improvements. SLI started to work better with cards from different add-in board manufacturers, CrossFire got a proper bridge instead of a clunky dongle, and three- and even four-card setups became possible.

This is when larger power supplies started to become a little more common. Nvidia said the minimum you should be rocking for a triple 8800 Ultra setup was 1100 watts, which would be overkill for most high-end systems today.

On that note, why exactly has multi-GPU fallen off so hard in popularity? Setups like SLI and CrossFire simply did not scale very well, despite "scalable" being right there in SLI's very sexy name.

Because of the overhead of getting the cards to communicate and synchronize properly, diminishing returns quickly set in as you added more cards, meaning spending three times the bucks won't get you three times the performance. There have even been plenty of documented cases where a single good-quality graphics card would beat a multi-card setup, even though you might not expect that based solely on the relative power of the GPUs. And frustratingly, multi-GPU has been fraught with problems relating to driver and software stability, even into the contemporary era of gaming.
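For a rough feel of how those diminishing returns play out, here is a small Python sketch that borrows Amdahl's law as a stand-in model. This is a simplification chosen for illustration, not a measurement of any real SLI or CrossFire setup: the function name `amdahl_speedup` and the 90% "parallel fraction" are assumptions, with the remaining 10% standing in for communication and synchronization overhead that extra cards can't speed up.

```python
def amdahl_speedup(num_gpus: int, parallel_fraction: float) -> float:
    """Ideal speedup under Amdahl's law for a given number of GPUs.

    parallel_fraction is the share of per-frame work that splits cleanly
    across cards; the rest is treated as fixed overhead.
    """
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / num_gpus)


# Hypothetical value: assume 90% of the work parallelizes perfectly.
ASSUMED_PARALLEL_FRACTION = 0.9

for n in (1, 2, 3, 4):
    speedup = amdahl_speedup(n, ASSUMED_PARALLEL_FRACTION)
    print(f"{n} GPU(s): {speedup:.2f}x speedup, {speedup / n:.0%} efficiency per card")
```

Even with that fairly generous made-up fraction, three cards land at roughly 2.5 times the performance of one rather than 3 times, and every extra card is a little less efficient than the last.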

Nvidia and AMD have largely stopped supporting it at the driver level, meaning that games now need to support it natively. All of this has led to many enthusiasts preferring single-card systems instead. Sometimes less really is more.
