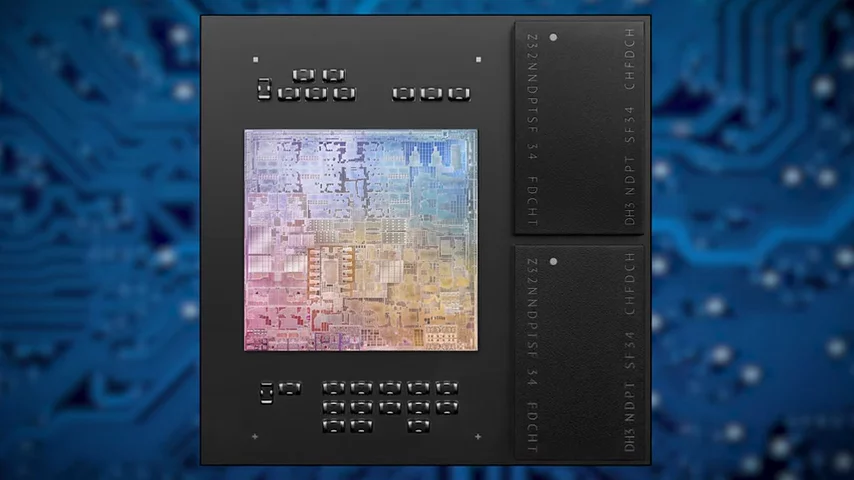

The first systems built around the Apple M1, the inaugural chip in the new Apple Silicon series, are now on the market. So let's take a closer look at how the chip performs and at the innovations under the hood that Apple hopes will make the M1 a home run.

A cursory glance might make you think that the M1 is just a souped-up mobile chip. It is quite similar to the A14 in the current iPhone 12 lineup, and it has four high-performance and four high-efficiency cores. It also integrates I/O controllers and built-in memory, which make it more of a mobile-style system on a chip (SoC) than a plain CPU.

But Apple claims that the M1 is the world's fastest laptop CPU, and early reviews suggest the claim isn't far off, with many commentators gushing over its performance. So how did Apple pull this off without fundamentally changing the design it uses in its smartphones?

Part of the answer is that Apple used a lot more transistors in the M1 than in the A14: over 4 billion more, according to the company (16 billion versus 11.8 billion). But while this may have boosted performance, the real secret sauce lies in how Apple's design, in both the A14 and the M1, fundamentally differs from x86, the architecture that AMD and Intel have been using for decades.

Apple's philosophy was to make the chip much more parallel than conventional CPU designs. Both the M1's decoder, which translates incoming instructions into operations the core can execute, and the execution units that actually process them are wider, meaning they can handle more instructions per clock cycle.
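To make "wider" concrete, here is a minimal toy model in Python, not a description of the M1's actual pipeline. It assumes every instruction takes one cycle, that the core issues instructions strictly in program order, and that it can issue up to `width` of them per cycle as long as none needs a result produced in that same cycle. The function name `in_order_cycles` and the widths used are hypothetical, chosen purely for illustration.

```python
def in_order_cycles(deps: list[list[int]], width: int) -> int:
    """Count cycles for a toy in-order core.

    deps[i] lists the earlier instructions whose results instruction i needs.
    Each instruction is assumed to take exactly one cycle (a simplification).
    """
    if width <= 0:
        raise ValueError("width must be positive")
    ready_at = {}  # instruction index -> cycle when its result becomes available
    cycle = 0
    i = 0
    while i < len(deps):
        issued = 0
        # Issue in program order; stop at the first instruction whose inputs aren't ready.
        while (
            i < len(deps)
            and issued < width
            and all(ready_at.get(d, float("inf")) <= cycle for d in deps[i])
        ):
            ready_at[i] = cycle + 1
            i += 1
            issued += 1
        cycle += 1
    return cycle


independent = [[] for _ in range(8)]           # eight unrelated instructions
chain = [[]] + [[k - 1] for k in range(1, 8)]  # each needs the previous result

for width in (4, 8):
    print(width, in_order_cycles(independent, width), in_order_cycles(chain, width))
```

In this sketch, doubling the width halves the cycle count for independent instructions (2 cycles versus 1) but does nothing for a dependency chain (8 cycles either way), which is why a wide design only pays off when it can find enough independent work to feed it.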

Additionally, the M1 can go significantly deeper with out-of-order execution than x86 chips. This means it can read further ahead on the page, so to speak, and start work on later instructions whose inputs are already available instead of waiting for earlier, stalled instructions to finish.
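The benefit of reading further ahead can be sketched with another toy Python model, again under heavy simplifying assumptions rather than as a model of Apple's or anyone else's real hardware: one-cycle instructions, and a `window` covering the oldest not-yet-issued instructions, from which up to `width` ready instructions may issue each cycle in any order. The function name `out_of_order_cycles` and the workload are hypothetical.

```python
def out_of_order_cycles(deps: list[list[int]], width: int, window: int) -> int:
    """Count cycles for a toy out-of-order core.

    deps[i] lists the earlier instructions whose results instruction i needs.
    Each cycle, the core scans the `window` oldest unissued instructions and
    issues up to `width` of them whose inputs are ready, regardless of order.
    """
    if width <= 0 or window <= 0:
        raise ValueError("width and window must be positive")
    ready_at = {}  # instruction index -> cycle when its result becomes available
    cycle = 0
    while len(ready_at) < len(deps):
        pending = [i for i in range(len(deps)) if i not in ready_at][:window]
        issued = 0
        for i in pending:
            if issued == width:
                break
            if all(ready_at.get(d, float("inf")) <= cycle for d in deps[i]):
                ready_at[i] = cycle + 1
                issued += 1
        cycle += 1
    return cycle


# A 4-instruction dependency chain followed by 4 independent instructions.
workload = [[], [0], [1], [2], [], [], [], []]

print(out_of_order_cycles(workload, width=2, window=2))  # small look-ahead
print(out_of_order_cycles(workload, width=2, window=8))  # large look-ahead
```

With the small window, the independent instructions sit behind the stalled chain and the workload takes 6 cycles; with the larger window they fill otherwise idle issue slots while the chain completes, finishing in 4.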

Then there's the fact that the M1 has a lot more Level 1 (L1) cache, the fastest cache memory available to a CPU core, than x86 processors. And because it's based on a chip originally designed for mobile devices, it does all this while drawing significantly less power.
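Why a bigger L1 cache matters can be illustrated with a small Python simulation. It is a simplified sketch that assumes a fully associative cache with least-recently-used (LRU) replacement and 64-byte lines, whereas real L1 caches are set-associative; the 32 KiB and 128 KiB sizes and the function name `lru_hit_rate` are illustrative choices, not the specifications of any particular chip.

```python
from collections import OrderedDict


def lru_hit_rate(addresses: list[int], capacity_bytes: int, line_size: int = 64) -> float:
    """Fraction of accesses that hit in a fully associative LRU cache (a simplification)."""
    capacity_lines = capacity_bytes // line_size
    cache = OrderedDict()  # cache line number -> placeholder, least recently used first
    hits = 0
    for addr in addresses:
        line = addr // line_size
        if line in cache:
            hits += 1
            cache.move_to_end(line)  # mark as most recently used
        else:
            cache[line] = None
            if len(cache) > capacity_lines:
                cache.popitem(last=False)  # evict the least recently used line
    return hits / len(addresses)


# Ten passes over a 64 KiB working set, touching one byte per 64-byte line.
working_set = 64 * 1024
trace = [a for _ in range(10) for a in range(0, working_set, 64)]

print(lru_hit_rate(trace, 32 * 1024))   # working set does not fit
print(lru_hit_rate(trace, 128 * 1024))  # working set fits after the first pass
```

Here the smaller cache gets no hits at all, because LRU evicts each line just before the loop comes back to it, while the larger cache misses only on the first pass and hits 90% of the time overall.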

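But hold on a second: couldn't Intel and AMD just implement some of these changes themselves and catch up with Apple? Well, that might be quite a challenge, because the x86 architecture has some inherent limitations. For example, AMD and Intel could simply add more L1 cache, but it's extremely difficult to make x86 decoders much wider than they are now. x86 instructions vary in length (from 1 to 15 bytes), so a decoder can't know where the next instruction begins until it has worked out the length of the current one, whereas every instruction in the 64-bit ARM architecture the M1 uses is exactly 4 bytes long. So, you can't win them all.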
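The decoding problem can be sketched in Python with a deliberately simplified, hypothetical encoding. With fixed-length 4-byte instructions, as in 64-bit ARM, every instruction's start offset is known in advance, so several decoders could each begin at their own offset simultaneously. For the variable-length case, the toy format assumes the first byte of each instruction stores its total length; real x86 length decoding is far more involved (prefixes, opcodes, addressing bytes, immediates), but the dependency is the same: you can't locate instruction k without first measuring instructions 0 through k-1. Both function names are hypothetical.

```python
def fixed_length_starts(code: bytes, insn_size: int = 4) -> list[int]:
    """Start offsets for fixed-size instructions (4 bytes each in 64-bit ARM).

    Each offset is simply k * insn_size, so all of them can be computed
    independently and handed to parallel decoders at once.
    """
    return list(range(0, len(code), insn_size))


def variable_length_starts(code: bytes) -> list[int]:
    """Start offsets for a hypothetical variable-length encoding.

    Toy assumption: the first byte of each instruction holds its total length.
    Each start depends on the previous instruction's length, so the walk is sequential.
    """
    starts = []
    offset = 0
    while offset < len(code):
        length = code[offset]
        if length == 0:
            raise ValueError(f"invalid zero-length instruction at offset {offset}")
        starts.append(offset)
        offset += length
    return starts


print(fixed_length_starts(bytes(12)))  # three 4-byte slots
print(variable_length_starts(bytes([2, 0xAA, 3, 0xBB, 0xCC, 1, 4, 0, 0, 0])))
```

The fixed-length function never reads the instruction bytes at all, while the variable-length one must touch each instruction in turn before it knows where the next one starts; that serial step is what makes very wide x86 decoders hard to build.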

So does this mean that Intel and AMD are in huge trouble? Well, like any impressive new piece of tech, the M1 isn't without its weaknesses. Although early reviews have reported very impressive performance in first-party apps written for Apple Silicon, the M1's speed benefits in other applications have been more modest, because programs written for x86 have to be translated through Apple's Rosetta 2 emulation layer, which introduces significant CPU overhead. And because that translation is imperfect, there have also been stability issues with a number of popular programs, even though they were written for late-model Mac hardware. But Apple is betting that developers with large user bases on the Mac will want to keep them, and will therefore release versions written specifically for the M1 sooner rather than later.

This approach is more or less in line with Apple's playbook for other new products: bring a new platform to market, then use brand power to push developers to catch up, which is what Apple hopes will happen again with the M1. Ultimately, it's not hard to imagine Apple offering a more tightly controlled App Store experience for Macs, with programs specifically vetted for full compatibility with Apple Silicon, especially as we expect to see more Apple chips in desktop Macs down the line, given how powerful the company's first crack at a laptop processor has been.
