There's nothing like unboxing a shiny new graphics card. But they're just so bulky. Why is it that we can't just buy the GPU by itself and slot it directly onto our motherboard, like we do for CPUs? It turns out there are a lot of reasons that it's just not that simple, and a big one is power.

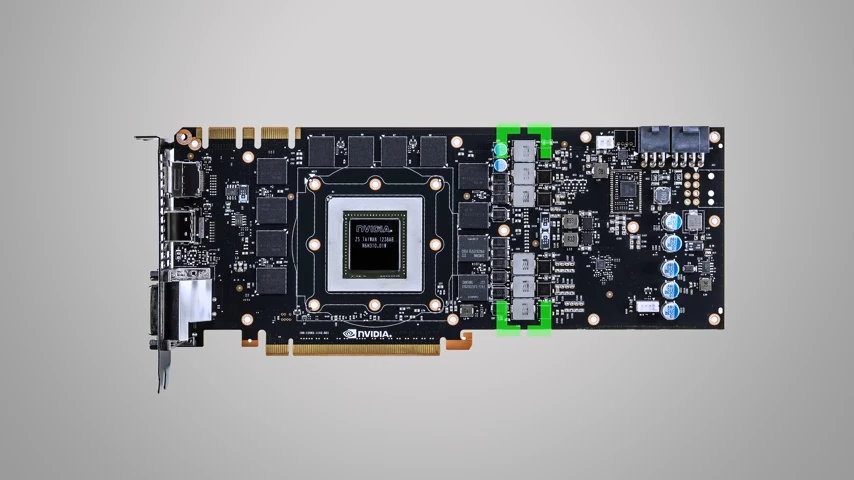

You see, a mid to high-end discrete GPU draws lots of power, to the point where it's typically the single most power-hungry component in your system. And you can see this if you look at a graphics card with the cooler removed. Many of those little components that you see all over the board are dedicated to power delivery and voltage regulation, which is part of the reason that graphics cards tend to be quite large. Putting these components onto the motherboard would not only make it larger, but also significantly more expensive. But that's far from the only logistical concern. Another big one is memory.

Although integrated graphics can share your system's standard DDR RAM, and some lower-end discrete GPUs actually use dedicated but still run-of-the-mill DDR, mid and higher range options use special video RAM called GDDR, with the G standing for graphics. GDDR offers higher bandwidth because it's designed to handle large chunks of data, such as the visual assets and textures that your GPU is continually asking for. By contrast, normal DDR is better at handling smaller pieces of data, so it doesn't have as much bandwidth, but its latency is lower.
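To put rough numbers on that bandwidth gap, here's a back-of-the-envelope sketch in Python. Peak theoretical bandwidth is just the bus width times the per-pin data rate, divided by eight to get bytes. The function name and the two example configurations (dual-channel DDR4-3200 and 14 Gbit/s GDDR6 on a 256-bit bus) are assumptions picked purely for illustration, and real-world throughput will land below these theoretical peaks.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak bandwidth in GB/s: bus width (bits) x per-pin rate (Gbit/s) / 8."""
    return bus_width_bits * data_rate_gbps / 8


# Assumed example: dual-channel DDR4-3200 (2 x 64-bit channels, 3.2 Gbit/s per pin)
ddr4 = peak_bandwidth_gb_s(128, 3.2)

# Assumed example: GDDR6 at 14 Gbit/s per pin on a 256-bit bus
gddr6 = peak_bandwidth_gb_s(256, 14.0)

print(f"DDR4:  {ddr4:.1f} GB/s")
print(f"GDDR6: {gddr6:.1f} GB/s")
print(f"Ratio: {gddr6 / ddr4:.2f}x")
```

Under those assumptions the graphics memory comes out several times ahead on raw bandwidth, which is the trade-off described above. The sketch says nothing about latency, where regular DDR has the edge.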

This means that if you were to put a relatively powerful GPU directly onto a motherboard, you would also need to build in dedicated GDDR memory, which would, you guessed it, make the motherboard even bigger and even more expensive.

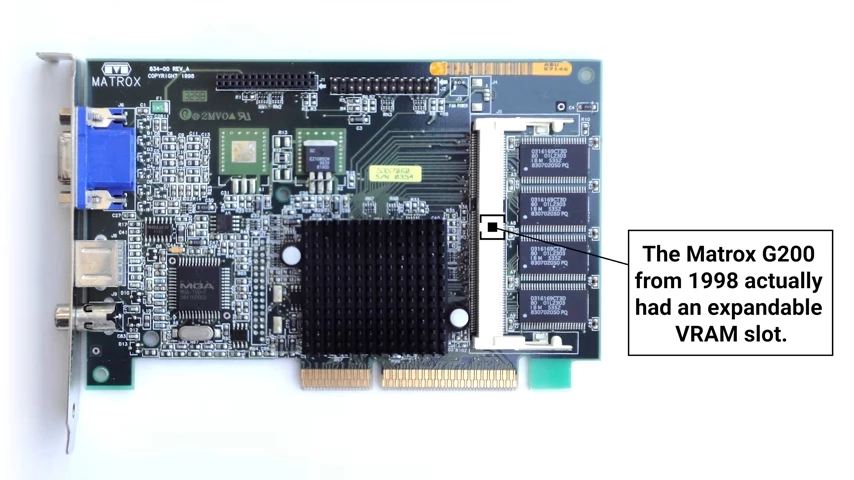

Hypothetically, you could design this video memory, or VRAM, to be slotted in rather than soldered directly to the graphics card. But this actually has the possibility of increasing the rate of data errors, which is a big part of the reason that all modern graphics cards use soldered-on VRAM.

Even if you could solve the memory and power issues, though, there's another big roadblock to having a GPU socket on your motherboard: the socket itself. You know how AMD and Intel CPUs use completely different socket designs, and even within AMD or within Intel, you end up having to upgrade your motherboard every so often when they decide to change the pin layout? Well, you would have the same problem with a GPU socket, but arguably worse, as there are now three discrete GPU manufacturers with Intel entering the fray, and GPU architectures tend to be updated more frequently and more dramatically than on the CPU side. That means motherboards would go out of date much more quickly, not to mention that you'd be locked into one CPU vendor and one GPU vendor every time you bought a new one.

None of this even mentions that all those different GPUs would necessitate different amounts of power delivery and VRAM. So you'd either need a stupidly huge lineup of different motherboards from every manufacturer to accommodate all these different variations, or you'd have to overengineer every motherboard with enough power and memory to support the entire GPU product stack, which might put you in a position where you'd have to consider selling organs for a motherboard.
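Just to show how quickly that lineup balloons, here's a tiny Python sketch that counts the combinations. Every value in it (the vendor labels, the power tiers, the VRAM sizes) is made up for illustration and doesn't describe any real product stack.

```python
from itertools import product

# All values below are hypothetical, chosen only to show how fast variants multiply.
cpu_sockets = ["cpu_vendor_a", "cpu_vendor_b"]
gpu_sockets = ["gpu_vendor_a", "gpu_vendor_b", "gpu_vendor_c"]
power_tiers_watts = [150, 250, 350]
vram_sizes_gb = [8, 12, 16, 24]

# One motherboard variant per combination of CPU socket, GPU socket,
# power delivery tier, and onboard VRAM size.
variants = list(product(cpu_sockets, gpu_sockets, power_tiers_watts, vram_sizes_gb))

print(f"{len(variants)} motherboard variants per board maker")
```

Even with these modest made-up numbers, that's 2 × 3 × 3 × 4 = 72 boards per manufacturer, and every new socket revision multiplies it again.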

Finally, there's the reality that although graphics cards are hot commodities with gamers, there are many more people out there who don't need a powerful GPU. That means motherboards with GPU sockets on them would be relegated to a very niche market due to how expensive they'd be, giving manufacturers even less of an incentive to make them.

So for the time being, if you want a GPU on your motherboard, it's probably only gonna happen in a laptop or other specialized device. On the desktop, it's just so much cheaper and easier for manufacturers to continue doing what they've been doing: slotting GPUs into universally compatible, inexpensive PCI Express slots. And besides, do you really wanna strap another cooler to your motherboard?
