Introduction

ChatGPT is a chatbot developed by OpenAI and built on its GPT (Generative Pre-trained Transformer) family of large language models. It has been trained on a massive amount of text data and can generate human-like text, completing sentences, paragraphs, and even entire articles with impressive coherence and fluency. Like any other technology, however, ChatGPT has limitations and challenges. This article takes a closer look at some of the ones it currently faces.

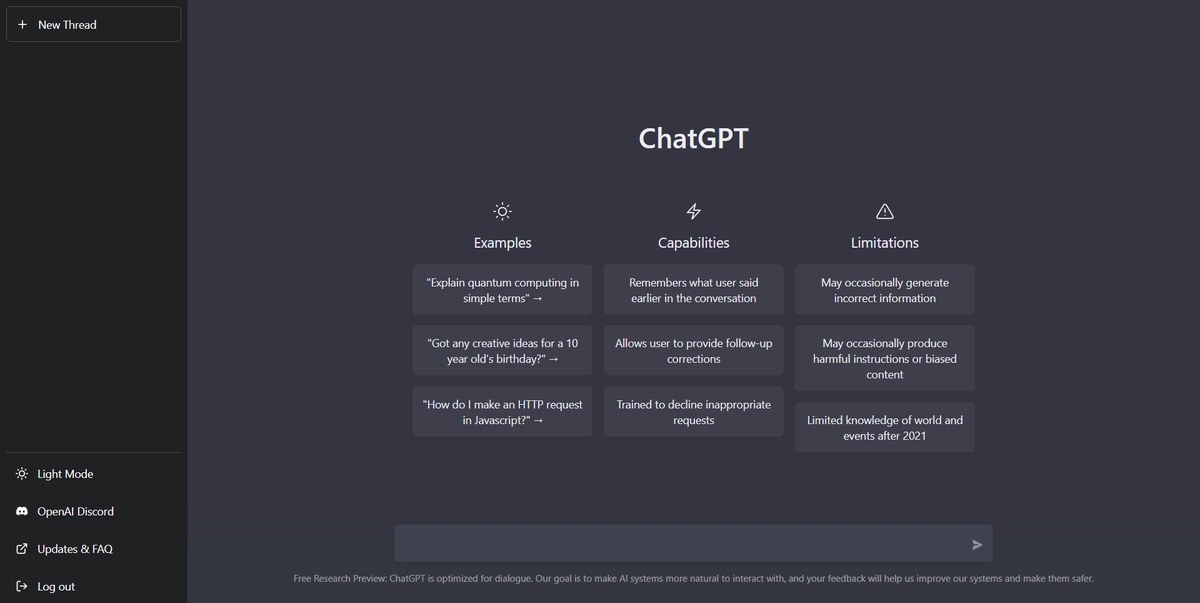

Limitations of ChatGPT

- Lack of Contextual Understanding

One of the main limitations of ChatGPT is its limited grasp of context. It generates coherent and fluent text by predicting likely continuations of its input, not by reasoning about what the words mean in the way a human reader does. As a result, it can misread the intent behind a text, for example when the text is sarcastic or ambiguous, or when it depends on background the model was never given. This makes it unreliable for tasks such as sentiment analysis or natural language understanding when accuracy matters.

- Limited Vocabulary and Bias

Another limitation of ChatGPT is that it can only generate text based on the data it has been trained on. Its effective vocabulary and knowledge are bounded by that data, so it may produce weak or inaccurate text on topics, terms, or events that were rare or absent during training. It may also reproduce the biases present in its training data, which can be a problem in applications where fairness or neutrality matters.
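To make the dependence on training data concrete, here is a minimal sketch using a toy bigram model. This is only an analogy: ChatGPT is a large neural network working on subword tokens, not a bigram table, and the corpus and the function names `train_bigram` and `generate` are made up for this illustration. The toy model shares one property with any model trained on text: it can only continue with what its data contained, and it inherits whatever skew that data has.

```python
import random
from collections import defaultdict


def train_bigram(corpus):
    """Map each word to the list of words that followed it in the corpus."""
    model = defaultdict(list)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            model[current].append(following)
    return dict(model)


def generate(model, start, max_words=8, seed=0):
    """Extend `start` one word at a time, sampling from observed followers."""
    rng = random.Random(seed)
    words = [start]
    # Stop when the length limit is hit or the last word was never
    # seen with a follower during training.
    while len(words) < max_words and words[-1] in model:
        words.append(rng.choice(model[words[-1]]))
    return " ".join(words)


# Hypothetical three-sentence training corpus with a deliberate skew.
corpus = [
    "the doctor said he was busy",
    "the doctor said he was late",
    "the nurse said she was busy",
]
model = train_bigram(corpus)

print(generate(model, "the"))      # uses only words seen in the corpus
print(generate(model, "quantum"))  # unseen word: nothing to continue with
print(model["said"])               # "he" appears twice as often as "she"
```

The unseen start word produces no continuation at all, and the followers of "said" mirror the imbalance in the corpus. Real language models fail far less abruptly than this, but the underlying dependence on the training data is the same.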

Challenges of ChatGPT

- Language Understanding

ChatGPT also faces challenges in understanding and generating text in different languages. Although the model can be fine-tuned to specific languages and domains, it still struggles with languages whose grammatical structures and vocabularies differ from those that dominate its training data. It also has difficulty with languages for which less training data is available, which makes it less effective in low-resource languages.

- Ethical and Societal Implications

Another challenge concerns the ethical and societal implications of using ChatGPT. The model can be used to generate convincing fake news and other misleading text, which can help spread misinformation and propaganda. It can also be used to imitate a person's writing style in order to impersonate them online, which can support fraud and social-engineering attacks. It is therefore important to consider the potential risks and negative consequences of using ChatGPT and to develop guidelines and regulations for its use.

- Lack of Explainability

Finally, one of the most significant challenges that ChatGPT faces is explainability. As a deep learning model, ChatGPT is a black box: it is difficult to understand how it arrives at a given output. This makes the output harder to trust and limits the model's use in certain applications, such as those that require regulatory compliance.

Conclusion

ChatGPT is a powerful language model that has the potential to revolutionize the field of natural language processing. However, it also has limitations and challenges that need to be addressed: its limited grasp of context, its dependence on and bias toward the data it was trained on, its difficulty with different and low-resource languages, its ethical and societal implications, and its lack of explainability. With further research and development, these limitations and challenges can be addressed, allowing ChatGPT to reach its full potential.
