How much does a laptop’s CPU affect eGPU performance? I’m going to compare the quad-core Ice Lake chip in the Razer Blade Stealth against the 6-core chip in the Dell G7.

Both the Razer Blade Stealth and Dell G7 have Thunderbolt 3, so we’re able to connect them up to the eGPU enclosure. Both of these machines are technically classified as having 10th gen processors, but the Blade is using Ice Lake while the G7 is using Comet Lake H, so they’re two different architectures.

Ice Lake actually makes some important improvements in terms of Thunderbolt: the Thunderbolt controller is integrated into the SoC. Meanwhile, with Comet Lake, the Thunderbolt chip is external, so that will introduce an additional bottleneck.

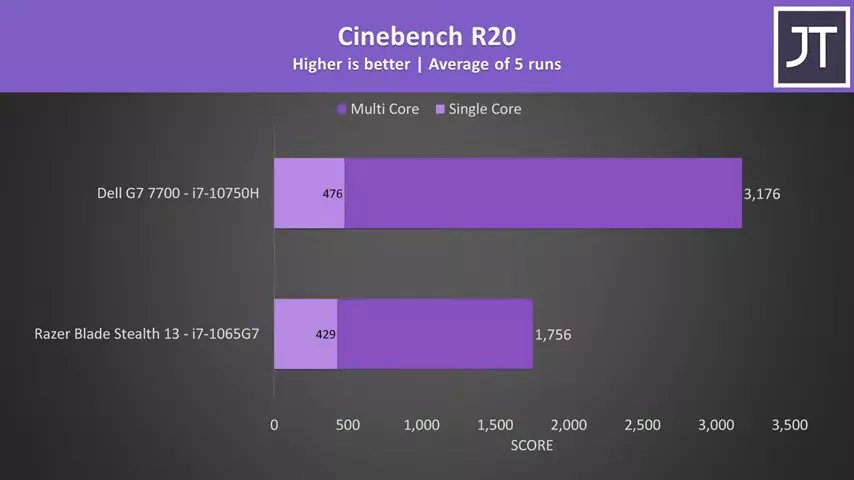

In terms of raw CPU performance, the 10750H in the G7 is faster in both single and multicore workloads. But although the G7 is able to destroy the Blade Stealth in gaming and other workloads when comparing them head to head, it’s a different story when we connect an eGPU enclosure.

For testing, I’m using MSI’s GeForce RTX 3090 Gaming X Trio and I’ve got this inside the Mantiz Saturn Pro Gen II external GPU enclosure.

This is one of the best GPUs currently available, and I’ve used it to minimize any GPU bottleneck. Both laptops were tested with Windows fully up to date and the latest Nvidia drivers installed, so let’s get into the results.

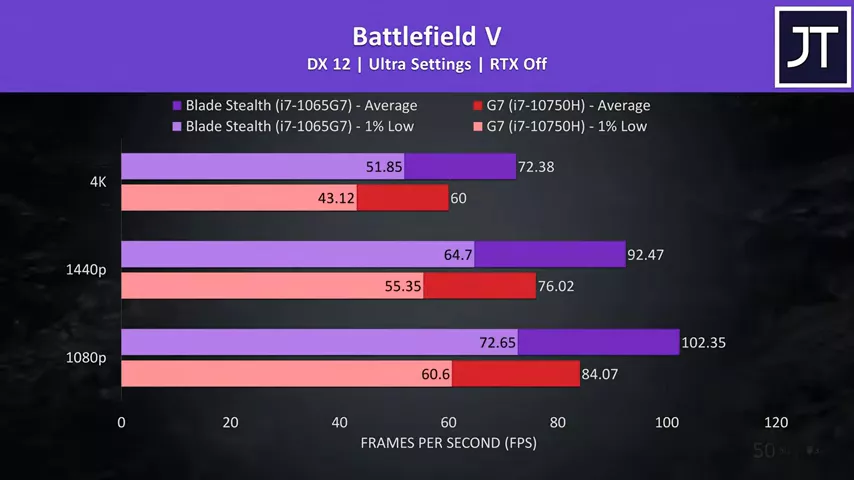

Battlefield 5 was tested in campaign mode by running through the same mission on both laptops. I’ve got the larger Dell G7 with the higher-powered CPU shown by the red bars, and the smaller Razer Blade Stealth with the weaker CPU shown by the purple bars. I’ve also tested three resolutions listed on the left: 1080p down the bottom, 1440p in the middle, and 4K up top. In all instances the Blade was coming out at least 20% faster in average frame rate.

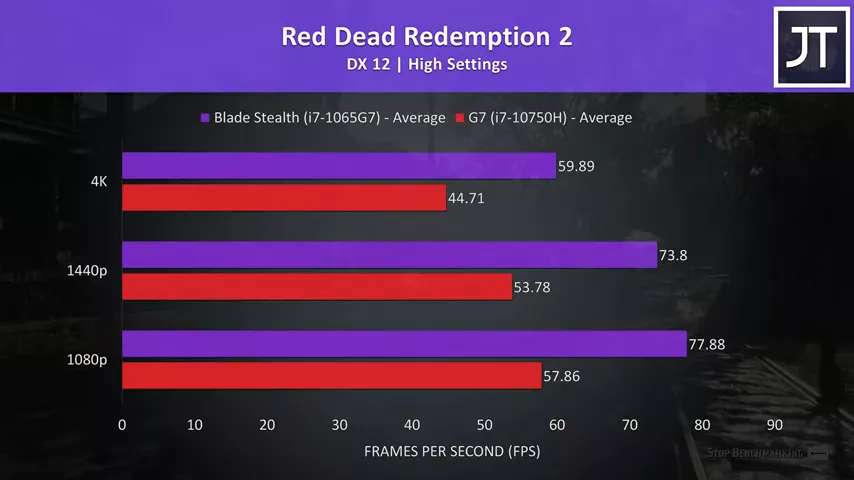

Red Dead Redemption 2 was tested with the game’s benchmark tool. The Blade Stealth with the weaker processor was once again out in front, this time with well over a 30% higher average FPS than the G7, so it doesn’t seem that the more powerful CPU is able to help it here.

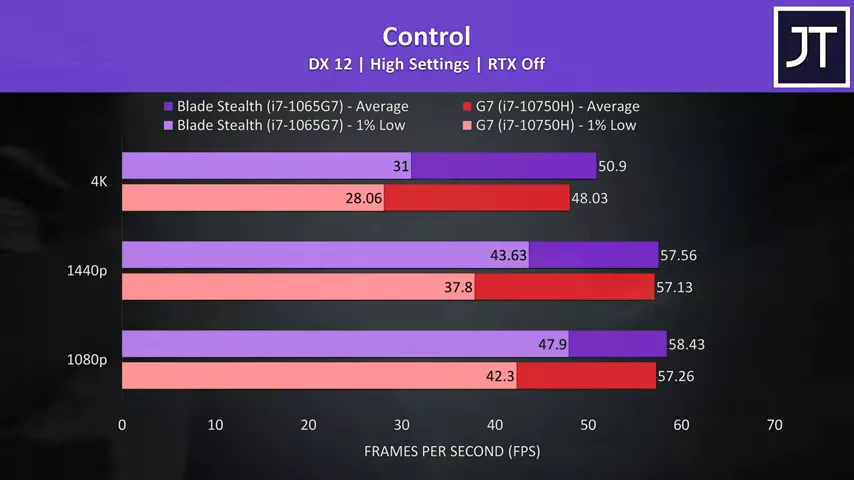

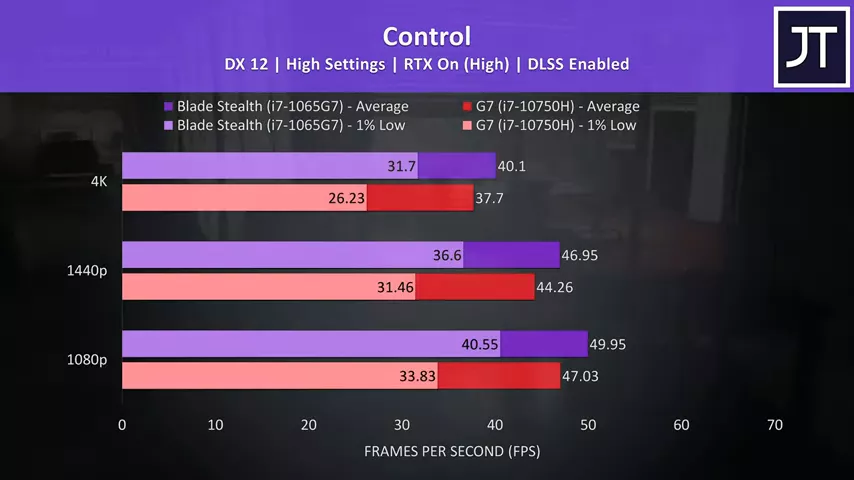

Let’s start with the RTX off results in Control. The average frame rates were quite close together here at 1080p and 1440p, though the Blade Stealth was still slightly ahead. The difference is bigger in the 1% lows though, so overall it’s a more stable experience on the Blade.

With RTX on and DLSS enabled the Blade is now further ahead of the G7, but again there are bigger improvements seen in the 1% lows.
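Since the 1% lows keep coming up, here’s a rough sketch of how an average FPS and a 1% low figure could be worked out from a list of frame times. This is just one common definition (the average frame rate over the slowest 1% of frames); the capture tool used for these results may calculate it differently, and the `fps_summary` name and the sample frame times are made up for illustration.

```python
def fps_summary(frame_times_ms):
    """Return (average FPS, 1% low FPS) for frame times given in milliseconds."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    # Average FPS = frames rendered / total seconds elapsed.
    avg_fps = 1000.0 * len(frame_times_ms) / sum(frame_times_ms)
    # 1% low (one possible definition): average FPS over the slowest 1% of frames,
    # using at least one frame so short captures still work.
    slowest_first = sorted(frame_times_ms, reverse=True)
    count = max(1, len(slowest_first) // 100)
    worst = slowest_first[:count]
    low_fps = 1000.0 * len(worst) / sum(worst)
    return avg_fps, low_fps


# Hypothetical capture: 198 smooth frames at 10 ms plus two 50 ms stutters.
sample = [10.0] * 198 + [50.0] * 2
avg, low = fps_summary(sample)
print(f"average: {avg:.1f} FPS, 1% low: {low:.1f} FPS")
```

With those made-up numbers, two stutters barely move the average but drag the 1% low right down, which is why a gap in the 1% lows can matter more for how stable a game feels than a gap in the averages.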

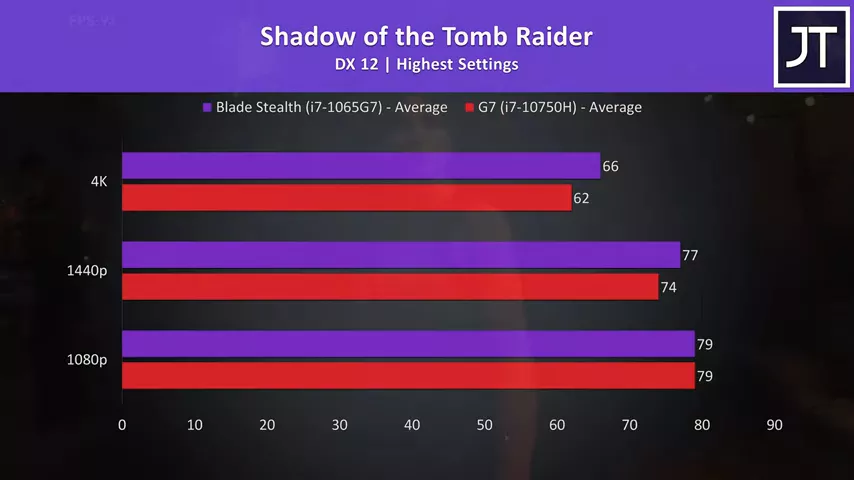

Shadow of the Tomb Raider was tested with the game’s built-in benchmark. There was no difference in frame rates in this test at 1080p, then at 1440p the Blade was only 2 FPS ahead, with a slightly larger 4 FPS lead at 4K.

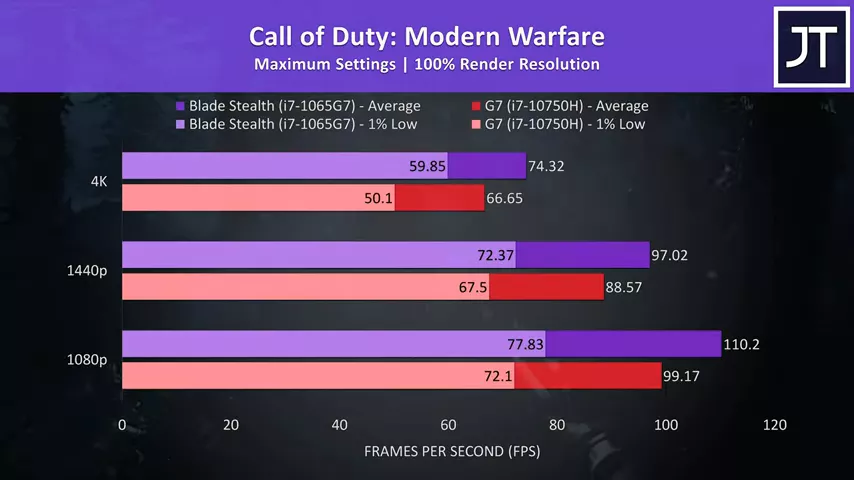

Call of Duty Modern Warfare was tested in campaign mode, and the difference here was one of the smallest out of all 10 games tested, but regardless there’s still around a 10% boost to average FPS with the Blade Stealth.

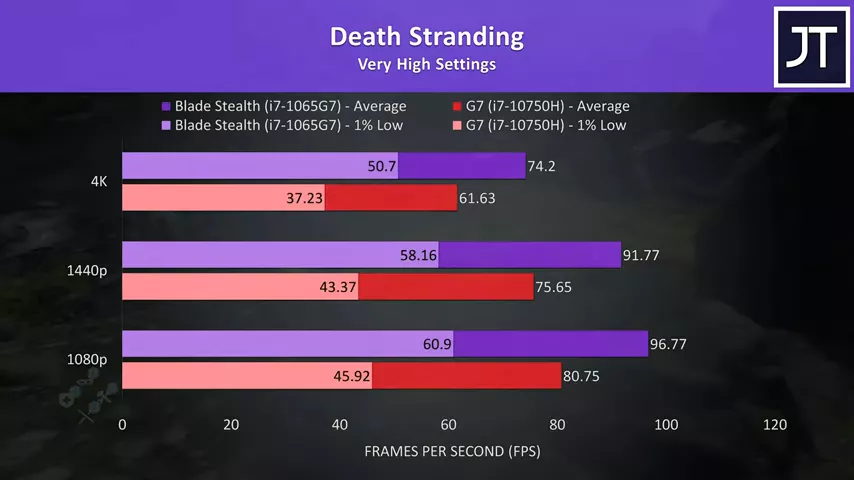

Metro Exodus was tested using the game’s benchmark tool. This game saw the biggest performance boost with the Ice Lake based Blade Stealth out of all the games we’re looking at, at least at 1080p and 1440p, where we’re talking a mid to high 40% improvement to average FPS.

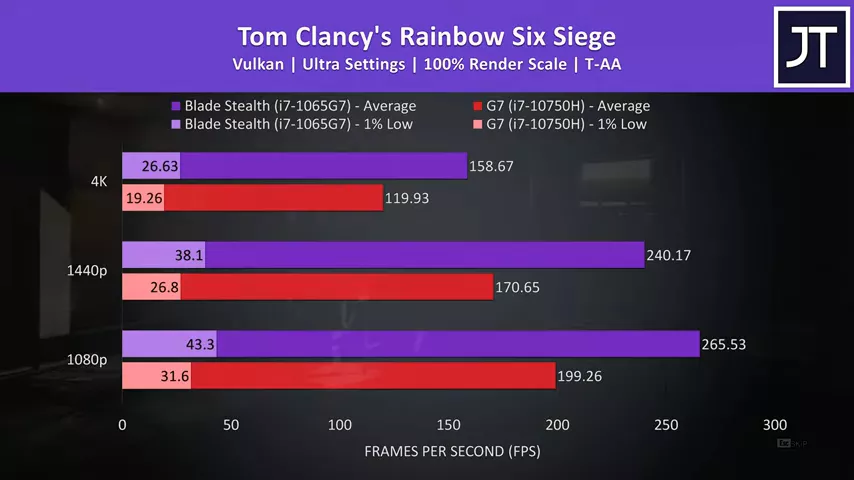

Death Stranding was tested by running through the same part of the game. Again, nice improvements were seen with the Blade Stealth’s Ice Lake processor, with around 20% higher average FPS at all resolutions.

Rainbow Six Siege was tested with the built-in benchmark using Vulkan. The Blade’s lead here was above average out of the 10 games tested. Interestingly, both laptops experienced heavy stuttering right at the start of the benchmark run, which is why the 1% lows are so poor. That issue resolved itself after a few seconds, but as it happened on two separate machines I can only assume it’s some sort of eGPU specific problem with this test.

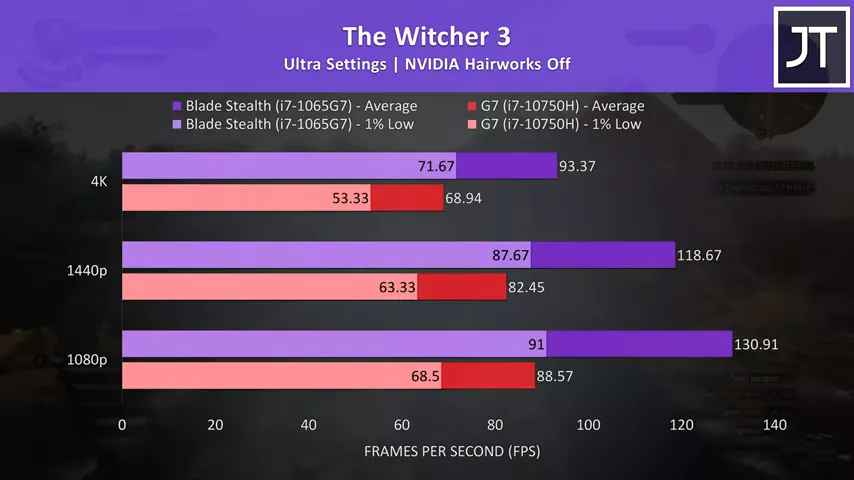

The Witcher 3 was one of the best results for the Blade. Even its 1% lows are higher than the averages the G7 was able to offer. There’s a massive 48% higher average frame rate at 1080p, 44% higher at 1440p, and although lower, still a 35% boost at 4K, the highest out of all games tested at this resolution.

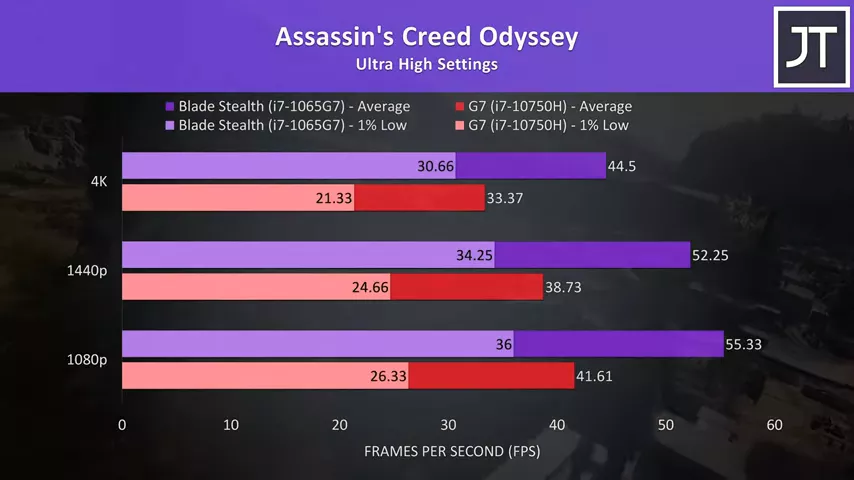

Assassin’s Creed Odyssey was tested with the game’s benchmark tool, and results here were again above average when compared to the other games tested, with the Blade performing more than 30% better at all three resolutions.

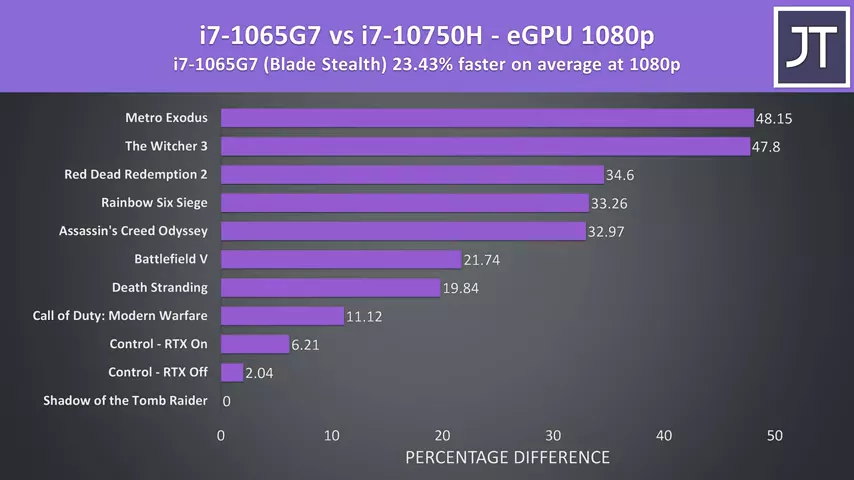

These are the performance differences in all games at 1080p. On average the Blade Stealth with Ice Lake processor and more efficient Thunderbolt implementation was 23% faster in average FPS when compared to the G7. I thought the higher powered 10750H with more cores and threads would do better in 1080p gaming, but I was sure proven wrong by these results.
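For anyone wanting to run the same kind of comparison on their own numbers, here’s a minimal sketch of how a per-game “percent faster” figure and an overall average could be calculated. It assumes a simple arithmetic mean of the per-game percentages, which may not be exactly how the averages in the chart were produced. The function names and the FPS values are placeholders, not the measured results.

```python
def percent_faster(fps_a, fps_b):
    """How much faster laptop A is than laptop B, as a percentage of B's frame rate."""
    return (fps_a / fps_b - 1.0) * 100.0


def mean_uplift(results):
    """Arithmetic mean of per-game uplifts; results maps game -> (fps_a, fps_b)."""
    uplifts = [percent_faster(a, b) for a, b in results.values()]
    return sum(uplifts) / len(uplifts)


# Placeholder numbers for illustration only, not taken from the charts.
example = {
    "Game A": (120.0, 100.0),  # A is 20% faster
    "Game B": (75.0, 50.0),    # A is 50% faster
    "Game C": (98.0, 100.0),   # A is 2% slower
}
print(f"{mean_uplift(example):.1f}% faster on average")
```

Averaging the percentages like this gives every game equal weight, so one high frame rate title can’t dominate the result the way it would if the raw FPS numbers were averaged first.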

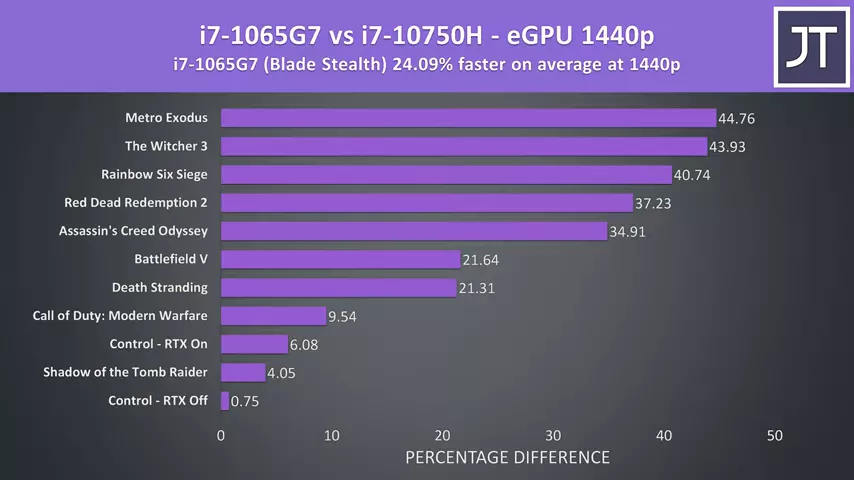

At 1440p we’re looking at a similar difference on average, with the Blade Stealth now 24% faster than the G7 at this resolution.

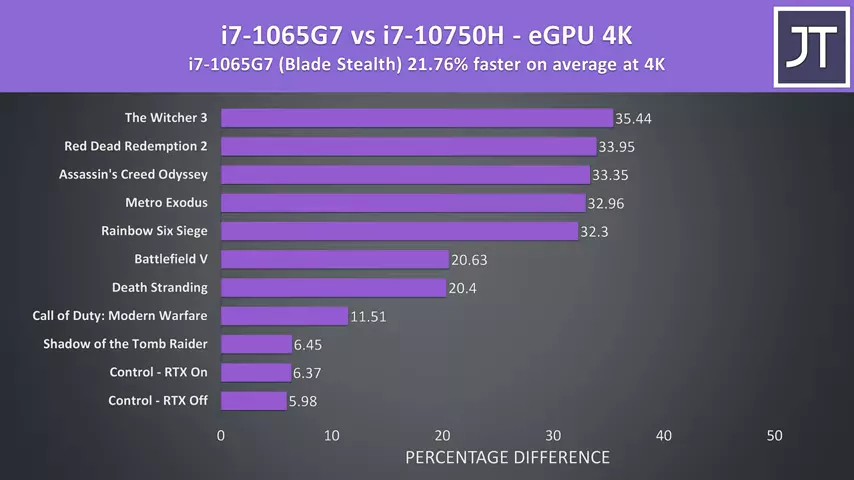

Then at the highest 4K resolution the Blade is around 22% faster than the G7. The biggest differences aren’t as great as what we saw at 1440p and 1080p previously. My best guess is that the higher 4K resolution is able to work the GPU harder, so the other system bottlenecks aren’t quite as limiting as at the lower resolutions.

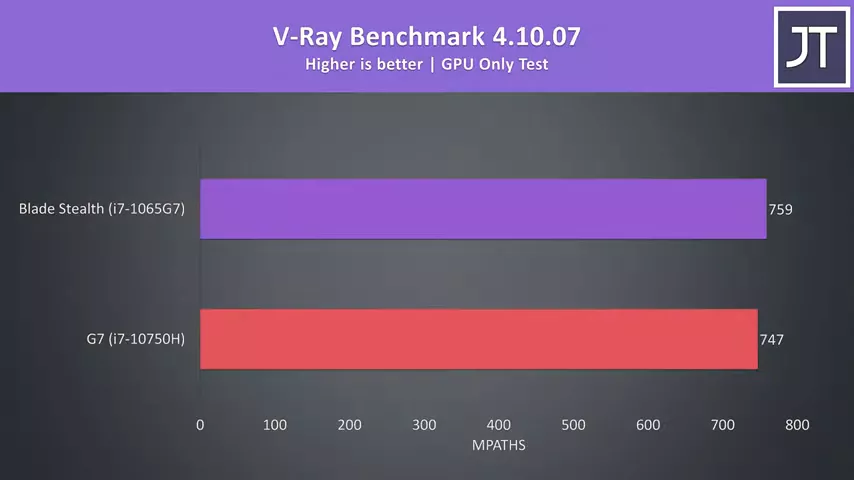

I’ve also tested V-Ray for a non-gaming test, and the difference was far less pronounced here, with the Blade Stealth scoring less than 2% higher than the G7. This should be an almost pure GPU workload, yet there’s still a small difference at play.

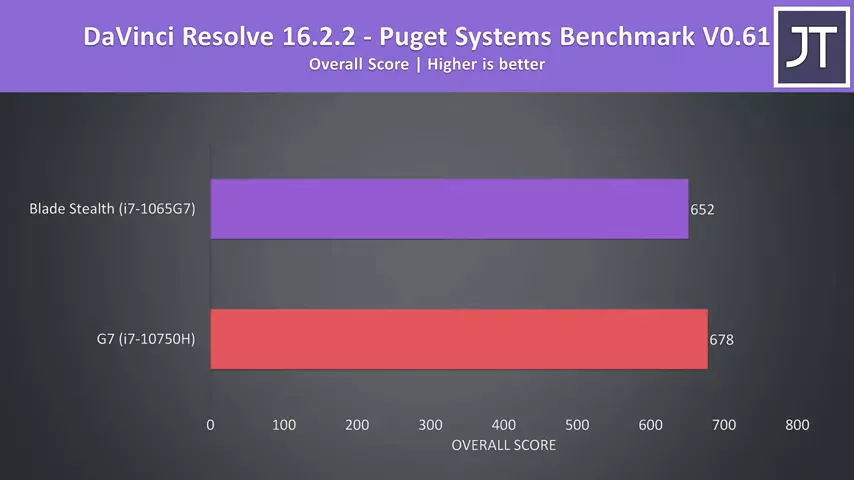

Interestingly DaVinci Resolve was slightly better on the G7, the only test where this happened. I’m thinking that the extra CPU cores might finally be useful in this video production workload, but either way it’s only scoring 4% higher than the Blade Stealth, nothing major.

I honestly wasn’t expecting these sorts of results going into this testing. I had an inkling that the Thunderbolt difference would play a role, but I still thought the 6-core chip in the G7 would have the win. Apparently more cores, more threads and higher clock speeds just aren’t enough, which suggests that Thunderbolt is the main bottleneck here rather than the processor.

People are always keen to point out CPU bottlenecks in eGPU setups, and while those undoubtedly exist to some degree, I think these results show that the Thunderbolt implementation matters much more and is seriously worth considering if you’re looking into an eGPU. In a way, these results are good.

I’ve always said that I think an eGPU setup makes more sense with a smaller machine, as that way you can better take advantage of the portability and then connect to a dock when at home. That sounds a bit better to me than lugging a bigger machine like the G7 around anyway, but this testing also confirms that you don’t need one for the best eGPU performance. You can get away with those smaller laptops as long as they have Ice Lake and integrated Thunderbolt, and Ice Lake chips are pretty much only available in those ultrabook style machines.

This of course may change depending on what Intel does with 11th gen, and we won’t know until we see H series processors, like an 11750H or something probably next year. It’ll depend on whether or not that design uses integrated Thunderbolt, so we’ll just have to wait and see, but for now in 2020 it does seem like Ice Lake is actually offering a nice advantage for an eGPU setup.

To see what sort of limits the processor and Thunderbolt actually impose though, I’m going to compare this setup against a desktop PC with the same graphics card.
