How many gigabytes does your hard drive or SSD hold? Are you sure about that?

We've already discussed how over-provisioning and file system overhead often mean that the usable capacity of your drives is less than what's advertised. But it turns out there's another way that you can be easily fooled: the term gigabyte itself can be ambiguous.

Many of us think of a gigabyte as exactly 1 billion bytes. However, it's a commonly accepted practice in the industry to instead use gigabyte to mean 1,073,741,824 bytes. If you look closely, that's a difference of about 74 megabytes, which is not an insignificant gap. So why does the industry have to make things so complicated?
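To make that gap concrete, here is a minimal Python sketch of the arithmetic. The constant names are just illustrative labels for the two definitions mentioned above.

```python
# Two competing definitions of "gigabyte" (names are illustrative)
DECIMAL_GB = 10**9   # exactly 1,000,000,000 bytes
BINARY_GB = 2**30    # exactly 1,073,741,824 bytes

# How many bytes separate the two definitions?
difference_bytes = BINARY_GB - DECIMAL_GB

# Express the gap in decimal megabytes (1 MB = 1,000,000 bytes)
difference_mb = difference_bytes / 10**6

print(f"{difference_bytes:,} bytes")      # 73,741,824 bytes
print(f"about {difference_mb:.0f} MB")    # about 74 MB
```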

To get the answers, we have to look way back to the early days of modern computing. As early as the 1960s, binary addressing was already the standard for working memory, or RAM. You see, when a computer puts something into memory, that data is assigned to a particular address so the system can recall where it's stored.

Binary addressing means that each memory address is expressed in binary digits, that is, ones and zeros. So for example, if you have a 10-bit address, there are two to the 10th power, or 1,024, possible addresses, not exactly 1,000. It therefore became convenient to refer to 1,024 bits as a kilobit, or the same number of bytes as a kilobyte, even though those uses didn't strictly line up with the meaning of the kilo prefix as it's used in fields outside of computer science.
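The 10-bit example can be checked with a couple of lines of Python. This is only a sketch, and `address_count` is a hypothetical helper name, not something from any real memory API.

```python
def address_count(bits: int) -> int:
    """Number of distinct addresses an address of the given width can express."""
    return 2 ** bits

# A 10-bit address can name 2^10 locations
print(address_count(10))  # 1024

# The highest 10-bit address, written in binary, is ten ones
highest = address_count(10) - 1
print(format(highest, "b"))  # 1111111111
```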

We've also seen this at times with mass storage devices.

During the 1980s, when the IBM PC platform was becoming popular, the industry needed to decide on a standard sector size. And if you didn't know, a sector is the smallest amount of data that can be stored at one time on a drive. The powers that be settled on making 512 bytes the default size for one sector. And if you put two sectors together, you have 1,024 bytes, which matched the binary addressing definition of kilobyte we discussed earlier.

Even today, when we're using much larger drives, sectors are still sized in powers of two, with a sector today usually coming in at 4,096 bytes.

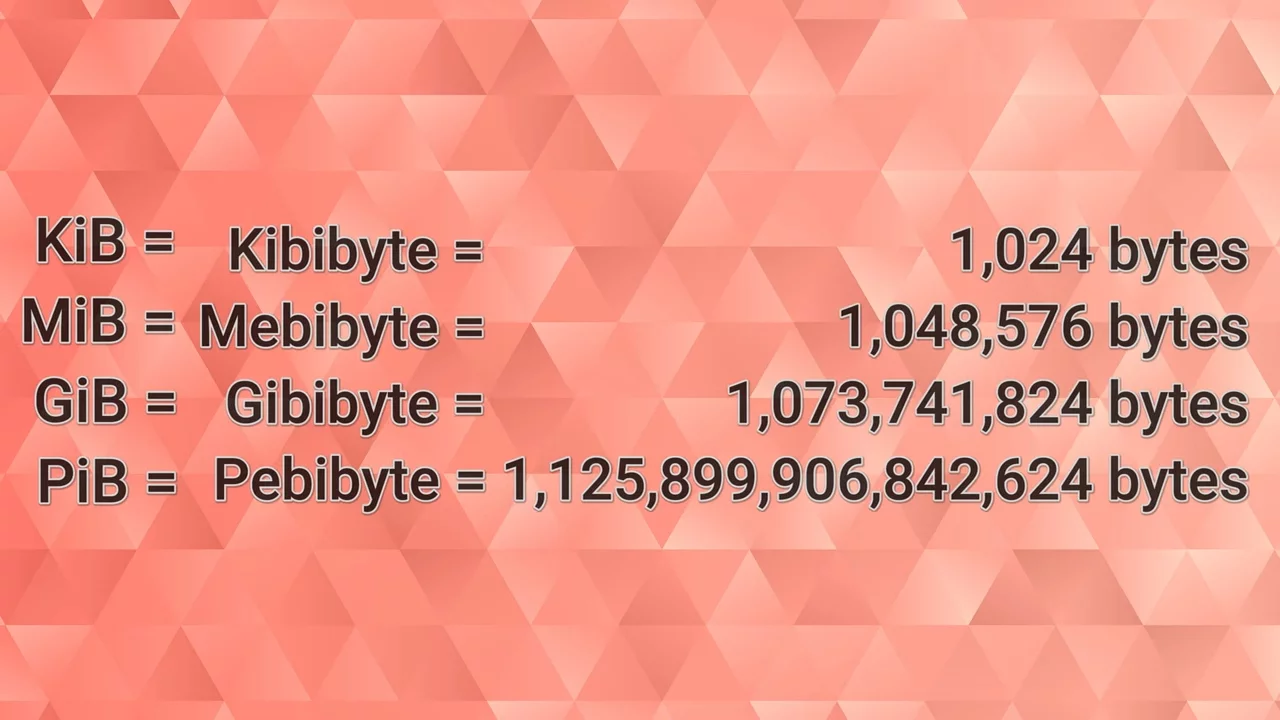

However, the storage industry today typically uses the more common "exactly 1 billion bytes" definition of gigabyte, since this definition allows drive makers to put larger capacities on their packaging and claim slightly faster speeds on their spec sheets. But wouldn't it be nice to know exactly what you're getting when you go out and buy a drive or memory card? Thankfully, more precise terms were published in 1999, with words like mebibyte and gibibyte that unambiguously refer to units that are powers of two, with the "bi" in the middle standing for binary.

If you want to write that out as an abbreviation, sticking a lowercase i between the capital G and the capital B, as in GiB, is the correct way to do it.
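If you'd like to see what an advertised capacity looks like in binary units, the conversion is a single division. The sketch below assumes the drive maker is using the 1-billion-byte gigabyte, and `gb_to_gib` is a made-up helper name for illustration.

```python
GB = 10**9    # decimal gigabyte, as typically printed on drive packaging
GIB = 2**30   # gibibyte, the unambiguous power-of-two unit

def gb_to_gib(gigabytes: float) -> float:
    """Convert a capacity in decimal gigabytes to gibibytes."""
    return gigabytes * GB / GIB

for advertised in (500, 1000):
    print(f"{advertised} GB = {gb_to_gib(advertised):.2f} GiB")
# 500 GB = 465.66 GiB
# 1000 GB = 931.32 GiB
```

So a drive sold as 500 GB would show up as roughly 466 GiB in a tool that counts in powers of two, before any file system overhead is taken into account.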

However, this obviously hasn't solved the problem completely. The more common words like megabyte and gigabyte can refer to data units in either system, and the words with "bi" in the middle haven't gained a whole lot of traction outside of tech circles. In fact, there have even been lawsuits over how these terms are used, with customers claiming that they got less storage than they paid for in certain circumstances. And as storage continues to grow and grow, this could become a more significant issue.

For example, there's about a 126-terabyte difference between a petabyte and a pebibyte. But maybe we'll just constantly be storing such large files on our devices that these differences won't matter very much.
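The gap widens with every step up the prefix ladder, which a short Python sketch can show. The `gap_percent` helper and the prefix list are illustrative names chosen here, not part of any standard library.

```python
PREFIXES = ["kilo", "mega", "giga", "tera", "peta"]

def gap_percent(n: int) -> float:
    """Percent by which the binary unit (1024^n) exceeds the decimal unit (1000^n)."""
    decimal = 1000 ** n
    binary = 1024 ** n
    return (binary - decimal) / decimal * 100

for n, name in enumerate(PREFIXES, start=1):
    print(f"{name}: binary unit is {gap_percent(n):.1f}% larger")

# Pebibyte minus petabyte, in bytes
print(f"{1024**5 - 1000**5:,} bytes")  # 125,899,906,842,624 bytes
```

The last line works out to roughly 125.9 terabytes, and the percentage gap climbs from about 2.4% at the kilo level to about 12.6% at the peta level.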
