The central processing unit, or CPU, that’s the key to making your home computer work is often likened to a brain, but the truth is it’s nothing like the brains found in nature or in our skulls. CPUs are great at performing precise calculations with huge numbers, but when it comes to learning and abstraction, the thinky meat between our ears has the CPU licked.

An emerging field of artificial intelligence called neuromorphic computing is attempting to mimic how the neurons in our own brains work, and researchers from Intel and IBM are making true silicon brains a reality.

It’s easy to get a little lost in the terminology here, because another technology at the forefront of AI is called deep learning, and it relies on something called a neural network.

Neural networks are a software approach that loosely mimics how brains work. A neural network adjusts itself as it’s shown lots and lots of examples of what it’s supposed to learn, but it may need to see thousands to millions of examples to achieve the desired result, like telling the difference between a chihuahua and a blueberry muffin. Clearly that’s not how we learn. I don’t need to see millions of pictures of a dog before I know what a dog is.
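To make “a neural network changes when it’s shown examples” a little more concrete, here is a minimal sketch of a perceptron. A perceptron is a single artificial neuron and a far simpler ancestor of the deep networks mentioned above. This version is trained with the classic perceptron update rule. The two “features” and the four labeled examples are hypothetical toy data, not real image data. Even this toy has to loop over its examples repeatedly, and real networks need vastly more of them.

```python
# A minimal perceptron sketch: one artificial "neuron" whose weights are
# nudged every time it misclassifies a labeled example.
# The features and examples below are hypothetical toy data.

def predict(weights, bias, features):
    """Return 1 if the weighted sum is above zero, else 0."""
    total = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if total > 0 else 0


def train(examples, epochs=20, learning_rate=0.1):
    """Classic perceptron rule: move the weights toward each misclassified example."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            # error is 0 when the guess was right, so nothing changes then
            error = label - predict(weights, bias, features)
            weights = [w + learning_rate * error * x for w, x in zip(weights, features)]
            bias += learning_rate * error
    return weights, bias


# Hypothetical features: (furriness, blueberry-ness); label 1 = dog, 0 = muffin
examples = [
    ((1.0, 0.0), 1),
    ((0.0, 1.0), 0),
    ((1.0, 0.2), 1),
    ((0.1, 1.0), 0),
]

weights, bias = train(examples)
print(predict(weights, bias, (0.9, 0.1)))  # 1 (dog-like)
print(predict(weights, bias, (0.0, 0.9)))  # 0 (muffin-like)
```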

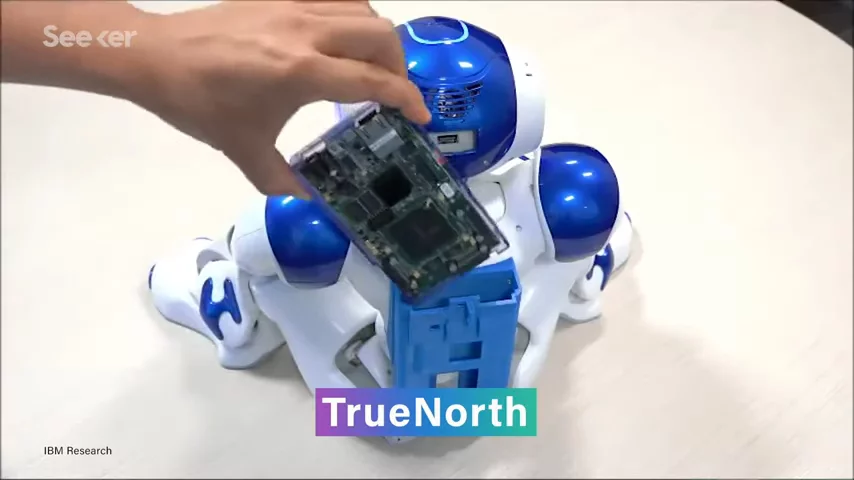

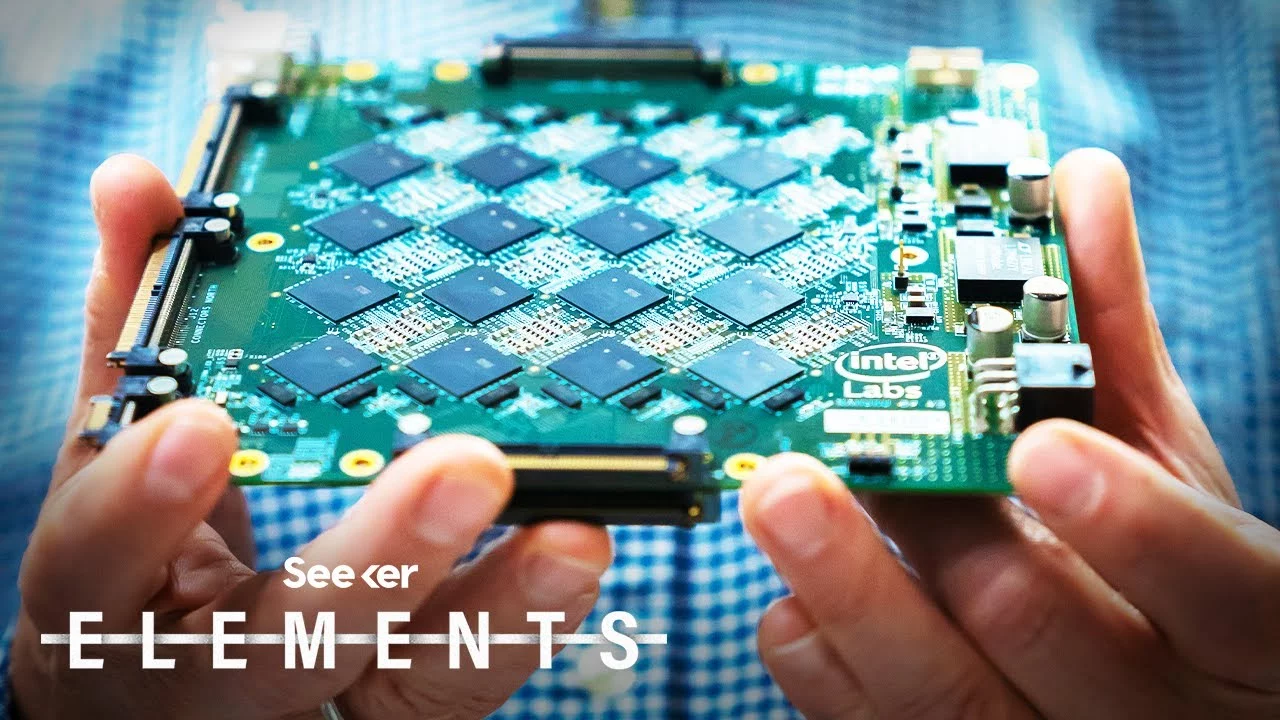

So, to solve this, researchers from IBM and Intel are trying to mimic brains at the hardware level too. IBM revealed its brain-inspired chip, TrueNorth, in 2014, while Intel introduced its chip, Loihi, in 2017.


The two neuromorphic chips use the same silicon transistors commonly found in conventional chips, but they’re arranged to interconnect more like neurons.

TrueNorth’s one million neurons are connected by 256 million synapses, while Loihi’s 130,000 neurons are each capable of communicating with thousands of others for a total of over 130 million synapses.
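Dividing the totals above gives a rough average number of synapses per neuron on each chip. This is simple arithmetic on the rounded figures quoted here, and it assumes the synapses are spread evenly across neurons, which a real network need not do:

$$
\text{TrueNorth: } \frac{256 \times 10^{6}\ \text{synapses}}{1 \times 10^{6}\ \text{neurons}} = 256\ \text{synapses per neuron}
$$

$$
\text{Loihi: } \frac{130 \times 10^{6}\ \text{synapses}}{1.3 \times 10^{5}\ \text{neurons}} \approx 1000\ \text{synapses per neuron}
$$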

TrueNorth and Loihi also put two parts of a computer that are normally separate, memory and computation, onto a single chip.

In a typical computer like the one you have at home, the CPU handles computation and shuffles data back and forth to and from the Random Access Memory, or RAM. But this separation slows things down and draws more power, and it’s not how things work in our own brains.

In another drastic departure from standard chips, TrueNorth and Loihi do not use a clock to update information across the system in a synchronized manner. Instead, the neurons in the chip fire independently, and the timing of these spikes of activity can be used as another way to encode information.
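A common textbook way to model a spiking neuron is the leaky integrate-and-fire (LIF) model, and the sketch below uses it to show how spike timing can carry information. It assumes this simple model as a stand-in for the neuron circuits on TrueNorth and Loihi, and the threshold and leak values are hypothetical rather than taken from either chip. The loop runs in discrete time steps purely for convenience of simulation; it is not meant to depict how the chips themselves schedule activity.

```python
# A minimal leaky integrate-and-fire (LIF) neuron, simulated step by step.
# Assumption: this textbook model stands in for the chips' neuron circuits;
# threshold and leak are hypothetical values chosen for illustration.

def simulate_lif(input_current, threshold=1.0, leak=0.9):
    """Return the time steps at which the neuron spikes.

    Each step the membrane potential decays by `leak`, then adds the input.
    When it reaches `threshold`, the neuron spikes and resets to zero.
    """
    potential = 0.0
    spike_times = []
    for t, current in enumerate(input_current):
        potential = potential * leak + current
        if potential >= threshold:
            spike_times.append(t)
            potential = 0.0  # reset after firing
    return spike_times


# A stronger input pushes the neuron over threshold sooner and more often,
# so *when* it spikes says something about how strong the input was.
strong = simulate_lif([0.6] * 10)
weak = simulate_lif([0.3] * 10)
print(strong)  # [1, 3, 5, 7, 9]
print(weak)    # [3, 7]
```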

All of these tweaks to how information moves around mean that neuromorphic chips can learn quickly and use far less energy than a conventional CPU.

Best of all, though, are the problems they can solve thanks to their novel design. Take constraint satisfaction problems, where there are many possible answers but only one fits all the constraints. Think Sudoku puzzles. Neuromorphic computers can also be used for optimization tasks, like the famous traveling salesman problem, where finding the best route among millions of options can be very challenging, even for a supercomputer.
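To see why the traveling salesman problem gets out of hand so quickly, here is a brute-force sketch that simply tries every possible round trip. It is a conventional-CPU illustration of the problem, not a depiction of how a neuromorphic chip solves it, and the city coordinates are hypothetical. With the start city fixed and direction ignored, *n* cities have (*n* − 1)!/2 distinct routes, so just 12 cities already give 19,958,400 of them.

```python
# Brute-force traveling salesman: try every round trip, keep the shortest.
# Conventional-CPU illustration only; the city coordinates are hypothetical.
import itertools
import math


def route_count(n_cities):
    """Distinct round trips: fix the start city and ignore travel direction."""
    return math.factorial(n_cities - 1) // 2


def tour_length(order, coords):
    """Total length of a closed loop visiting the cities in `order`."""
    return sum(
        math.dist(coords[order[i]], coords[order[(i + 1) % len(order)]])
        for i in range(len(order))
    )


def shortest_tour(coords):
    """Check every ordering that starts at city 0 and return the best one."""
    candidates = ((0,) + perm for perm in itertools.permutations(range(1, len(coords))))
    best = min(candidates, key=lambda order: tour_length(order, coords))
    return best, tour_length(best, coords)


cities = [(0, 0), (0, 1), (1, 1), (1, 0)]  # corners of a unit square
print(shortest_tour(cities))  # ((0, 1, 2, 3), 4.0): around the edges
for n in (5, 10, 15):
    print(n, "cities:", route_count(n), "routes")
```

The brute-force search is exact but hopeless beyond a handful of cities, which is why practical solvers on any hardware rely on heuristics or approximations instead.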

Since Loihi is a research chip that was never intended for mass production, there aren’t many of them for researchers to work with. Still, Intel wired together 768 of them to create Pohoiki Springs, a system about the size of five standard servers that boasts 100 million neurons. That’s in the same league as the brain of a small mammal. And yet, despite its size and complexity, it needs less than 500 watts to operate.

By contrast, an overkill gaming PC can use up to twice that much power, and it still isn’t as “smart” as a squirrel.
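The Pohoiki Springs figures can be sanity-checked with a little arithmetic. This sketch assumes each Loihi chip contributes about 130,000 neurons (the rounded figure quoted earlier), treats 500 W as an upper bound on total power, and takes “twice that much” literally for the gaming PC.

```python
# Back-of-the-envelope check of the Pohoiki Springs numbers.
# Assumptions: ~130,000 neurons per Loihi chip (rounded), 500 W as an upper
# bound on system power, and a gaming PC drawing "up to twice that much".
chips = 768
neurons_per_chip = 130_000
max_power_watts = 500

total_neurons = chips * neurons_per_chip
watts_per_neuron = max_power_watts / total_neurons
gaming_pc_watts = 2 * max_power_watts

print(f"{total_neurons:,} neurons")  # 99,840,000 neurons
print(f"{watts_per_neuron * 1e6:.1f} microwatts per neuron at most")  # 5.0
print(f"gaming PC: up to {gaming_pc_watts} W")  # up to 1000 W
```

So 768 chips land just under the 100 million neurons quoted, at roughly five millionths of a watt per neuron or less.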

Neuromorphic computers are not poised to replace conventional ones any time soon. Because this kind of hardware is still emerging, the software that can make the best use of it needs time to develop.

Still, it’s something to look forward to. As the technology matures, we’ll be able to crack bigger and tougher problems that were previously beyond the reach of our current CPU “brains.” And while our own brains are more adaptable than a conventional CPU, our conscious data processing speed is estimated at a paltry 120 bits per second.
