Let’s find out what the differences are between the Intel i5-9300H and i7-9750H laptop processors, starting with the specs.

| | i5-9300H | i7-9750H |
|---|---|---|
| Cores/Threads | 4/8 | 6/12 |
| Base Clock | 2.4GHz | 2.6GHz |
| Single Core Boost | 4.1GHz | 4.5GHz |
| All Core Boost | 4.0GHz | 4.0GHz |
| Cache | 8MB | 12MB |
| TDP | 45W | 45W |
| Memory | DDR4-2666 | DDR4-2666 |
| Architecture | 14nm++ | 14nm++ |
| Unlocked | No | No |
| Released | Q2 2019 | Q2 2019 |

We can see that the key difference is that the i7 has 6 cores versus the 4 in the i5. The i7 has a 400MHz higher single core turbo boost speed, however when all cores are active they both max out at 4GHz. Along with 50% more cores, the i7 also has 50% more cache, otherwise both chips use the same 9th gen architecture.
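As a quick sanity check, the percentages quoted above can be reproduced from the spec table. This is only a minimal sketch: the helper name `percent_more` and the dictionary layout are made up for illustration, and the values are copied from the table.

```python
def percent_more(new: float, old: float) -> float:
    """Return how much larger `new` is than `old`, in percent."""
    return (new - old) / old * 100


# Values from the spec table, as (i5-9300H, i7-9750H)
specs = {
    "cores": (4, 6),
    "cache_mb": (8, 12),
    "single_core_boost_ghz": (4.1, 4.5),
    "all_core_boost_ghz": (4.0, 4.0),
}

for name, (i5, i7) in specs.items():
    print(f"{name}: i7 is {percent_more(i7, i5):.1f}% higher")
```

The single core boost works out to a little under 10%, which is worth keeping in mind for the single core results later on.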

The laptops I’m testing with are my Lenovo Y540 with i7-9750H, and Lenovo Y7000 with i5-9300H.

Both use basically the same chassis, so thermals and battery should be comparable. We’ll start with the games, then look at applications like rendering and video editing, followed by thermals, power draw and clock speeds.

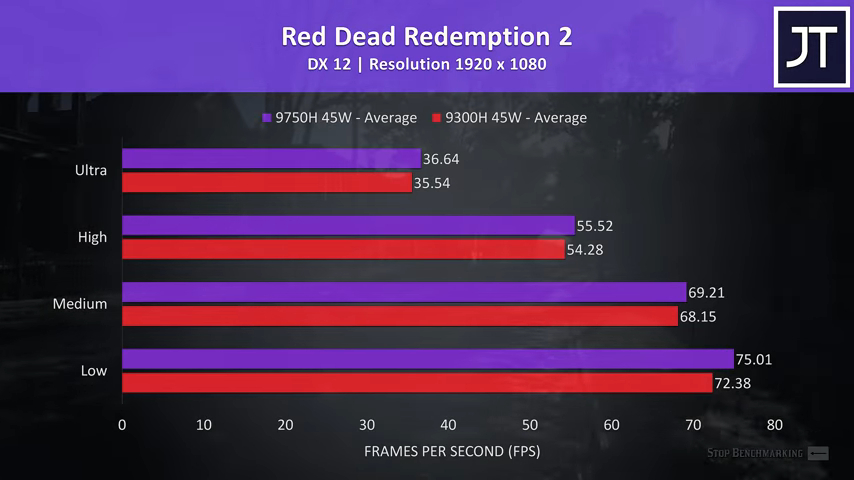

Red Dead Redemption 2 was tested with the game’s built-in benchmark tool. I’ve got the i5-9300H shown by the red bar on the bottom, and the i7-9750H shown by the purple bar on top, and I’ve tested all setting levels, which are noted on the left. In this test the i7 was just 3% faster in average FPS at ultra settings, and about the same at low too.

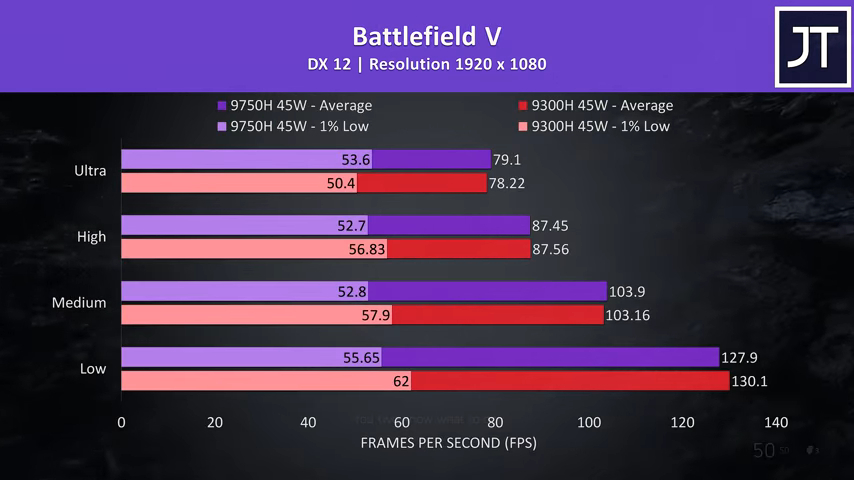

Battlefield V was tested in campaign mode running through the same section of the game on both machines. The results were quite close here. At ultra settings the i7 was only 1% ahead, which is honestly within the margin of error anyway. Interestingly the i5 was ahead in 1% low at all other settings, however I have found 1% low performance inconsistent in this title.

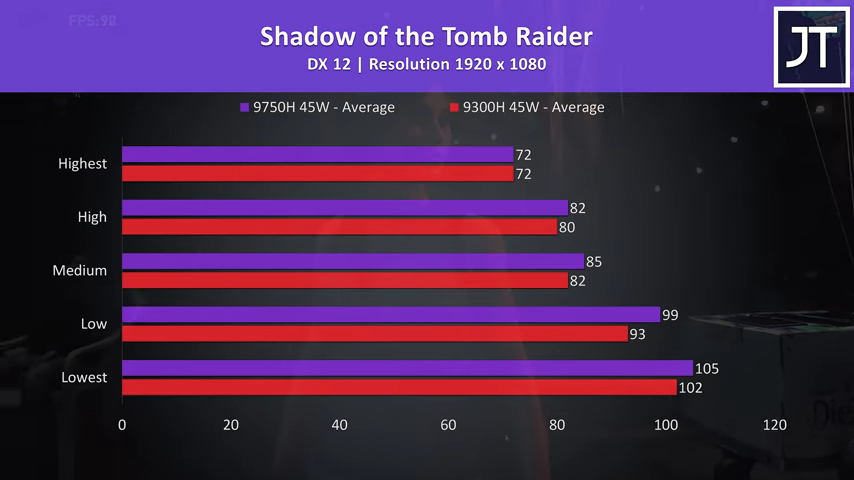

Shadow of the Tomb Raider was tested using the built in benchmark. The i7 was ahead in all tests, although by the time we step up to highest settings the result was identical, likely as we’re more GPU bound at this stage. At lowest settings, the i7 was just 3% faster than the i5.

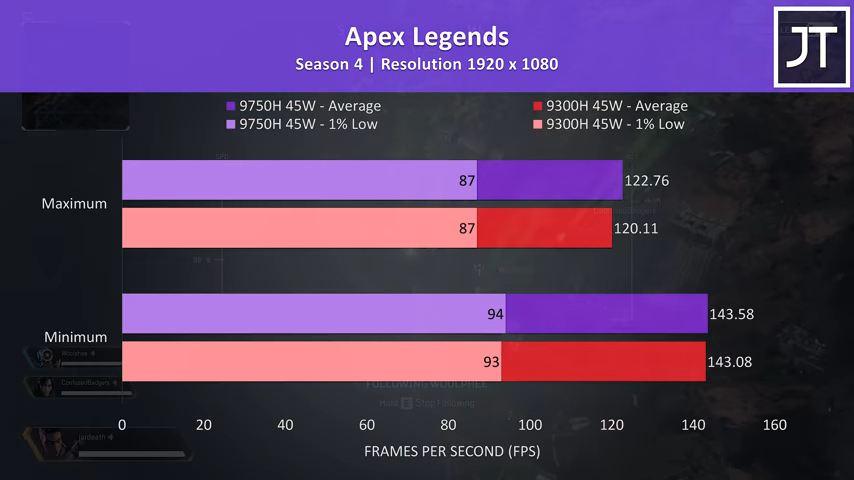

Apex Legends was tested by running through the same section of the map on both laptops. Unfortunately I tested with the default frame cap enabled, so both were about the same at minimum settings, however even maxed out the i7 was only 2% faster than the i5.

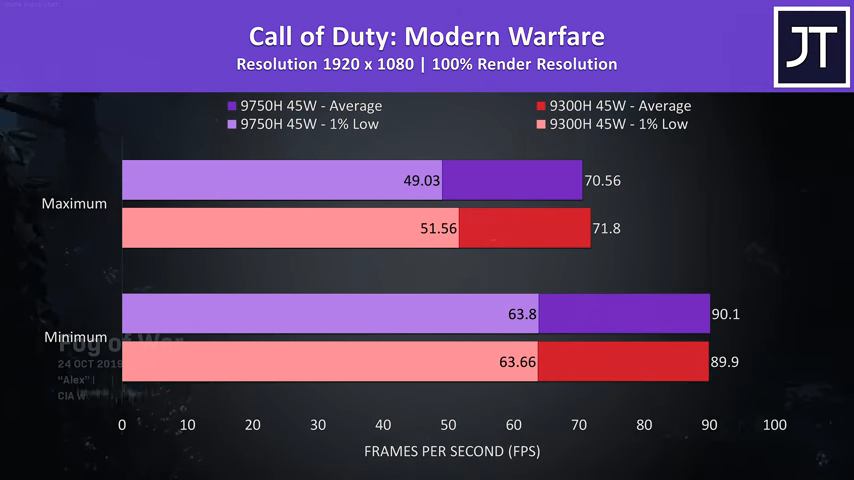

Call of Duty Modern Warfare was also tested with maximum or minimum settings, and again the results were almost identical at minimum, however interestingly the i5 was actually slightly ahead at ultra, granted it’s only 1 FPS, which I’d say is basically margin of error, even though this is the average of 3 test runs.

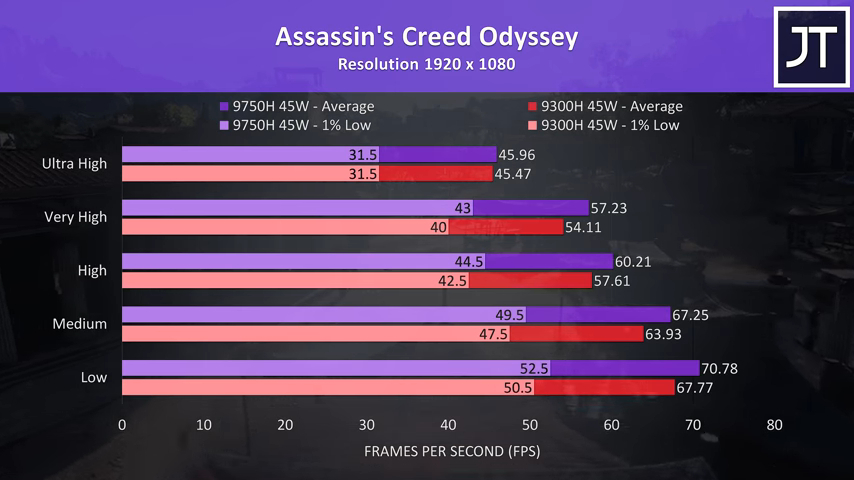

Assassin’s Creed Odyssey was tested using the game’s benchmark. The i7 is ahead at the lower setting presets, 4% faster than the i5 at low settings. At maximum, where we’re presumably less CPU bound, it’s just 1% faster with the same 1% low performance.

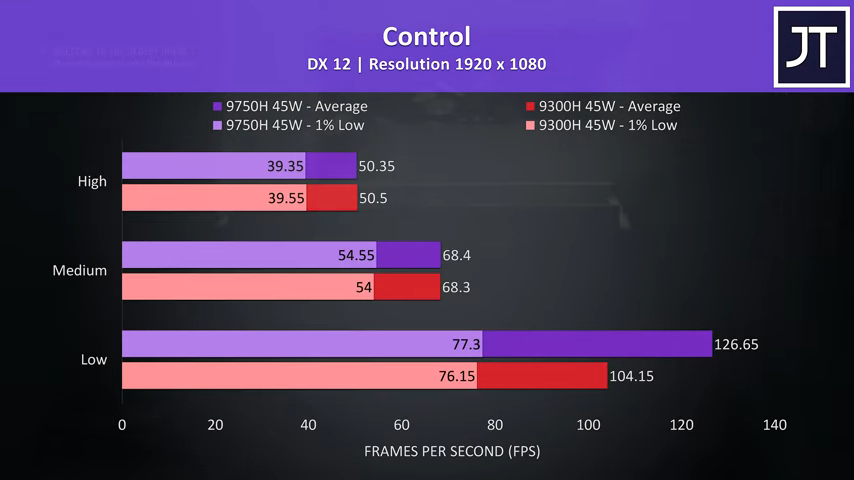

Control was tested running through the same area of the game on both machines. At high and medium setting presets the performance was essentially identical, but with low settings for some reason the i7 saw a nice improvement. My guess is the low setting preset must be more CPU bound compared to the others, which is why the i7 was almost 22% faster here.

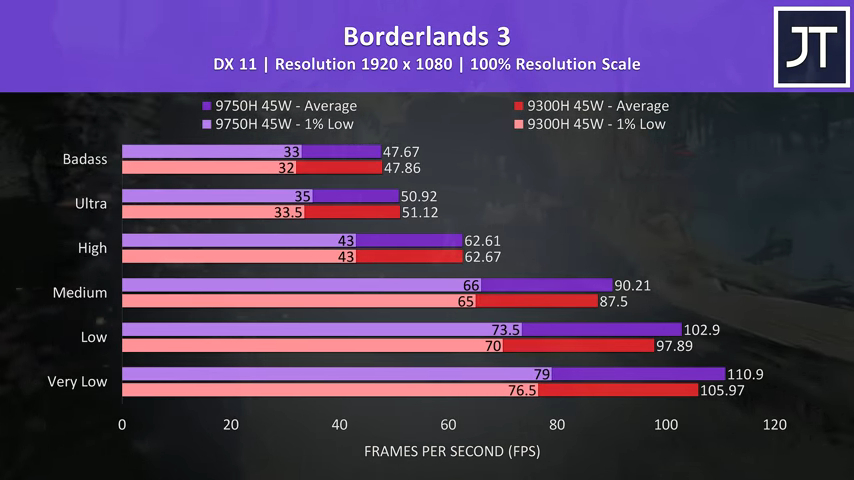

Borderlands 3 was tested with the built in benchmark and follows the same pattern as many other titles tested, in that the results are quite close together, especially at higher settings. The top three settings have basically the same average FPS with only minor gains to 1% low results, while the i7 was less than 5% faster at low settings.

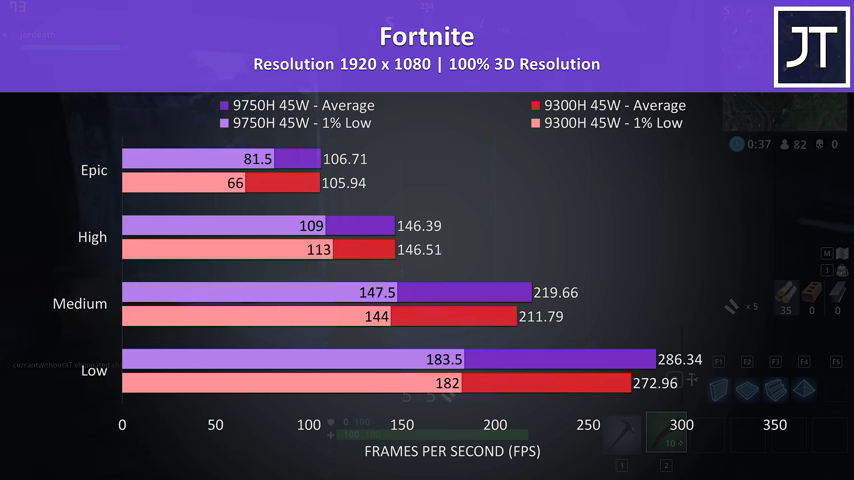

Fortnite was tested using the replay feature, different replay files but running through the same part of the game on both laptops. The average FPS at high and epic is about the same regardless of CPU, but the 1% low results are a bit strange. The two chips are similar in 1% low performance in all but epic settings, where the i7 saw a large 23% improvement.

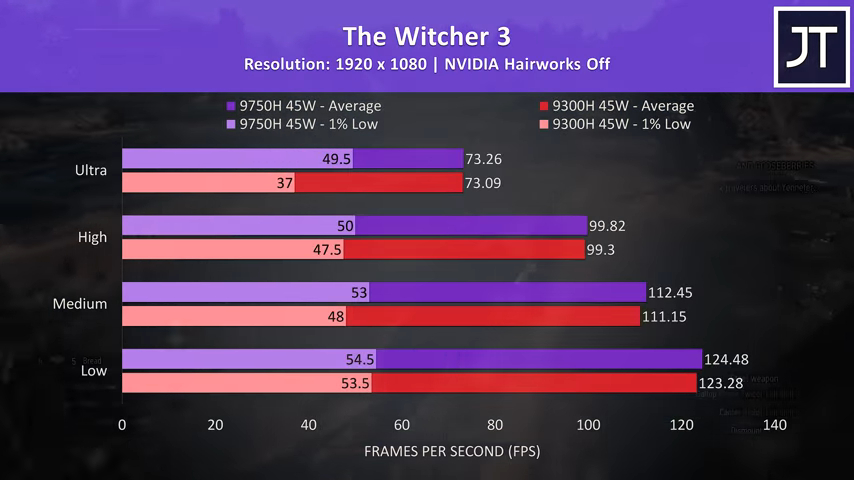

The Witcher 3 was similar in that the 1% low performance was way up at the highest setting preset when compared to the others, however outside of this the averages were much the same regardless of the processor in use.

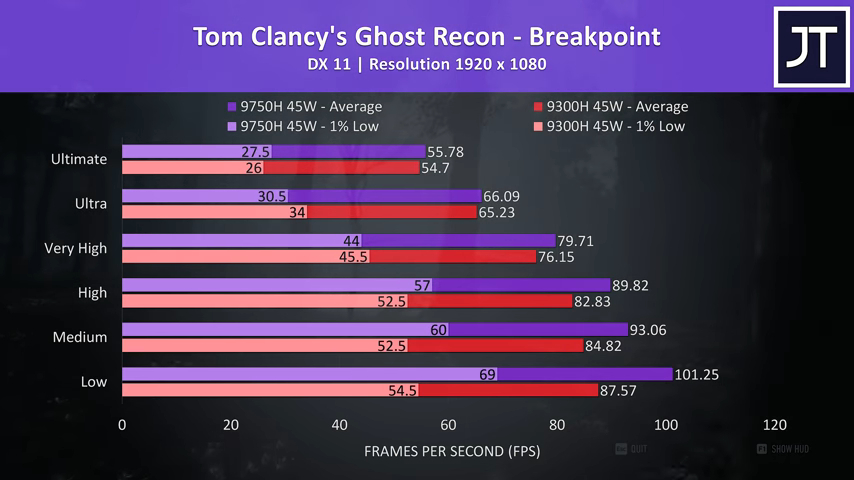

Ghost Recon Breakpoint was tested using the game’s benchmark tool. The i7 was ahead in all tests in terms of average FPS, however the margin closes in at higher settings. At ultimate, the i7 was just 2% faster than the i5, but at low settings it was just under 16% faster.

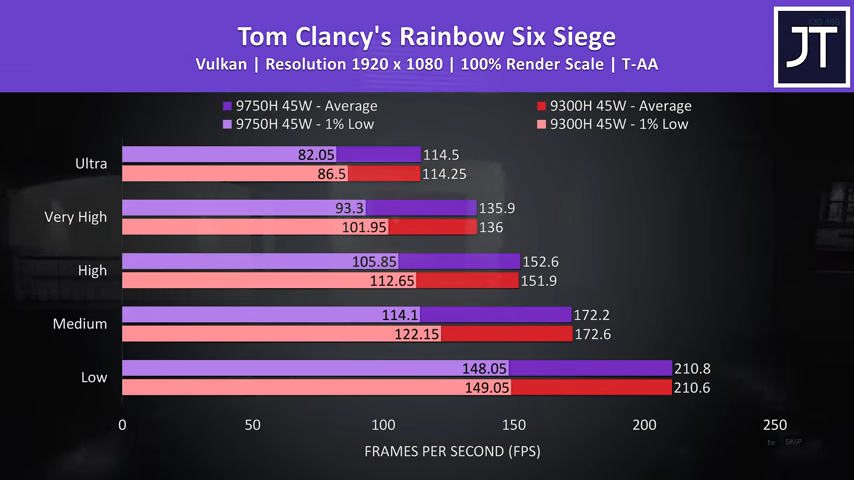

Rainbow Six Siege was also tested using the game’s benchmark tool with the latest Vulkan option. The average frame rates are so close here that there’s not going to be a perceivable difference between the two, and interestingly the i5 was actually consistently coming out in front with the 1% low performance. The i5 does actually clock higher in multicore loads as we’ll see a bit later, so this could be part of the reason.

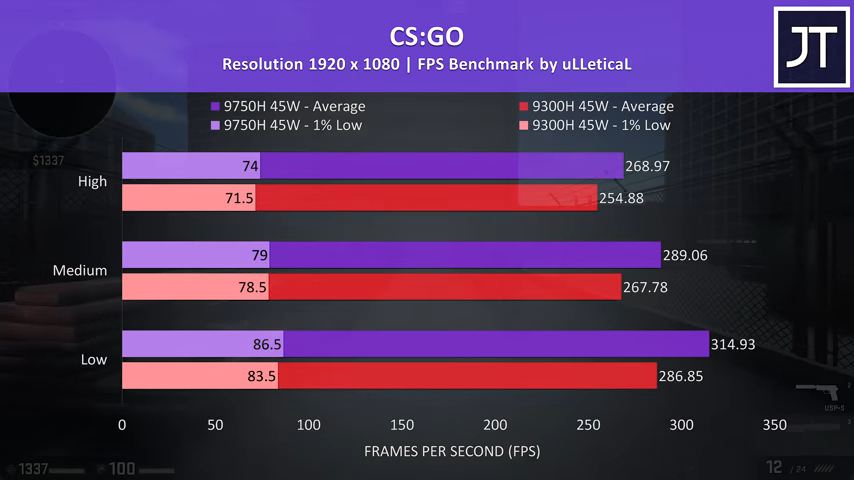

CS:GO was tested with the Ulletical FPS benchmark, and is a game that really cares about CPU power, so it wasn’t much of a surprise that this game saw one of the largest differences out of all 15 titles tested. At max settings the i7 was 5.5% faster than the i5, and then almost 10% faster at low settings where we’re less GPU bound.

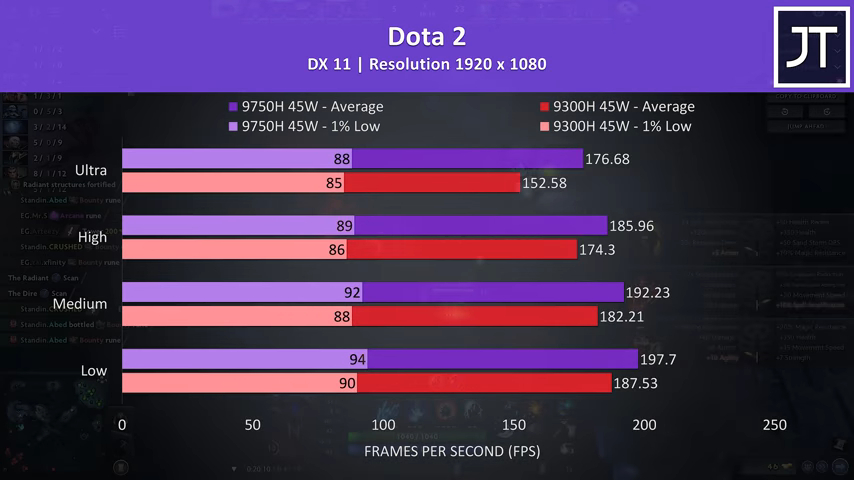

Dota 2 is another game that typically relies more on CPU power. At low settings, the i7 was 5% faster than the i5. Unlike most other games, I’ve actually found the gap to widen as we step up to higher setting levels. By the time we’re at ultra settings, the i7 was actually 16% faster than the i5 in terms of average FPS, though the 1% low was only 3.5% faster.

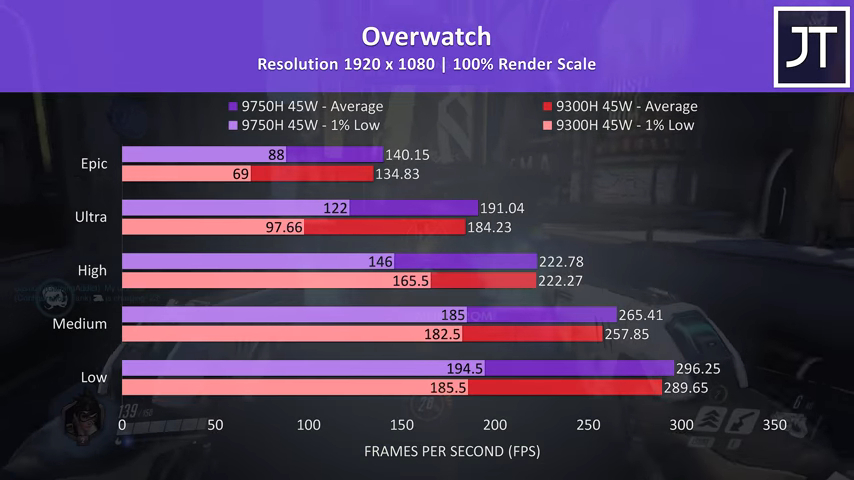

Overwatch was tested playing in the practice range. While this performs better than actual gameplay, it allows me to more accurately perform the exact same test run, which is perfect for a comparison like this. As another esports title, the CPU matters more compared to many others tested. There was a 4% higher average FPS at max settings with the i7, which doesn’t sound like much, but for context that makes it the 3rd biggest gain out of all 15 titles tested. The 1% lows were nicely improved at epic and ultra, though at the same time the i5 was ahead at high, with minor change at medium and low.

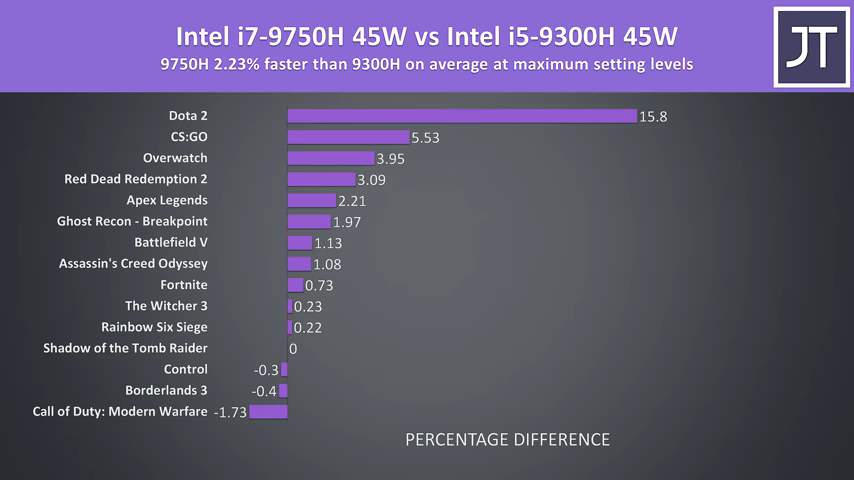

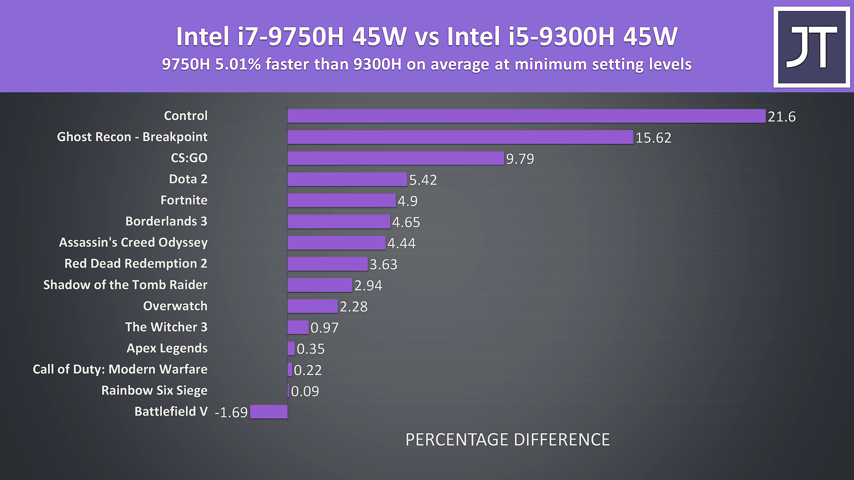

These are the differences to average FPS over all 15 games tested. On average with the highest setting levels in use, the Intel i7-9750H was just 2% faster than the i5-9300H. As we can see here, the results really vary by game. The three top games are esports titles, so if you’re serious about those then the i7 may make more sense there, otherwise at max settings where we’re more GPU bound, it seems that for the most part it doesn’t matter too much.

As we saw throughout the games, there was generally a larger difference with the lowest possible settings, as we’re more CPU bound here. At minimum in these same titles, the i7 was now 5% faster on average when compared to the i5. Some non esports titles are now dominating the top of the graph, so it seems that some games may benefit from more cores when the GPU is taking less of the work, but again on average the difference isn’t anything too amazing.

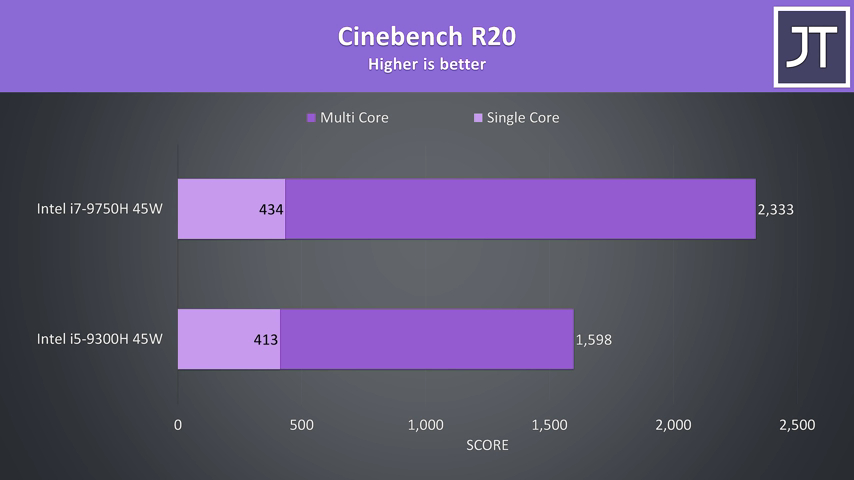

That’s it for gaming, let’s move on to the productivity focussed tests, starting with Cinebench R20.

I’ve got the quad core i5 down the bottom, and the six core i7 up top. The i7 is obviously ahead in the multicore test. It’s got 50% more cores, and as such is scoring 46% higher than the i5. The i7 is only 5% ahead in terms of single core performance though. If you recall, the i7 can boost 400MHz higher in single core workloads.

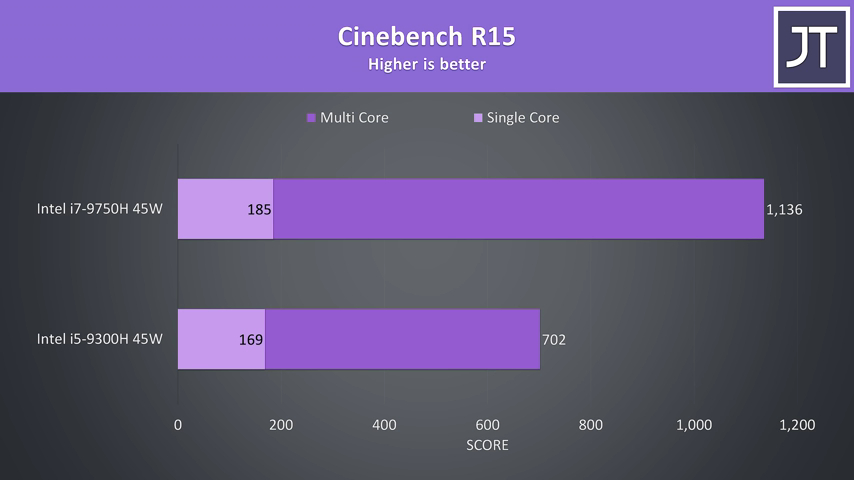

I’ve also tested the older Cinebench R15 as many people still use it, so these numbers can be used for comparison.

This time the i7 was a larger 9.5% ahead when it came to single core performance, and a much larger 62% faster at multicore. This test doesn’t take long to complete, so that larger difference could be due to PL2 limits.

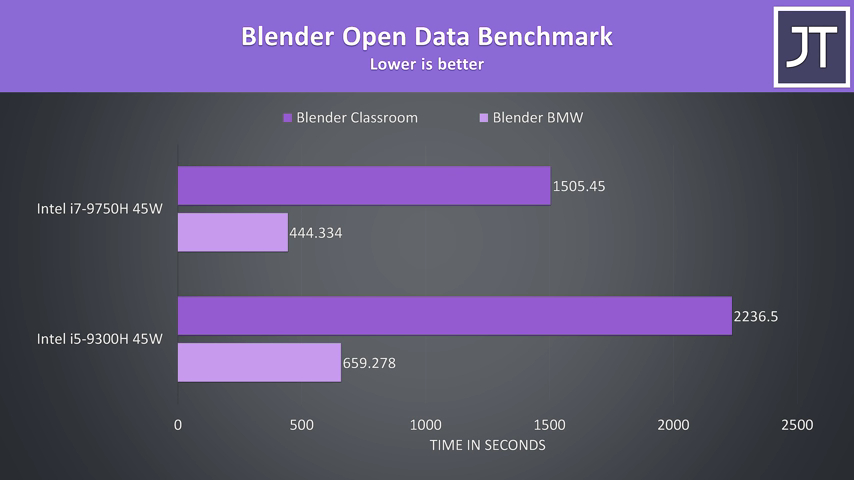

I’ve run the BMW and Classroom benchmarks with Blender, and this is another test which benefits from additional cores, so it’s no surprise that the i7 is coming out ahead here.

The i7 completes both tasks around 48% faster at the stock 45W limit, so pretty good scaling given the 50% core increase with the i7.

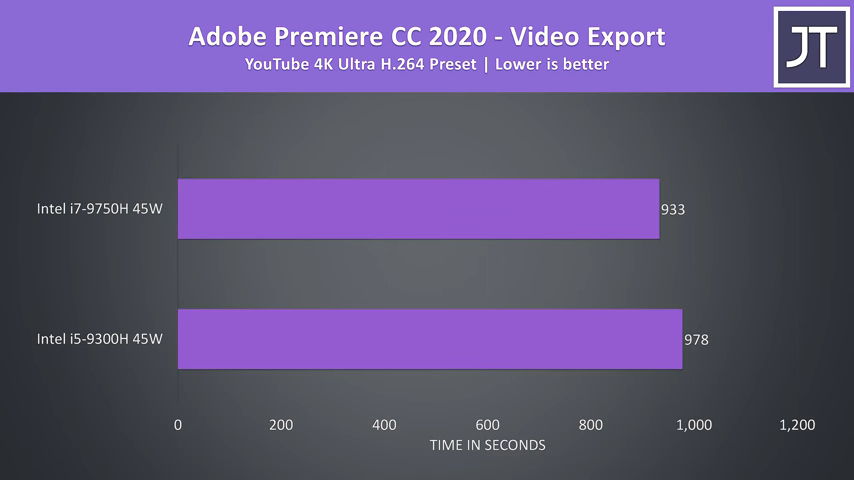

I’ve used Adobe Premiere to export the same 4K video, and the results surprised me.

I thought this task was generally pretty CPU heavy, so I figured the difference would be larger, however that was not the case, at least with the particular project I test with. My guess is both are primarily utilizing Quick Sync in this workload, so the iGPU may be the limit rather than core count.

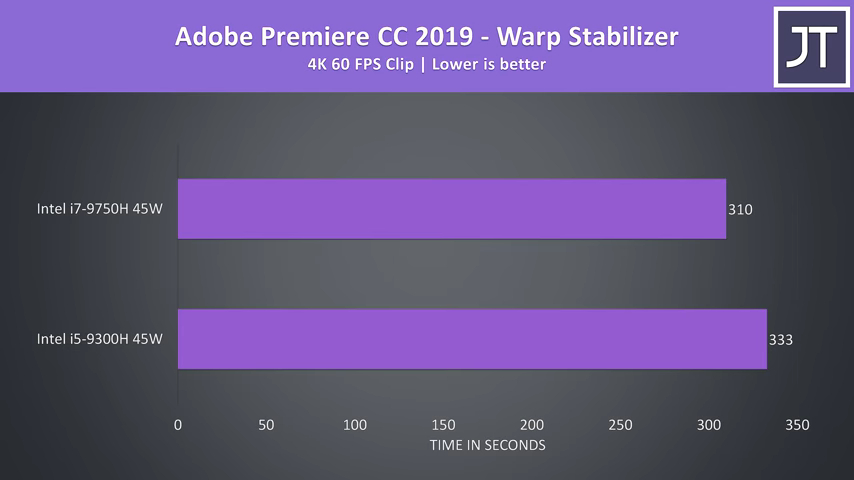

I’ve also used the warp stabilizer effect in Adobe Premiere which basically uses a single core to smooth out a clip.

There was a larger performance difference here compared to exporting, with the i7 completing the task 7% faster than the i5, right in between the previous two Cinebench single core improvements.

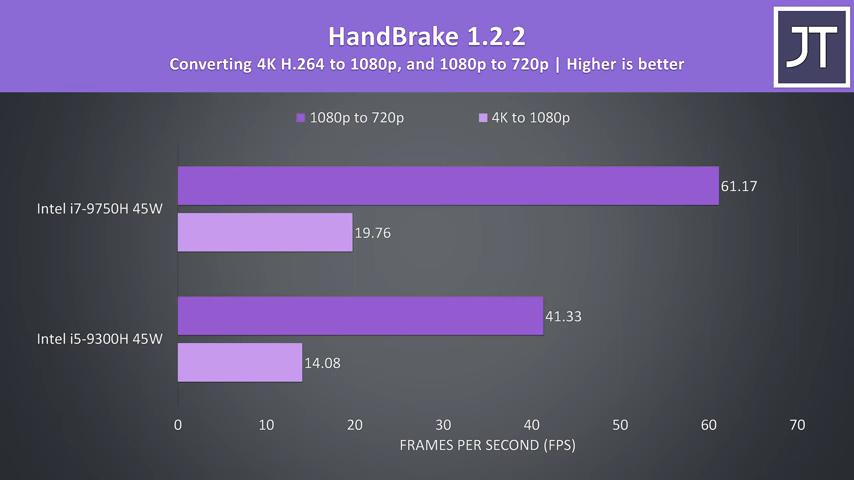

Handbrake was used to convert a 4K video file to 1080p, and then a separate 1080p file to 720p.

This is another test that benefits greatly from CPU cores. The i7 was completing the 4K transcode 40% faster than the i5, then the i7 was 48% faster with the 1080p to 720p conversion.

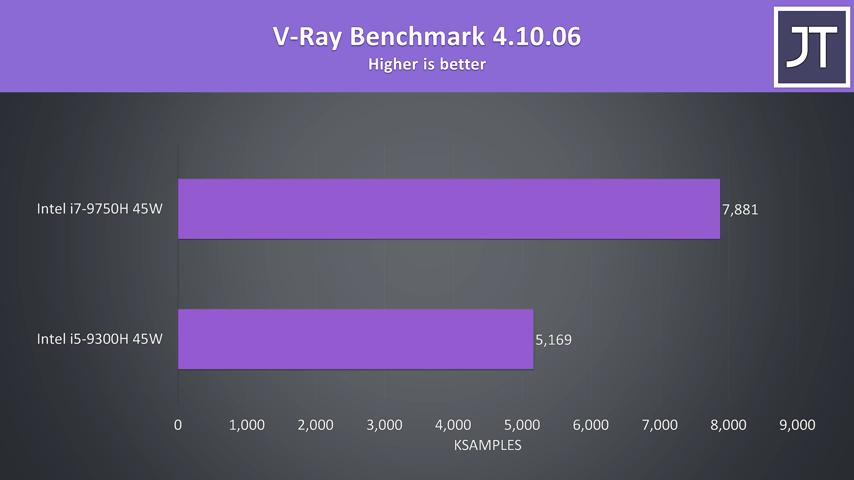

The V-Ray benchmark uses the CPU to render out a scene, so yet another task that’s typically faster the more cores you have.

The i7 was 52% faster in this test, which makes sense given it’s got 50% more cores and that they’re all being utilized.

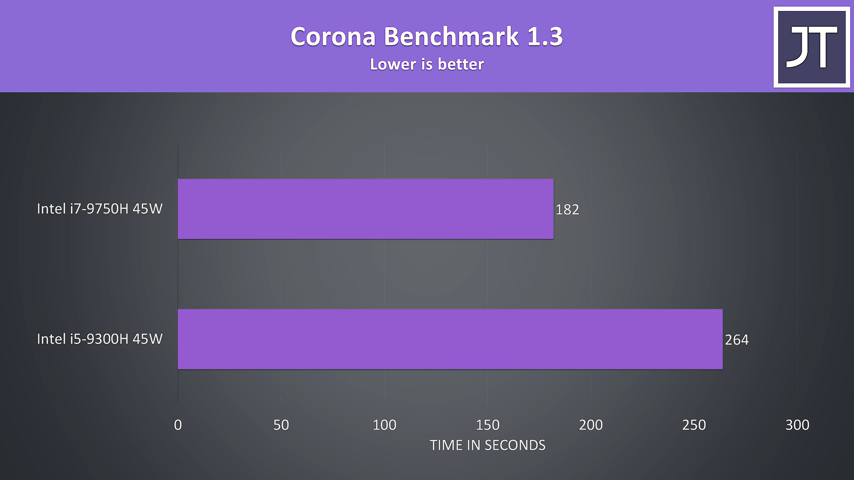

This is another benchmark that renders out a scene with the processor. There’s slightly less of a difference here, but these rendering tests load all cores heavily, so the i7 was able to complete the task 45% faster than the i5.

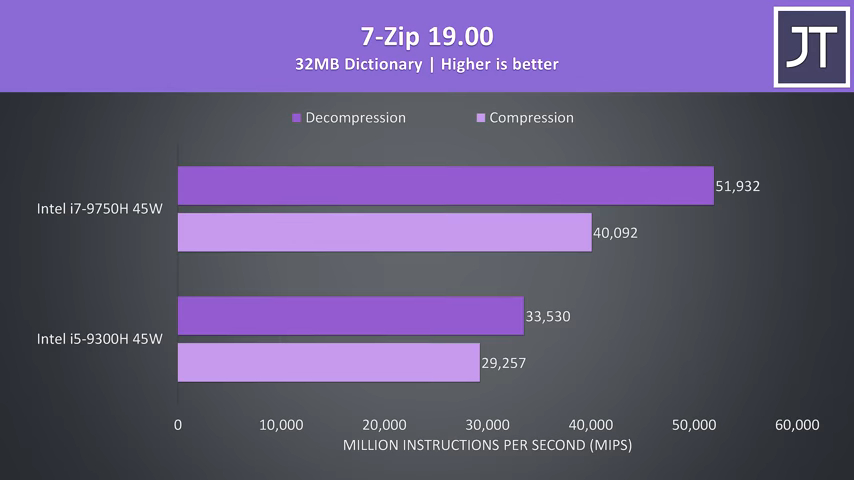

I’ve used 7-Zip to test compression and decompression speeds.

The compression speeds saw a 37% improvement with the i7, then a larger 55% boost when it came to decompression speed.

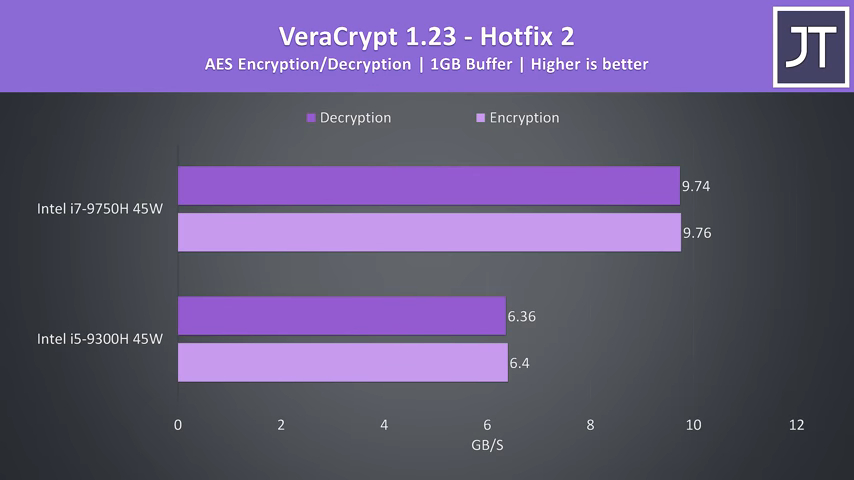

VeraCrypt was used to test AES encryption and decryption speeds.

Both tasks were around 53% faster with the i7, so it seems like either the 50% additional cores or cache are helping out in this workload and it’s scaling quite nicely.

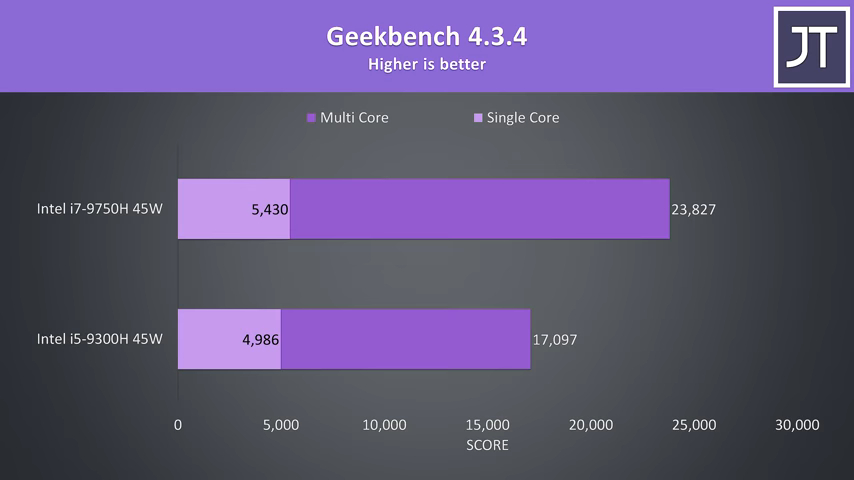

GeekBench was able to score 9% faster in single core performance with the i7, and 39% faster when it came to multicore, so the workloads this runs aren’t quite as core efficient as many of those rendering tasks we saw hit 50% higher speeds.

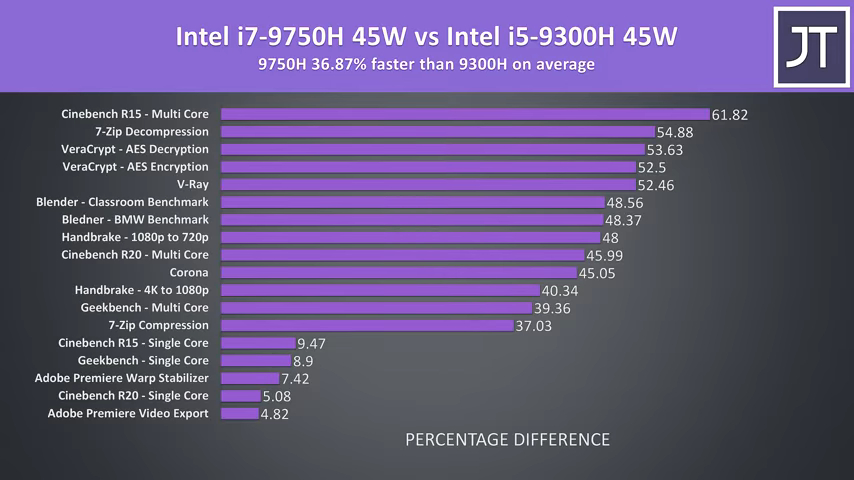

Here’s a summary of all of the applications just tested.

On average over these tests, the Intel i7-9750H was 37% faster than the i5-9300H. That overall percentage isn’t too useful though, as it includes both single and multicore tests. We can see some of the rendering tests were around 50% better with the extra cores, then single core performance was anywhere from 5 to almost 10% faster with the i7. This is about what you’d expect, given the i7 has 50% more cores, and the single core turbo boost speed is almost 10% faster than the i5.
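One way to read the multicore numbers is as scaling efficiency: the measured uplift divided by the 50% increase in core count. The sketch below is just an illustration using the percentages quoted in the text. The name `scaling_efficiency` is made up, and it assumes every "X% faster" figure can be treated as a throughput gain, which may not be exactly how each timed test was calculated.

```python
CORE_INCREASE_PCT = 50  # 6 cores versus 4

# Multicore uplifts quoted in the text, in percent (i7 over i5)
multicore_uplift_pct = {
    "Cinebench R20": 46,
    "Cinebench R15": 62,
    "Blender": 48,
    "Handbrake 4K to 1080p": 40,
    "Handbrake 1080p to 720p": 48,
    "V-Ray": 52,
    "7-Zip compression": 37,
    "7-Zip decompression": 55,
    "VeraCrypt AES": 53,
    "Geekbench multicore": 39,
}


def scaling_efficiency(uplift_pct: float,
                       core_increase_pct: float = CORE_INCREASE_PCT) -> float:
    """Return measured uplift as a fraction of the extra core count."""
    return uplift_pct / core_increase_pct


for test, uplift in multicore_uplift_pct.items():
    # 1.00 means the uplift matches the extra cores exactly
    print(f"{test}: {scaling_efficiency(uplift):.2f}")
```

Values above 1.00, like Cinebench R15, suggest something other than core count is helping, such as the extra cache or short term boost behaviour.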

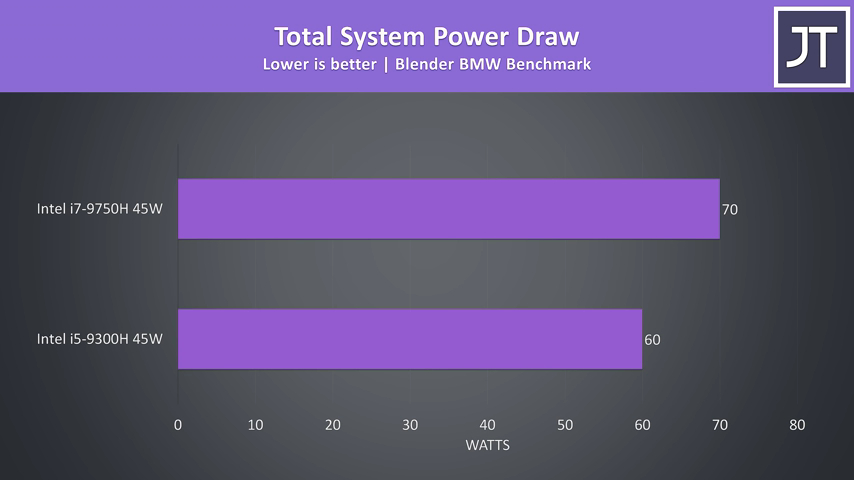

When we look at the total power drawn from the wall with the core heavy Blender test running, the i7 is using about 10 watts more, despite both chips apparently running with the sustained stock 45 watt TDP limit. At least this was what was being reported by HWiNFO when running the Blender test over the course of 20-30 minutes.

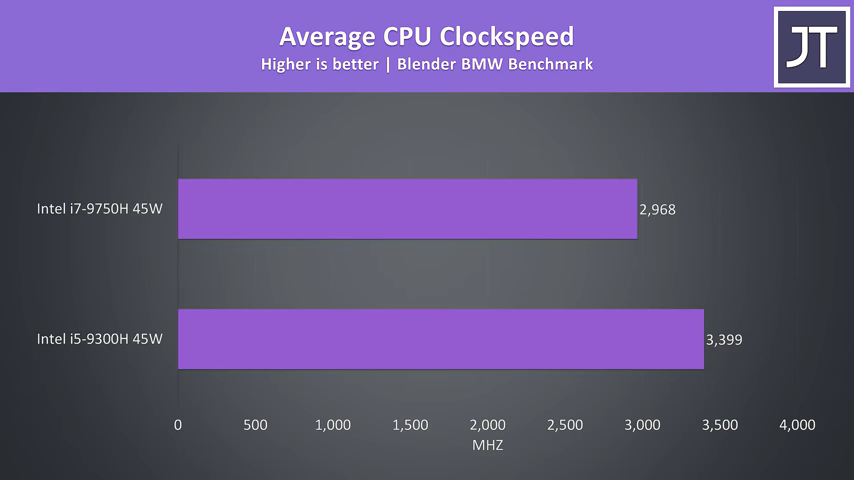

These are the average clock speeds while running the same Blender benchmark.

Interestingly the i5 was able to clock higher in this test, which makes sense when you consider that the same 45W power budget is spread out over fewer cores.
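To put a rough number on that, here is the same 45W budget divided evenly between the cores. This is a deliberately simplified sketch: it assumes the whole package limit goes to the CPU cores and is shared equally, ignoring the iGPU, cache and the rest of the chip, and `watts_per_core` is just an illustrative name.

```python
PACKAGE_POWER_W = 45  # sustained limit reported for both chips


def watts_per_core(package_w: float, cores: int) -> float:
    """Split the package power evenly across cores (simplified model)."""
    return package_w / cores


for chip, cores in (("i5-9300H", 4), ("i7-9750H", 6)):
    print(f"{chip}: {watts_per_core(PACKAGE_POWER_W, cores):.2f}W per core")
```

With more power available per core, the i5 has more headroom to hold higher clock speeds under an all core load.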

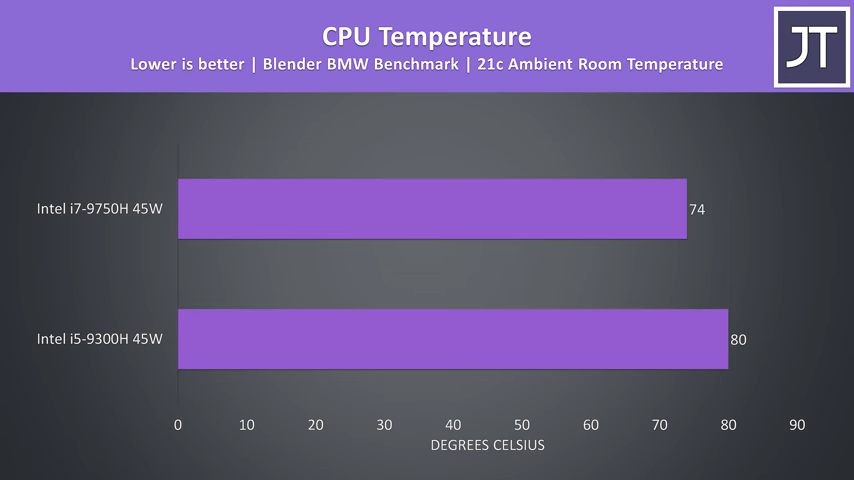

The temperatures were actually cooler with the i7, which I wasn’t expecting given both chips have the same power limit. The i5 was clocking higher as we just saw, but the i7 system was drawing more power from the wall, so I was thinking the extra cores would produce more heat. The comparison should be pretty apples to apples given both laptops have the same cooling design.

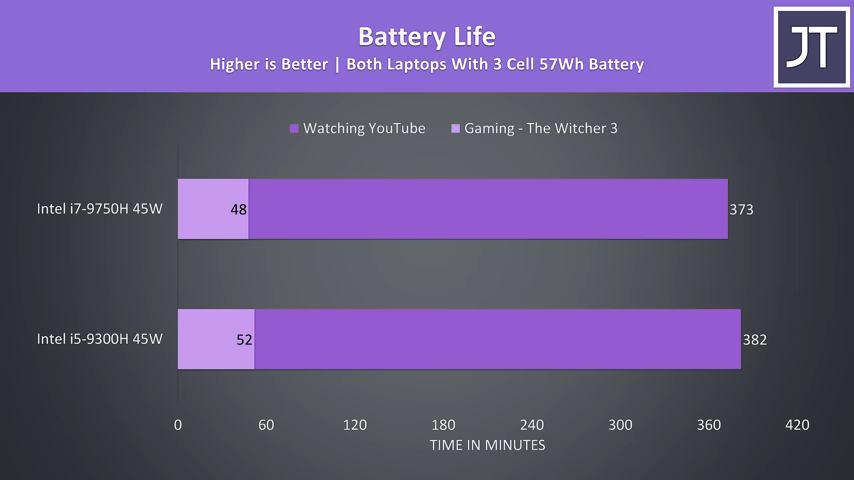

Both laptops have the same sized battery, and we can see the i5 was lasting longer in these tests. To be fair, it’s hard to compare, as the panel is also of lower quality and doesn’t get quite as bright even at the same brightness percentage, which may affect results.

In terms of price differences, you could get an Acer Helios 300 with i7 for around $1100 USD, or the i5 model with the same 1660 Ti for $900 USD, granted it does seem to have half the memory. Assuming around $50 for a memory upgrade, that’s almost 16% more money to go for the i7. 16% more money for an on average 2% boost in games at max settings or 5% boost at minimum settings doesn’t really seem worth it.

The i5 actually seems fine for gaming in most instances, at least today, this could of course change in the future as games require more cores going forward, but that’s difficult to predict.

Outside of gaming, paying 16% more money for 50% more cores is absolutely worth it. On average in the applications tested there was 37% higher performance to be had, so it depends more on whether your workload is multicore heavy than anything else.
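The value argument can be sketched out with the prices and average uplifts quoted above. This is only a rough illustration: the $50 memory upgrade is the same assumption made in the text, and `premium_pct` is a made up helper name.

```python
# Prices quoted in the text, in USD
I7_LAPTOP = 1100
I5_LAPTOP = 900
MEMORY_UPGRADE = 50  # assumed cost to match the i7 model's memory


def premium_pct(i7_price: float, i5_price: float, upgrade: float = 0) -> float:
    """Extra cost of the i7 option as a percentage of the upgraded i5 option."""
    i5_total = i5_price + upgrade
    return (i7_price - i5_total) / i5_total * 100


premium = premium_pct(I7_LAPTOP, I5_LAPTOP, MEMORY_UPGRADE)
print(f"i7 premium: {premium:.1f}%")

# Average uplifts quoted in the text, in percent
average_uplift_pct = {
    "games, max settings": 2,
    "games, min settings": 5,
    "applications": 37,
}

for workload, uplift in average_uplift_pct.items():
    # Values above 1 mean the performance gain outpaces the extra cost
    print(f"{workload}: {uplift / premium:.2f}% gain per 1% extra spend")
```

Only the application average comes out above 1, which lines up with the i7 making more sense for multicore work than for gaming.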

Let me know which of these two CPUs you’d pick and why down in the comments.
