One of the specs you'll hear thrown around when you're shopping for a CPU is the process node, measured in nanometers, and how a smaller one is better.

Just check out the tech headlines and you'll see plenty of stories about how chip makers are racing to cram more and more tiny transistors onto their processors.

And why not? More transistors means better performance and efficiency because the electrons don't have to travel as far to each transistor, so they can switch on and off and therefore process information more quickly.

But do process nodes really tell the whole story? To get some answers, we reached out to Jason Gorss and Bruce Fienberg from Intel, and we'd like to thank them for their contributions.

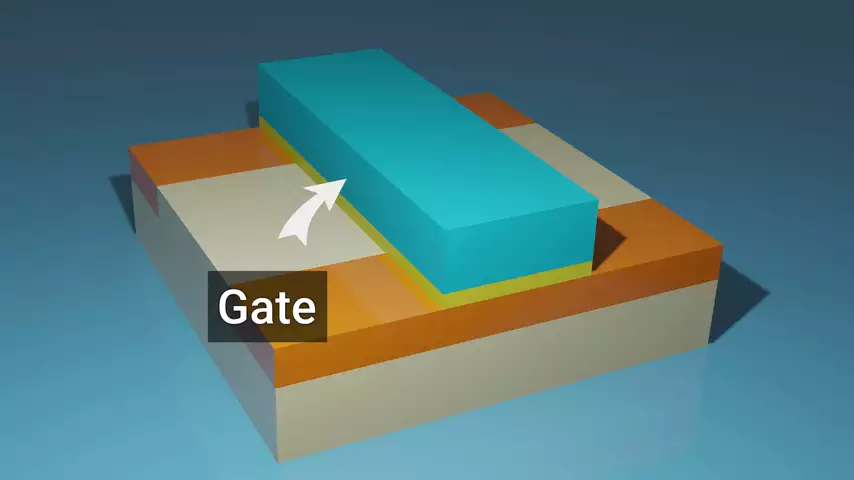

The process node was originally a measure of how long the gate in the transistor was.  This is the part that actually controls the flow of electrons from the source to the drain.

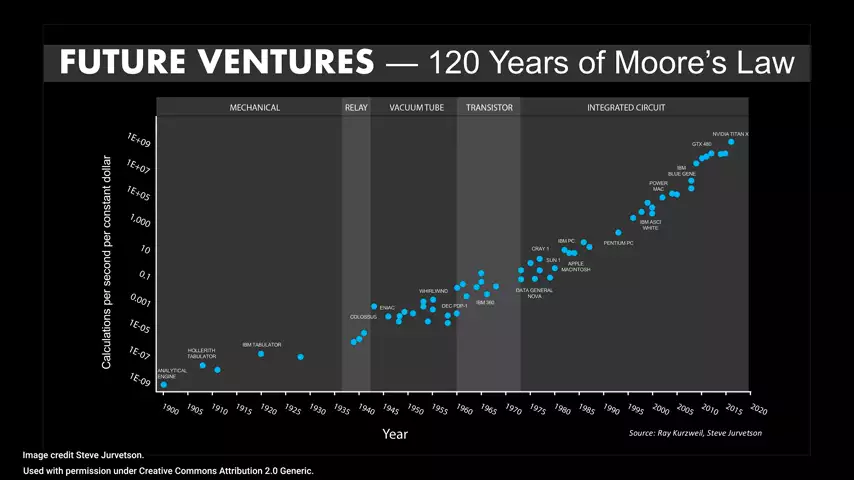

This was considered an accurate enough proxy for transistor size up to about 1997, when the 350 nanometer process was popular. This is important because when you double the number of transistors on a chip, it's fair to expect roughly double the performance at a given die size. And for a long time, these doublings, if you will, took place at such predictable intervals that Moore's law came to be, stating that the number of transistors on a chip would double about every two years. This gave the chip makers an easy cadence to follow for naming each process node because they could expect each one to be smaller by a factor of about 0.7.

Why 0.7, you might ask? Well, the transistors are roughly square in shape and if you multiply 0.7 by 0.7, you get 0.49 or roughly one half.

So for example, when the industry went from the 1000 nanometer process node to the 700 nanometer process node, this marked a rough doubling of the number of transistors that they could fit in a given area, even though the name of the process only reduced by a factor of 0.7.
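The arithmetic behind that 0.7 factor can be checked with a few lines of Python. This is only a sketch of the idealized scaling described above: it assumes perfectly square transistors and a constant 0.7 shrink per generation, and the function names are made up for illustration.

```python
# Idealized node scaling: each generation shrinks linear dimensions by ~0.7.
SHRINK = 0.7  # per-generation linear factor, taken from the text


def next_node(feature_nm, shrink=SHRINK):
    """Nominal feature size after one generation of shrinking."""
    return feature_nm * shrink


def density_gain(shrink=SHRINK):
    """How many times more square transistors fit in the same area."""
    return 1.0 / (shrink * shrink)


def node_sequence(start_nm, generations):
    """List of nominal node sizes, starting at start_nm."""
    sizes = [start_nm]
    for _ in range(generations):
        sizes.append(next_node(sizes[-1]))
    return sizes


if __name__ == "__main__":
    # Area per transistor after one shrink: 0.7 * 0.7 is roughly 0.49
    print(round(SHRINK * SHRINK, 2))
    # Density gain per generation: roughly 2x
    print(round(density_gain(), 2))
    # Idealized names starting from 1000 nm
    print([round(s) for s in node_sequence(1000, 3)])
```

Starting from 1000 nm, the idealized sequence runs 1000, 700, 490, 343. Real node names were rounded to marketing-friendly numbers, so they only loosely follow this progression.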

Thing is, in 1997, while manufacturers were able to start shrinking the gate length by more than a factor of 0.7, other parts of the transistor weren't shrinking as quickly anymore. So, gate length was no longer a good proxy for the overall transistor density in the entire chip and, therefore, the performance. Rather than changing the naming scheme outright, though, we started to see a process node defined by the size of a group of transistors called a cell. This was done to give people an estimate of the equivalent level of processing power, accounting for components that weren't shrinking as quickly.

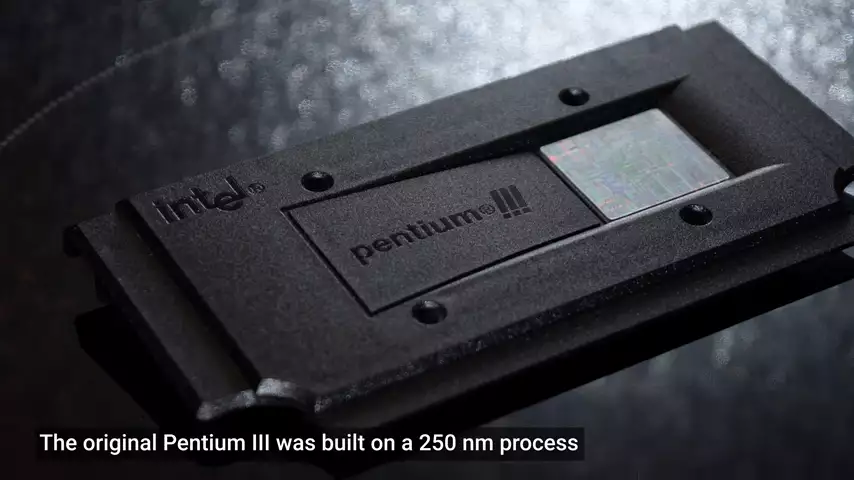

So, the first node we saw under this new naming system was the 250 nanometer process. Performance was about double that of the previous node, as you would expect from the name, but the gate length was actually around 190 nanometers, which is much smaller. It's just that other parts of the transistor prevented them from being packed more tightly than that.

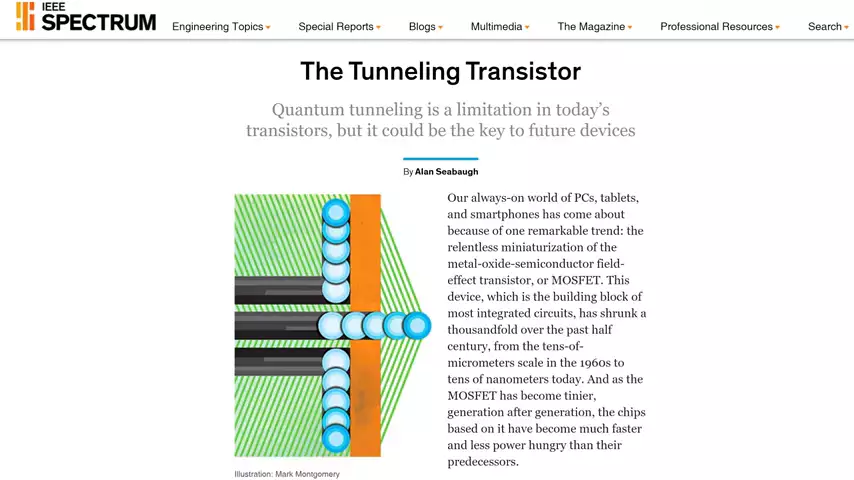

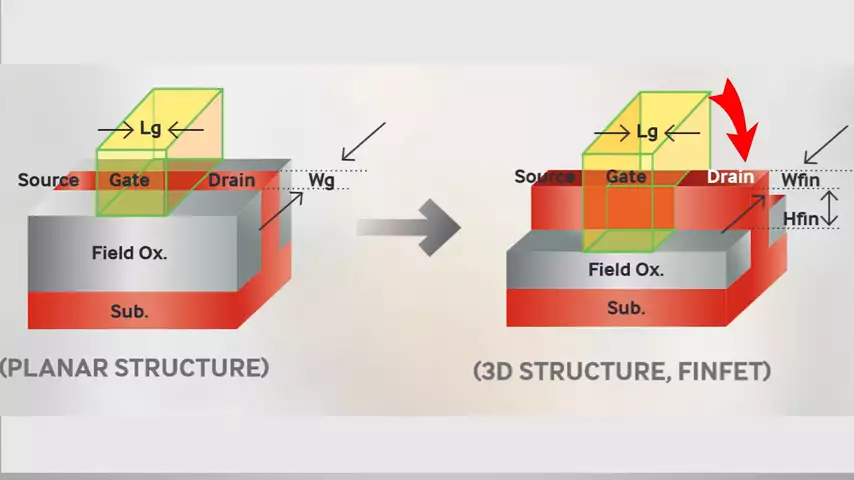

This game involving cell area lasted until around 2012 and the 22 nanometer process, when a whole new type of transistor was introduced: the FinFET. Chip makers found that at these sizes, the gates were so small that you could have electrons leaking through them due to quantum tunneling. This could cause undesirable behavior.

So, engineers needed a way to make their chips more powerful without shrinking the gates even further. The solution was to take the channel the electrons go through and raise it up like a shark fin, hence the name FinFET, increasing the surface area of the channel and allowing lots more electrons to pass through. Of course, this also meant that transistors were now three-dimensional instead of planar, making it much harder to accurately measure their size.

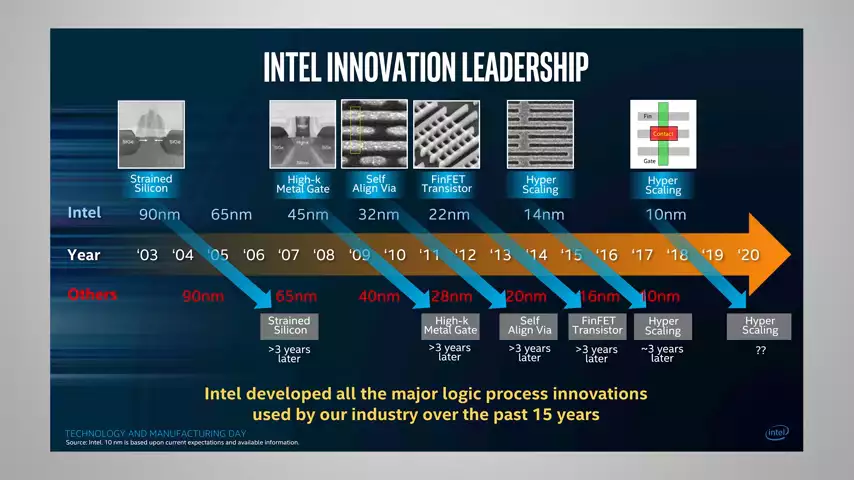

The industry has still continued to use that 0.7 factor to describe a generation of improvement, like going from 14 to 10 to 7 nanometer processes. But the truth of the matter is that these numbers don't actually measure the real size of the transistor anymore, and they can even vary wildly between different manufacturers.

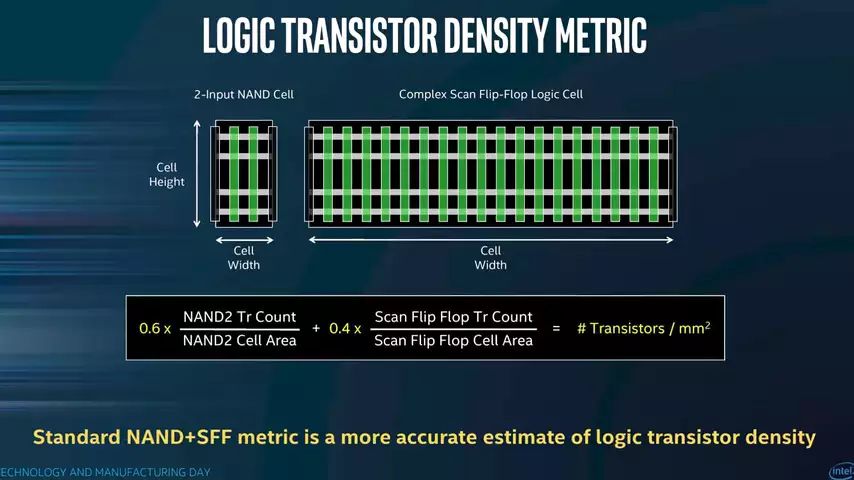

Intel, for example, attempts to measure a process node by taking the weighted average of the two most common standard cell sizes. Really, though, a more important consideration is transistor density: how many transistors can be packed into the same space, even when the size of the actual transistor features decreases very little, if at all.
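To make the idea of a weighted average over two standard cells concrete, here is a rough Python sketch that expresses it as transistor density. Every number in it, including the cell names, transistor counts, areas, and weights, is invented for illustration and is not taken from Intel or any real process.

```python
from dataclasses import dataclass


@dataclass
class StandardCell:
    name: str          # label for the cell type (hypothetical)
    transistors: int   # transistors in one cell (hypothetical)
    area_um2: float    # cell area in square micrometers (hypothetical)
    weight: float      # share of the weighted average (hypothetical)


def cell_density(cell):
    """Transistors per square micrometer for a single cell."""
    return cell.transistors / cell.area_um2


def weighted_density(cells):
    """Weighted average of per-cell densities, normalized by total weight."""
    total_weight = sum(c.weight for c in cells)
    return sum(c.weight * cell_density(c) for c in cells) / total_weight


if __name__ == "__main__":
    # Two made-up cells: a small logic gate and a larger storage element.
    cells = [
        StandardCell("small_logic_cell", transistors=4, area_um2=0.04, weight=0.6),
        StandardCell("large_storage_cell", transistors=36, area_um2=0.6, weight=0.4),
    ]
    print(f"{weighted_density(cells):.1f} transistors per square micrometer")
```

The point of weighting is that a chip is not built from one kind of cell, so a single cell's size can overstate or understate how densely the whole design is really packed.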

In addition to density, chip makers are using other techniques, like improved materials, to boost performance. This can include everything from squeezing the crystal structure of the channel to make the electrons go through faster, to lower-resistance traces between transistors, to gate materials with a high dielectric constant for better control of electron flow.

Of course, this process can require some trial and error. Intel's well-publicized difficulties with their 10 nanometer process were due in large part to them trying to overscale: in other words, to pack more than double the number of transistors into the same space. That required them to try out a lot of new technologies inside the chip all at one time, which caused delays and manufacturing problems.

But as our technology continues to improve, chip makers look poised to keep Moore's law alive to some extent, even if it's a little slower, as well as keep silicon the base material for our processors for a long time to come before we have to really start considering more exotic solutions like carbon nanotubes.

In the meantime, I hope you enjoyed this deeper dive into processor sizes. Just remember that the process node isn't the be-all and end-all when you're shopping for a CPU anyway. It's always more important to pay attention to the real-world performance that you'll see in the games and applications that you actually use.
