Nvidia's RTX 3090 Ti was the world's fastest gaming GPU, as of yesterday. Today, Nvidia launches the GeForce RTX 4090, a massive improvement over its predecessor in almost every way. So, why aren't I more excited?

Setting aside the fact that I could, you know, buy a cheap used car for what Nvidia is asking, there are some other problems that we need to talk about.

RTX 4090 - More than a mere refresh?

Two years ago, the GeForce RTX 3090 launched at an eye-watering 1500 US dollars, and Nvidia's justification for this was that it was a Titan class GPU. Of course, we all know now that that was BS, as Nvidia launched the RTX 3090 Ti just six months ago, in March of 2022, at two grand, with a very modest spec bump for the price, and still no Titan class floating point compute functionality.

Both cards are available at around a thousand dollars or so today thanks to the crash in GPU demand, and competition from AMD is actually coming in a little bit less than that. The RTX 4090 launches at $1,600 US. What do you get for the price of three PS5 gaming consoles?

It's not a Titan class GPU, and it has the same amount of VRAM as the previous gen RTX 3090, but, that's where the similarities end.

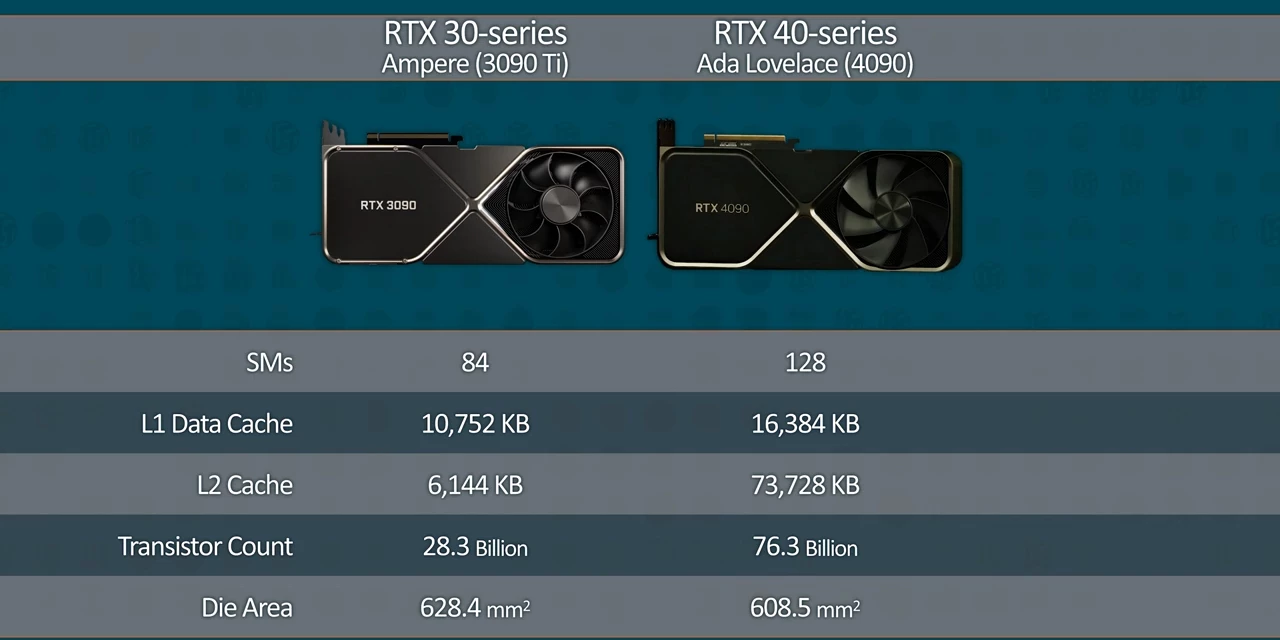

Not only is the memory as fast as what you'd find on an RTX 3090 Ti, you're getting over 50% more CUDA cores that are each clocked over 35% higher.

I mean, say what you want, but that alone would be a substantial upgrade for a mere 7% increase in MSRP, which is half the rate of inflation since 2020. "And what a steal," is what I would say if it weren't for the fact that it's, honestly, still the price of an entire mid-range gaming PC on its own. So what else does this bring to the table?
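As a quick sanity check on that 7% figure, here's a minimal sketch using the rounded launch prices quoted earlier ($1,500 for the RTX 3090 and $1,600 for the RTX 4090). The function name is purely illustrative.

```python
def percent_increase(old_price: float, new_price: float) -> float:
    """Return the relative change from old_price to new_price, in percent."""
    return (new_price - old_price) / old_price * 100.0


# Rounded launch prices in US dollars, as quoted in this article (assumed inputs).
rtx_3090_msrp = 1500
rtx_4090_msrp = 1600

increase = percent_increase(rtx_3090_msrp, rtx_4090_msrp)
print(f"MSRP increase: {increase:.1f}%")
```

With those rounded prices the increase works out to a little under 7%.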

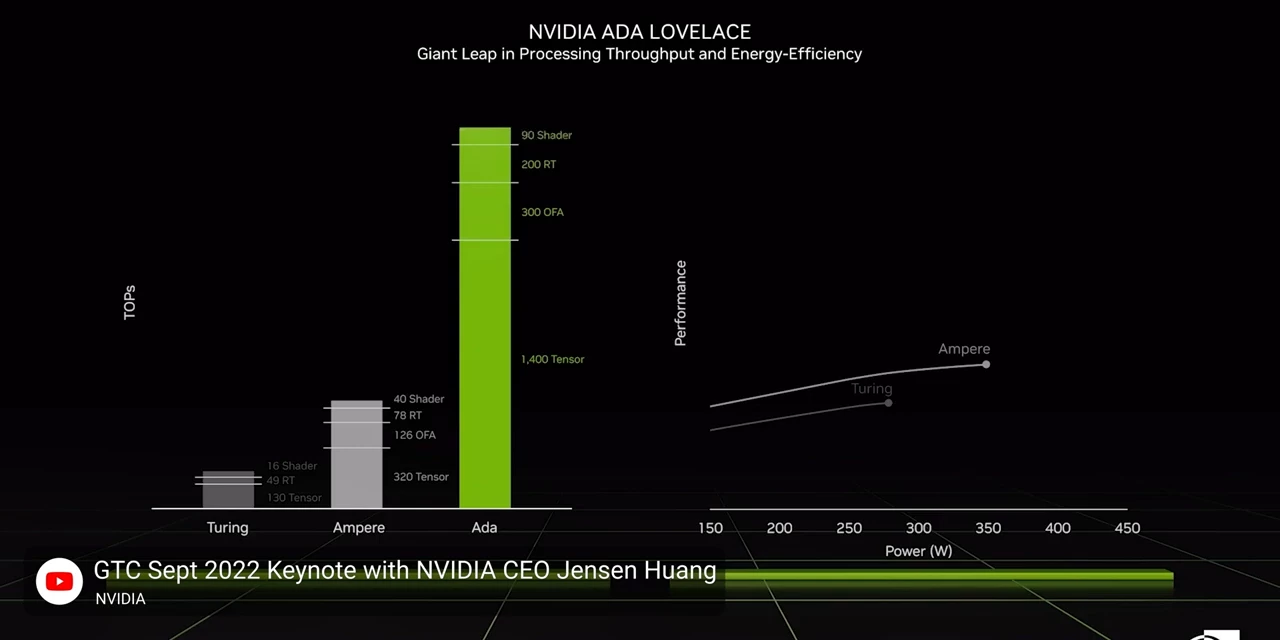

Nvidia claims that each of their CUDA cores is beefed up compared to the outgoing Ampere architecture in almost all respects, with claimed performance improvements of up to two times what the 30 series could achieve. That's thanks in large part to nearly double the L1 cache, and a substantial change in the core layout itself.

It's also thanks to the use of TSMC's new N4 process, which allows the total die area to be nearly 150 square millimeters smaller than its predecessor's.

Test Setup and why we didn't run 22H2

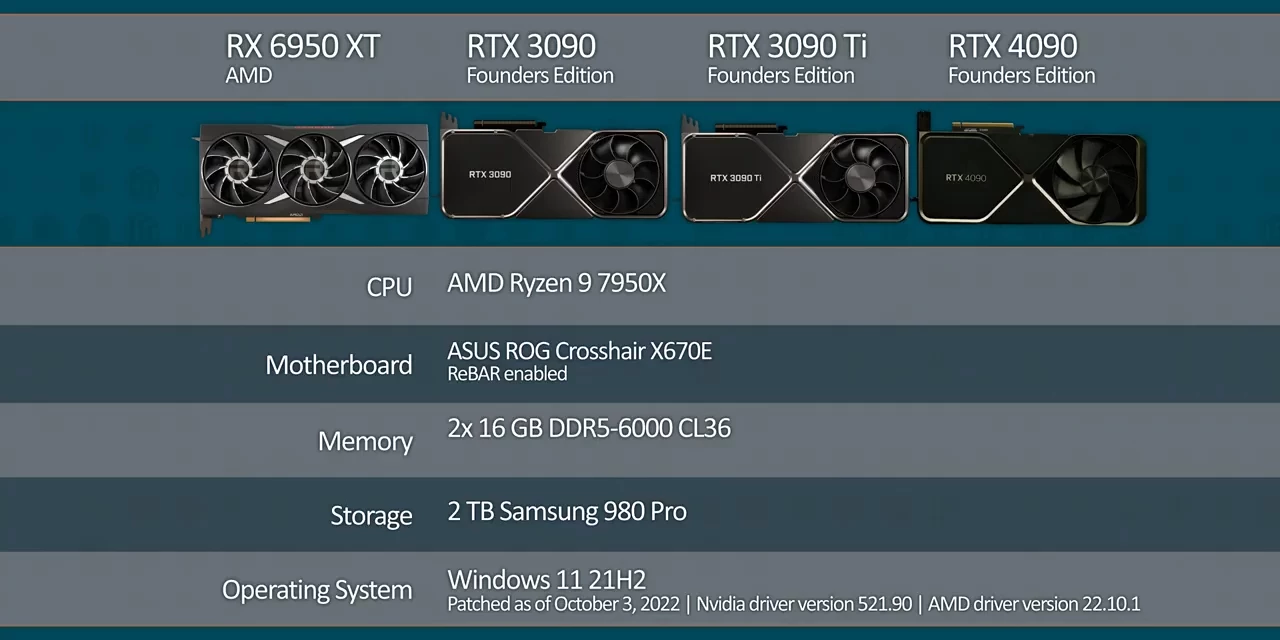

Of course, we need to test Nvidia's claims, so we got the Labs to fire up our shiny new Socket AM5 bench with a fresh install of Windows 11 21H2 and go to town with testing. Why 21H2? Apparently 22H2 introduced some issues that Nvidia is not going to have ironed out. But for now let's turn our attention to the main event: games.

4K Gaming Results

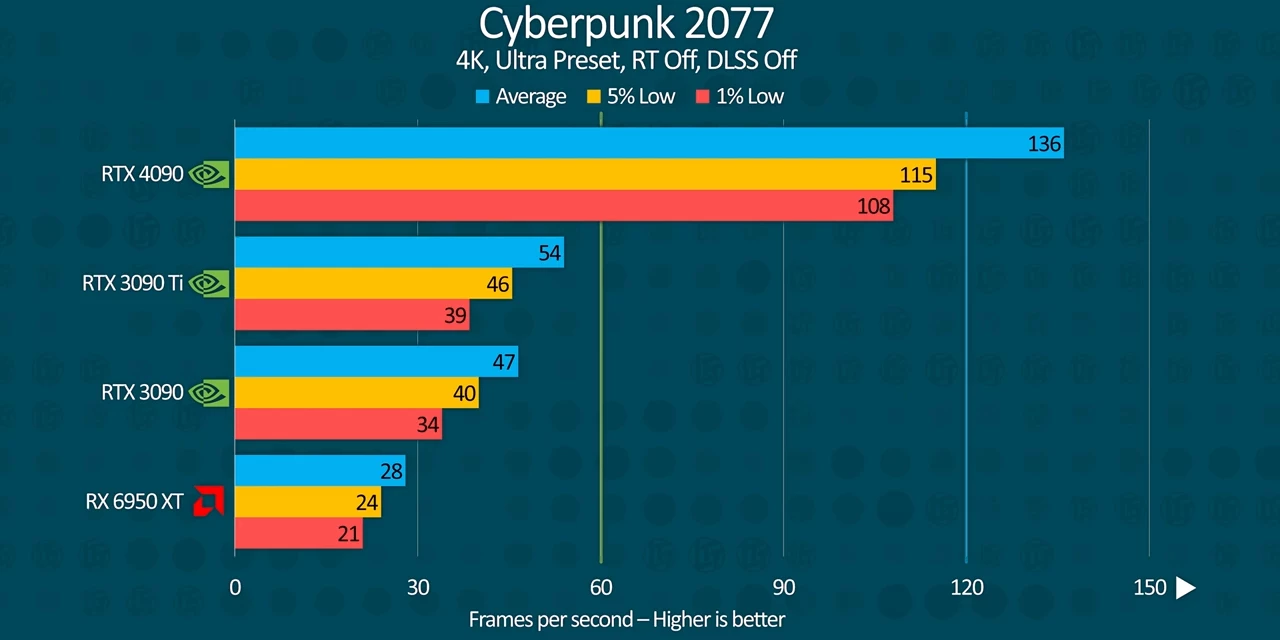

And right out of the gate, even the traditional raster performance is spectacular compared to the mighty 3090 Ti.  Yes, you're seeing that correctly. Cyberpunk 2077 ran 2.5 times faster on the 4090 than the 3090 Ti, bringing minimum frame rates up from sub 50 to over a hundred. We had to triple check that just to be sure, but here it is.

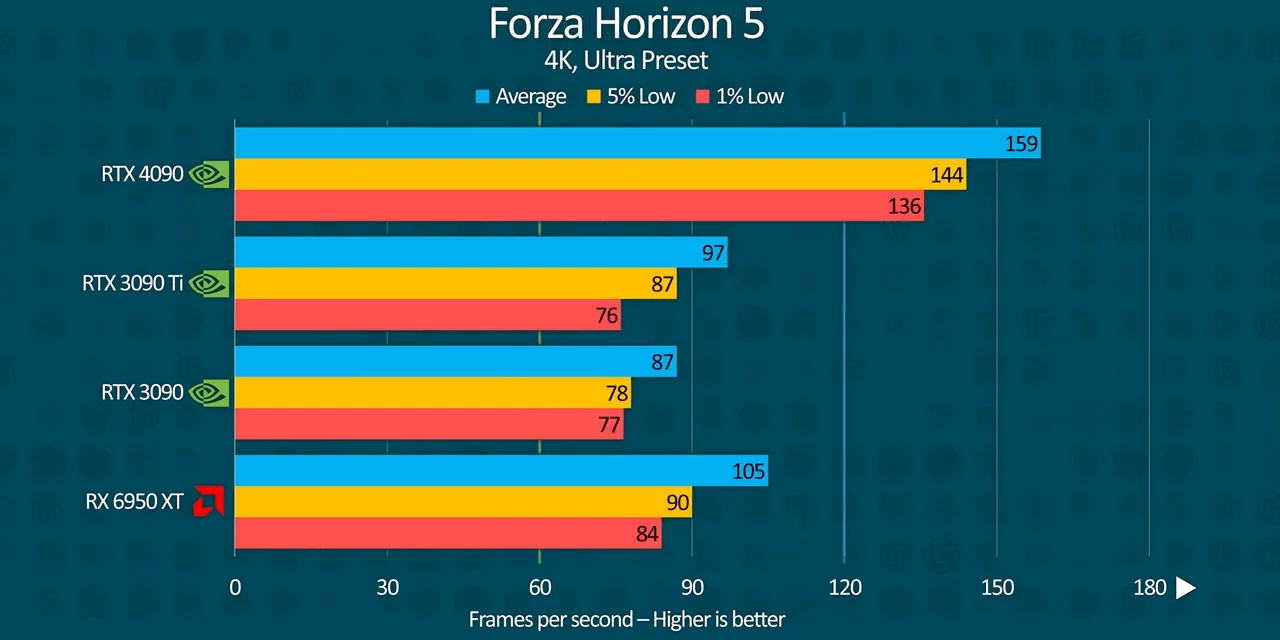

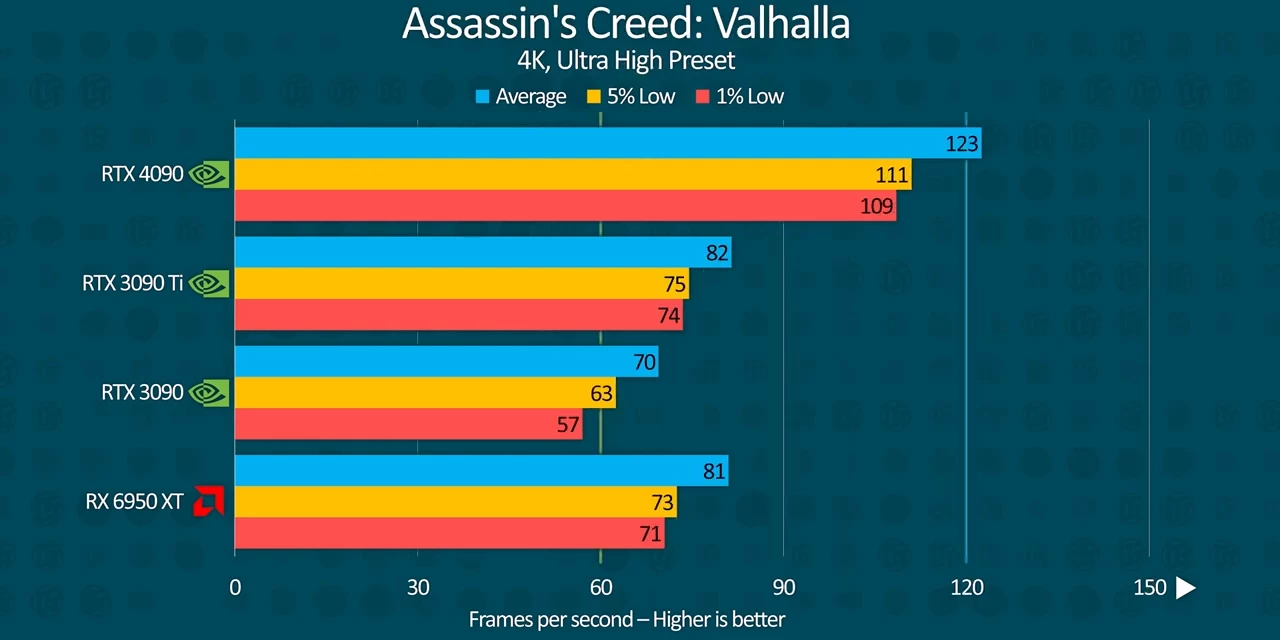

We're also looking at minimum frame rates beyond 120 at 4K in Forza Horizon 5, compared to sub 90 on the 3090 Ti, and it is incredibly stable. Assassin's Creed Valhalla's frame rate also shot up by about 50% on the 4090, enabling 4K 120 FPS gameplay without any compromises.

Remember, this is just the rasterization performance, what we thought Nvidia was trying to hide from us by showing off their RT performance in the press materials.

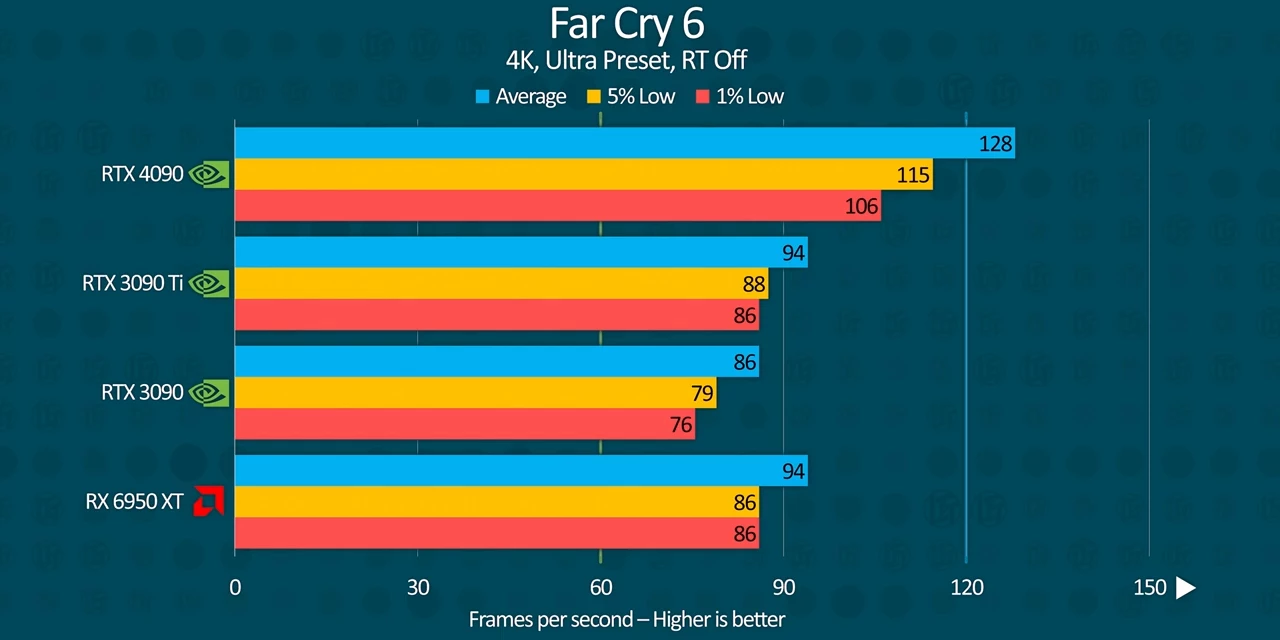

Far Cry 6, another Ubisoft title, doesn't see quite the same improvement, however, at about 30% or so across the board.

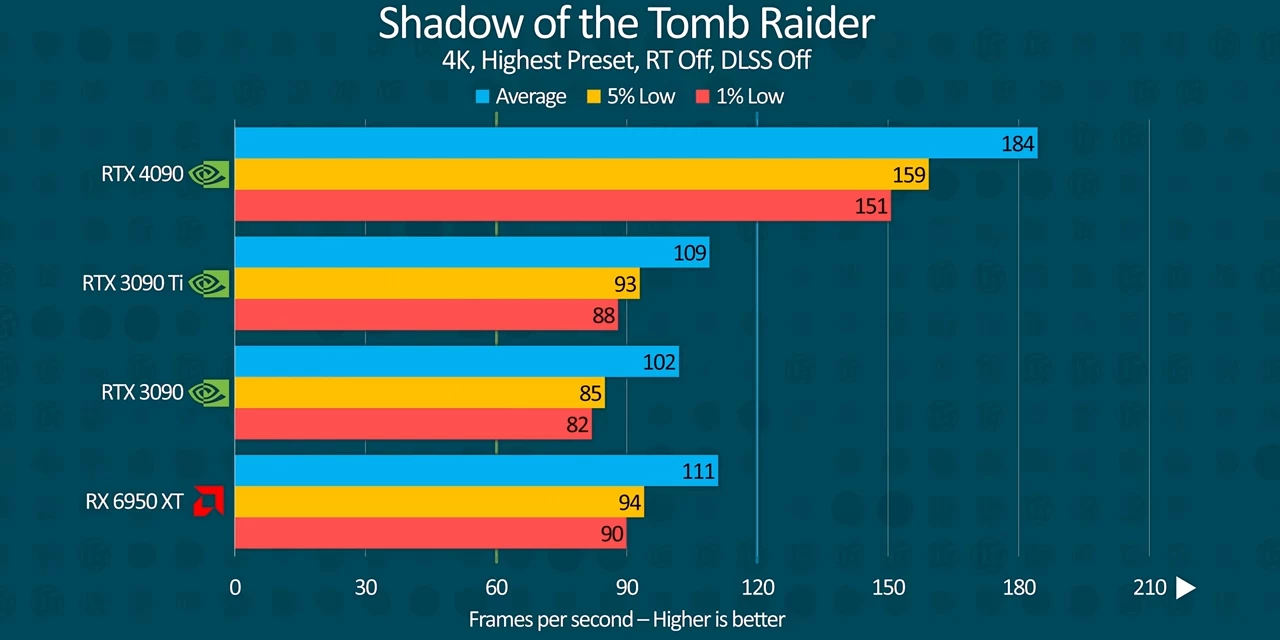

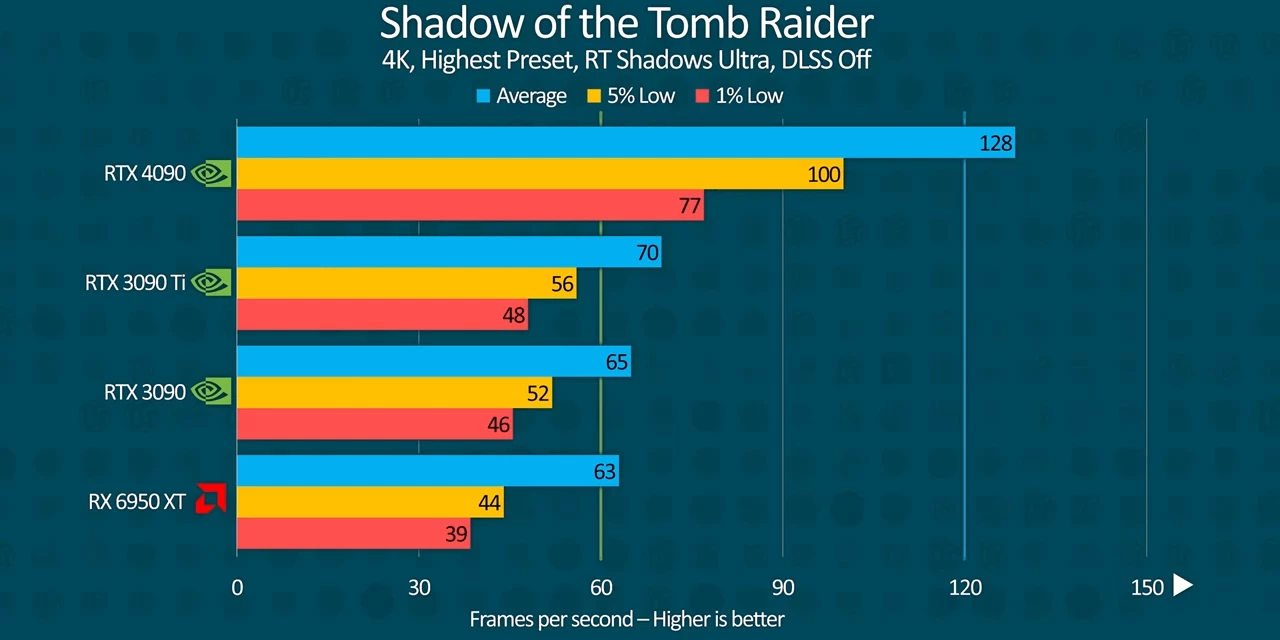

It's not bad, like not at all, but I'm just ruined after seeing those other results.  Improvements continue to become more modest in Shadow of the Tomb Raider, where we're likely starting to get CPU limited at 4K. I'll let that sink in for a moment.

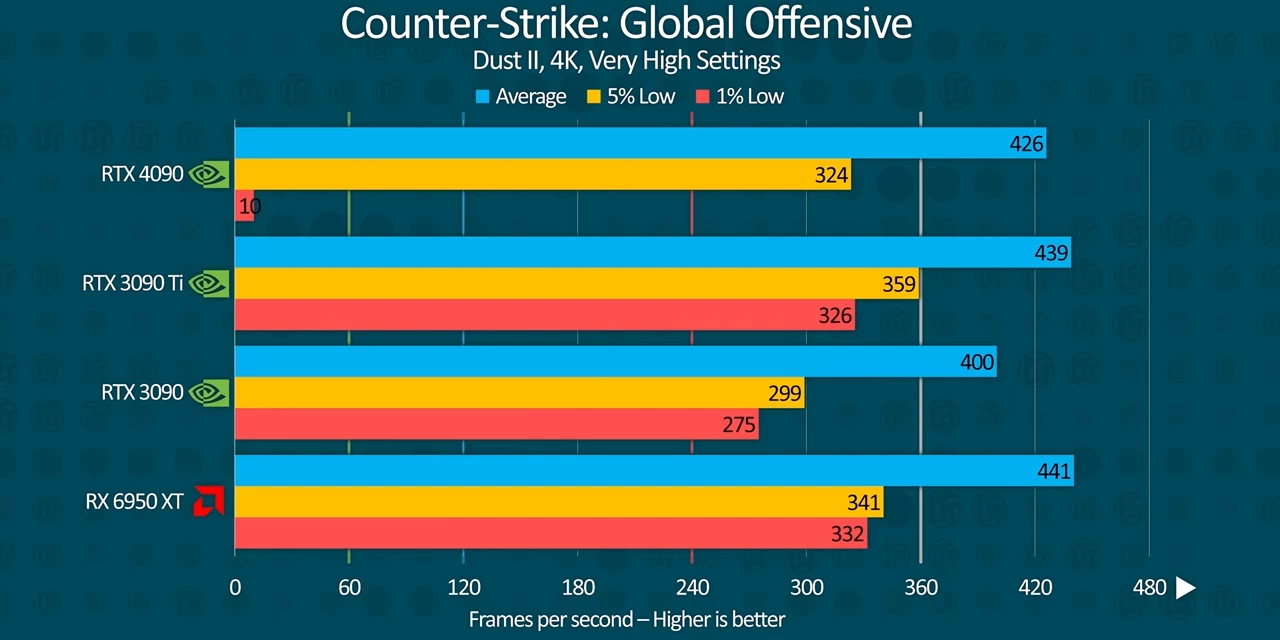

We see exactly that problem again when we run CS:GO, where, for some bizarre reason, the situation is flipped on its head. The RTX 3090 Ti outperforms the 4090 throughout multiple runs. It's especially surprising because the GPU core clocks and the load remained high. I mean, if you've got an explanation, I'd love to see your take in the comments.

1440p Gaming Results

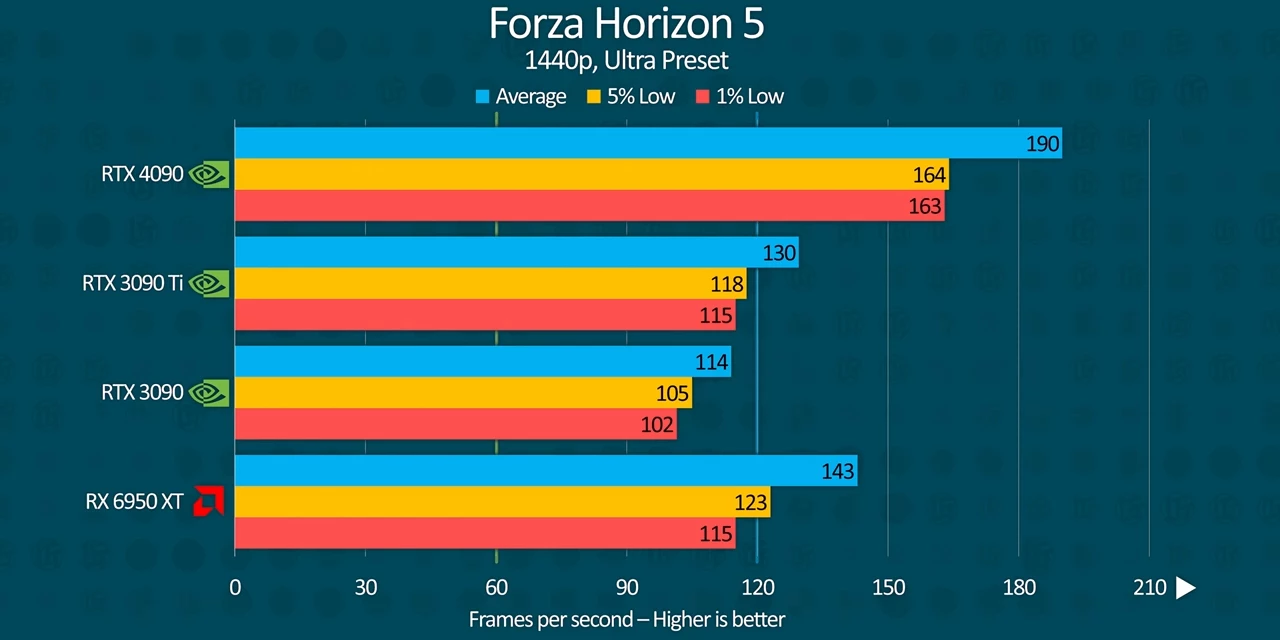

Dropping the resolution down to 1440p, it seems like we're becoming even more CPU bound, but critically, the minimum frame rates in Forza Horizon 5 are massively improved, making for a smoother overall experience.

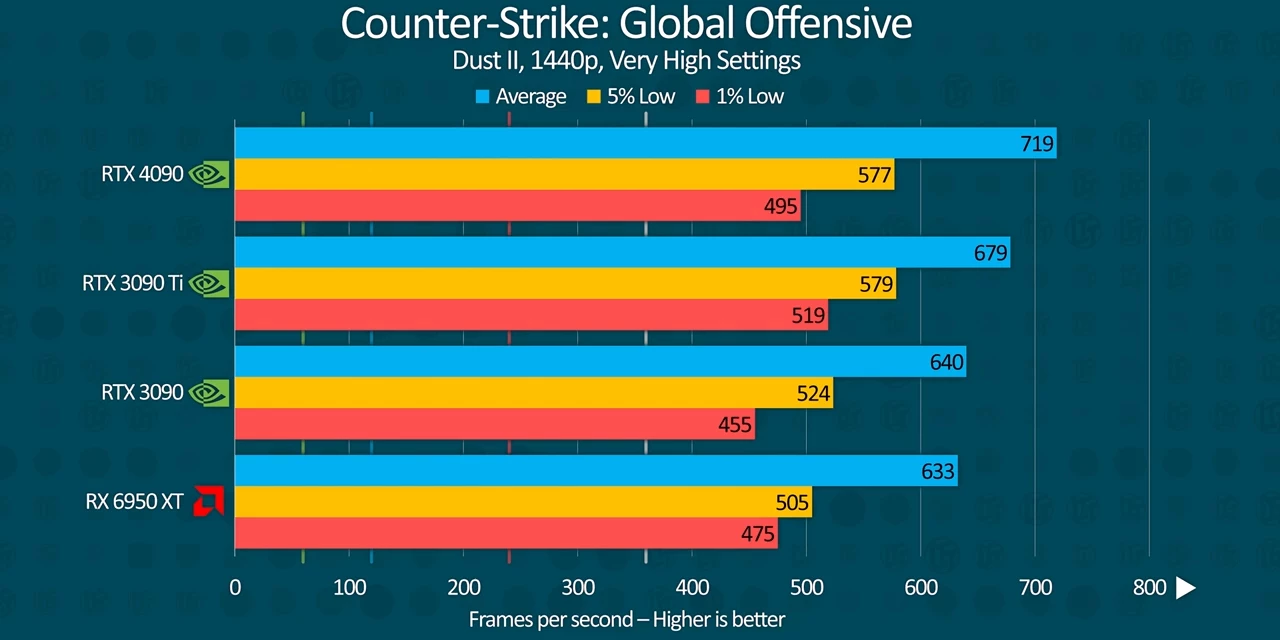

Interestingly, CS:GO actually manages to return to normalcy at 1440p. So, I don't know, maybe we've got a driver bug at 4K or something.

Ray Tracing & DLSS Gaming Results

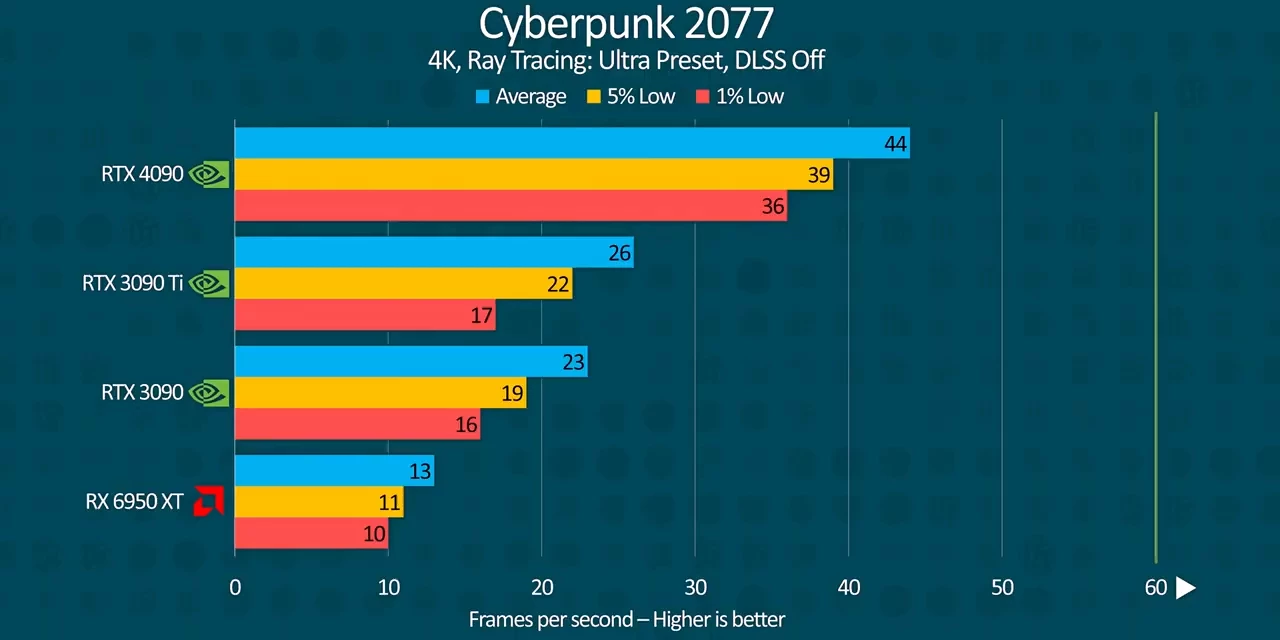

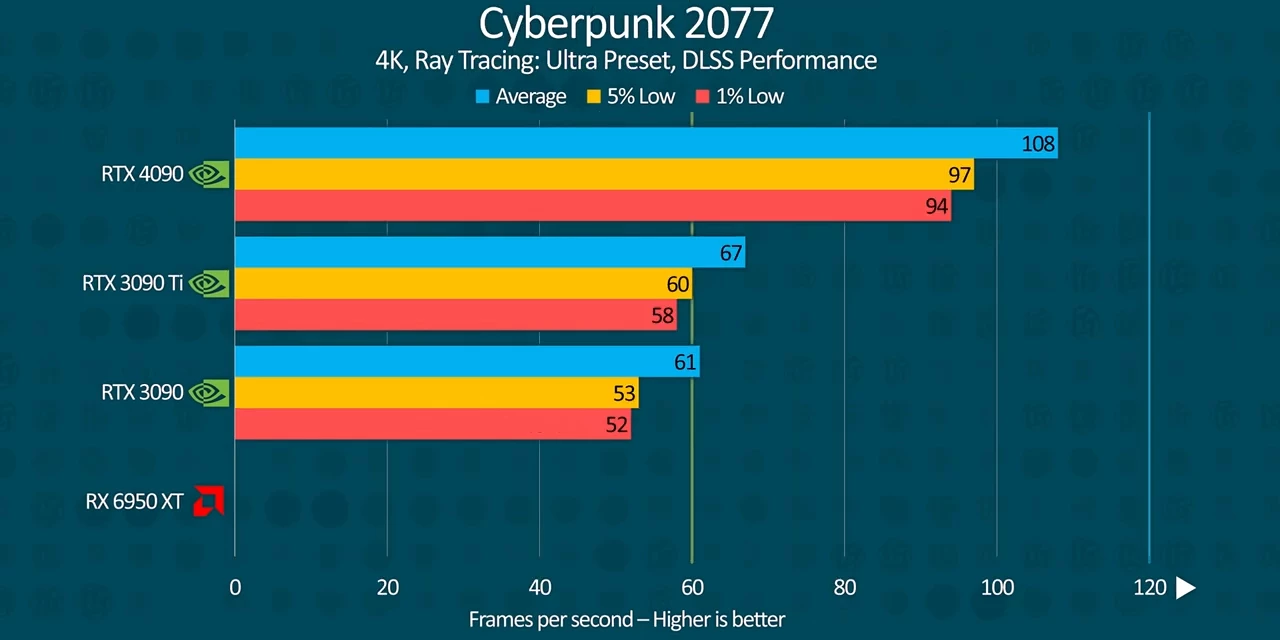

Now, do you still remember that Cyberpunk result? So check this out.  We're going from 25 FPS on average on the 3090 Ti to a staggering 96 FPS on the 4090 at 4K without DLSS. That's almost four times the performance.
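To put those Cyberpunk numbers in perspective, here's a small sketch that turns the two average frame rates quoted above into a speedup factor and per-frame render times. The helper names are illustrative, and the inputs are just the averages from our run.

```python
def speedup(old_fps: float, new_fps: float) -> float:
    """How many times faster the new frame rate is than the old one."""
    return new_fps / old_fps


def frame_time_ms(fps: float) -> float:
    """Average time spent on one frame, in milliseconds."""
    return 1000.0 / fps


# Average FPS at 4K with ray tracing and no DLSS, as quoted in this article.
fps_3090_ti = 25
fps_4090 = 96

print(f"Speedup: {speedup(fps_3090_ti, fps_4090):.2f}x")
print(f"3090 Ti frame time: {frame_time_ms(fps_3090_ti):.1f} ms")
print(f"4090 frame time: {frame_time_ms(fps_4090):.1f} ms")
```

That's a speedup of about 3.84x, with the average frame time dropping from 40 ms to roughly 10.4 ms.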

And when we turn ray tracing on in the much older Shadow of the Tomb Raider, the 4090 is capable of nearly doubling the minimum FPS of the 3090 Ti.  That brings this title from mostly 60 FPS or more at 4K to a buttery smooth 100 to 120 FPS, all without DLSS.

With DLSS, in performance mode, Cyberpunk manages an average of 144 FPS with 1% lows over 120 FPS. That's right, you can get buttery smooth 4K 120 with ray tracing on this card if you're okay with DLSS.

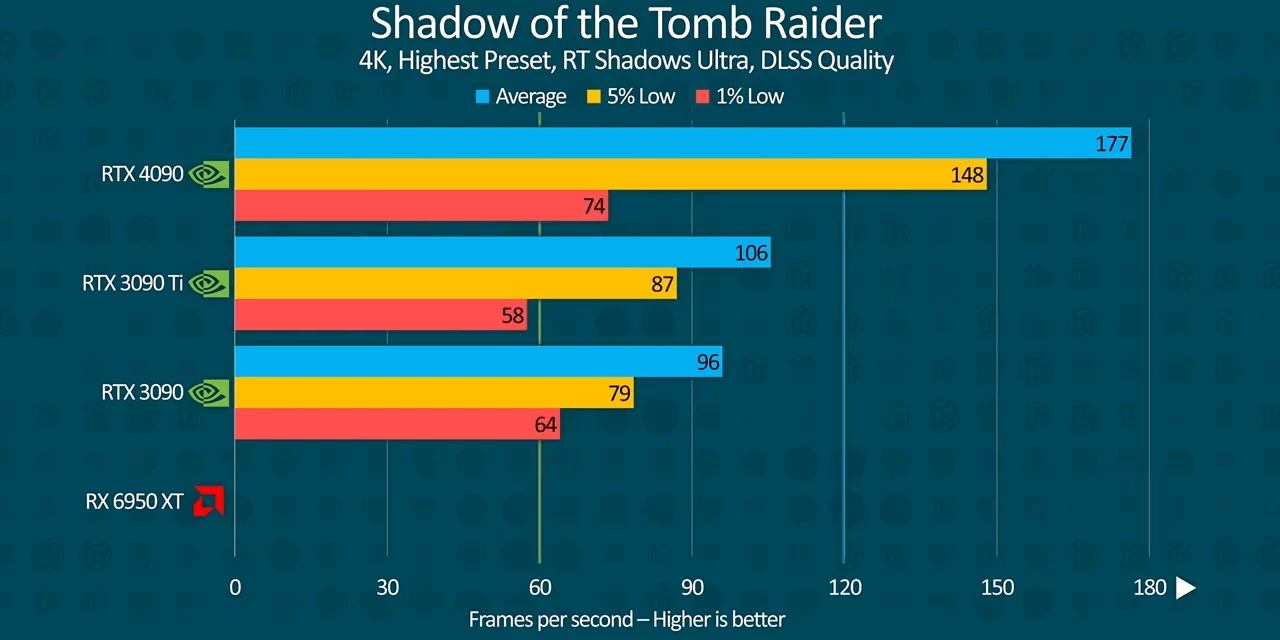

Shadow of the Tomb Raider in DLSS quality mode is just ridiculous. Where the 3090 Ti can't quite reach 90 FPS in 5% lows, the 4090 is fast enough to comfortably drive a 144 hertz 4K monitor.

Where's DLSS 3.0?

Now, you might be wondering about the new DLSS 3.0, Nvidia's AI frame generation technology. We're wondering too. Unfortunately, all of our cards, even the third party ones that we're not allowed to show you today, crashed a lot, like a lot a lot, even across multiple benches. As a result, the Labs weren't able to properly test DLSS 3.0, and we'd like more ray tracing results than we got. We'll have all of that missing data in a follow-up, so stay tuned.

Productivity Results

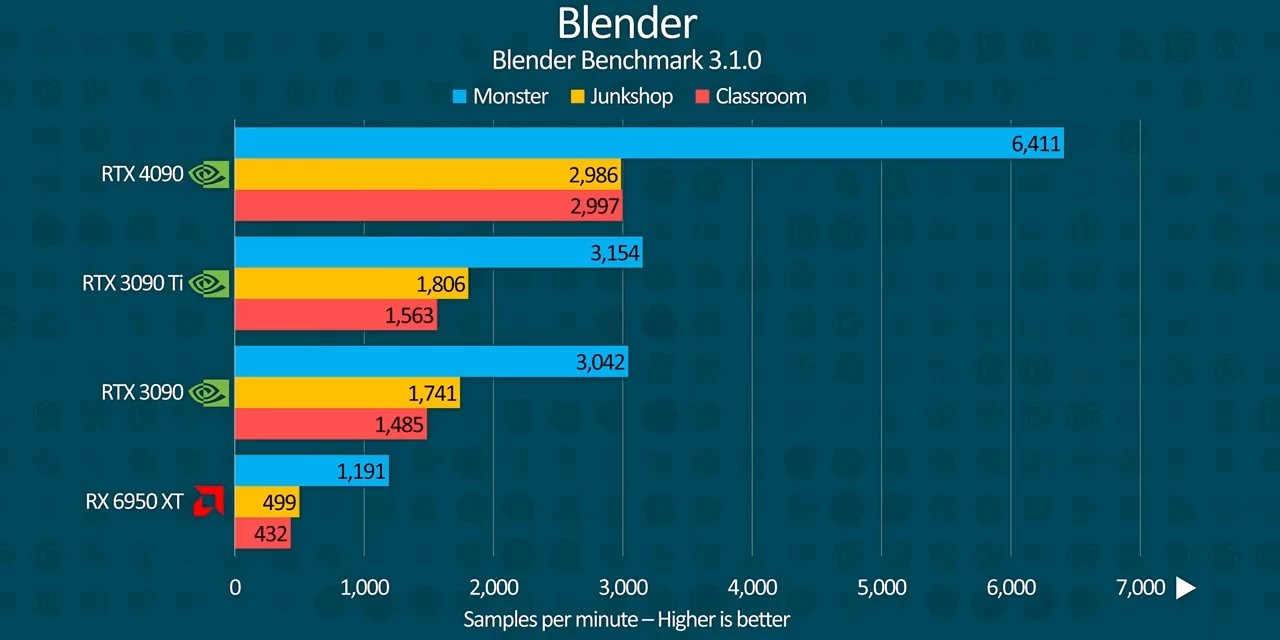

But okay, what if you're not a big gamer? Well, you're in luck because this thing is also a productivity beast. In Blender it gets over double the samples per minute in both the monster and junk shop scenes and just under double in the older classroom scene. That's a substantial time savings and might be worth it on its own if you're a 3D artist.

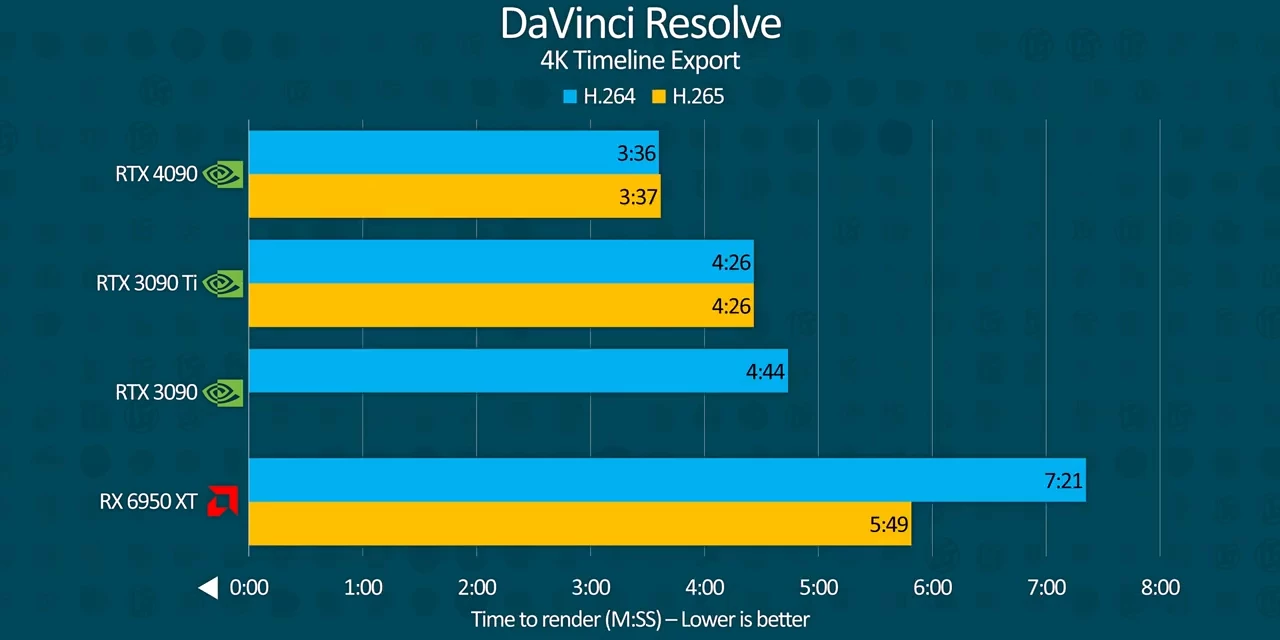

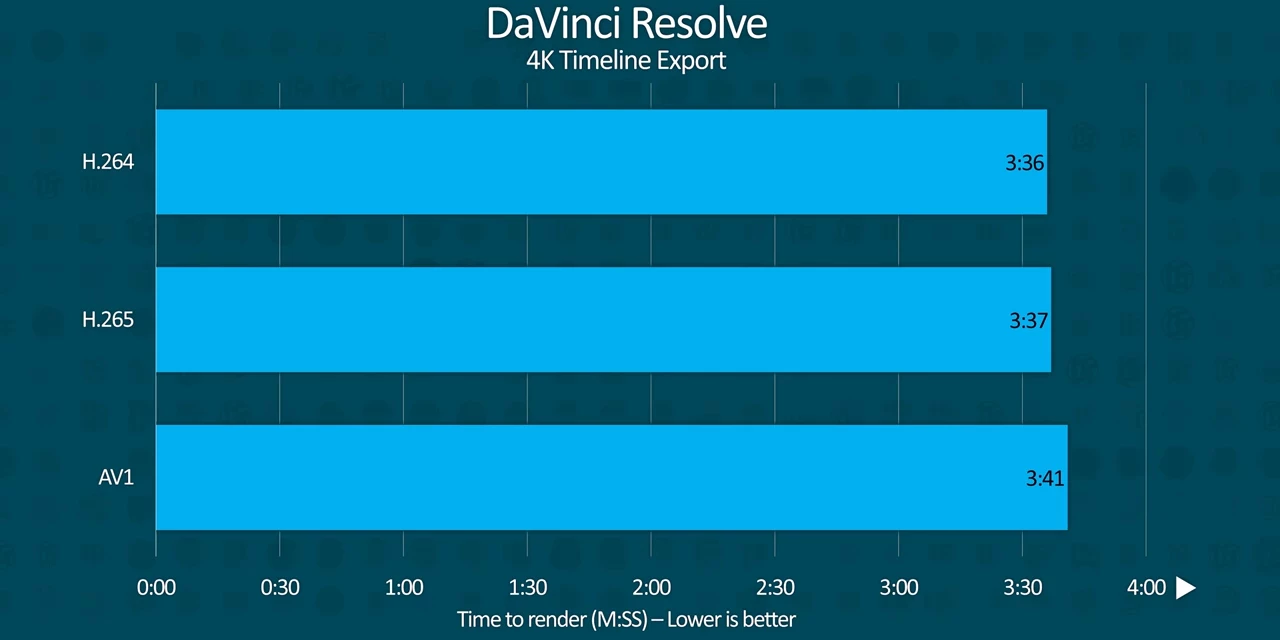

Similarly, our 4K DaVinci Resolve export finished nearly a minute faster, a difference of roughly 25%, something that'll also add up over time, especially for timelines with a lot of rendered graphical effects.

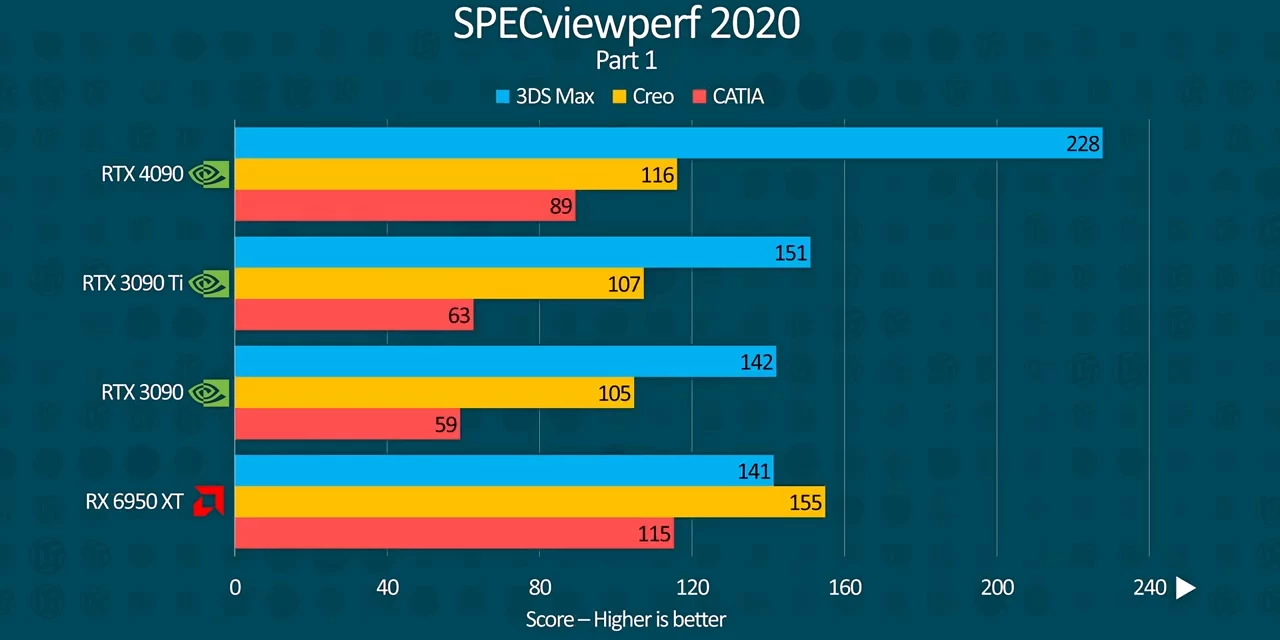

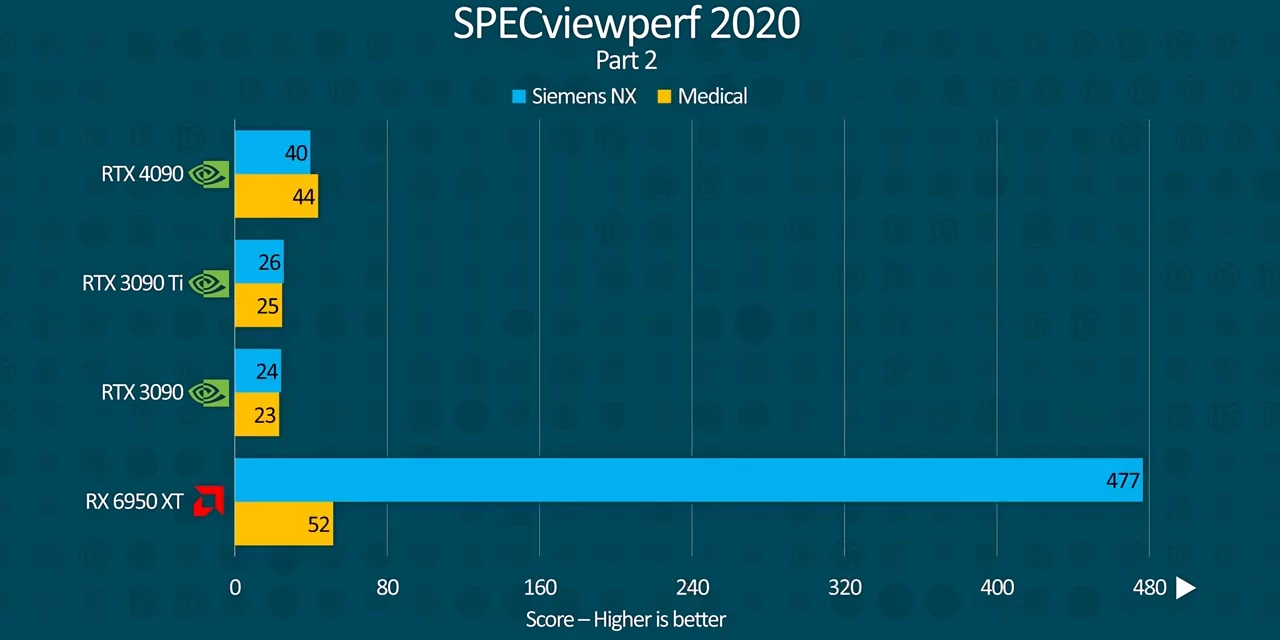

SPECviewperf meanwhile shows massive gains across the board, with the most substantial improvements in 3ds Max, Maya, Medical, and Solidworks at nearly double the score.

Creo, by contrast, only saw a modest performance improvement of around 10%. Still, this is like looking at a completely different class of GPU, not a typical generational improvement.

With all of these performance gains, maybe Nvidia had to price these at $1,600 if they had any hope at all of selling through all of their mining surplus 3090s. And that's not everything either.

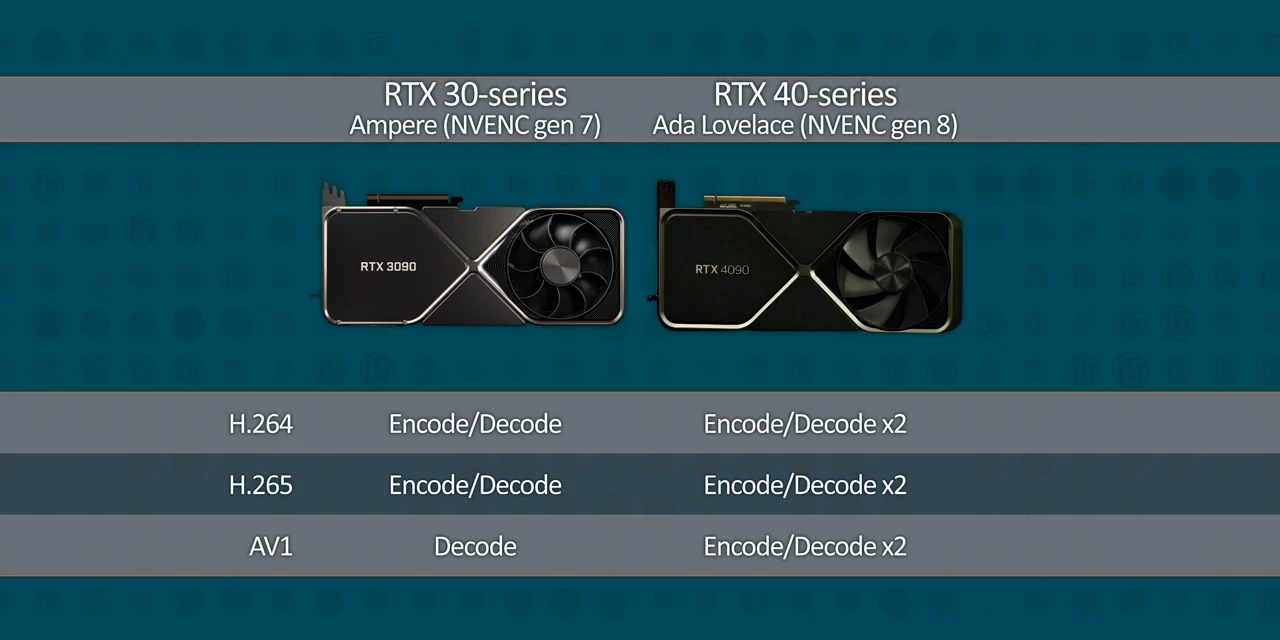

AV1

Another big part of productivity that many companies, Intel and Apple included, are taking very seriously is video encoding, and Nvidia has had to prove that they're not sleeping on the job. Not only do you get the same high quality encoder that first debuted on the RTX 20-series, as we saw in our DaVinci Resolve test, but you get two of them, and they're both capable of AV1. AV1 is the new codec that's likely to take over for live and on-demand streaming on sites like YouTube and Twitch. And while it can produce significantly better video quality at the same bit rates, it's significantly more time consuming and difficult to encode, unless you have hardware dedicated to it. Intel Arc launched with it as a headline feature, and it's a safe bet that it'll become more important as time goes on.

Unfortunately, we don't have the time right now to do a proper test of it for the review. What I can tell you today is that it's nearly as fast as Nvidia's existing H.264 and H.265 encoders, which is pretty impressive. Of course, all of these capabilities come with some drawbacks, the first of which being the power draw.

Power consumption

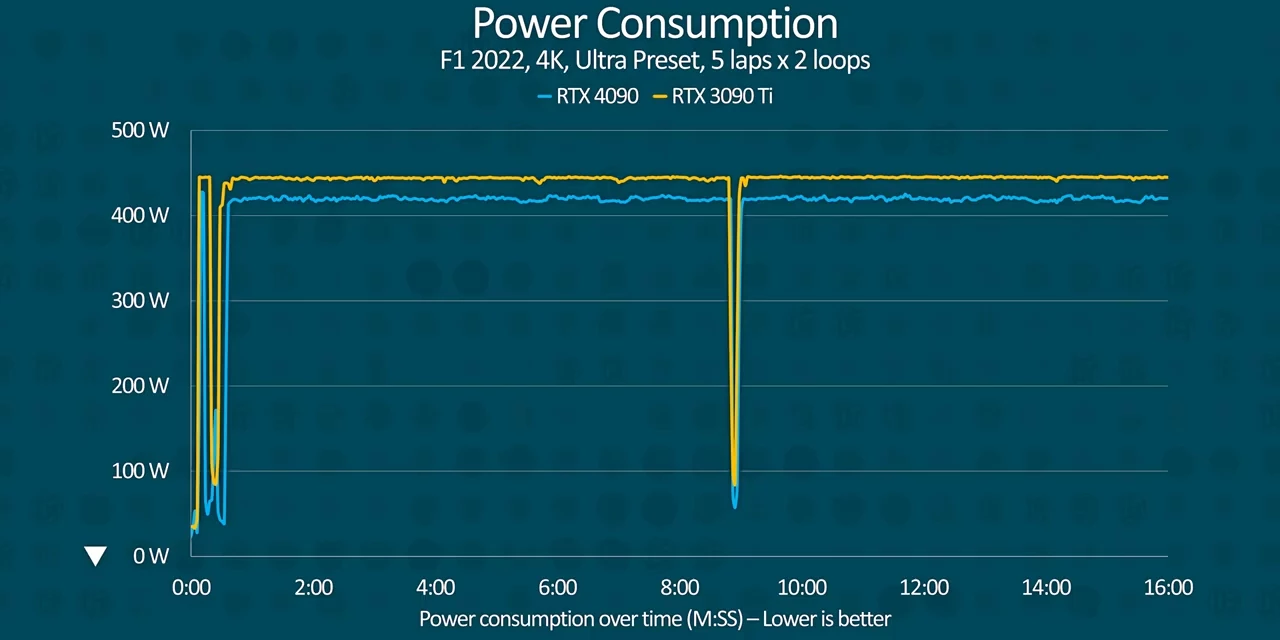

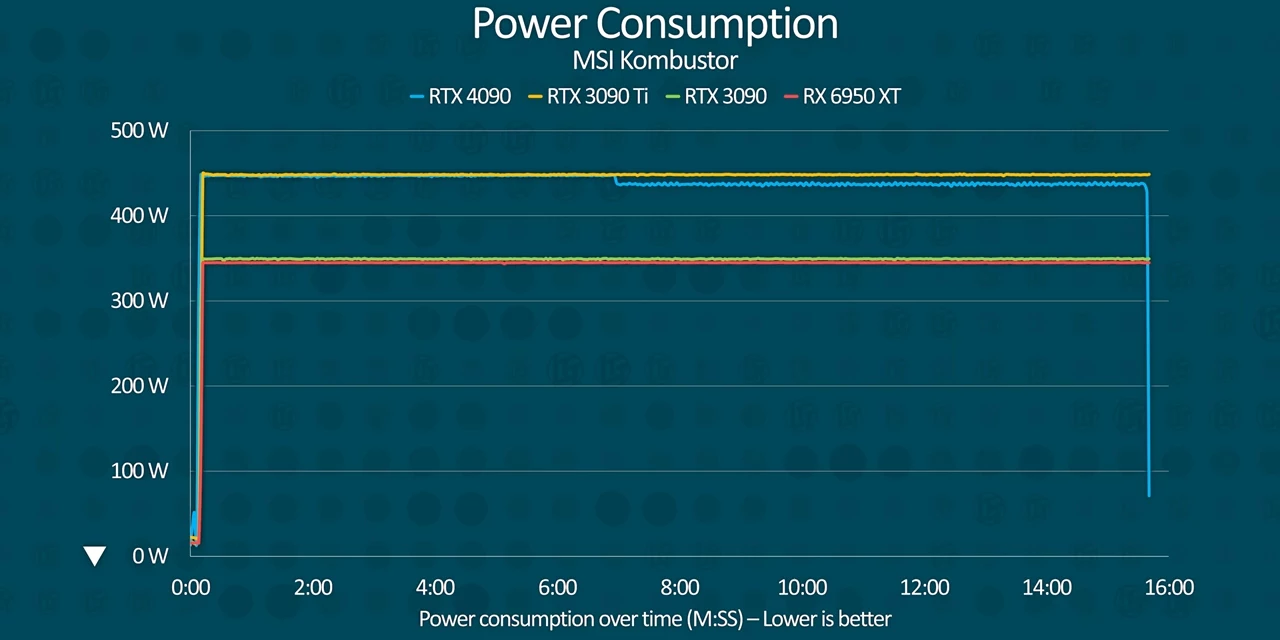

In order to reach the power target that Nvidia set for it, the RTX 4090 universally requires an ATX 3.0 connector and comes with adapters for it in the box.  As the specs indicated, our RTX 4090 draws nearly as much power under gaming load as the RTX 3090 Ti, though it did stay closer to 425 watts than its rated 450.

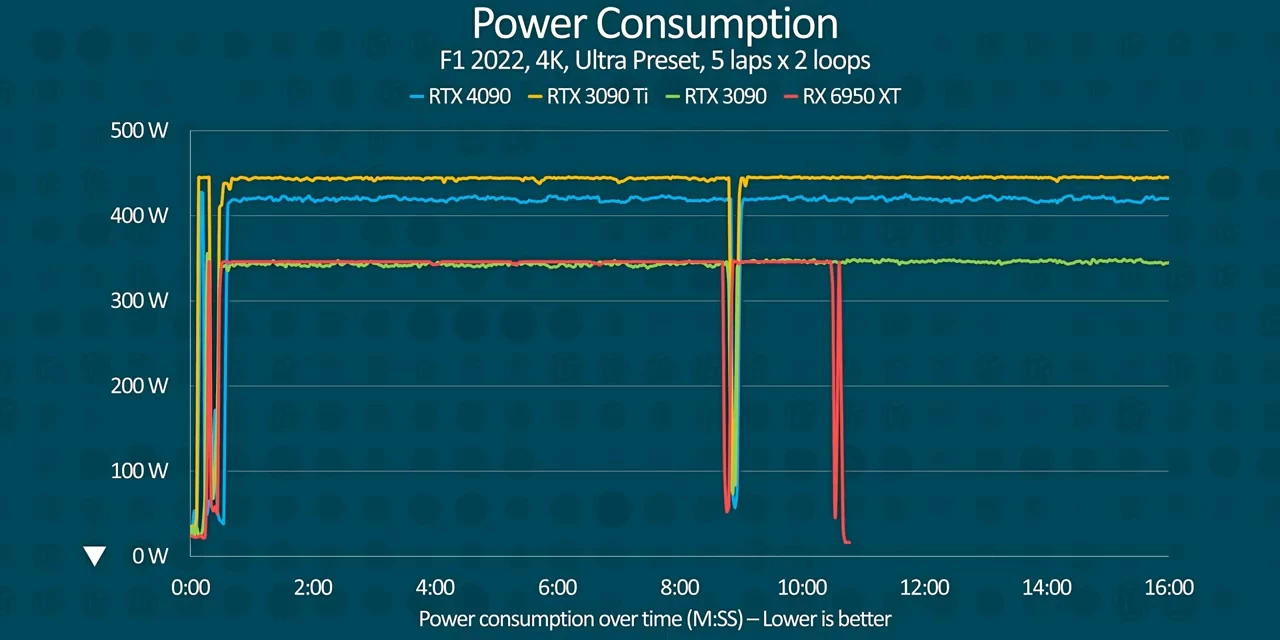

This is in sharp contrast to the RTX 3090 and 6950 XT, which both consistently pulled nearly a hundred watts less. This does not bode well for an eventual RTX 4090 Ti. At least the power targets on the 4080 series cards are lower, though we don't have any of those to test today.

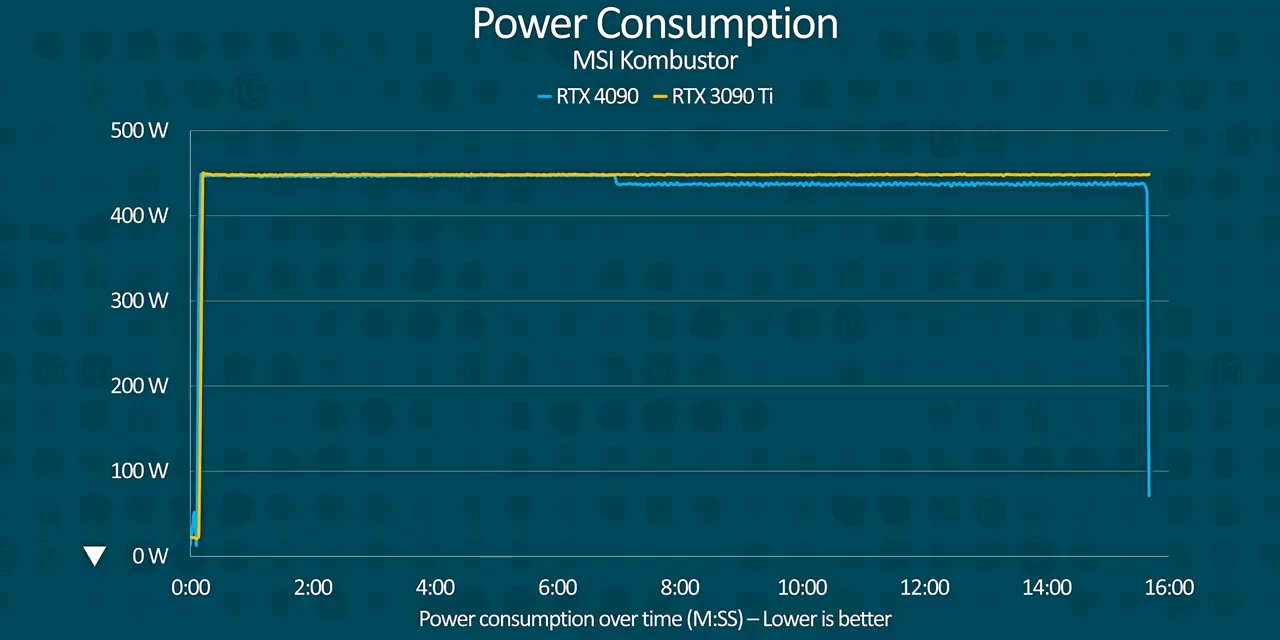

When we hit the cards with a more demanding load via MSI Kombustor, power consumption skyrockets to the red line for both the RTX 4090 and the 3090 Ti. Curiously, though, about halfway through the run the 4090 dips down to about 440 watts, which is kind of strange.

Meanwhile, the RTX 3090 once again doesn't cross that 350 watt threshold, and neither does the 6950 XT.

Thermals & clock stability

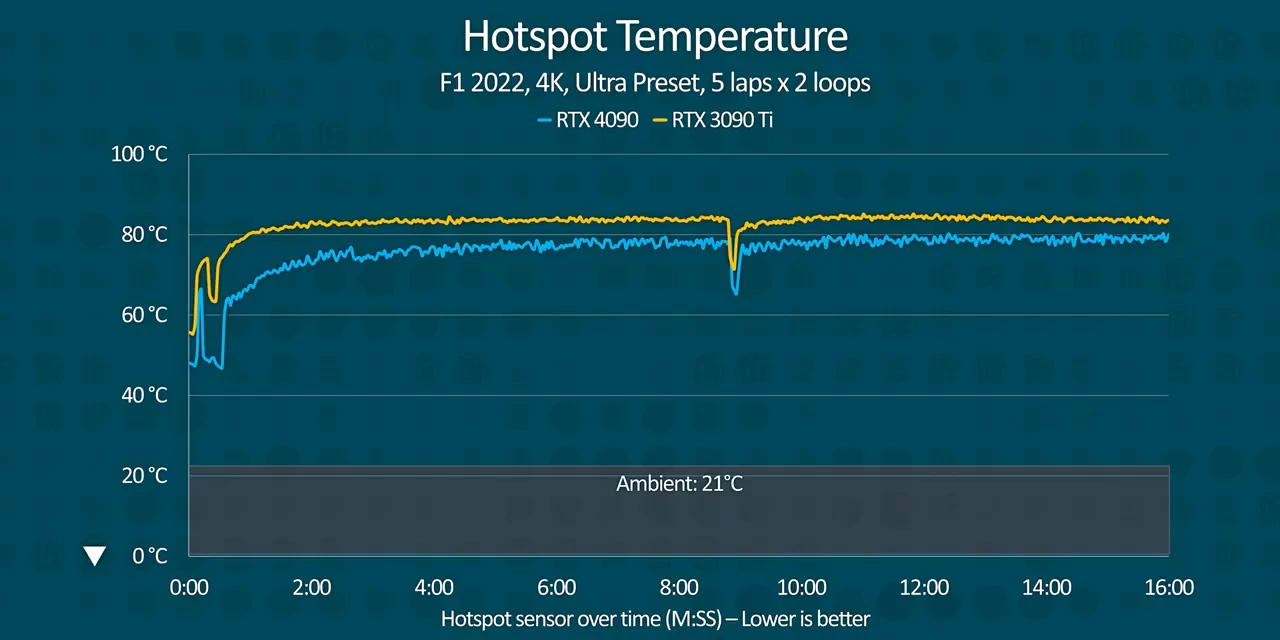

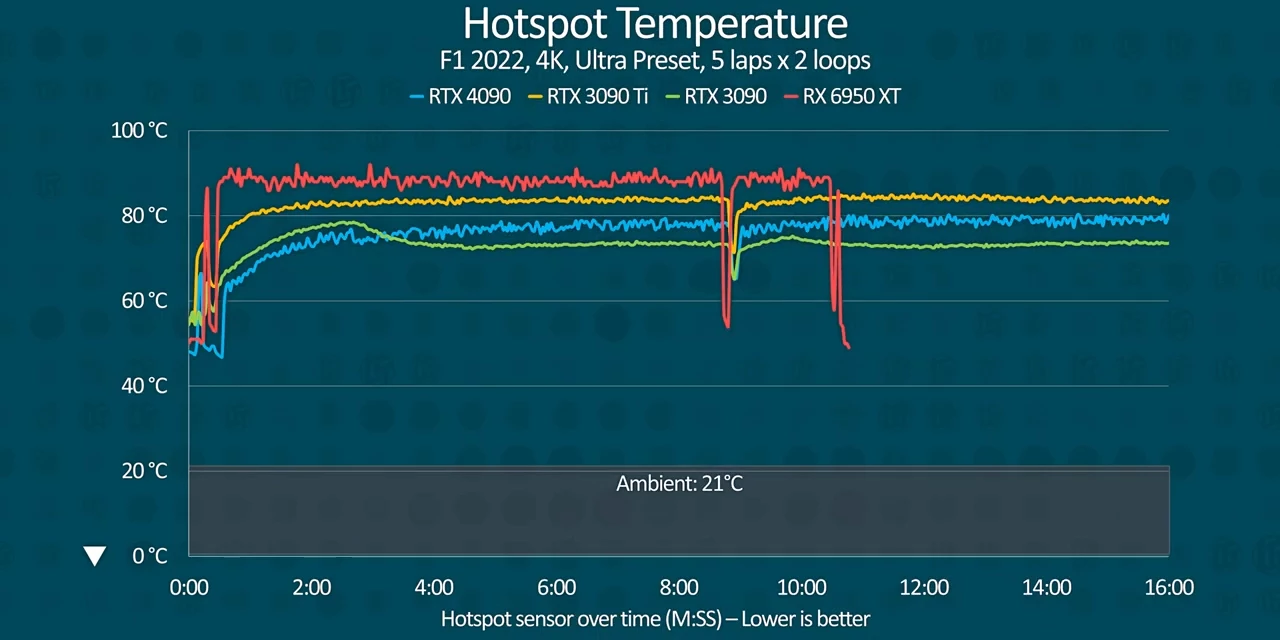

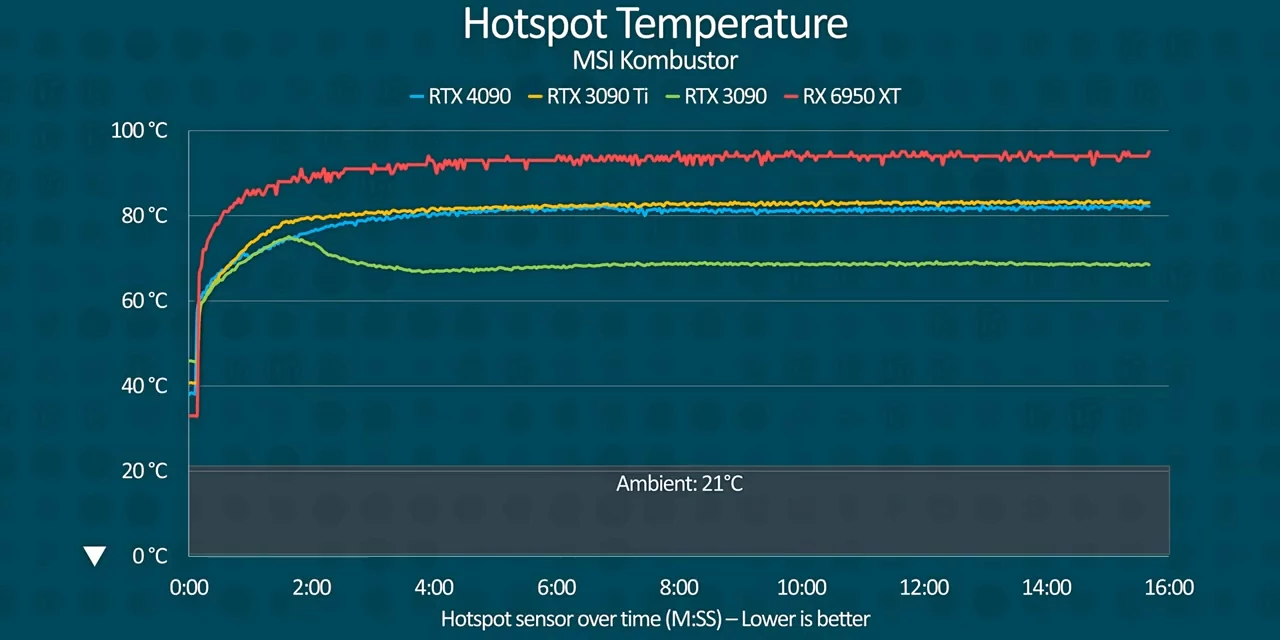

With great power, of course, comes great thermal output, and the RTX 4090 is no exception, with thermals while gaming tracking roughly in line with the power consumption. Its massive three-and-a-half-slot cooler keeps the hotspot at the 80 degree mark while gaming, placing it squarely between the RTX 3090 and the 6950 XT despite the power draw.

That's a testament to Nvidia's cooler design.

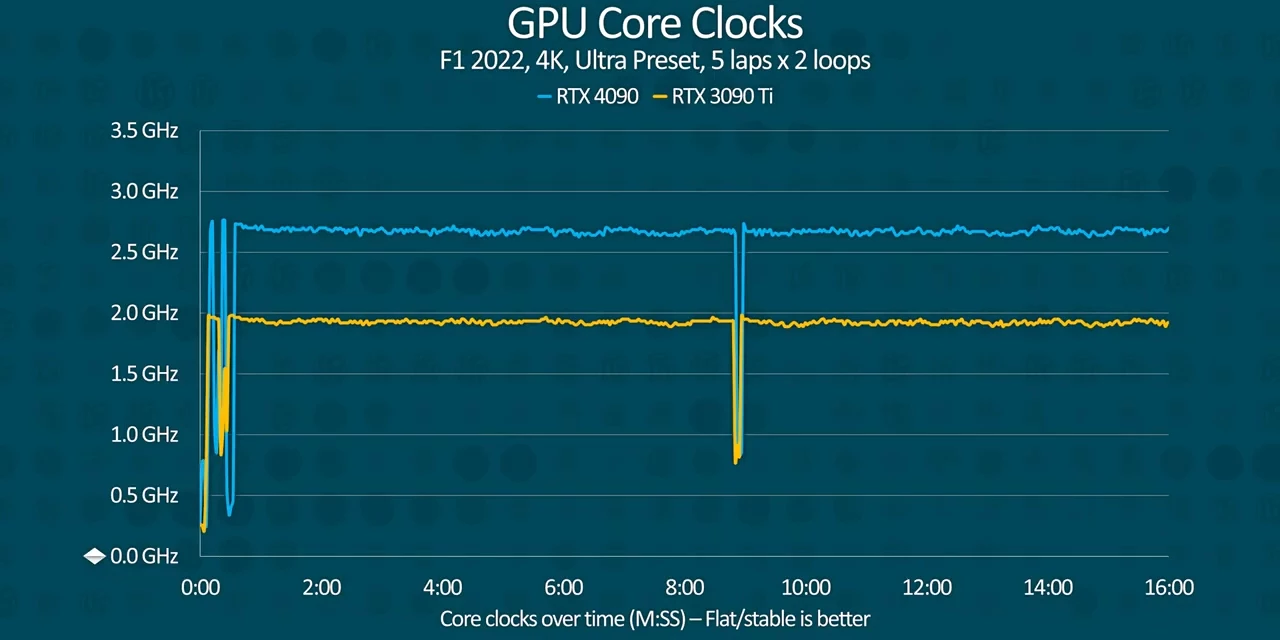

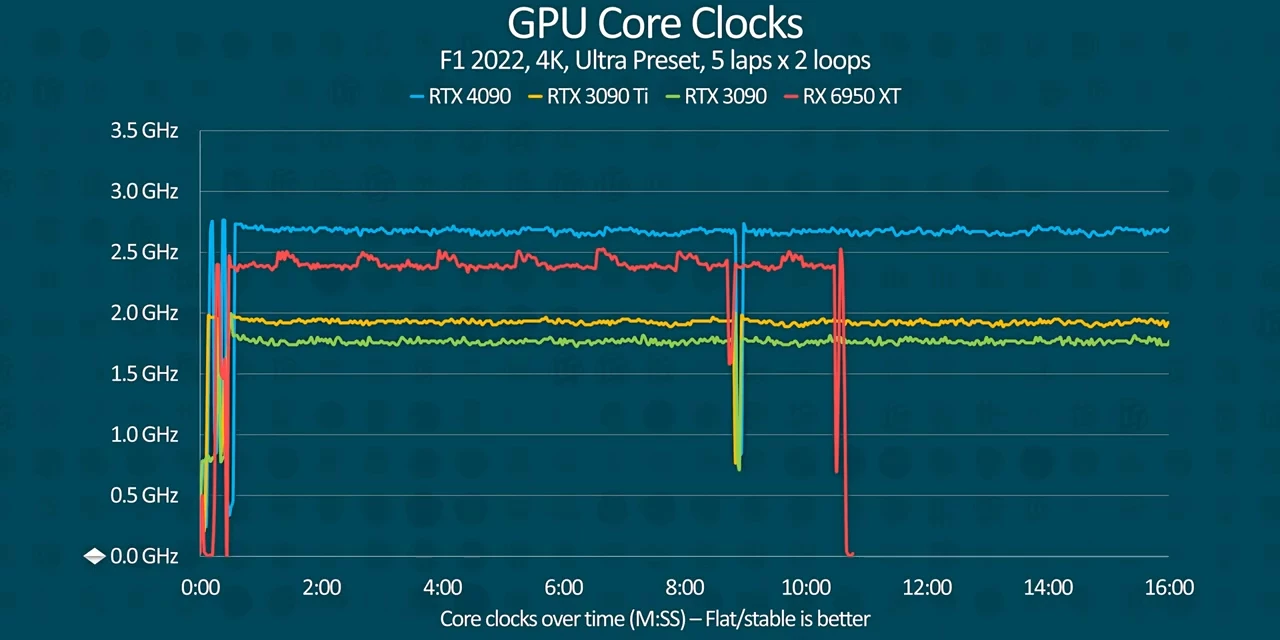

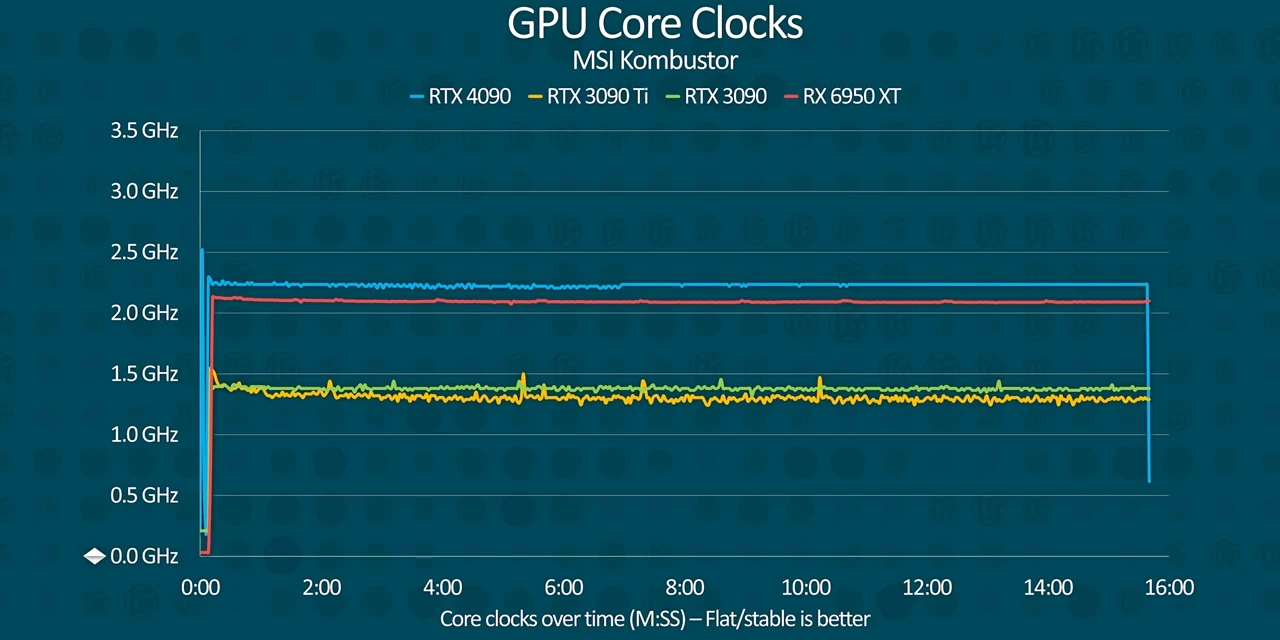

Core clocks were obviously way higher than any previous gen card, and crucially they remain just as stable throughout the run, at about 2.6 to 2.7 gigahertz.

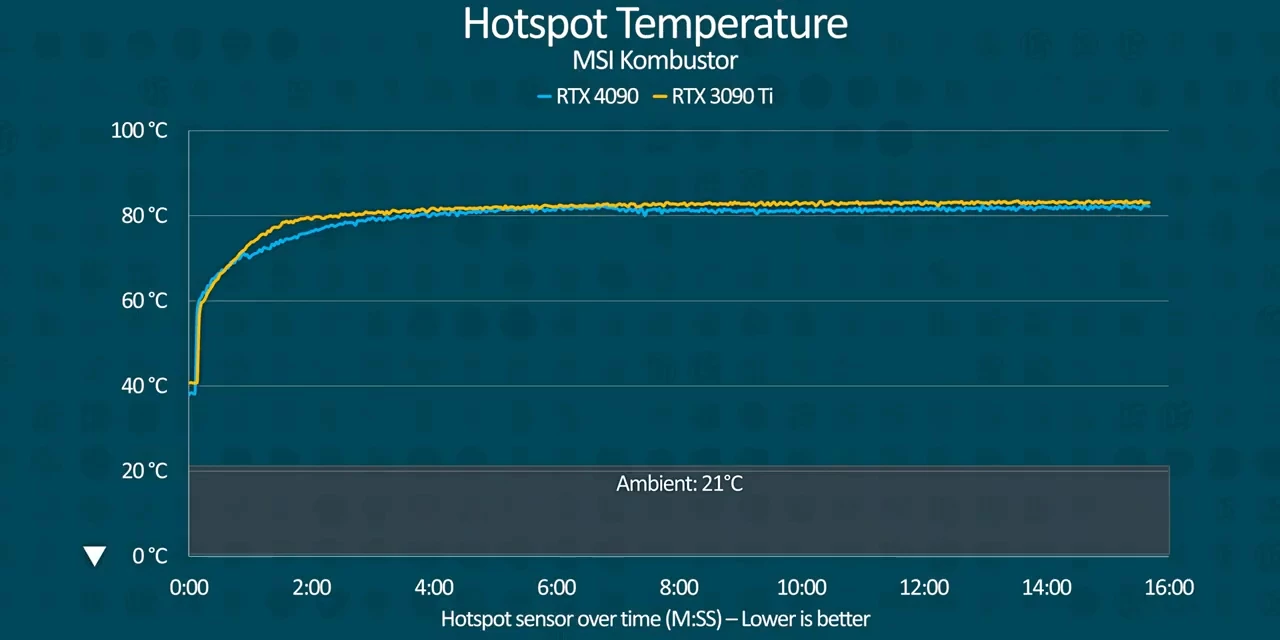

When we look again at our Kombustor results, though, the hotspot temperature crosses the 80 degree threshold along with the RTX 3090 Ti, and we again see that slight dip halfway into the run.

The 3090, meanwhile, sits below 70 degrees.

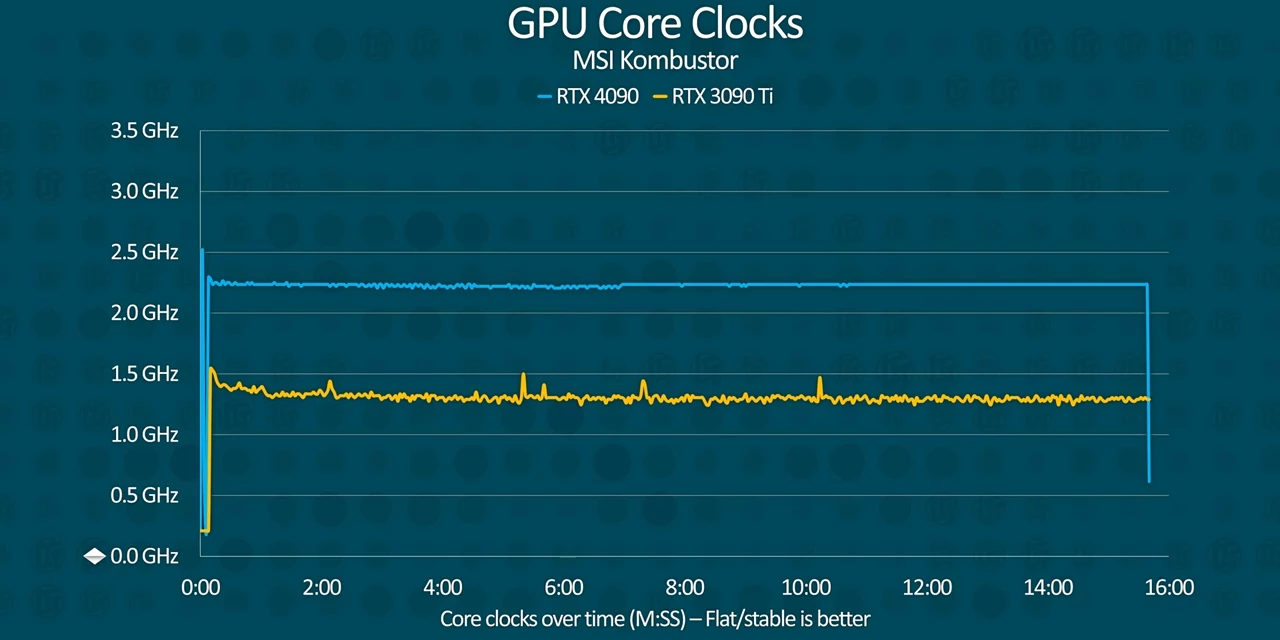

Core clocks end up significantly lower at around 2.25 gigahertz for the 4090 with the 3090 Ti throttling down to below its less power hungry sibling.

Our AMD card meanwhile sort of did a heartbeat pattern of boosting and throttling that could result in uneven performance.

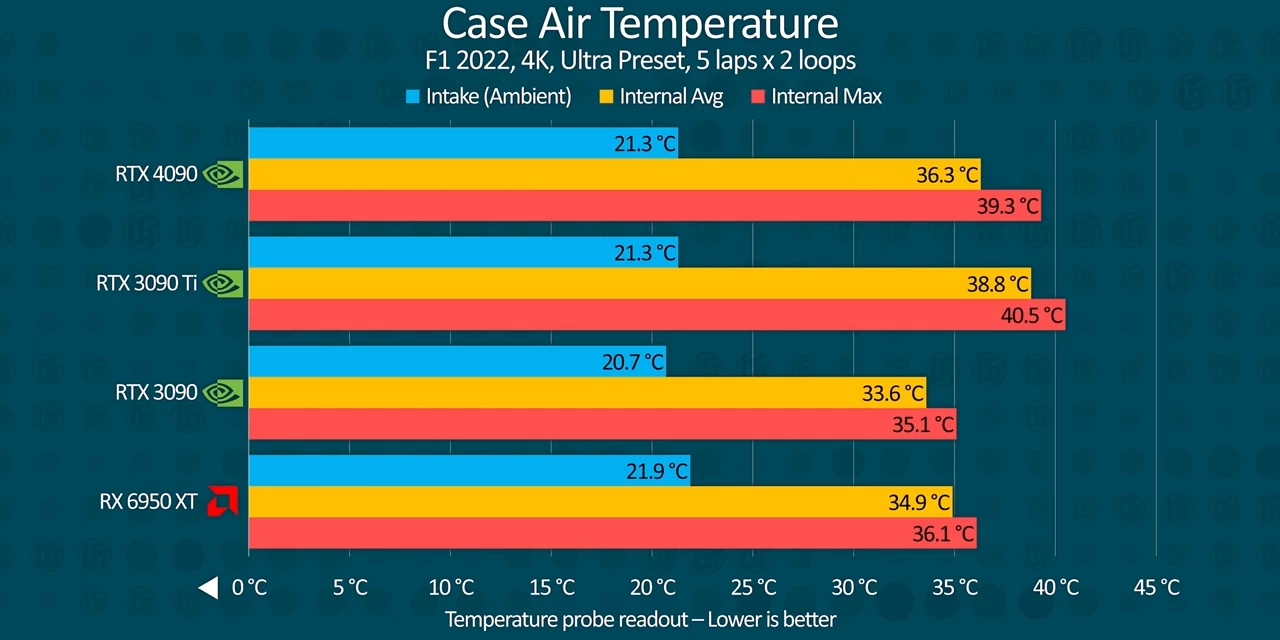

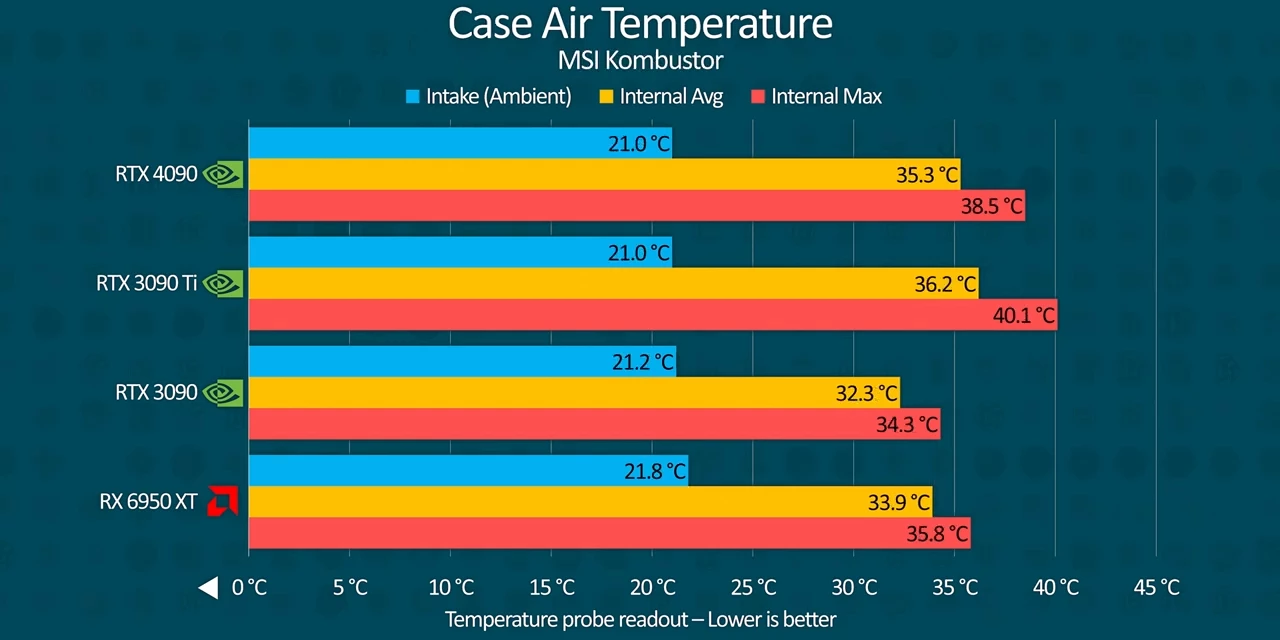

Case air temperature

It's worth noting that these tests were performed inside of a Corsair 5000D Airflow with a 360 millimeter rad up top and three 120 millimeter fans drawing air in up front. So it's not like we were starving the cards for oxygen or feeding them hot air from the CPU.

In fact, in spite of all the airflow we gave them, the RTX 4090 and 3090 Ti both caused internal case air temperatures to hit highs of 39 to 40 degrees, with significantly higher minimum internal temperatures than with the RTX 3090, all at an ambient temperature of around 21 degrees.

That means that if your case can just barely handle a 3090, you won't be able to manage a 4090. Sorry, small form factor aficionados, this card is not for you.

Some quirks... DisplayPort and PCI Express

After all that, you might look at the spec sheet and have some lingering questions like, "why isn't Nvidia supporting PCI Express Gen 5?" and, "where's DisplayPort 2.0?" And the answer to both of those questions, whether you like it or not, is that Nvidia doesn't think you need them on a $1,600 graphics card. You smell that? Yeah, that's the distinct scent of copium. While it's true that a GPU running a whole 16 lanes of PCI Express Gen 5 probably won't be super useful today, a GPU running eight lanes of Gen 5 certainly would be, especially for those who also want lots of NVMe storage, a good bet for a card of its class.

Remember, the faster the PCI Express link, the fewer the lanes you need for the same bandwidth requirements. Nvidia should know this.
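Here's a rough sketch of that lane math. It assumes the published per-lane signalling rates (16 GT/s for Gen 4, 32 GT/s for Gen 5) and 128b/130b line encoding, and it ignores packet and protocol overhead, so treat the results as ceilings rather than measured throughput. The names are illustrative.

```python
# Per-lane signalling rate in GT/s for each PCI Express generation.
TRANSFER_RATE_GT_S = {3: 8.0, 4: 16.0, 5: 32.0}

# Gen 3 and later use 128b/130b encoding: 128 payload bits per 130 bits sent.
ENCODING_EFFICIENCY = 128 / 130


def link_bandwidth_gb_s(gen: int, lanes: int) -> float:
    """Approximate one-direction link bandwidth in GB/s (no protocol overhead)."""
    gbit_per_lane = TRANSFER_RATE_GT_S[gen] * ENCODING_EFFICIENCY
    return gbit_per_lane * lanes / 8  # convert bits to bytes


gen4_x16 = link_bandwidth_gb_s(gen=4, lanes=16)
gen5_x8 = link_bandwidth_gb_s(gen=5, lanes=8)

print(f"Gen 4 x16: {gen4_x16:.1f} GB/s")
print(f"Gen 5 x8:  {gen5_x8:.1f} GB/s")
```

Both links come out at about 31.5 GB/s in each direction, which is the point: a Gen 5 x8 card would match today's Gen 4 x16 bandwidth while leaving eight lanes free for things like NVMe drives.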

As for DisplayPort 2.0, the official line is, "DisplayPort 1.4 already supports 8K at 60 hertz, and consumer displays won't need more for a while," which might sound fair until you realize that you are also limited to 120 hertz at 4K, a performance level the RTX 4090 can exceed, as we've seen. The only way to get higher refresh rates at DisplayPort 1.4 is to use chroma subsampling, which is, to put it mildly, a suboptimal experience on such premium hardware.
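To see why DisplayPort 1.4 runs out of headroom around 4K 120 hertz without chroma subsampling, here's a back-of-the-envelope sketch. It assumes a four-lane HBR3 link at 8.1 Gbit/s per lane with 8b/10b encoding and 8-bit color, and it ignores blanking intervals and Display Stream Compression, so real-world requirements are somewhat higher than shown. All names are illustrative.

```python
DP14_LANES = 4
DP14_HBR3_GBPS_PER_LANE = 8.1   # raw line rate per lane
DP14_ENCODING_EFFICIENCY = 8 / 10  # 8b/10b: 8 payload bits per 10 bits sent

# Average bits per pixel at 8 bits per channel.
BPP_RGB = 24      # full-resolution color (4:4:4)
BPP_YUV_420 = 12  # 4:2:0 chroma subsampling


def dp14_payload_gbps() -> float:
    """Usable DisplayPort 1.4 payload bandwidth in Gbit/s."""
    return DP14_LANES * DP14_HBR3_GBPS_PER_LANE * DP14_ENCODING_EFFICIENCY


def video_gbps(width: int, height: int, refresh_hz: int, bits_per_pixel: int) -> float:
    """Uncompressed active-pixel data rate in Gbit/s (blanking ignored)."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


def fits_dp14(width: int, height: int, refresh_hz: int, bits_per_pixel: int) -> bool:
    return video_gbps(width, height, refresh_hz, bits_per_pixel) <= dp14_payload_gbps()


for hz, bpp, label in [(120, BPP_RGB, "RGB"), (144, BPP_RGB, "RGB"),
                       (240, BPP_RGB, "RGB"), (240, BPP_YUV_420, "4:2:0")]:
    rate = video_gbps(3840, 2160, hz, bpp)
    verdict = "fits" if fits_dp14(3840, 2160, hz, bpp) else "does not fit"
    print(f"4K {hz} Hz {label}: {rate:.2f} Gbit/s, {verdict}")
```

Under these assumptions the link carries about 25.92 Gbit/s, so 4K 120 hertz in full RGB squeezes in, 144 and 240 hertz do not, and 240 hertz only fits once 4:2:0 subsampling halves the data per pixel.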

240 hertz 4K displays exist today and will have DisplayPort 2.0 support soon. Arc already supports it, and RDNA 3 has been confirmed to support DisplayPort 2.0 since May. Nvidia is clearly trying to save a buck again on a GPU that costs as much as a Series X, a PS5, a Switch and a Steam Deck, combined.  Or maybe these cards were just ready and waiting in a warehouse for longer than we realized. It's incredibly amusing to me that the first GPU that might actually be capable of running 8K gaming without asterisks is being handled in such a lackadaisical fashion by Team Green.

The days of generational performance for dollar gains are over, they say. Moore's law is dead, they say. And yet the competition doesn't seem to think so. While it's true that nobody can touch the 4090's mammoth performance right now, as we saw with the RTX 3090, that won't last forever.

Conclusion

And that's where things get weird. As it exists right now, while the RTX 4090 chugs as much power as an RTX 3090 Ti, it is a massive upgrade over both that card and the vanilla 3090 in nearly every respect, well in excess of its price increase. For content creators, this is a no-brainer. But I cannot in good conscience recommend that gamers with more dollars than sense go out and purchase a piece of hardware that is incapable of driving the also very expensive displays that might actually be able to take advantage of it, especially when a less expensive and less power hungry card, perhaps even Nvidia's own RTX 4080s, can do the same thing.
