Over the last few articles, you've looked at convolutional neural networks and how they can be used for computer vision. You built classifiers for fashion items, for horses and humans, and for cats and dogs. One common theme was overfitting. When you only have limited data to train on, your neural network can get very good at recognizing the data it was trained on, but not necessarily at recognizing data it has not previously seen.

To help this make sense, consider this scenario. Say that for your whole life, you had only ever seen shoes that look like these. Then to you, that's what shoes would look like. They'd cover the ankle. They'd have flat soles and laces, all those kinds of things. So then if I showed you this picture,

you'd instantly recognize that everything here is a shoe. The big ones, the small ones, the ones on their sides: in your mind, they're all still shoes. But what if I show you this picture?

We know this is a shoe, because we've been exposed to shoes that look like this. But again, if all you had ever seen were the shoes I mentioned earlier, you wouldn't recognize this. There are no laces. The sole is all wrong. And it probably doesn't cover your ankle. You would then be overfit to the data that shaped your idea of what a shoe is.

The same happens with a neural network. If it hasn't been exposed to a labeled sample that looks like a high-heeled shoe, then it's in the same position as our hypothetical person who has only ever seen ankle boots as shoes. Of course, if you don't have the data, you don't have the data. But there are many cases where a neural network overfits because of attributes in an image that it has not previously seen, and a technique called image augmentation might be able to fix this. This might seem a little vague, so let's look at an example.

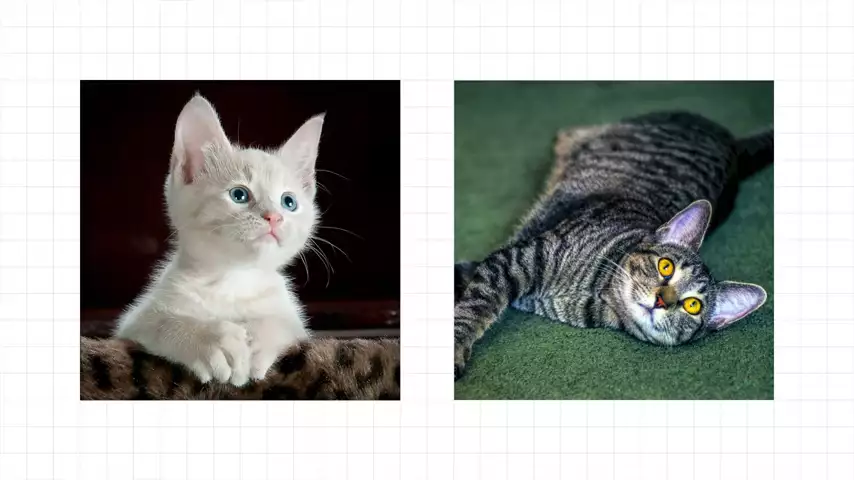

On the left is a cat, and on the right is a person. Computer vision works by isolating features. So you might have features like the pointy ears at the top being indicative of a cat, or the two legs at the bottom being indicative of a human. But take the case of a cat.

In this next example, the image on the right is a cat. You and I know that. But a computer may not, because if it was only trained on images like the one on the left, then it's looking for triangular shapes oriented upwards near the top of the image. The ears on the cat on the right don't look like that. So what if we could rotate the images in our training set, so that we trained with a labeled image of a cat that looks like this?

Then the ears are the same as those of the cat on the right. So by using a transform like a rotation, we're effectively creating new data to train on.

The good news is that you already have all the tools you need to get started. Image augmentation artificially extends your data set, giving your neural network new information to train on, and this can really help with overfitting.

To get started, you can use the ImageDataGenerator class.

    from tensorflow.keras.preprocessing.image import ImageDataGenerator

    train_datagen = ImageDataGenerator(rescale=1./255)

You've already seen this for image manipulation with the rescaling that you've been doing to normalize the image. And it supports more parameters. So let's explore some examples.
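
As a rough illustration of what rescale=1./255 does to pixel values, the sketch below applies the same multiplication to a tiny made-up image in plain Python. The pixel values and variable names are hypothetical, and this only mirrors the arithmetic, not how the generator is implemented.

```python
# A hypothetical 2x3 grayscale image with 8-bit pixel values (0 to 255).
image = [
    [0, 51, 102],
    [153, 204, 255],
]

rescale = 1.0 / 255  # the same factor as rescale=1./255

# Multiply every pixel by the factor so the values land between 0 and 1.
normalized = [[pixel * rescale for pixel in row] for row in image]

for row in normalized:
    print([round(value, 2) for value in row])
```

Every value ends up in the range 0 to 1, which is the normalization you've been relying on in the earlier parts.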

    train_datagen = ImageDataGenerator(
        rescale=1./255,
        rotation_range=40,
        width_shift_range=0.2,
        height_shift_range=0.2,
        shear_range=0.2,
        zoom_range=0.2,
        horizontal_flip=True,
        fill_mode='nearest')

So here's an extended ImageDataGenerator constructor where I've added a bunch more parameters alongside the rescale one that you've already seen. The first is rotation_range, which is the kind of rotation you saw with the cat. It randomly rotates each image by up to the given number of degrees, plus or minus. Here it's set to 40, so each image will be rotated left or right by up to 40 degrees.
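
To make the plus-or-minus idea concrete, here is a minimal sketch in plain Python. It assumes the angle is drawn uniformly between -rotation_range and +rotation_range, and it uses a hypothetical 150x150 image. The helper names sample_rotation_angle and rotate_point are made up for this illustration and are not part of Keras.

```python
import math
import random


def sample_rotation_angle(rotation_range, rng):
    """Pick a random angle in degrees between -rotation_range and +rotation_range."""
    return rng.uniform(-rotation_range, rotation_range)


def rotate_point(x, y, center_x, center_y, degrees):
    """Rotate the point (x, y) around a center by the given angle in degrees."""
    theta = math.radians(degrees)
    dx, dy = x - center_x, y - center_y
    new_x = center_x + dx * math.cos(theta) - dy * math.sin(theta)
    new_y = center_y + dx * math.sin(theta) + dy * math.cos(theta)
    return new_x, new_y


rng = random.Random(0)  # fixed seed so the sketch is repeatable
angle = sample_rotation_angle(40, rng)

# Hypothetical 150x150 image: see where the top-left corner would land
# after rotating around the image center.
print(angle, rotate_point(0, 0, 75, 75, angle))
```

Each training image gets its own random angle, so the network rarely sees exactly the same orientation twice.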

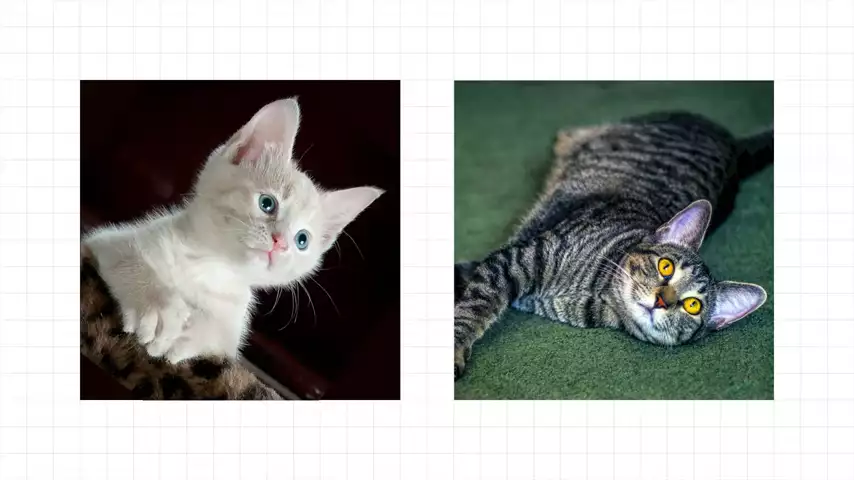

Width and height shift range will move your image around in the frame. Here, it can be moved by up to 20% of its width or height, which can give an effect like this, where the image is width-shifted and the subject moves to the left.

Shearing can also give a powerful effect. You set it up with the shear_range parameter. The Keras documentation describes this value as a shear intensity, given as a shear angle in degrees, so each image is sheared by a random angle of up to that amount in either direction. A value of 0.2 therefore gives only a slight shear in current versions. To see when shearing can be useful, consider these images.

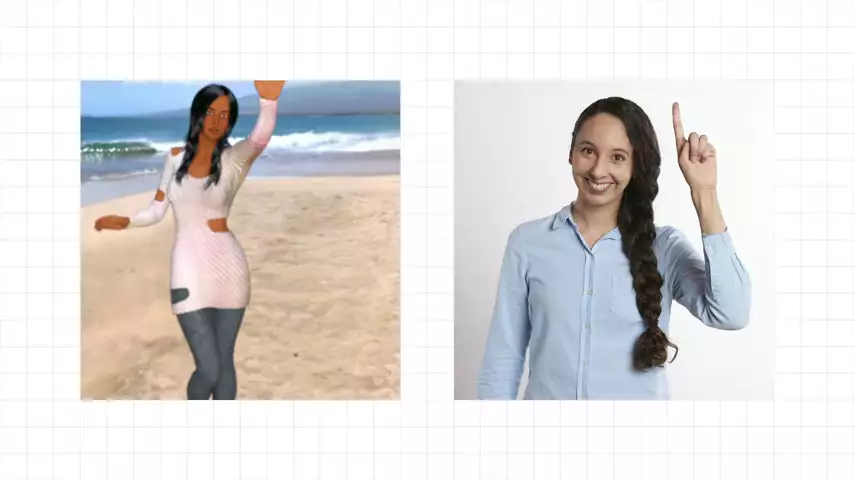

The image on the left is from the Horses or Humans data set, and the person is standing with his hands raised. The data set has nothing like the image on the right, so it might fail to be classified as a human. We're overfitting to standing poses, for example. But if the left image were sheared, it could suddenly look a lot more like the image on the right. A network trained on it could learn to recognize an image like the one on the right, and would no longer be overfit to standing poses.
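
If it helps to picture what a shear does geometrically, here is a small textbook-style sketch. It assumes a simple horizontal shear, where each point slides sideways in proportion to its vertical position. This is not Keras's exact transform, and shear_point is a hypothetical helper written for this illustration.

```python
import math


def shear_point(x, y, shear_degrees):
    """Slide a point sideways in proportion to its y coordinate."""
    k = math.tan(math.radians(shear_degrees))
    return x + k * y, y


# Corners of a hypothetical upright 100x100 square, with the origin at the
# bottom left, listed counter-clockwise.
corners = [(0, 0), (100, 0), (100, 100), (0, 100)]
sheared = [shear_point(x, y, 20) for x, y in corners]
print(sheared)
```

The bottom edge stays put while the top edge slides sideways, so an upright figure turns into a leaning one.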

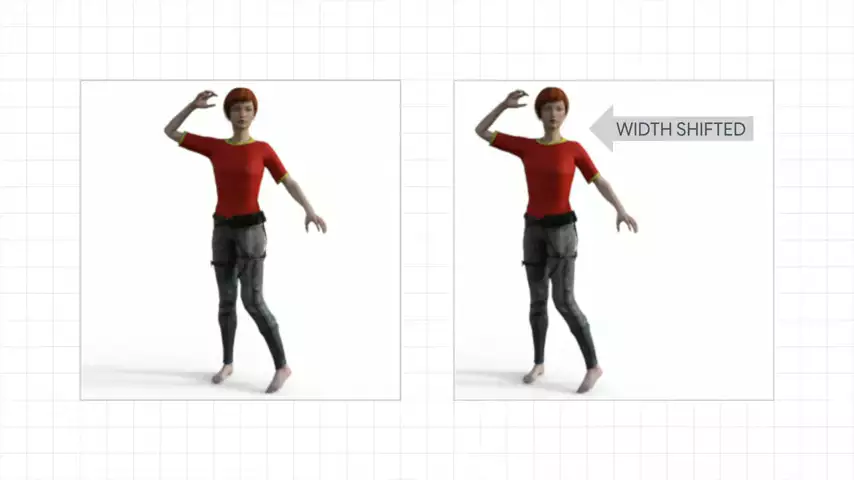

Another example is zoom_range. With a single value between 0 and 1, each image is zoomed by a random factor within that fraction of its original size. So with 0.2, the zoom factor is picked between 0.8 and 1.2, which means zooming in or out by up to 20%. Again, let's see an example of why this is useful.

The image on the left here is a woman from the Horses or Humans training set. The woman on the right is quite similar. But because of how the image is framed, we can't see her legs. And again, the neural network might be overfit to seeing full bodies that include legs. The lady on the right is obviously human, but the neural network may not recognize that.

But if we were to zoom in on the image on the left, it would have far more similarities to the woman on the right. And the network may be able to recognize the woman on the right as a human if we train with data like that on the left.
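
For reference, Keras documents a single float zoom_range as shorthand for the interval from 1 - zoom_range to 1 + zoom_range. The sketch below just works out that interval and samples from it. The helpers zoom_bounds and sample_zoom are hypothetical names for this illustration, not Keras functions.

```python
import random


def zoom_bounds(zoom_range):
    """Turn a single zoom_range value into (lower, upper) zoom factors."""
    return 1.0 - zoom_range, 1.0 + zoom_range


def sample_zoom(zoom_range, rng):
    """Draw one random zoom factor from the interval for this zoom_range."""
    lower, upper = zoom_bounds(zoom_range)
    return rng.uniform(lower, upper)


rng = random.Random(0)  # fixed seed so the sketch is repeatable
print(zoom_bounds(0.2))
print([round(sample_zoom(0.2, rng), 3) for _ in range(5)])
```

Because the factor is drawn fresh for each image, the same training photo can show up framed a little tighter or a little wider from one epoch to the next.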

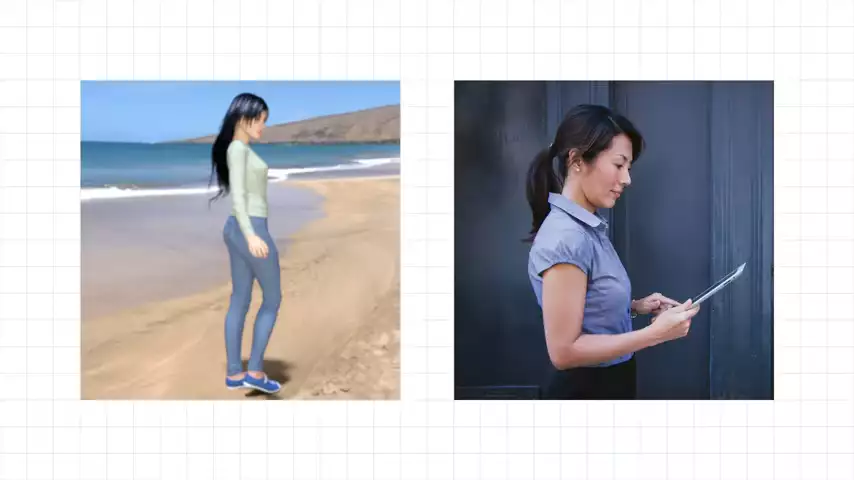

Another example is horizontal_flip. If this is set to True, then images will be randomly flipped horizontally. Let's take a look at an example of how this could be effective.

The image on the left is from the Horses or Humans data set. As you can see, she has her right hand up. The woman on the right is quite similar, but she has her left hand raised. If the training set only has images like the one on the left, we could overfit to humans with their right hand raised. But if we randomly flip, we effectively extend our data set to include left-hand raisers.
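
A horizontal flip is easy to picture on a tiny grid. The following is a minimal sketch in plain Python, assuming an image stored as a list of rows and a flip that happens about half the time. The image values and the helper names are hypothetical.

```python
import random


def horizontal_flip(image):
    """Mirror an image left to right by reversing every row."""
    return [row[::-1] for row in image]


def maybe_flip(image, rng):
    """Flip with probability 0.5, otherwise return a copy of the original."""
    if rng.random() < 0.5:
        return horizontal_flip(image)
    return [list(row) for row in image]


# Hypothetical 2x4 image: the 9 marks a raised hand on the left side.
image = [
    [9, 0, 0, 0],
    [1, 1, 0, 0],
]
flipped = horizontal_flip(image)
print(flipped)
```

After the flip the raised hand sits on the opposite side, which is how one photo can stand in for both poses.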

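Finally, fill_mode='nearest' controls how to fill in pixels that are left empty when an operation such as a shift, rotation, or shear moves part of the image out of the frame. With nearest, each empty pixel takes the value of the closest pixel that still has data.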
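
As a rough sketch of what filling with the nearest pixel means, the code below shifts a single row of pixels to the right and fills the gap that opens up on the left. It is a one-dimensional simplification, and shift_row is a hypothetical helper. The 'constant' branch is included only for contrast and assumes a fill value of 0.

```python
def shift_row(row, pixels, fill_mode="nearest", cval=0):
    """Shift a row of pixels to the right and fill the gap on the left."""
    pixels = max(0, min(pixels, len(row)))
    kept = list(row[:len(row) - pixels])  # pixels that stay in the frame
    if fill_mode == "nearest":
        gap = [row[0]] * pixels  # repeat the closest edge pixel
    elif fill_mode == "constant":
        gap = [cval] * pixels  # use one fixed value everywhere
    else:
        raise ValueError("this sketch only handles 'nearest' and 'constant'")
    return gap + kept


row = [10, 20, 30, 40, 50]
print(shift_row(row, 2))
print(shift_row(row, 2, fill_mode="constant"))
```

With nearest, the edge of the picture is smeared into the empty area instead of leaving a hard block of a single color.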

So that's a tour of some of the operations that are available with image augmentation, a really cool tool to help you avoid overfitting on image-based data sets.

I hope this has been useful for you.

