And this is the weekly update on the coolest developer news from Google.

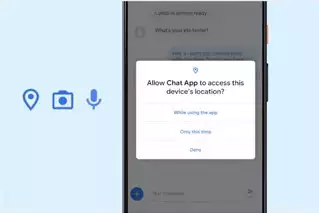

Google announced some new features focused on privacy and security in Android 11. One-time permissions let users give an app access to the device microphone, camera, or location just one time.  And best of all, you don't need to make any changes to your app for it to be able to work with these permissions.

In Android 11, background location will no longer be a permission that a user can grant via a runtime prompt. Instead, it will require a more deliberate action. If your app needs background location, the system will ensure that the app first asks for foreground location. The app can then broaden its access to background location through a separate permission request, which causes the system to take the user to Settings to complete the permission grant.

Another neat feature is that if you don't use an app for an extended period of time, Android 11 will auto-reset its runtime permissions and notify you of this. And these are just a few of the updated security and privacy features.

Augmented reality is fun. And with the ARCore Depth API, depth in your field of view can be measured without using radar or specialized devices. It uses depth-from-motion algorithms to generate a depth map from a single RGB camera. The Depth API is now available in ARCore 1.18 for Android and Unity, so it will work across hundreds of millions of compatible Android devices. One of its key capabilities is occlusion, where digital objects can accurately appear behind real-world objects.
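To make the occlusion idea concrete, here is a minimal TypeScript sketch of the per-pixel depth comparison behind it. It does not use the ARCore SDK: the function name `occlusionMask`, the flat arrays, the millimeter units, and the convention that `0` means "no depth estimate" are all assumptions made for illustration.

```typescript
// Hypothetical sketch: none of these names come from the ARCore SDK.
// Assumption: depths are in millimeters and 0 means "no estimate" for that pixel.
function occlusionMask(
  realDepthMm: number[],
  virtualDepthMm: number[],
): boolean[] {
  if (realDepthMm.length !== virtualDepthMm.length) {
    throw new Error("depth maps must have the same number of pixels");
  }
  return virtualDepthMm.map((virtualDepth, i) => {
    const realDepth = realDepthMm[i];
    // Without a depth estimate, leave the virtual pixel visible.
    if (realDepth === 0) return false;
    // The virtual pixel is hidden when a real surface is closer to the camera.
    return realDepth < virtualDepth;
  });
}

// Four pixels: real-world depth map vs. depth of a virtual object.
const mask = occlusionMask([500, 2000, 0, 1500], [1000, 1000, 1000, 1500]);
console.log(mask); // [ true, false, false, false ]
```

Only the first pixel is occluded, because that is the only place where a real surface (500 mm) sits in front of the virtual object (1000 mm). A real renderer would typically do this comparison on the GPU, often with some blending at the edges.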

Also new this week is the AI Adoption Framework. Its goal is to help you through the challenges of building AI and ML into your overall workflow. If you've ever wanted to know how to structure your teams, which ML projects to prioritize, or, for example, how to implement responsible and explainable AI and then do it all at scale, the framework will help you answer all of these questions and more.

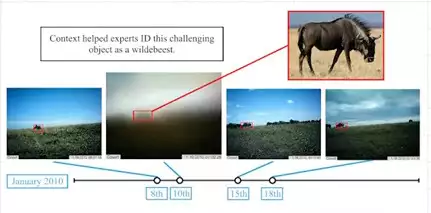

On the research and AI front, detecting objects in static images can often be really difficult. It's pretty hard to see that this is a herd of wildebeest in a static image. But if we take into account temporal data with contextual clues across time for each camera, then we can improve the recognition of objects without lots of new training data. So, for example, these images taken at the same location at different times and in different weather conditions can help us identify the contents of the blurry image.

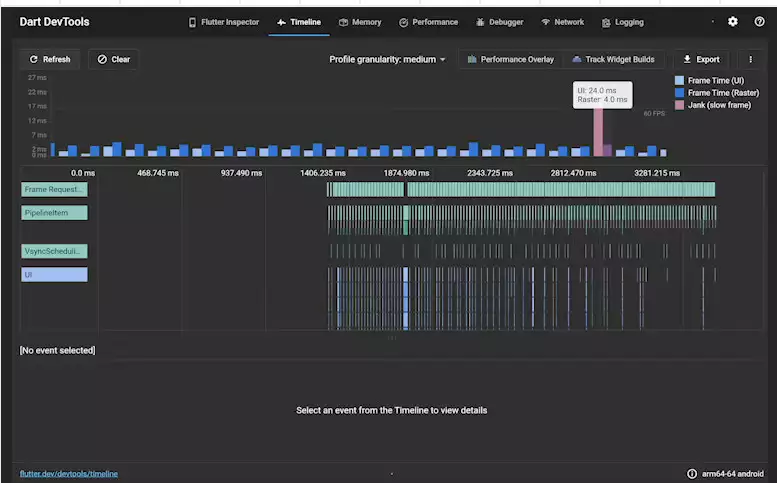

If you're a Flutter developer, you might like to see how the team rebuilt Dart DevTools from scratch using Flutter. It takes advantage of the hot reload feature that lets you make changes to your app while it's running. And this is perfectly suited for testing, debugging, and analyzing an app. There's a static analysis tool that provides analysis to your favorite IDE so you can have code completion, linting, and things like error analysis right at your fingertips. Dart DevTools has been shipped as a web application, so it can integrate into the existing tooling experience across all target platforms and IDEs. There's a whole lot of great new stuff in there, including updates to the Performance and Memory pages, as well as a completely new Network page.

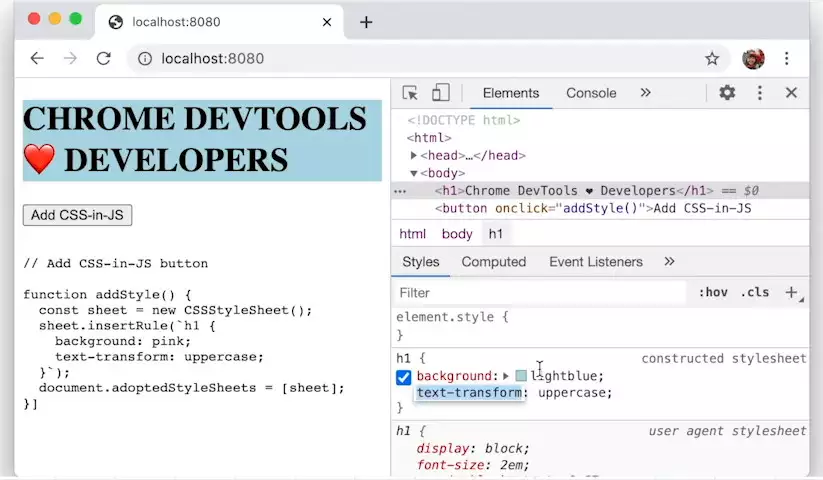

For web developers, check out what's new in DevTools in Chrome 85. Updates include style editing for CSS-in-JS frameworks, the Lighthouse panel being updated to Lighthouse 6, and support for new JavaScript features, including syntax highlighting for private fields, the nullish coalescing operator, and optional chaining. And of course, there's my personal favorite: the updated Computed pane in the Elements panel.
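If the three JavaScript features mentioned above are new to you, here is a small TypeScript sketch of what they look like in code. The `Counter` class, the `Settings` shape, and the `summarize` function are made up for illustration, and the sketch assumes a compile target of ES2015 or later so that `#private` fields are accepted.

```typescript
class Counter {
  // `#count` is a private field: it is only accessible inside the class body.
  #count = 0;

  increment(): number {
    this.#count += 1;
    return this.#count;
  }
}

// Hypothetical settings object with optional nested values.
interface Settings {
  theme?: { fontSize?: number };
  retries?: number;
}

function summarize(settings?: Settings): string {
  // Optional chaining (?.) yields undefined instead of throwing on a missing link.
  const fontSize = settings?.theme?.fontSize ?? 14;
  // Nullish coalescing (??) falls back only on null or undefined, so 0 is kept.
  const retries = settings?.retries ?? 3;
  return `fontSize=${fontSize} retries=${retries}`;
}

const counter = new Counter();
counter.increment();
const clicks = counter.increment();
const defaults = summarize();
const custom = summarize({ retries: 0 });

console.log(clicks);   // 2
console.log(defaults); // fontSize=14 retries=3
console.log(custom);   // fontSize=14 retries=0
```

Note the last line: with `||` instead of `??`, a deliberate `retries: 0` would have been replaced by the default of 3.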
