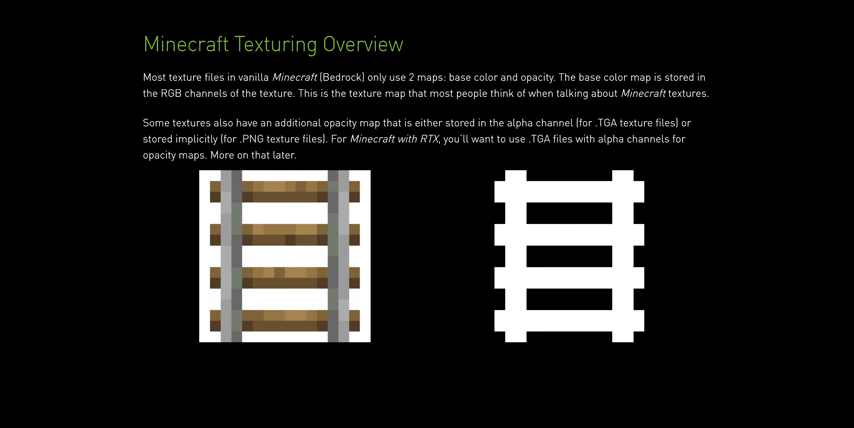

The RTX Real-time Ray Tracing Beta for Minecraft Bedrock is finally here and it looks absolutely amazing. I'm going to tell you guys all about it. But first, there are some really cool changes that have taken place under the hood to get RTX properly implemented in Minecraft. In normal Bedrock Edition Minecraft, there are two maps for every texture in the game: the base color and the opacity.

These maps contribute the colors as well as the transparency, or how see-through every block is.

However, when they moved to implement ray traced lighting, there was a pretty apparent problem. Regular Minecraft doesn't calculate light bouncing. Instead, light just spreads across any nearby surface evenly.

They could've just made every type of block bounce light in the same way. Problem is, that wouldn't have been true to the real-world behavior of materials and light.

For instance, if you shine a flashlight at a mirror, the majority of that light is gonna bounce right off and illuminate the surface that it bounces to. But, if you were to shine a light at your carpet, chances are that most of that light will end up illuminating the carpet itself, without much bouncing to other surfaces. This is because your carpet is not very metallic or reflective, and because it's very rough relative to the smoothness of a mirror.

Problem is, in the existing version of Minecraft, there is no way to define a block or a texture as reflective or rough. So for light to be able to properly bounce, the developers actually had to add four new mappings to every texture in the game:

metalness, or reflectiveness


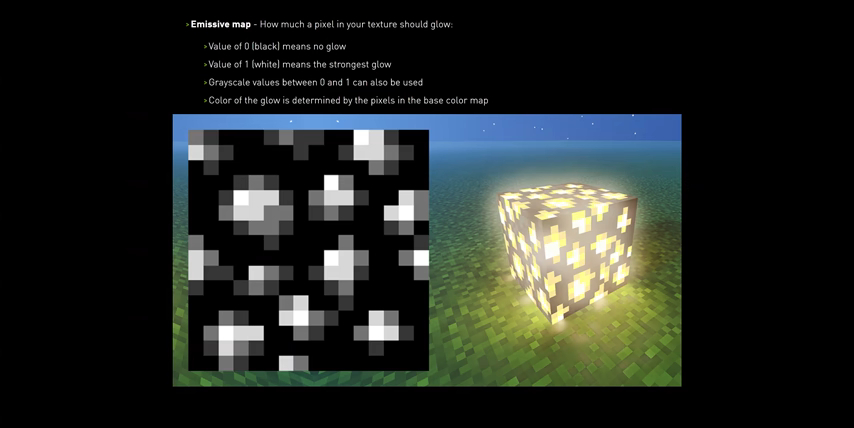

emissivity, or how much it should glow


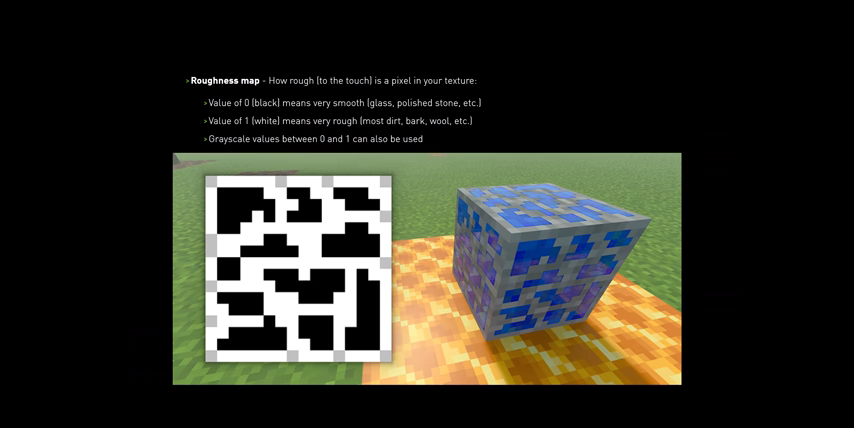

roughness, so how rough it is


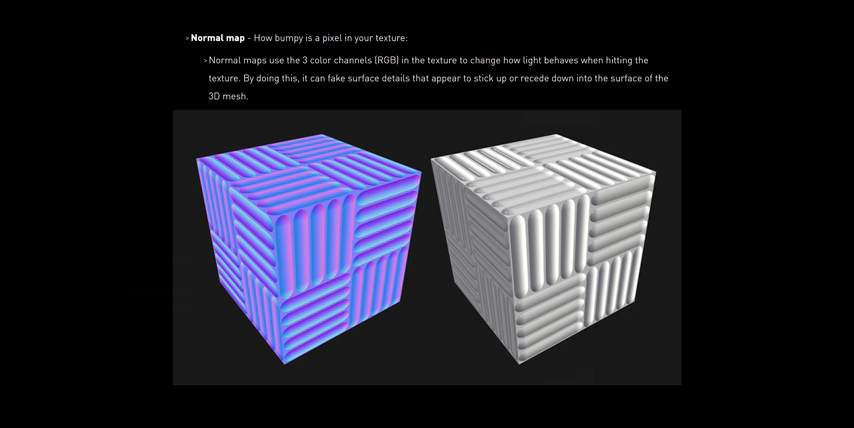

height map, where you could add artificial depth to parts of a texture in the game


With this added data, ray traced lighting can be a lot more accurate.
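To make the idea of per-texture maps a bit more concrete, here is a minimal Python sketch of what one texel of material data might carry once the four new maps are added to the two existing ones. Everything in it is an assumption for illustration: the `Material` class, its field names, the 0.0 to 1.0 value ranges, and especially the `mirror_like_fraction` formula are hypothetical and are not how Minecraft or its renderer actually stores or shades anything.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Material:
    """Hypothetical per-texel surface data (all scalar values assumed to be 0.0-1.0)."""
    base_color: Tuple[float, float, float]  # existing map: RGB color
    opacity: float                          # existing map: 1.0 = fully opaque
    metalness: float                        # new map: how reflective the surface is
    emissive: float                         # new map: how much the surface glows
    roughness: float                        # new map: 0.0 = mirror-smooth, 1.0 = very rough
    height: float                           # new map: artificial depth within the texture


def mirror_like_fraction(m: Material) -> float:
    """Toy estimate (NOT a real shading model) of how much incoming light
    leaves the surface as a sharp reflection: high for smooth, metallic
    surfaces and low for rough, non-metallic ones."""
    return m.metalness * (1.0 - m.roughness)


# Illustrative values only, loosely matching the mirror and carpet example.
mirror = Material((0.9, 0.9, 0.9), 1.0, metalness=1.0, emissive=0.0, roughness=0.05, height=0.0)
carpet = Material((0.6, 0.1, 0.1), 1.0, metalness=0.0, emissive=0.0, roughness=0.95, height=0.0)

if __name__ == "__main__":
    print("mirror:", mirror_like_fraction(mirror))
    print("carpet:", mirror_like_fraction(carpet))
```

The point is only that once each texture carries metalness and roughness values, a renderer has something to base the mirror-versus-carpet distinction on, which the base color and opacity maps alone can't express.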

What this means is that RTX support in the game comes with some caveats. Primarily, that ray tracing is not going to work on a non-RTX card, even though Nvidia has allowed this at times in the past. And, it also won't work on just any old Minecraft saved game right out of the box. You're going to need to select an RTX-compatible resource pack to enable ray traced lighting.

It's not a huge deal, it just means that for the moment, you're stuck with the sample texture packs that Nvidia and Mojang have provided. On the plus side, it does make it super easy to use RTX on maps that are ported over from the Java version of the game, which actually looks pretty sweet if we port over the lobby of our upcoming Java-edition server, by the way. There are also a couple of RTX-tailored maps, but behind the scenes, these are just regular maps with RTX resource packs specific to them bundled in.

To see what it takes to run that, we grabbed three different tiers of graphics cards. We used the same CPU across the board because, spoiler alert, it will make little to no difference due to how GPU bottlenecked we're expecting to be. We tried an experiential test, just running around, breaking blocks, and killing mobs, to get a sense of how playable the game felt on each of our graphics cards. Then we also ran benchmarks on the Nvidia-supplied Imagination Island sample map, riding around on one of the in-game trains on a predefined loop. We settled on a render distance of 16 chunks, since it's the only option that's supported with both RTX on and off. It ended up being less than a 10% performance hit compared to using the lowest render distance of 8 chunks with RTX on. Every other visual setting we left turned on.

Beginning with the lowest-end RTX-supported option, we have an RTX 2060 KO from EVGA. After loading into Imagination Island with RTX off at 1080p, we were greeted with the expected crisp, clean, 180 plus fps experience, no stutters.

Then we turned RTX on, and oof. We were both shocked and a bit relieved. Experientially, the game actually felt a bit less responsive to movements, but it was playable with no noticeable stuttering, and an average fps of 53. Although, that is less than a third of what we had with RTX off. We also noticed that when interacting with the environment, like placing or breaking blocks or opening doors, we could see the shadows being processed, which made it feel a little disingenuous to call it real-time ray tracing. It was honestly a bit jarring.

This is where another newly-introduced technology comes into play, though. DLSS 2.0 allows the game to be rendered at a lower than native resolution, and then AI-upscaled with the help of the tensor cores on RTX GPUs. It actually works really well, and we didn't notice a visual difference with it enabled versus disabled. The only problem is, that 50-ish fps number that we mentioned earlier, that was with DLSS turned on already. And if we switch it off, we're actually looking at closer to 30 fps on average, or about a sixth of our RTX off performance.
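For anyone who wants to check the arithmetic behind those "less than a third" and "about a sixth" figures, here is a small Python sketch using the RTX 2060 KO numbers quoted above. The constant and function names are made up for this example, and it assumes "180 plus fps" is exactly 180 and "closer to 30 fps" is exactly 30, so treat the results as rough.

```python
# Average frame rates quoted for the RTX 2060 KO at 1080p.
RTX_OFF_FPS = 180.0        # assumption: "180 plus fps" treated as exactly 180
RTX_ON_DLSS_FPS = 53.0     # RTX on, DLSS on
RTX_ON_NATIVE_FPS = 30.0   # assumption: "closer to 30 fps" treated as exactly 30


def frame_time_ms(fps: float) -> float:
    """Average time spent on one frame, in milliseconds."""
    return 1000.0 / fps


def share_of_baseline(fps: float, baseline_fps: float) -> float:
    """Fraction of the baseline frame rate that is retained."""
    return fps / baseline_fps


dlss_share = share_of_baseline(RTX_ON_DLSS_FPS, RTX_OFF_FPS)
native_share = share_of_baseline(RTX_ON_NATIVE_FPS, RTX_OFF_FPS)
dlss_uplift = RTX_ON_DLSS_FPS / RTX_ON_NATIVE_FPS

if __name__ == "__main__":
    print(f"RTX on + DLSS: {dlss_share:.0%} of RTX-off fps, "
          f"{frame_time_ms(RTX_ON_DLSS_FPS):.1f} ms per frame")
    print(f"RTX on, no DLSS: {native_share:.0%} of RTX-off fps, "
          f"{frame_time_ms(RTX_ON_NATIVE_FPS):.1f} ms per frame")
    print(f"DLSS uplift: {dlss_uplift:.2f}x")
```

Under those assumptions, DLSS on keeps roughly 29% of the RTX-off frame rate, DLSS off keeps one sixth of it, and turning DLSS on is worth about 1.77x on this card.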


Moving up to the RTX 2070 Super is where the experience starts to feel a lot smoother, with an average of 72 frames per second. The sluggishness we felt with the 2060 KO is completely gone, and I think it's safe to say that this is definitely the sweet spot for 1080p RTX-on Minecraft at the moment.

I think in some of the example maps, the colors in direct sunlight are probably a bit oversaturated, and some textures like water could stand to have their reflectiveness toned down.  Like, it kind of makes the game feel like one of those cheap Minecraft clones.


However, when you get into a map with some interior lighting and some windows, the god rays are insane. Or in something like this cave area, the way the light bounces around is just stunning.  Like, it really goes to show how much better RTX can look than a traditional Java-edition shader pack.


That is at least until SonicEther releases his software, Path-Traced Shaders. But dang, for now, the RTX 2070 Super is for sure the way to go if you're looking to buy a GPU for your spoiled af kids, so they can play Minecraft with real-time RTX at 1080p.

Then, there's the RTX 2080 Super, the last sort of reasonable option in the RTX GPU lineup. The improvements moving up to this card actually were surprisingly unimpressive, with an average fps of 80 compared to the 72 average on the 2070 Super.

That is technically an upgrade, but I would say it's probably not worth the money for this specific use case, at least at 1080p. But hey, since we've got a bit of head room, how about 1440p? Or even 4K? Yeah. Good luck with that.

1440p with DLSS on brings our 2080 Super right down to 61 fps, so that's usable.  And as for 4K, well, it's approaching what I would consider to be playable at around 41 fps average. But the thing is, that's not the kind of experience anyone spending 750 US dollars on a graphics card wants to have.
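As a rough way to read those 2080 Super numbers, the sketch below compares how much the output pixel count grows at each resolution against how much the average frame rate drops. It's an illustration under some assumptions: 4K is taken to mean 3840x2160, the 1080p figure of 80 fps is assumed to have been measured with DLSS on like the others, and the `RESULTS` table and `scaling` helper are names invented for this example. These are output resolutions; with DLSS the game renders internally at something lower, which this sketch doesn't model.

```python
# (width, height, average fps) for the RTX 2080 Super with RTX on.
RESULTS = {
    "1080p": (1920, 1080, 80.0),
    "1440p": (2560, 1440, 61.0),
    "4K": (3840, 2160, 41.0),  # assumption: "4K" means 3840x2160
}

BASELINE = "1080p"


def scaling(name: str) -> tuple:
    """Return (pixel_ratio, fps_ratio) for a resolution relative to 1080p."""
    base_w, base_h, base_fps = RESULTS[BASELINE]
    w, h, fps = RESULTS[name]
    pixel_ratio = (w * h) / (base_w * base_h)
    fps_ratio = fps / base_fps
    return pixel_ratio, fps_ratio


if __name__ == "__main__":
    for name in RESULTS:
        pixel_ratio, fps_ratio = scaling(name)
        print(f"{name}: {pixel_ratio:.2f}x the pixels, {fps_ratio:.0%} of the 1080p fps")
```

By this measure, 4K pushes four times the output pixels of 1080p while keeping a bit over half the frame rate, so the fps falls off more slowly than the pixel count rises.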


With all of that in mind, was it worth the wait? Well, it's not something we're going to switch development of our LTT servers to Bedrock for. But, it does look legitimately next gen in a way that I never thought I'd hear myself say about Minecraft.

As for the performance, the beta is a bit of a poor demonstration. I mean, even on a 750 US dollar graphics card, it's really only playable at 1440p max. And then, forget about getting the most out of your high refresh rate gaming display. And, while RTX has a long and proud history of massive performance penalties, normally we're talking like 30% to 50% massive, not more than that massive. So what we're hoping is that, with some optimizations, they can get it to be a little bit more performant. And if they do, honestly, I am pretty stoked to see what the community can do to make even better and more unique builds and really cool-looking experiences with the extra flexibility that RTX and Minecraft can offer.
