You wouldn't be able to connect high-speed components like graphics cards and NVMe drives to your computer without the PCI Express bus that's been a fixture on our motherboards for over a decade now.

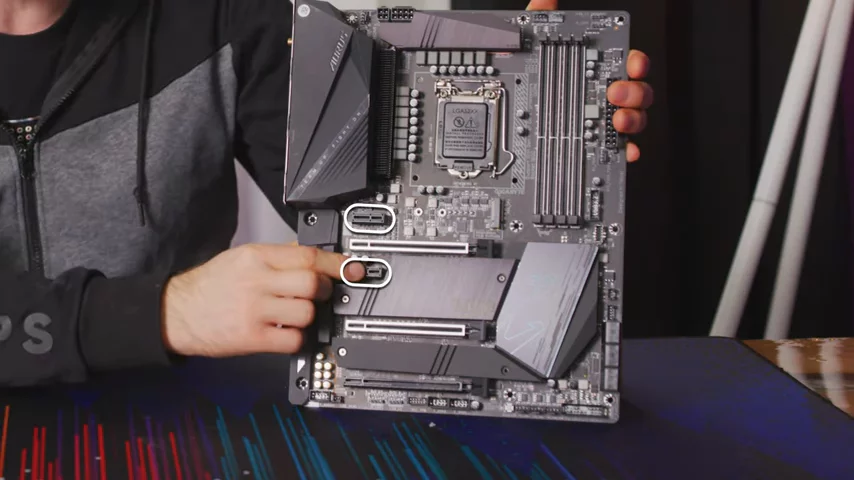

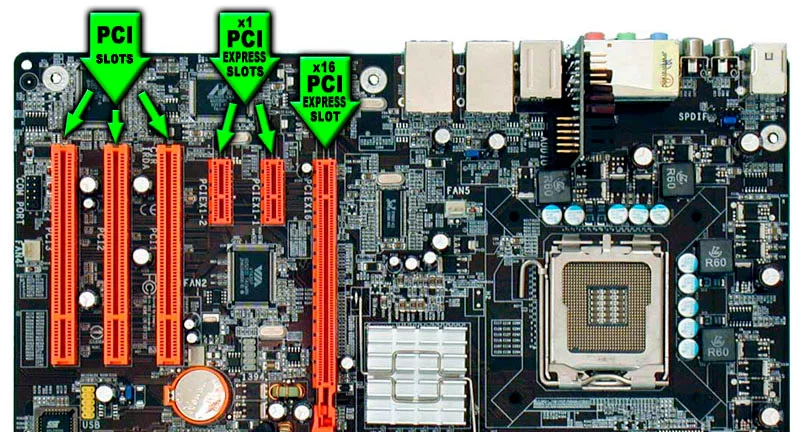

If you're not familiar, there are a few ways to connect devices via PCI Express. Most commonly, you insert an adapter card into one of the expansion slots on your motherboard. But did you know that not all PCI Express connections are the same?

You see, PCI Express can connect your devices either directly to your CPU or to the chipset, which sits between your CPU and some of your other components, like your Ethernet jack or some USB ports.

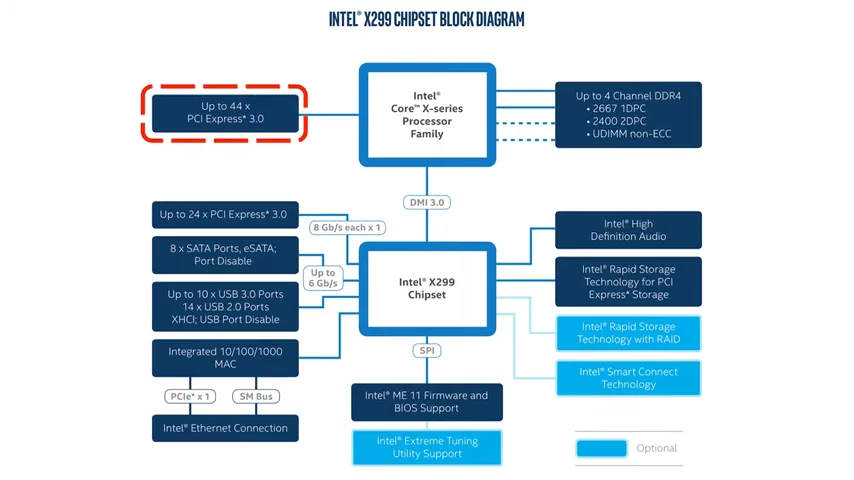

This might be a point of confusion if you've seen a motherboard that supports, say, 40 PCI Express lanes, but its CPU can only run one graphics card at the full x16 width or two cards at x8 each. I mean, shouldn't all those PCI Express lanes let you plug in more than just one or two adapter cards?

This is where the difference between chipset versus CPU PCI Express lanes comes into play. Most consumer CPUs will have 16 or 20 lanes running directly off of them, which you should be using for your graphics card for the best performance. But then, why is that better than using the lanes that come off the chipset?

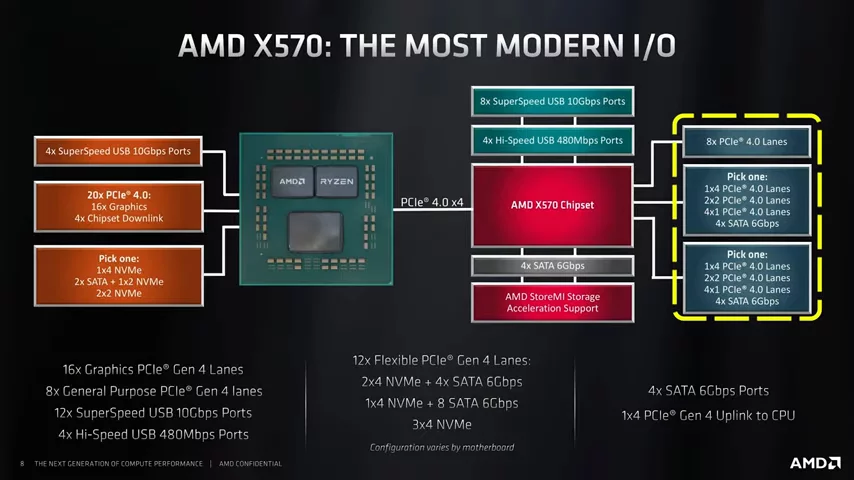

Well, one reason is that routing data through the chipset adds an extra hop, which introduces latency and can slow down your performance. Perhaps a bigger reason, though, is the link that connects the chipset back to the CPU. You see, although you'll often see diagrams showing 16, 20, or even more PCI Express lanes coming off the chipset, the connection it has to the CPU is actually quite narrow: it might be only four lanes wide.
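
To put "four lanes wide" in perspective, here's a rough back-of-the-envelope sketch. It assumes the chipset link runs at PCI Express 3.0 speeds (8 GT/s per lane with 128b/130b encoding); that generation and the helper name `link_bandwidth_gb_per_s` are illustrative assumptions, and the numbers ignore packet and protocol overhead, so real throughput will be somewhat lower.

```python
# Raw transfer rate (GT/s) and line-encoding efficiency per PCIe generation.
# These figures ignore packet/protocol overhead, so real throughput is lower.
PCIE_GENERATIONS = {
    1: (2.5, 8 / 10),      # 8b/10b encoding
    2: (5.0, 8 / 10),      # 8b/10b encoding
    3: (8.0, 128 / 130),   # 128b/130b encoding
    4: (16.0, 128 / 130),  # 128b/130b encoding
}


def link_bandwidth_gb_per_s(gen: int, lanes: int) -> float:
    """Approximate one-direction bandwidth of a PCIe link, in gigabytes per second."""
    rate_gt_s, efficiency = PCIE_GENERATIONS[gen]
    # Each transfer moves one bit per lane; divide by 8 to convert bits to bytes.
    return rate_gt_s * efficiency * lanes / 8


if __name__ == "__main__":
    uplink = link_bandwidth_gb_per_s(3, 4)     # assumed x4 PCIe 3.0 chipset link
    gpu_slot = link_bandwidth_gb_per_s(3, 16)  # x16 PCIe 3.0 graphics slot
    print(f"x4 uplink: {uplink:.2f} GB/s, x16 slot: {gpu_slot:.2f} GB/s")
```

Under those assumptions, the entire chipset shares roughly 3.9 GB/s back to the CPU, about a quarter of what a single x16 graphics slot gets on its own.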

Chipsets provide lots of lanes to other components and peripherals because of how many things connect to them: firmware, SATA, audio, Ethernet, USB devices. But these are all either relatively low-bandwidth or very unlikely to be fully utilized at the same time, so having them bottlenecked as they send data to the CPU isn't all that big of a deal. That limited bandwidth can hurt you, though, if you try to connect something that moves considerably more data, such as a graphics card, while you're also trying to use all that other stuff.
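
Here's a toy model of that bottleneck. The per-device peak figures and the uplink value are hypothetical round numbers chosen for illustration (not measurements), and `uplink_load` is a made-up helper: it simply adds up what the active devices want and divides by what the chipset link can carry.

```python
from typing import Iterable, Mapping

# Hypothetical peak demands in GB/s for devices behind the chipset.
# Illustrative round numbers only, not measurements of real hardware.
CHIPSET_DEVICES = {
    "sata_ssd": 0.55,
    "gigabit_ethernet": 0.125,
    "usb_drive": 0.45,
    "audio": 0.001,
}

# Assumed uplink: roughly an x4 PCI Express 3.0 link (about 3.94 GB/s).
UPLINK_GB_S = 3.94


def uplink_load(demands: Mapping[str, float], active: Iterable[str],
                uplink_gb_s: float = UPLINK_GB_S) -> float:
    """Fraction of the chipset uplink used if every `active` device runs at peak.

    A value above 1.0 means the devices want more than the link can carry.
    """
    return sum(demands[name] for name in active) / uplink_gb_s


if __name__ == "__main__":
    typical = uplink_load(CHIPSET_DEVICES, ["gigabit_ethernet", "audio"])
    everything = uplink_load(CHIPSET_DEVICES, CHIPSET_DEVICES)
    # Add a hypothetical graphics card wanting a full x16 PCIe 3.0 link (~15.75 GB/s).
    with_gpu = uplink_load({**CHIPSET_DEVICES, "gpu": 15.75},
                           [*CHIPSET_DEVICES, "gpu"])
    for label, load in [("typical", typical), ("everything", everything),
                        ("with GPU", with_gpu)]:
        print(f"{label}: {load:.0%} of uplink")
```

With these made-up numbers, even every peripheral running flat out stays under the link's capacity, while a graphics card on the same path asks for several times what the uplink can deliver.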

The long x16 PCI Express slots on your motherboard are typically connected directly to the CPU, so you don't have to worry too much about accidentally connecting a graphics card to your chipset (though if your board has a third full-length slot, it's often wired to the chipset, so check the manual). But because those slots, usually two of them, share the same 16 lanes, you can't run two cards in them at full speed; each drops to x8 unless you have a higher-end CPU that supports more lanes.
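
A simplified sketch of that lane sharing: it assumes the CPU's slot lanes are split evenly and that each card gets the widest standard link width that fits in its share. Real boards hard-wire the allowed splits (commonly x16 alone, or x8/x8), and the 32-lane "higher-end CPU" in the example is hypothetical.

```python
from typing import List

# Standard link widths a PCIe slot can run at, widest first.
LINK_WIDTHS = (16, 8, 4, 2, 1)


def slot_widths(cpu_slot_lanes: int, cards: int) -> List[int]:
    """Simplified model: share the CPU's slot lanes evenly among `cards` graphics cards.

    Real motherboards fix the possible splits in hardware, so this only
    approximates what a given board does.
    """
    if cards <= 0:
        return []
    per_card = cpu_slot_lanes // cards
    # Each card gets the widest standard width that fits in its share of lanes.
    for width in LINK_WIDTHS:
        if width <= per_card:
            return [width] * cards
    raise ValueError("not enough CPU lanes for that many cards")


if __name__ == "__main__":
    print(slot_widths(16, 1))  # mainstream CPU, one card -> [16]
    print(slot_widths(16, 2))  # mainstream CPU, two cards -> [8, 8]
    print(slot_widths(32, 2))  # hypothetical higher-end CPU -> [16, 16]
```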

Additionally, if you have a standard 16-lane Intel CPU, your graphics card already takes all of the CPU's lanes, so an NVMe drive installed in an M.2 slot on your board is going to be routed through the chipset. Now, you shouldn't see a ton of performance loss, but the aforementioned latency, plus any heavy network, SATA, or USB traffic sharing those four lanes back to the CPU, could result in some slowdowns, depending on your workload.

On a related note, this is actually why some SATA ports get disabled when you plug in an M.2 drive: those ports are also connected to the chipset, and they draw on the same pool of chipset lanes as the M.2 slot. The good news, though, is that if you're trying to squeeze every ounce of performance out of a drive, Intel's upcoming 11th-gen desktop CPUs are supposed to have 20 lanes coming directly off the CPU, four of which are meant to support an M.2 drive. And if you're on a Ryzen platform, you're in luck, because you already have 20 or more lanes coming off the CPU.
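
The lane budget behind that difference can be sketched in a few lines. This assumes a x16 graphics card and a x4 NVMe drive, uses the CPU lane counts mentioned above, and `nvme_attachment` is a hypothetical helper; whether a given board actually wires its M.2 slot to the CPU is decided by the motherboard design, so this only checks whether the lanes exist.

```python
# Lanes a graphics card and an M.2 NVMe drive typically use.
GPU_LANES = 16
NVME_LANES = 4


def nvme_attachment(cpu_lanes: int) -> str:
    """Simplified lane budget: is there room for an x4 NVMe drive after an x16 GPU?

    Returns "cpu" if the CPU has enough spare lanes, otherwise "chipset".
    """
    if cpu_lanes - GPU_LANES >= NVME_LANES:
        return "cpu"
    return "chipset"


if __name__ == "__main__":
    # Lane counts follow the text above; the platform labels are illustrative.
    for platform, lanes in [("16-lane Intel CPU", 16),
                            ("20-lane Intel 11th gen", 20),
                            ("Ryzen (20+ lanes)", 20)]:
        print(f"{platform}: NVMe drive on {nvme_attachment(lanes)} lanes")
```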

It's kind of like chip manufacturers widening the highway for us, but without the punitive speed limits.