RTX 3070 is going to outperform a 2080 Ti at less than half the price, $499. Is Nvidia insane? I mean, even with all of the leaks indicating that Ampere was going to be super powerful, I was in no way ready for this.

Obviously I'm not ready to hand Nvidia their crown until we see the new cards for ourselves. But based on what they've shown, we are in for one heck of a ride. They announced, well, a lot, and we're going to get to all of it, but the big one is: Ampere is real, and Ampere is an absolute monster.

As it turns out, the recent leaks were accurate in many if not most ways. Where Turing-based RTX 2000 GPUs were manufactured on TSMC's 12 nanometer FinFET process, which was actually derived from their 16 nanometer process, Nvidia instead partnered with Samsung on a custom 8 nanometer process for the RTX 3000 series. And the difference here is much bigger than we got moving from Pascal to Turing.

Here's the thing: for over three years now, GeForce GPUs have had in the neighborhood of 20 to 25 million transistors per square millimeter. Ampere? Around 60 million per square millimeter. That's over double, and breaking with what's become their habit lately, they didn't just use this increase in density to lower their own costs and keep selling similar performance with some new features tacked on. The RTX 3080 boasts over 28 billion (that's with a B) transistors.

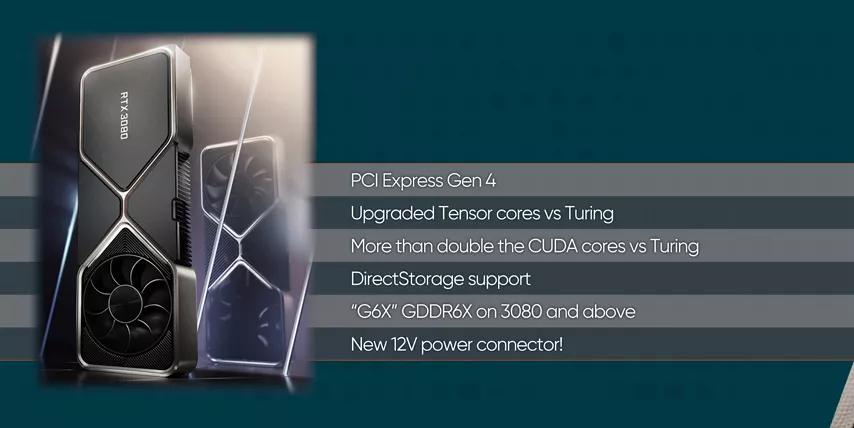

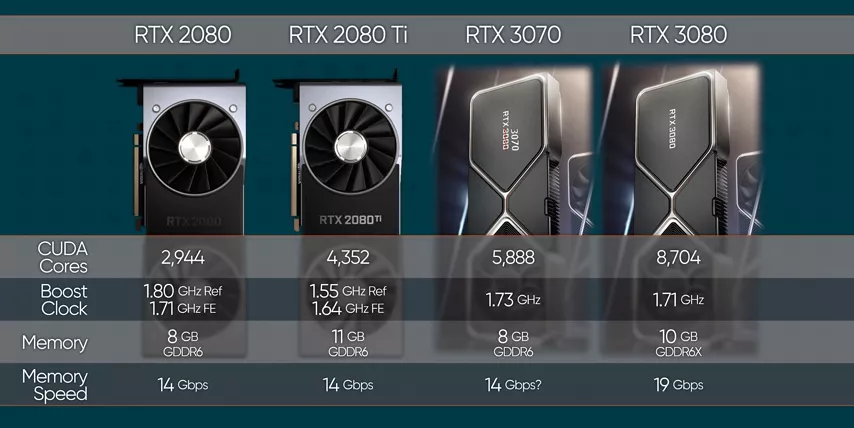

What does that get us? Well, for starters, PCI Express Gen 4, upgraded Tensor cores and, oh, casually more than double the CUDA cores across the board. And here's the kicker: that's not compared to the cards that each of the 3000 series GPUs replaces, that's compared to the previous generation's step up.

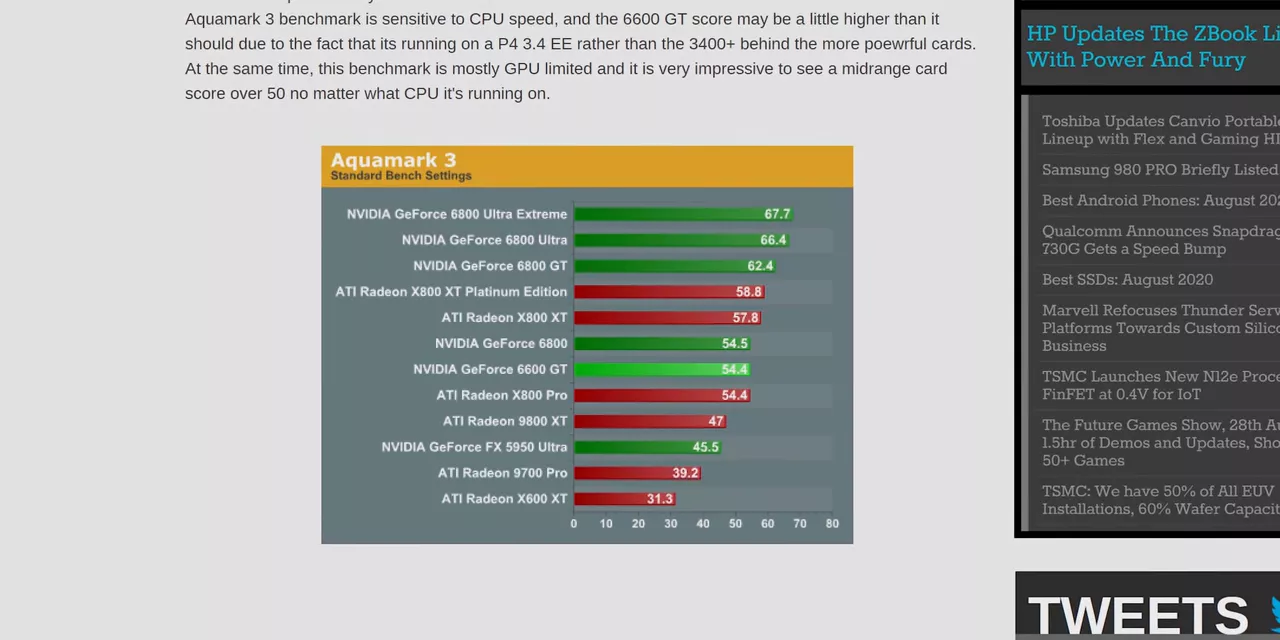

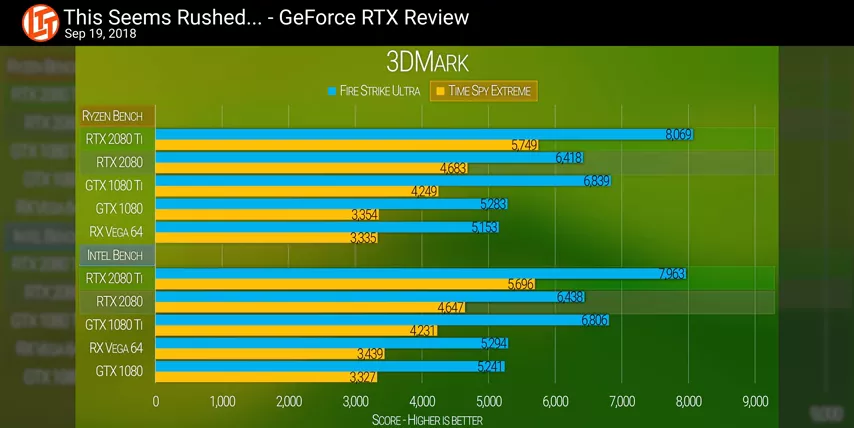

I'm not kidding: on paper, every Ampere card that was announced, even the 3070, is faster than a 2080 Ti. To find the last time that this happened, a third-rung-down GPU being faster than the previous flagship, I had to dig pretty deep through the archives.

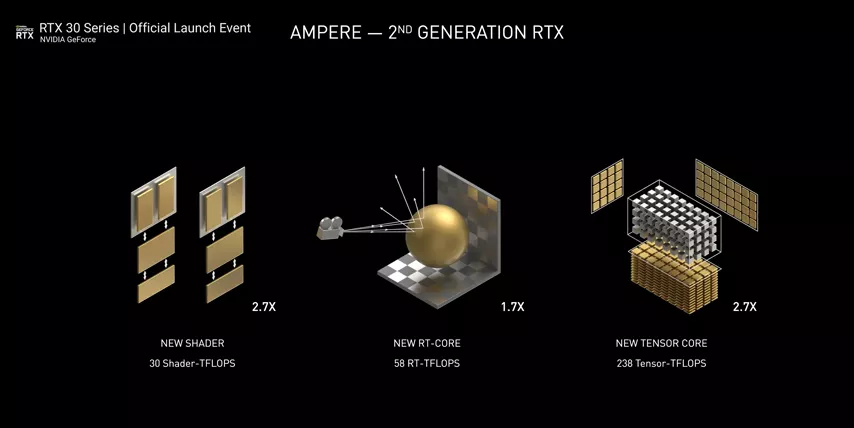

Here's a review from AnandTech showing the 6600 GT beating the FX 5950. That was in 2004. And according to Nvidia, things are even better than they seem. They claim we are getting a 1.9 times performance per watt improvement, 2.7 times the shader performance, 1.7 times the ray tracing performance and 2.7 times the Tensor core performance, which in the real world translates to one metric butt-ton.

Nvidia showed another Marbles fully ray traced graphics demo, but while Turing rendered at 720p, Ampere managed to get 1440p with more sim complexity. That's huge. And while, to be fair, they had a little help from DLSS and from a new learning algorithm that derives missing color data from an incomplete image to improve performance, optimizations as a concept are nothing new, and the results here speak for themselves.
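To put the 720p to 1440p jump in numbers, here is a quick sketch of the pixel math. It assumes the usual 16:9 meanings of those labels (1280x720 and 2560x1440), which the presentation did not spell out, and it says nothing about how much of the gain came from DLSS.

```python
# Assumption: "720p" and "1440p" refer to the common 16:9 resolutions.
RESOLUTIONS = {
    "720p": (1280, 720),
    "1440p": (2560, 1440),
}


def pixel_count(name):
    """Return the number of pixels in one frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


ratio = pixel_count("1440p") / pixel_count("720p")
print(f"1440p pushes {ratio:.0f}x the pixels of 720p per frame")
```

Doubling both dimensions quadruples the pixels per frame, which is why the demo comparison is a bigger deal than the labels suggest.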

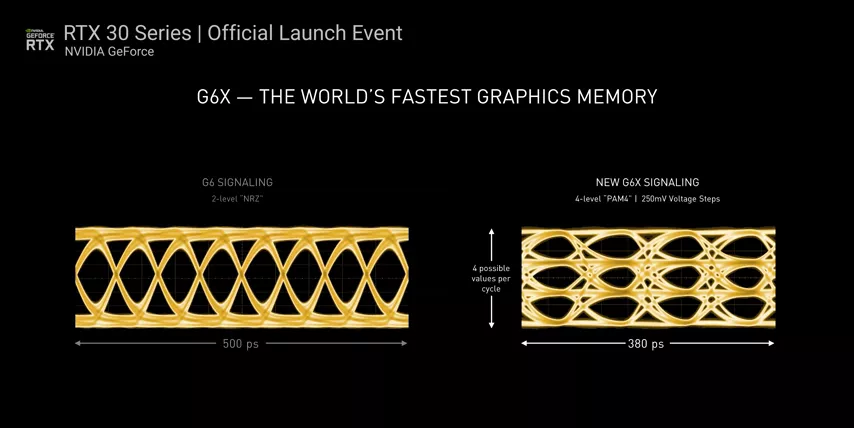

Nvidia also got a new memory signaling technique, which carries four possible values per cycle compared to only two, effectively doubling the amount of memory bandwidth while also increasing the frequency significantly. So with the RTX 3080, you're gonna get 10 gigs, and it's gonna run 320 watts of rated power, with the RTX 3070 getting 8 gigs of more traditional GDDR6 at a mere 220 watts. To put that in context, a 2080 Ti was only 250 watts.
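The "four possible values instead of two" idea is easier to see with a toy encoder. This is only a sketch: four-level signaling of this kind is generally called PAM4, but the bit-to-level mapping below is an assumption made for illustration, not something taken from Nvidia's presentation.

```python
# Illustrative only. The level tables are assumptions for this sketch.
TWO_LEVEL = {"0": 0, "1": 1}                          # 1 bit per symbol
FOUR_LEVEL = {"00": 0, "01": 1, "11": 2, "10": 3}     # 2 bits per symbol


def encode_two_level(bits):
    """One symbol per bit: each symbol is one of two signal levels."""
    return [TWO_LEVEL[b] for b in bits]


def encode_four_level(bits):
    """One symbol per bit pair: each symbol is one of four signal levels."""
    if len(bits) % 2:
        raise ValueError("four-level encoding needs an even number of bits")
    return [FOUR_LEVEL[bits[i:i + 2]] for i in range(0, len(bits), 2)]


payload = "11010010"
two = encode_two_level(payload)
four = encode_four_level(payload)
print(len(two), "symbols needed with two levels")
print(len(four), "symbols needed with four levels")
```

The same eight bits take half as many symbols on the wire, so at an equal symbol rate the link moves twice the data.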

And Nvidia claims that the RTX 3070, even though it consumes less power, is faster than the $1,200 2080 Ti, with the new RTX 3080 doubling the performance of the 2080, and this is at $499 and $699 respectively. There's nothing else I can say. I'm absolutely mind blown. They even light up.
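For a sense of scale, here is a rough sketch comparing the two cards using only the prices and rated wattages quoted in this video. It deliberately leaves performance out, since those numbers are Nvidia's claims and not measurements.

```python
# Prices and rated power as quoted in the video.
cards = {
    "RTX 2080 Ti": {"price_usd": 1200, "rated_watts": 250},
    "RTX 3070": {"price_usd": 499, "rated_watts": 220},
}


def ratio(new, old, key):
    """How the new card's figure compares to the old card's, as a fraction."""
    return cards[new][key] / cards[old][key]


price_ratio = ratio("RTX 3070", "RTX 2080 Ti", "price_usd")
power_ratio = ratio("RTX 3070", "RTX 2080 Ti", "rated_watts")
print(f"3070 costs {price_ratio:.0%} of a 2080 Ti")
print(f"3070 is rated at {power_ratio:.0%} of a 2080 Ti's power")
```

If the performance claim holds up in reviews, that is the "less than half the price" part checking out with room to spare.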

But the question is, why? Why, after all these years of driving GPU prices up, has Nvidia decided to bestow upon us these benevolent prices? We've got a couple of theories. One is that RTX 2080 and 2070 sales were something of a disappointment. I mean, it's not that they were terrible cards, they just weren't a very compelling upgrade for anyone who already owned a high-end 1000 series.

So, looking back in whatever the past version of a crystal ball is, it seems like Nvidia priced Turing high due to uncertain yields with TSMC, and then hoped that RTX ray tracing would be enough to carry it to the new price point. Now, because Samsung has worked with Nvidia to make this custom 8 nanometer process, presumably it's more reliable. That means that despite the cost per wafer almost certainly being higher, as it tends to be as we keep shrinking the process, they're getting more usable chips out of each wafer, working out to a much lower cost per chip, which is great for consumers. I think it's fair to say that this is shaping up to be an absolute unicorn of a GPU release.
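The yield argument is really just division, so here is a minimal sketch of it. Every number below is made up purely for illustration; real wafer costs, die counts and yields for either process are not public in this video.

```python
def cost_per_good_die(wafer_cost_usd, dies_per_wafer, yield_fraction):
    """Wafer cost spread over only the dies that actually work."""
    good_dies = dies_per_wafer * yield_fraction
    return wafer_cost_usd / good_dies


# Hypothetical numbers, NOT real figures for TSMC or Samsung.
older_process = cost_per_good_die(6000, dies_per_wafer=80, yield_fraction=0.5)
newer_process = cost_per_good_die(8000, dies_per_wafer=80, yield_fraction=0.8)

print(f"older process: ${older_process:.2f} per usable chip")
print(f"newer process: ${newer_process:.2f} per usable chip")
```

In this made-up case the newer wafer costs a third more, but better yield still brings the cost per usable chip down, which is the shape of the theory above.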

And there's also another angle to the pricing. With launch dates set for the end of Q3 and beginning of Q4, it's pretty clear who Nvidia sees as the real competition for the 3000 series. And it's not AMD, or at least not directly anyway. It's Sony and Microsoft, with the Xbox Series X and PlayStation 5. And by getting out ahead of those launches, Nvidia seems to be trying to steal some of the thunder, hopefully preventing gamers from being lured away from PC, where Nvidia dominates, over to consoles, where they... well, I don't know, I guess there's Nintendo Switch.

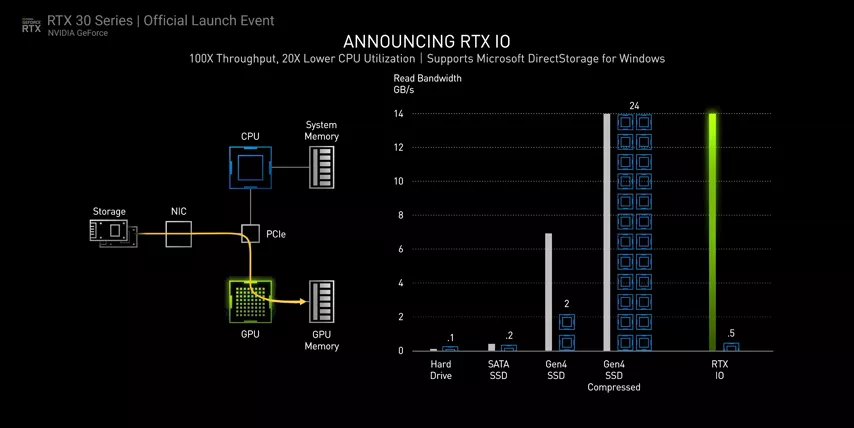

One big hint here is that, just like the PS5, the RTX 3000 GPUs have the ability to decompress data directly from an SSD attached to the system using DirectStorage. This means load times can be much shorter and the CPU doesn't need to get bogged down decompressing the gigantic assets that make up today's game worlds. Just like on the PlayStation 5, then, developers who leverage this technology can achieve more seamless loading and asset streaming than was previously possible. It also means that NVMe and even PCI Express Gen 4 might actually become useful for gamers, where load times are notoriously CPU bound in spite of the storage speed of modern systems.

Nvidia is also hyper-focused on content creation here, looking to lure streamers and machinima enthusiasts with Nvidia Broadcast and Nvidia Omniverse Machinima. Nvidia Broadcast includes a number of tools to apply effects to the microphone input, camera and speakers using Tensor cores, in what looks like a continuation of what RTX Voice started. So in addition to the noise removal that was possible before, we can now, without a green screen, automatically remove backgrounds and apply a blur effect or backdrop and more, with what looks like a very convincing effect compared to current solutions. Nvidia Omniverse Machinima, or NOM, I guess, lets you import game libraries and tools, apply materials, physics simulations, poses captured from webcams, automatic facial animations from recorded audio and more to create a wholly unique scene for doing your own machinima. It's kind of like Ansel, except that you get full control over the scene instead of just the camera. They provided a pretty great looking example of a short clip of a siege to give us an idea of what can be accomplished, and quite frankly, I wouldn't be surprised if developers or even animators end up drafting scenes this way. It's pretty cool.

So then, RTX 3000 is much less of a middle finger directly to Radeon Technologies Group and much more of a middle finger to the consoles. Which isn't to say, though, that Nvidia hasn't done some extra credit work against RTG as well.

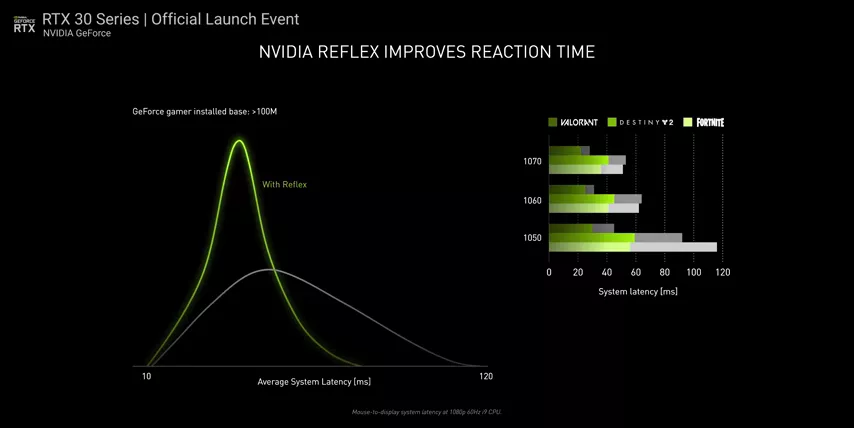

Do you remember Radeon Anti-Lag, and how Nvidia originally claimed that it was just a tweak that users could already do to their drivers, and then realized, oops, it's actually something more, and then released Nvidia Ultra-Low Latency? Well, now they're putting the cherry on top with what they're calling Nvidia Reflex, a driver path optimization technique that should be available to all GTX 1000 and newer users to reduce total system latency by up to roughly half. Now, the exact mechanisms that they're exploiting to do this are currently unknown, but presumably there's a cost of some sort somewhere, and you'll probably lose a bit of driver level control in the process.

Finally, there's the one more thing. RTX 3090 is the formal name of this generation's Titan. But Jensen lovingly referred to it as the BFGPU. It sucks back a rated 350 watts, hence the fancy new 12-pin power connector, has over 10,000 CUDA cores and 24 gigs of GDDR6X memory, and comes in at $1,499. Meaning it's certainly priced like a Titan, but unlike the last couple of generations of Titans, it also appears to be specced like a Titan, and it might even be worth that obscene price tag. They rolled out an LG 8K OLED TV to game on and demonstrated 8K 60 FPS gameplay using DLSS and RTX. Not only did the beefed up Tensor cores appear to handle 8K upscaling without breaking a sweat, the RTX 3090 can apparently even record 8K HDR gameplay with ShadowPlay. In fact, there are bits and pieces in the whole presentation that allude to upgrades across the board. AV1 codec support for decode is now available, and NVENC is presumably no different on the rest of the Ampere cards, making 4K HDR ShadowPlay a thing now. To go with it, it looks like we're finally getting HDMI 2.1 and probably more, but we can figure all of that out in our review. For now, all that's left to say is: boy, is it ever good to see the effects of more competition in the gaming hardware space, even if it came from a bit of an unexpected direction.
