Who would believe that Intel is the bang for the buck king? But wait, there's more. With the release of the 13th gen Raptor Lake desktop CPUs, they're also the gaming performance king, making Ryzen 7000's reign the shortest in recent memory.

The Architecture

While AMD kicked off this generation with some big platform changes, Intel has remained on its LGA 1700 socket, where it has had a solid year behind the scenes to refine its hybrid architecture on what is, by now, a relatively mature platform. That isn't to say they aren't bringing some big gains.

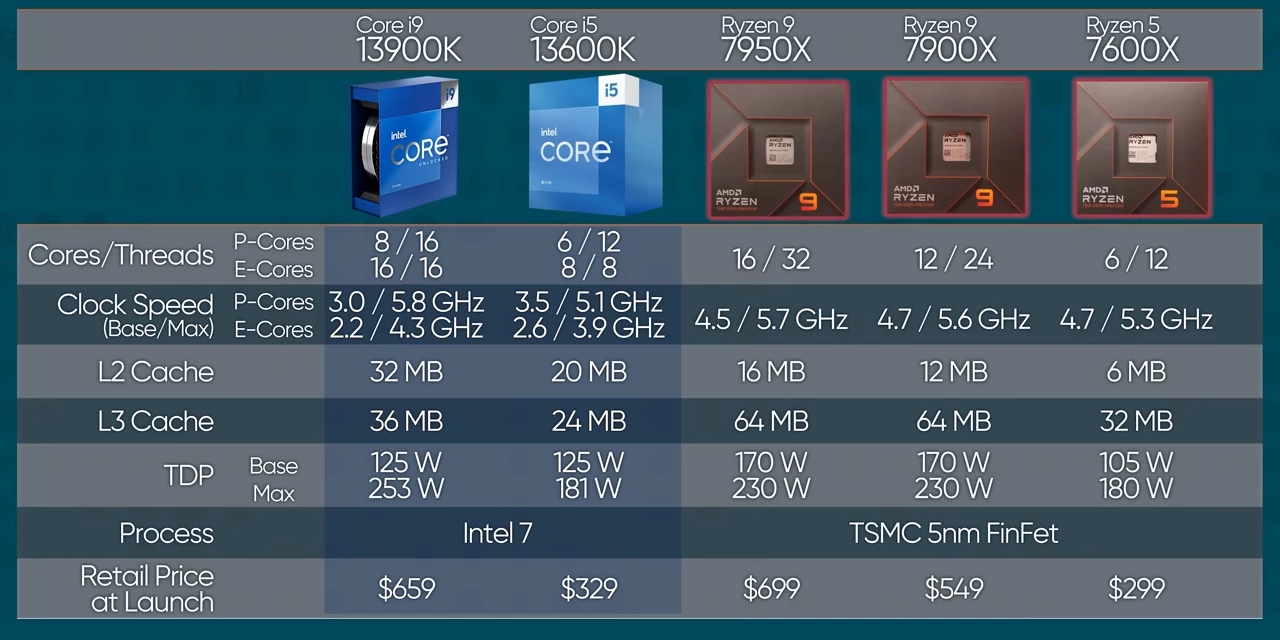

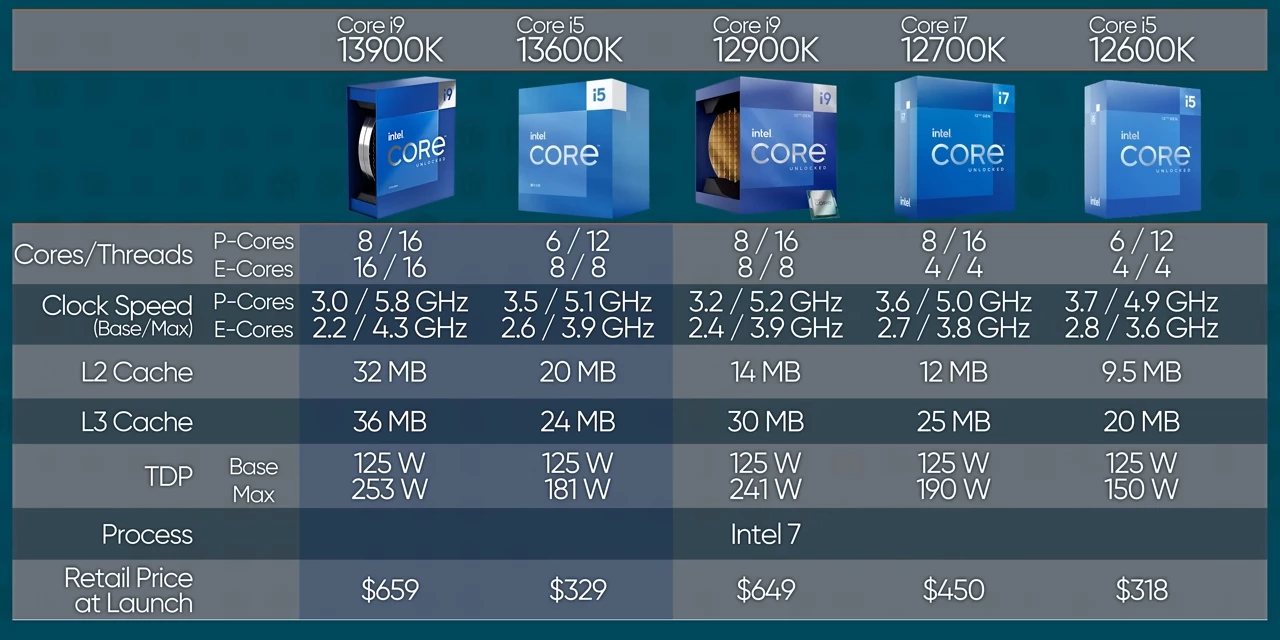

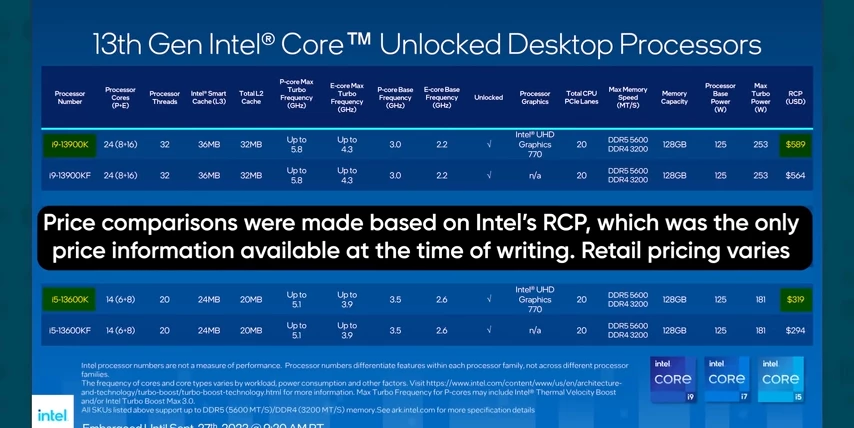

First up are the improvements to their Intel 7 manufacturing process, which contributed to a massive bump in core counts compared to last generation. Both the 13900K and the 13600K got double the number of efficiency, or E, cores, and the entire lineup saw big bumps in on-die cache, which is great for workloads like gaming. And they boosted clock speeds too.

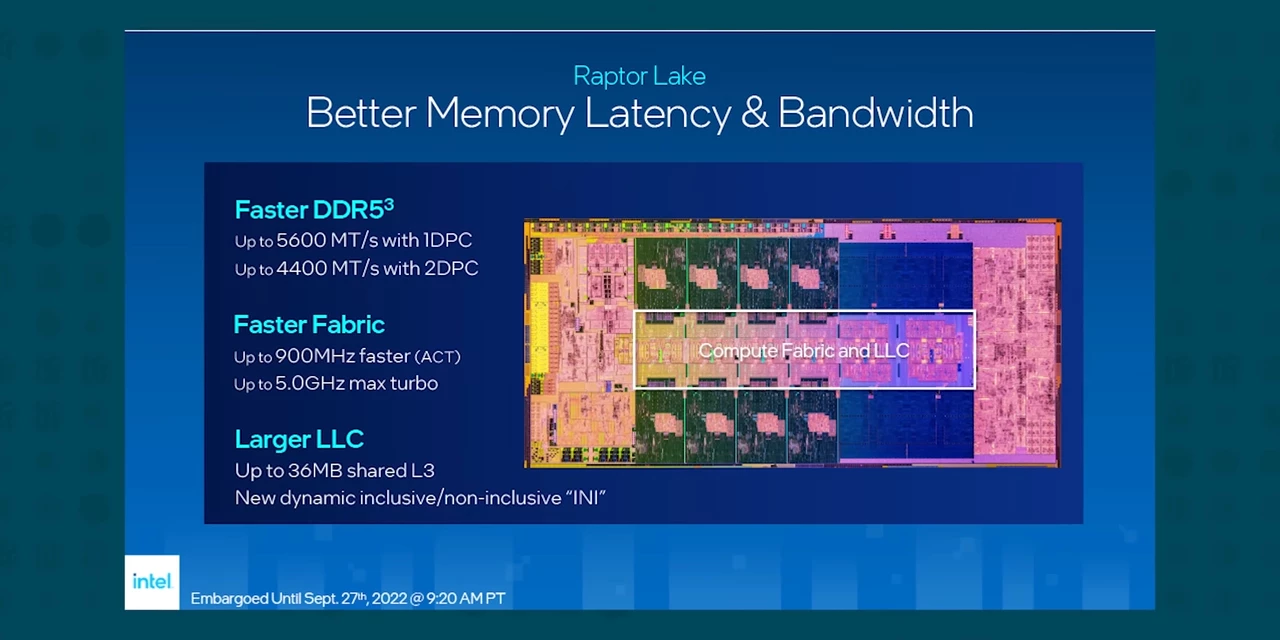

On that note, the next big improvement is to memory compatibility. Intel has moved from official support for a paltry DDR5-4800 all the way up to DDR5-5600, with XMP extending that to a whopping 7,200 megatransfers per second, and that is out of the box.
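To put those memory speeds in perspective, here is a rough sketch of theoretical peak bandwidth. It assumes a typical dual-channel desktop setup with a 64-bit (8-byte) data path per channel; the function name and defaults are illustrative, and real-world throughput will land below these ceilings.

```python
def peak_bandwidth_gb_s(mega_transfers_per_s: int,
                        bytes_per_transfer: int = 8,
                        channels: int = 2) -> float:
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes).

    Assumes 8 bytes per transfer per channel and two channels,
    which is the usual desktop configuration.
    """
    bytes_per_second = mega_transfers_per_s * 1_000_000 * bytes_per_transfer * channels
    return bytes_per_second / 1e9


# The three speeds mentioned in the text.
for speed in (4800, 5600, 7200):
    print(f"DDR5-{speed}: {peak_bandwidth_gb_s(speed):.1f} GB/s peak")
```

Under those assumptions, the jump from 4,800 to 7,200 megatransfers per second is a 50% increase in the theoretical ceiling.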

From talking with memory manufacturers, we are expecting to see even faster memory kits in the future that could benefit from Raptor Lake's second generation DDR5 controller.

The Chipset

Their updated Z790 chipset has some tricks up its sleeve as well, giving 13th gen chips access to more PCI Express Gen 4 lanes for high speed storage and additional USB 20 gigabit per second ports, but that's not the best thing about it to be honest. The best thing about it is you don't have to buy it.

13th gen CPUs are backwards compatible with 600 series motherboards after a BIOS update, and they're compatible with DDR4 memory, dramatically reducing the overall platform cost for upgraders, making Intel the value play this generation, but only if it has the performance to keep up with AMD. So how fast is it?

Our testing setup

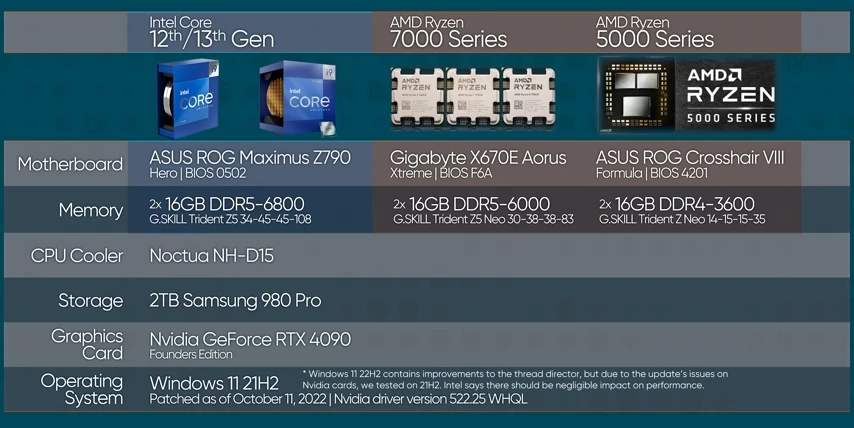

To help each platform put its best foot forward, we paired them with the best kits of RAM as recommended by each manufacturer. We also updated our bench to the brand new and beastly RTX 4090. So we're saying bye-bye to bottlenecks and hello to some spicy numbers.

Gaming Benchmarks

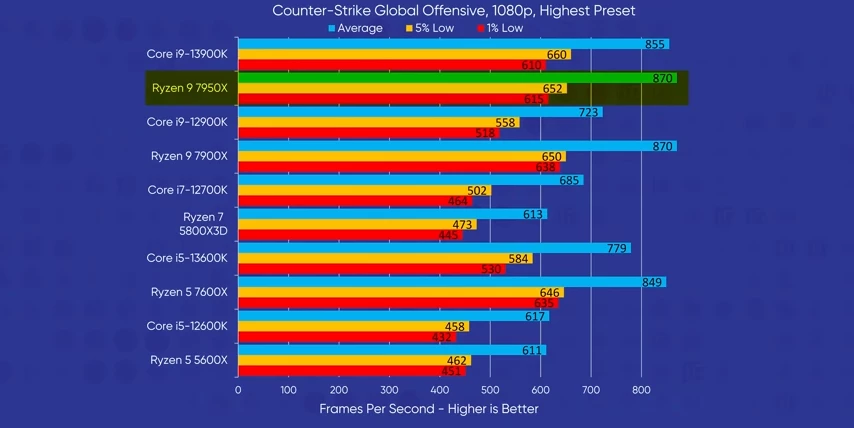

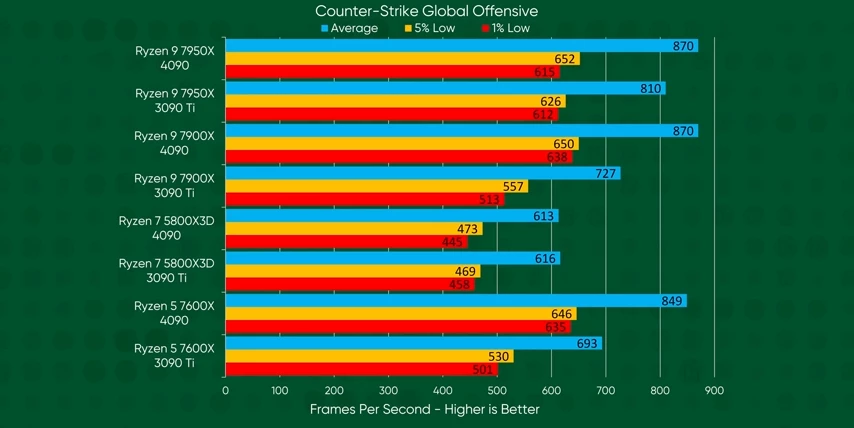

At 1080p, which we used for most of our benchmarking to amplify CPU bottlenecks, we saw AMD rip outta the gate with a narrow win in "CS:GO." But I mean, with the top three CPUs all bottlenecked, it's not really a meaningful win. Notable here, though, is the 13600K leading the last gen flagship, the 12900K. Could this be a trend?

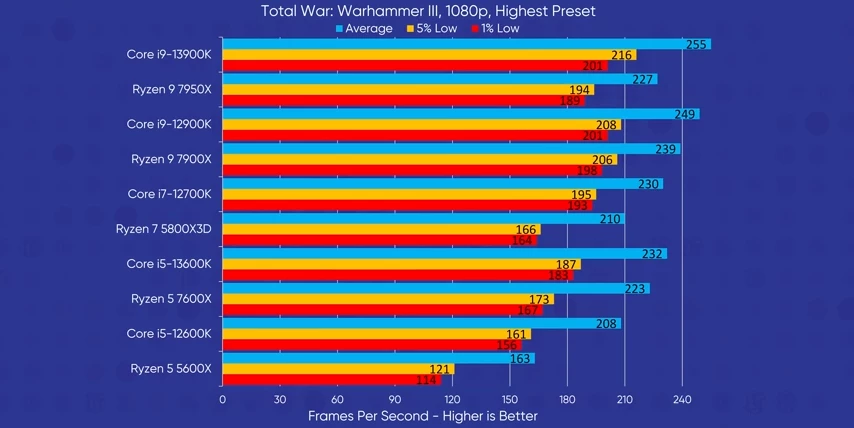

Well, "Total War" says yes. Again, the 13600K just edges past the 12900K, and the 13900K just barely loses to the AMD 7950X. Frankly, the most notable thing here is just how far behind the Ryzen 5 5600X is.

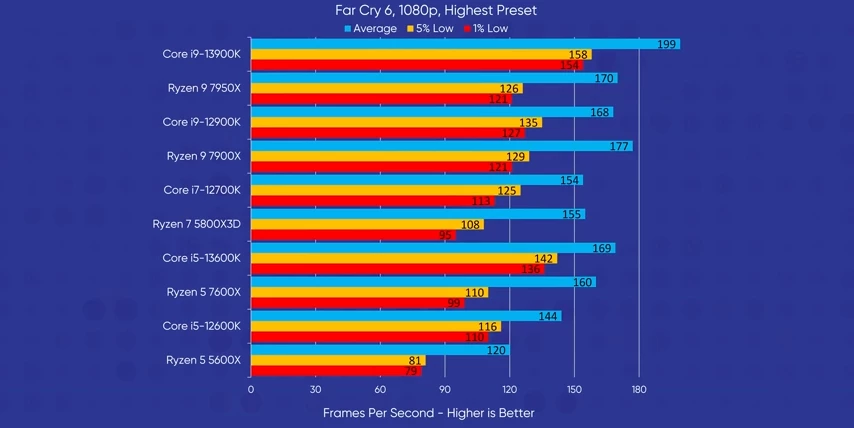

And it only falls further behind in "Far Cry 6," where Intel gains their first big win, topping the chart with a 12% lead in average frame rates, but also big wins across the board in our minimums. The game is just more stable on Intel.

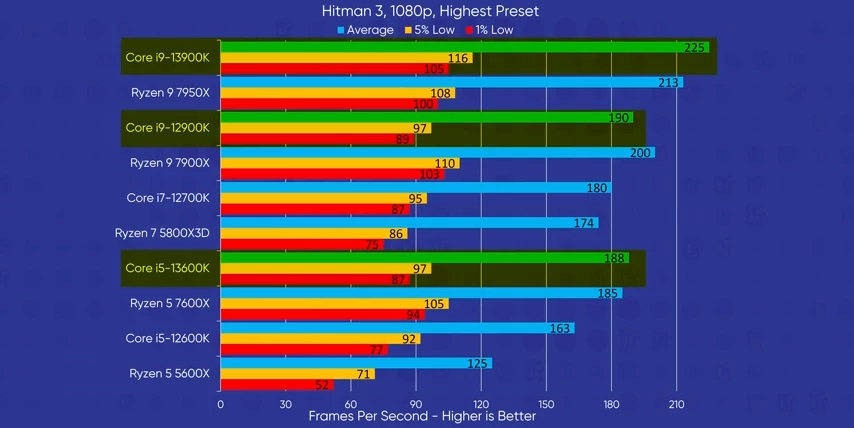

And in "Hitman 3," it just feels like we've been playing through the same graphs over and over again, trying to find different ways to kill AMD.

Factory building game "Factorio" meanwhile, guzzles L3 cache like a frat boy on spring break, allowing AMD to dominate.

The next four games were tested at three resolutions, 1080p, 1440p, and 4k.

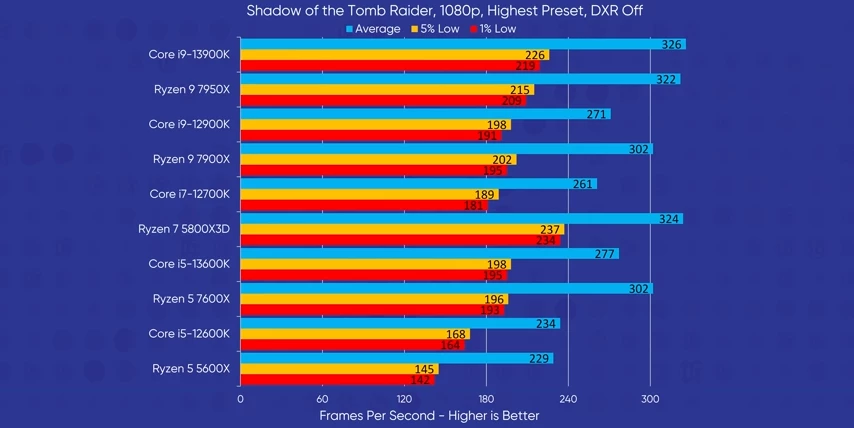

Let's start with our old friend, "Tomb Raider." At 1080p, the 5800X3D finally shows us what we know it can do. And while it's very much AMD's game with their 7600X keeping a sizable gap over the 13600K, it's the 13900K that takes the cake.

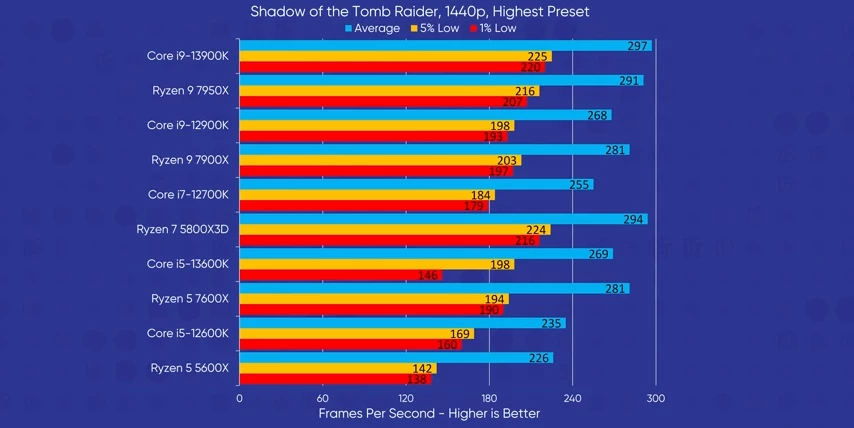

Moving up to 1440p, the rankings stay pretty much identical.

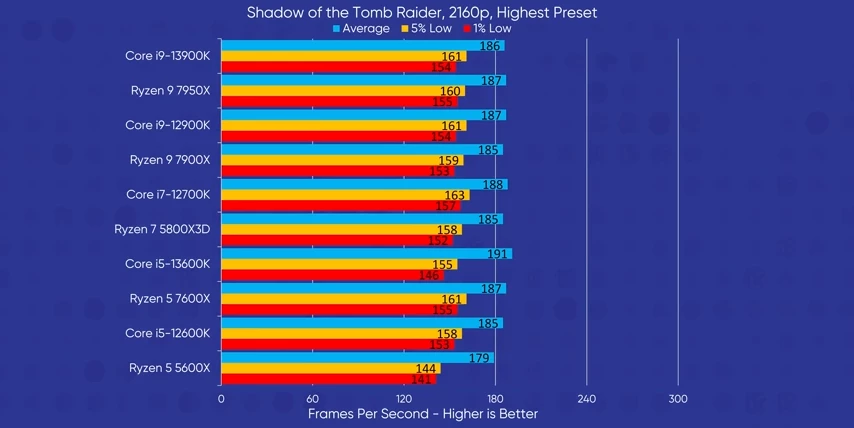

And at 4K, we hit a brick wall where the GPU is clearly bottlenecking, and all the CPUs are performing within margin of error.

The thing about 4K benchmarks...

This is actually something we've seen in all of our 4K games today, and in most of our 1440p tests too. So if you were wondering why we don't test CPUs at resolutions higher than 1080p, it's because results tend to be fuzzy at best and generally inconclusive. We could lower our graphics settings, but in the past we've shown that that reduces strain on memory and, consequently, the processor, which will ironically make the tests even more CPU bound. Anyway, let's get back to 1080p and stay there.
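The way CPU differences vanish at higher resolutions can be sketched with a deliberately simplified model in which each frame takes as long as the slower of the CPU and the GPU. The per-frame times and chip names below are hypothetical placeholders, not measurements from this review, and real games overlap CPU and GPU work in messier ways.

```python
def frame_rate(cpu_ms: float, gpu_ms: float) -> float:
    """Frames per second when the slower stage sets the pace (simplified model)."""
    return 1000.0 / max(cpu_ms, gpu_ms)


# Hypothetical per-frame costs in milliseconds.
cpu_ms_by_chip = {"fast_cpu": 4.0, "slow_cpu": 5.0}
gpu_ms_by_resolution = {"1080p": 3.0, "1440p": 5.0, "4K": 10.0}

for resolution, gpu_ms in gpu_ms_by_resolution.items():
    fps = {chip: frame_rate(cpu_ms, gpu_ms) for chip, cpu_ms in cpu_ms_by_chip.items()}
    gap = fps["fast_cpu"] - fps["slow_cpu"]
    print(f"{resolution}: {fps} (gap: {gap:.0f} FPS)")
```

In this toy model the two chips are 50 FPS apart at 1080p and identical once the GPU's frame time matches or exceeds the slower CPU's, which is the "brick wall" pattern described above.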

More Gaming benchmarks

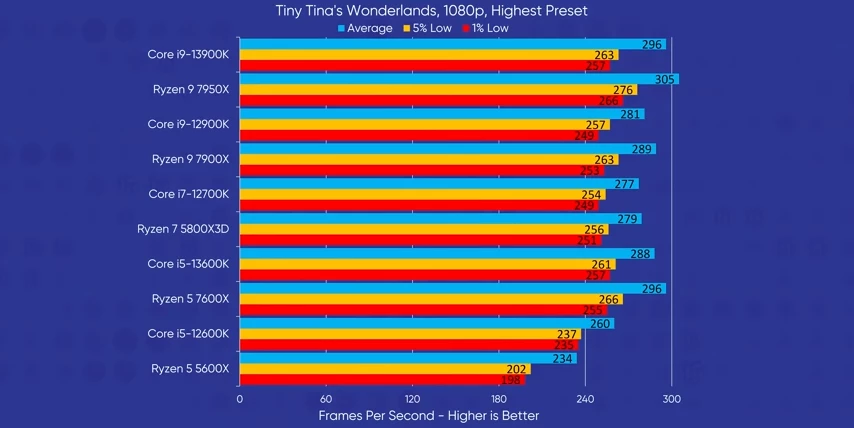

In "Tiny Tina's Wonderlands," we see a tight race with barely a 10% difference in frame rates between the 7950X and the last gen 12600K. Only the 5600X lags behind in any significant way.

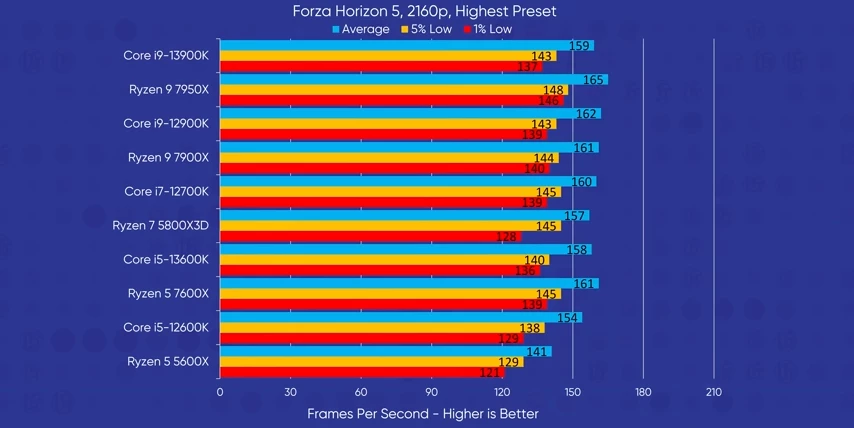

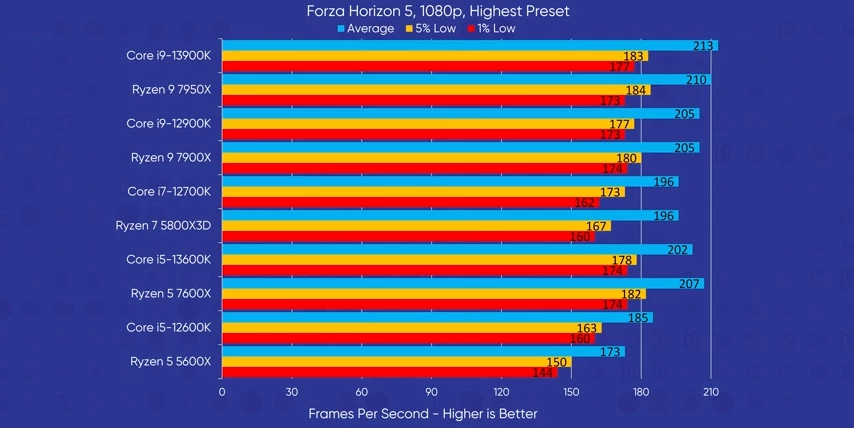

And it's nearly the same story when we look at "Forza Horizon 5," only narrow victories to be had there.

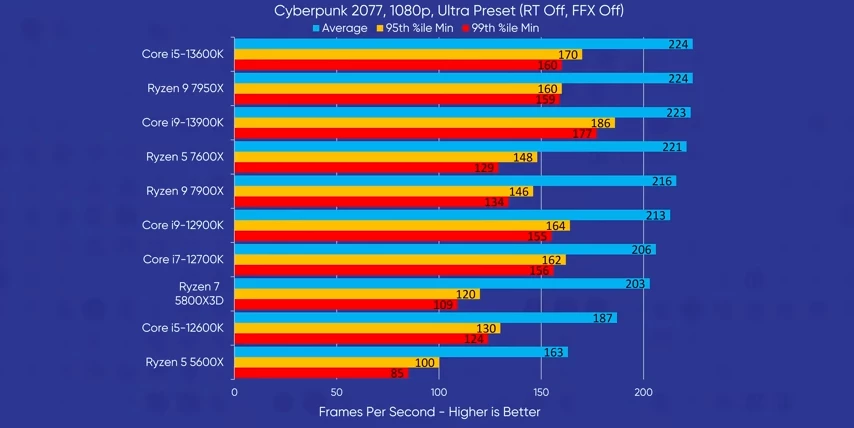

"Cyberpunk" though, it's a bit of a mess. Organizing our graph by average FPS puts the 13600K at the top, but when you look at the minimums, it's the 13900K that is the clear winner. In a few of our charts, we see the 7600X outperforming the higher clocked, cached, and cored, 7900X, and we think this is likely due to some AM5 teething issues.

"Cyberpunk" though, it's a bit of a mess. Organizing our graph by average FPS puts the 13600K at the top, but when you look at the minimums, it's the 13900K that is the clear winner. In a few of our charts, we see the 7600X outperforming the higher clocked, cached, and cored, 7900X, and we think this is likely due to some AM5 teething issues.

It appears that in some workloads, the chips with two CCDs may be underperforming. Now this was noted by Hardware Unboxed and "Cyberpunk" might be one of those workloads, I suppose? Maybe AMD will fix it, but that is in the future and we want performance now. So where do these results put us?

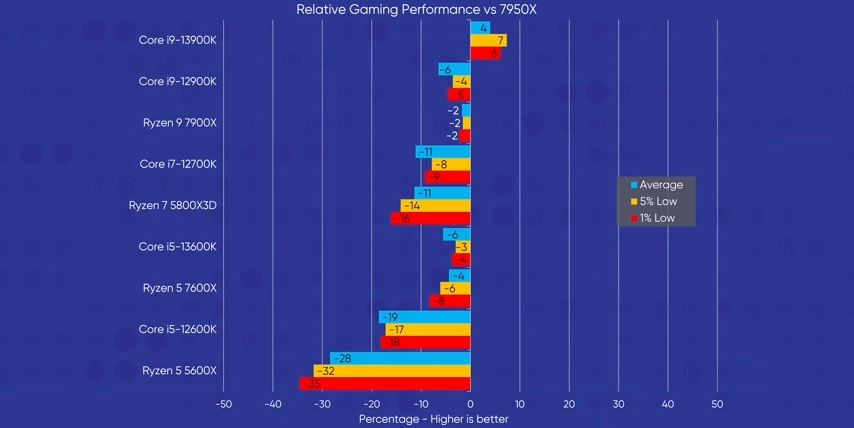

The gaming king is dead. Long live the gaming king!  Or short lived depending on your perspective. When we compare the average performance of all of our CPUs to the 7950X, we see the 13900K dominating with approximately 5% improved frame rates across the board. And the 13600K falls squarely in the as good as the 12900K category alongside the Ryzen 9s. And it beats out its price competitor, the 7600X, thanks to more stable performance.

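For anyone wanting to build a similar "relative to the 7950X" summary from their own numbers, one common approach is a geometric mean of per-game ratios, so that no single high-FPS title dominates. This is a sketch of that general technique, not a description of how the chart above was produced; the game names and frame rates are hypothetical placeholders.

```python
from math import prod


def relative_score(fps: dict[str, float], baseline: dict[str, float]) -> float:
    """Geometric mean of per-game FPS ratios against a baseline chip.

    A result of 1.05 means roughly 5% faster than the baseline overall.
    """
    ratios = [fps[game] / baseline[game] for game in baseline]
    return prod(ratios) ** (1 / len(ratios))


# Hypothetical numbers purely for illustration.
baseline_chip = {"game_a": 100.0, "game_b": 200.0}
other_chip = {"game_a": 110.0, "game_b": 200.0}

print(f"{relative_score(other_chip, baseline_chip):.3f}x the baseline")
```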

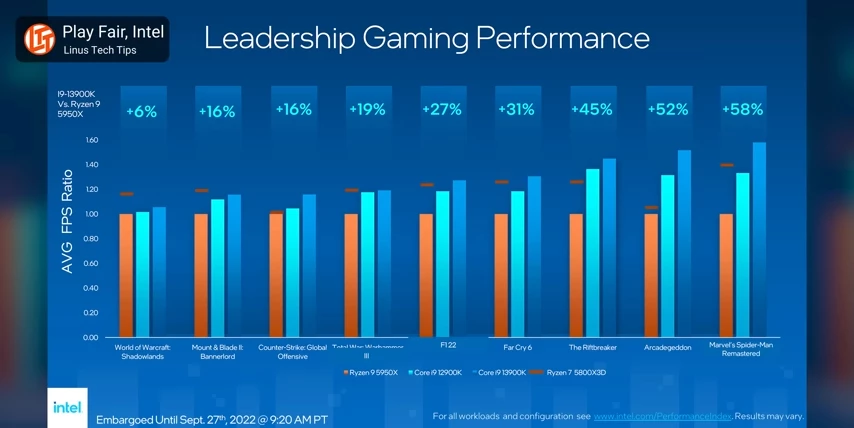

But look at this. What happened to everyone's favorite Ryzen 7 5800X3D?  It was so dominant in previous benchmarks that even Intel played coy in their performance graphs from their announcement. Well, we have a couple of theories here. One, the games we used for this review didn't benefit as much from the extra V-Cache. It's a possibility.

The 4090 changes everything!

Two, and this is the big one, like physically big, the RTX 4090. When we compare these numbers against the ones from our Ryzen 7000 review, we see that the 7000 series have much improved frame rates but the 5800X3D, it doesn't budge.

It appears that the extra GPU horsepower was what we needed to demonstrate the 5800X3D's upper limits. And the 5800X3D's fall from grace continues in our productivity benchmarks.

Productivity Benchmarks

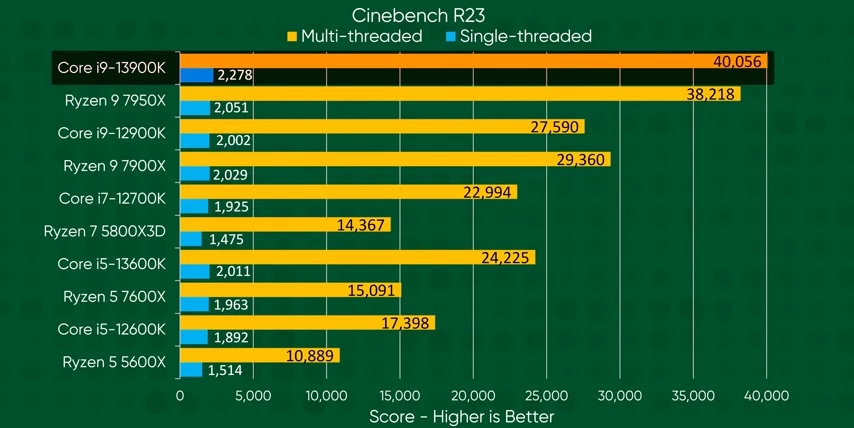

In Cinebench, the 13900K just cracks the 40,000 mark in multi-threaded, with a healthy lead over AMD across the board. The 13600K gets a lot out of its extra efficiency cores, showing up the competing mid-range CPUs by a wide margin and actually surpassing the 12700K despite its lower number of performance cores. And in single-threaded performance, the 13900K whips out nearly 2,300 points. That's 10% more than the 7950X. Meanwhile, the 13600K is not much faster than the 7600X, so it's leaning more on its E cores.

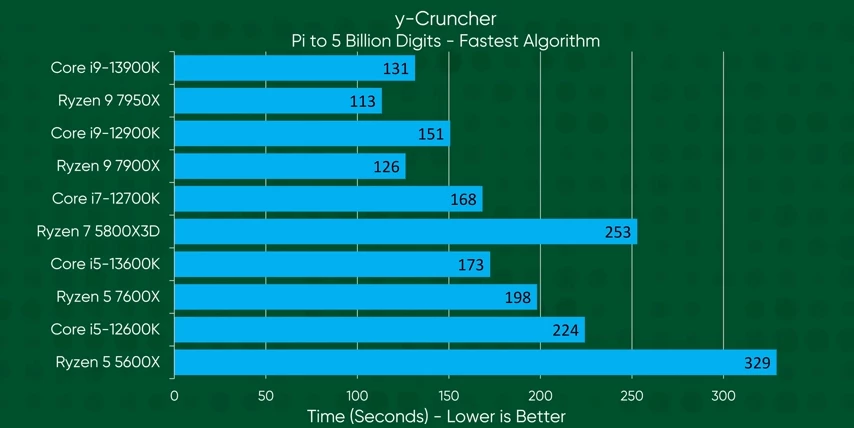

In y-Cruncher, which uses the fastest available algorithm for each chip, Intel actually takes an L by not supporting their own AVX-512 extensions.

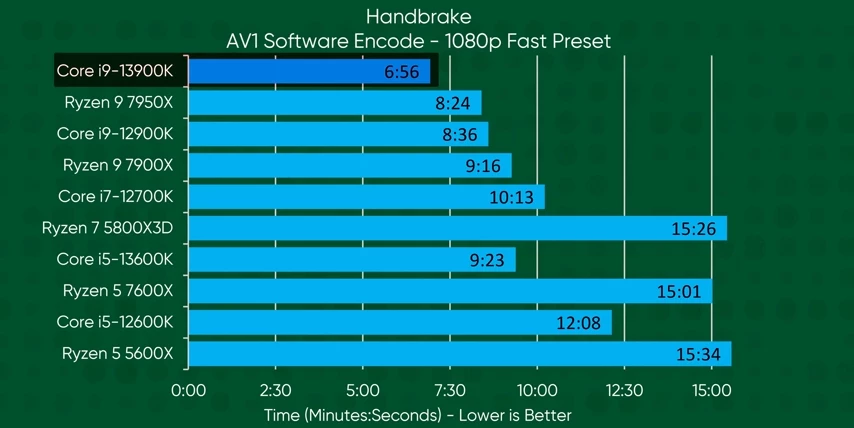

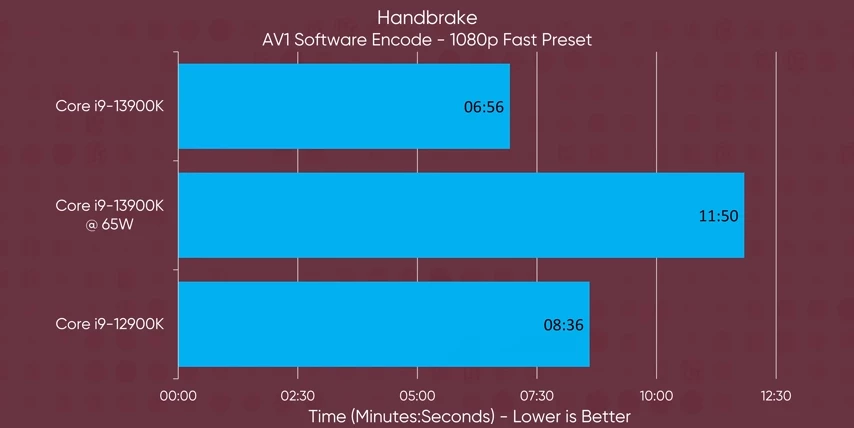

But they manage a win in the Handbrake encode, where the 13900K handily beats out the 7950X in the AV1 software encode. And the 13600K is no slouch either, beating out its price competitor, the 7600X, by 40% and breathing down the neck of the significantly more expensive 7900X.

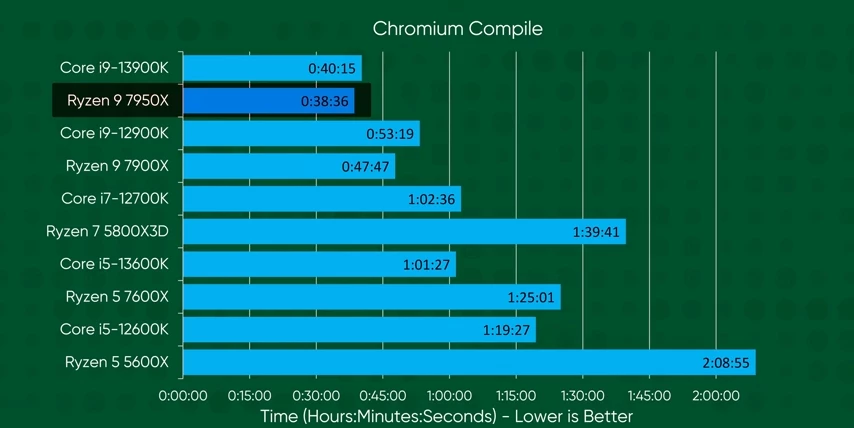

When compiling Chromium, the 7950X's abundance of full fat cores continues to win in such heavily parallelized workloads.

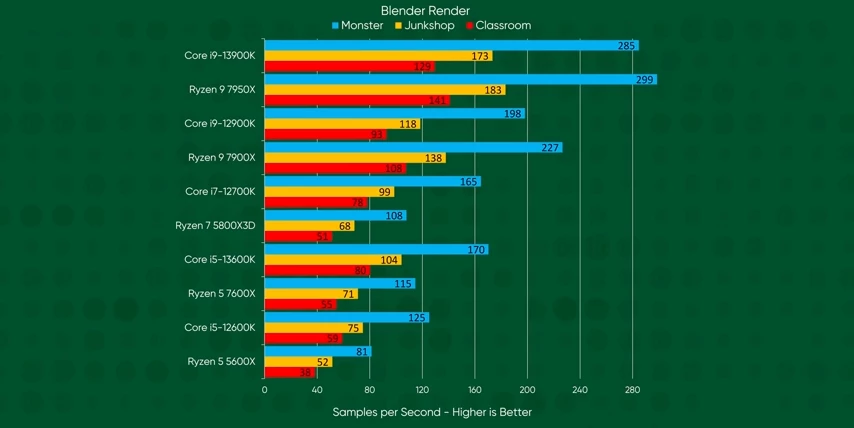

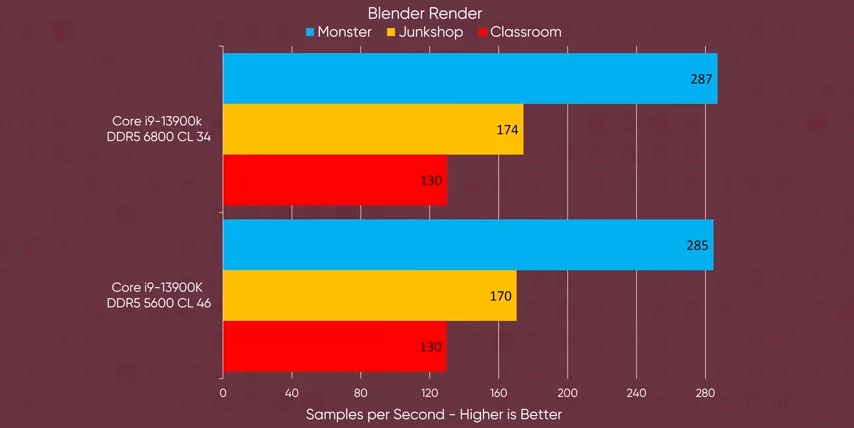

So its strong showing in Blender is, again, expected. But Intel is sure giving them a run for their money.

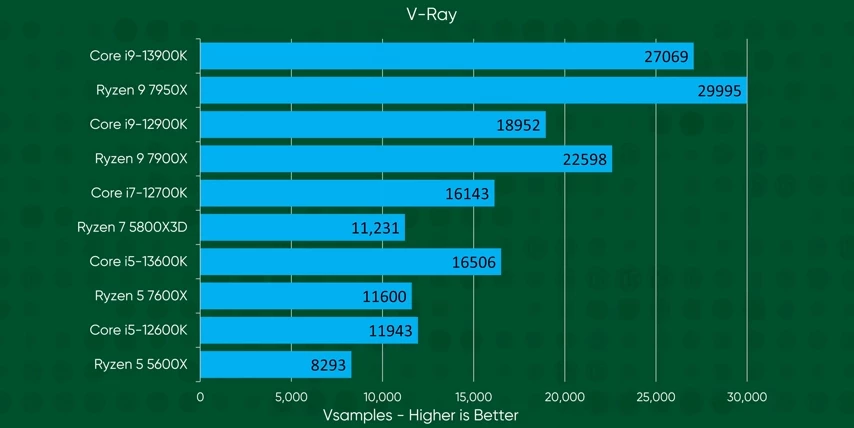

V-Ray again is still very much AMD's game, at least in the high end. The 13600K is making the 7600X look pretty irrelevant at this point.

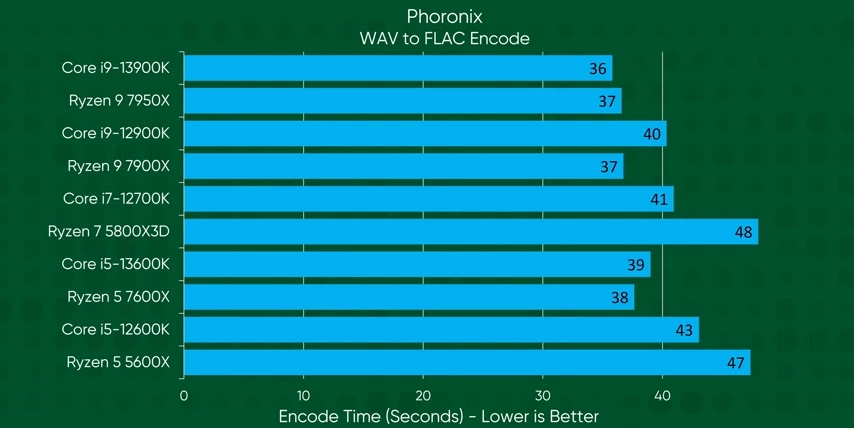

In the single threaded FLAC encode, AMD's really high clocks can only do so much against Intel's better instructions per clock.

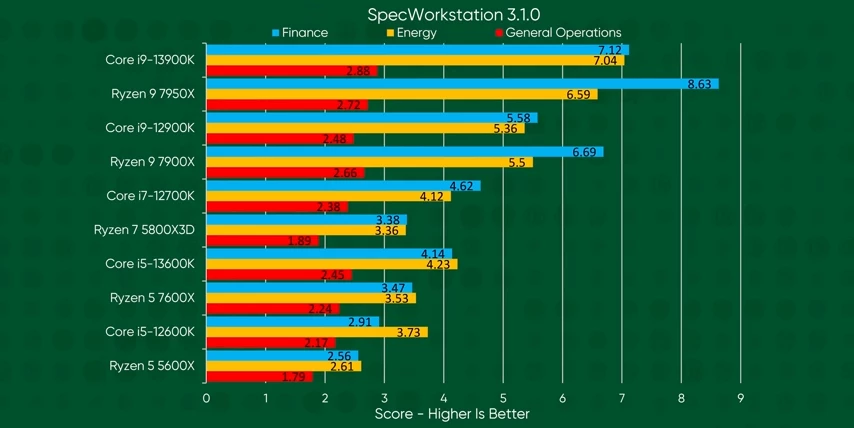

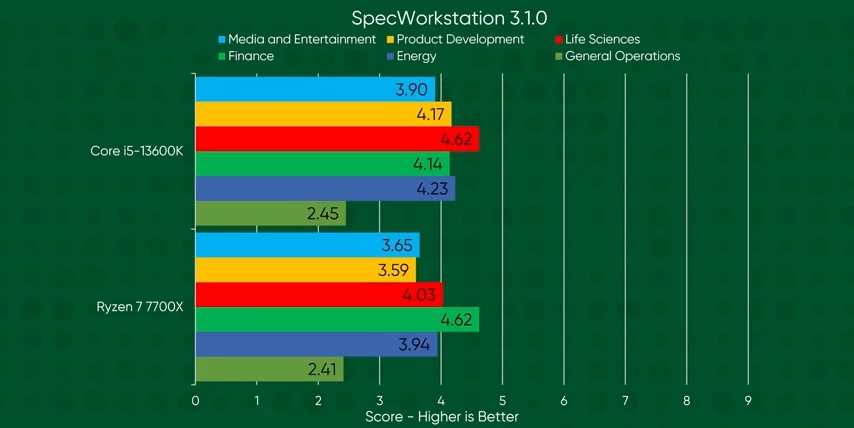

Moving on, in SpecWorkstation, the 13900K steals the crown in every situation except for finance. And while the 13600K can't keep up with the 12900K as well as it did in gaming, it still offers a lot more performance than the 7600X for just 20 extra dollars. It also beats the more expensive 7700X.

While we didn't test that CPU today, compared to the results from our Ryzen 7000 review, we see that the 7700X loses in nearly every category.

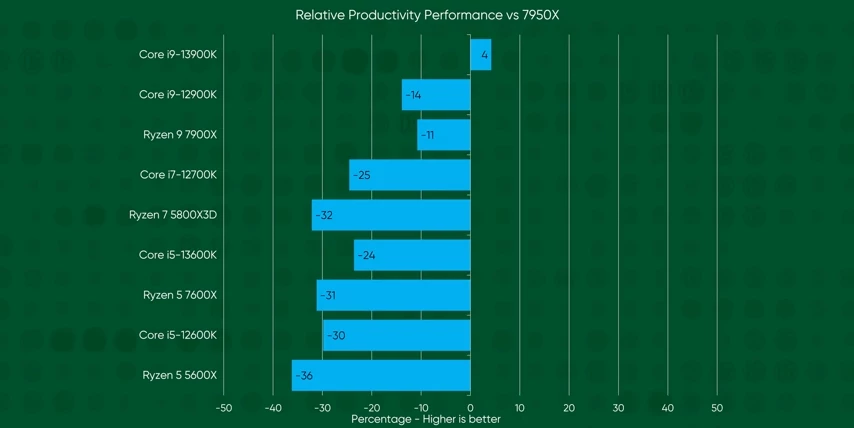

At the end of the workday, Intel wins again. They beat AMD's flagship. And while the victory is small, the difference in price is massive.

The 13900K undercuts the 7950X by $110, nearly 16%. And by providing 15% more performance at a mere $40 premium over the 7900X, it squashes that CPU at its current price point, taking both the performance and the value crown.
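The value math can be checked with a few lines of arithmetic. The prices below are assumptions chosen to be consistent with the gaps quoted in the text ($110 under the 7950X, $40 over the 7900X); the 15% performance lead is the figure given above, and the helper names are illustrative.

```python
# Assumed list prices in USD, consistent with the price gaps quoted in the text.
PRICE_7950X = 699
PRICE_13900K = 589
PRICE_7900X = 549


def percent_cheaper(reference: float, other: float) -> float:
    """How much cheaper `other` is than `reference`, as a percentage."""
    return (reference - other) / reference * 100


def value_ratio(perf_a: float, price_a: float, perf_b: float, price_b: float) -> float:
    """Performance per dollar of chip A divided by that of chip B."""
    return (perf_a / price_a) / (perf_b / price_b)


discount = percent_cheaper(PRICE_7950X, PRICE_13900K)
premium = PRICE_13900K - PRICE_7900X
# 1.15 reflects the "15% more performance" claim, with the 7900X normalized to 1.00.
ratio = value_ratio(1.15, PRICE_13900K, 1.00, PRICE_7900X)

print(f"13900K vs 7950X: {discount:.1f}% cheaper")
print(f"13900K vs 7900X: ${premium} premium, {ratio:.2f}x the performance per dollar")
```

Under these assumed prices, the 13900K still comes out ahead of the 7900X on performance per dollar even after paying the premium.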

What about RAM choice?

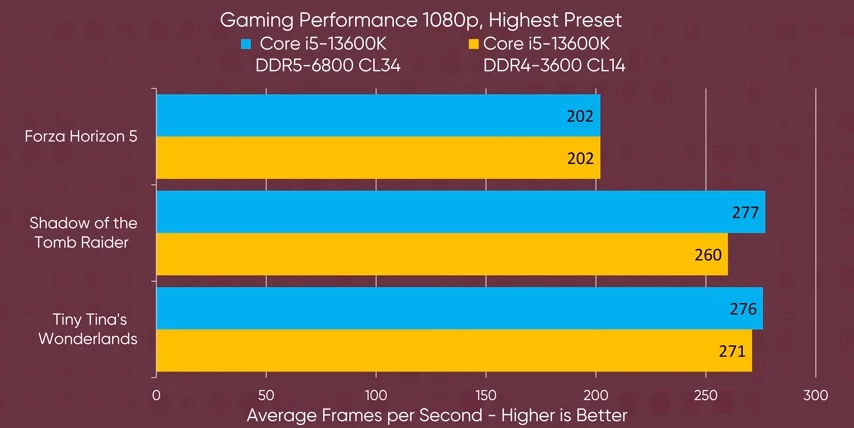

"You use expensive RAM, that's not fair. I only have cheap DDR4. Will these CPUs be any good for me?" To answer your rudely asked question, we ran some tests again on DDR4 and on cheap JEDEC-speed DDR5. Expect a deep dive regarding RAM speeds in the future. But for today, if you're on DDR4, our comparably fast kit on the 13600K in SpecWorkstation sees performance remain virtually unchanged in most CPU workloads. There were only a few notable drops. And in the couple of games we tested, we saw little to no difference.

Okay, well, what about slower DDR5?

Well, in the tests we ran on our 13900K, we typically saw performance drops of about 5% on the slower kit, if we saw anything at all.

Thermals & Power

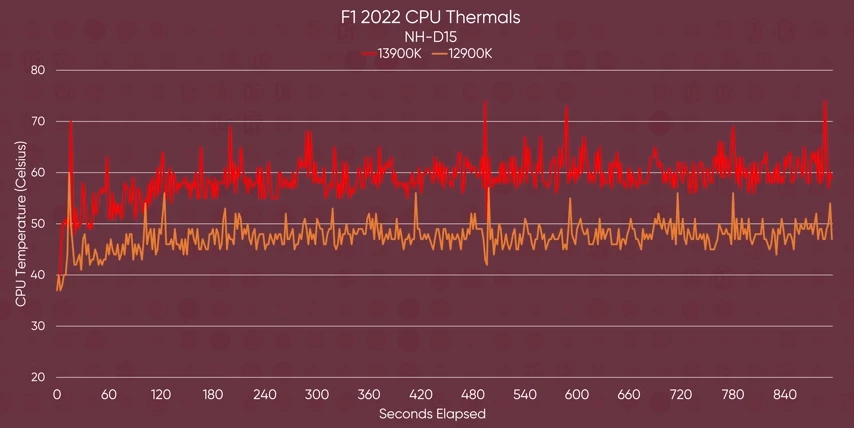

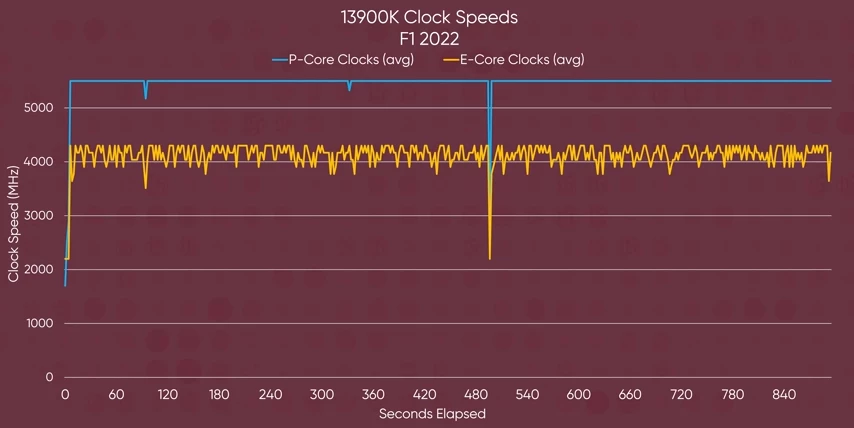

One thing I hoped to see was whether Intel has managed to get their heat and power issues in check. In our F1 thermal tests, we see the 13900K running about 10 degrees hotter than its last gen counterpart. But as AMD has shown us, if you have thermal headroom, why not use it and pin the performance cores to full boost, right?

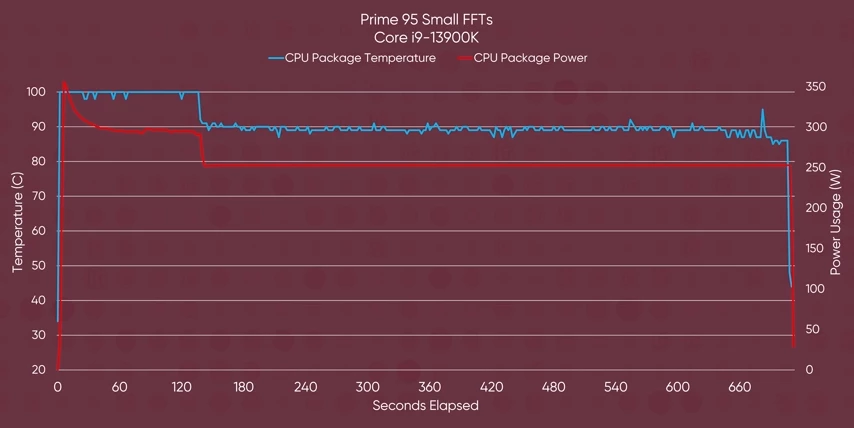

And in Prime95, the 250-watt CPU managed to spike as high as 350 watts.

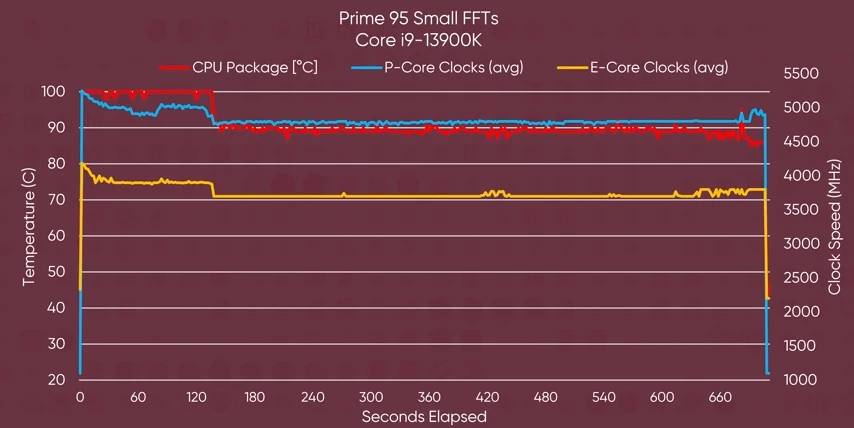

So you need to make sure that you get a beefy cooler. The NH-D15 from Noctua is the minimum that I would recommend. The 13900K skyrockets to 100 degrees Celsius before dialing back the clocks from their all-core boost target and settling in at about 90 degrees.

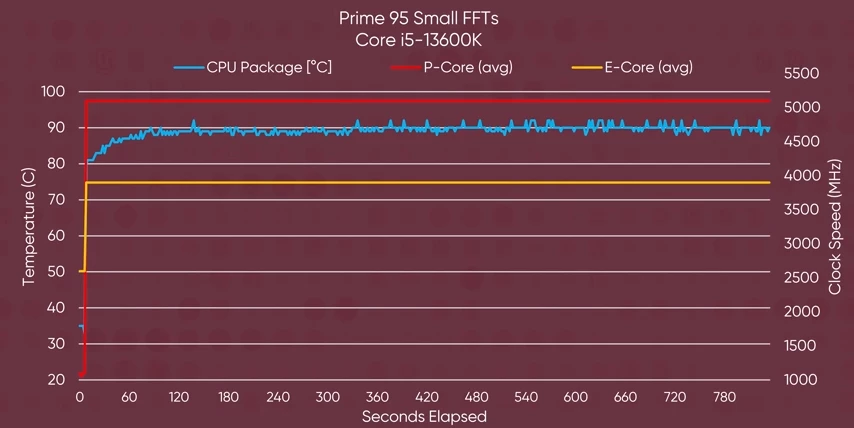

As for the 13600K, well, it's still managed well by the NH-D15, staying 10 degrees shy of throttling and keeping clocks pinned at max boost. It is pulling 60 more watts and running 15 degrees hotter than its last gen counterpart though. But this is a worst case scenario.

Intel's claims EXAMINED

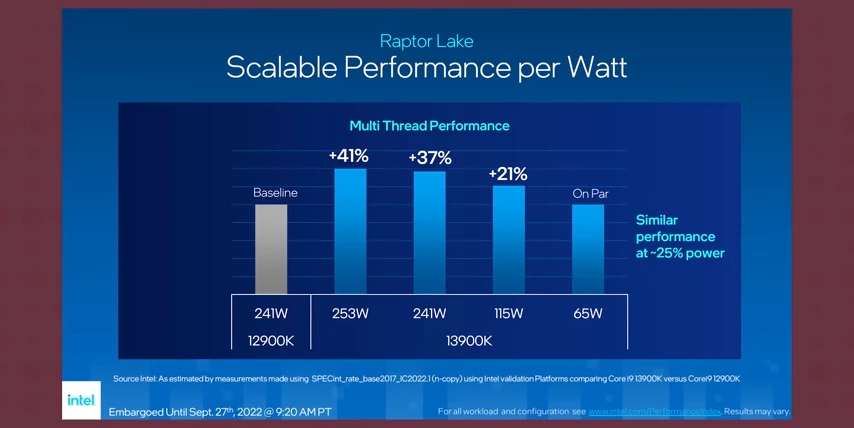

So they like power, but can they wield it responsibly? Intel claimed that when running the 13900K at 65 watts, we could expect performance that is on par with the 12900K at 241 watts. While the efficiency improvements they've made this gen are impressive, it seems that the bold claim, with its lengthy postscript, is not quite true. We saw 40% worse performance in Handbrake, and that's more of a double bogey than a par. But looking at SpecWorkstation, the improvements to efficiency are more in line with Intel's claims.
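Even the Handbrake miss still implies a sizable efficiency gain, which a quick back-of-the-envelope calculation makes clearer. This sketch treats the configured power limits (65 and 241 watts) as if they were the actual power drawn, which is an assumption; measured draw could differ, and the function name is illustrative.

```python
def perf_per_watt_ratio(perf_a: float, watts_a: float,
                        perf_b: float, watts_b: float) -> float:
    """Performance per watt of configuration A divided by that of configuration B."""
    return (perf_a / watts_a) / (perf_b / watts_b)


# Handbrake case from the text: 40% worse performance, so 0.60 of the
# 12900K's result, with the 12900K at 241 W normalized to 1.00.
handbrake = perf_per_watt_ratio(0.60, 65, 1.00, 241)

# Intel's claim taken at face value: equal performance at 65 W.
claimed = perf_per_watt_ratio(1.00, 65, 1.00, 241)

print(f"Handbrake result: {handbrake:.2f}x the performance per watt")
print(f"Intel's claim:    {claimed:.2f}x the performance per watt")
```

So under that assumption, falling 40% short of "on par" still works out to more than double the performance per watt, just well short of what the headline claim implies.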

Overall, Intel has managed to make some big improvements to their CPUs without increasing power budgets like AMD. Although in fairness, Intel's power budget was already, and still is, sky high. And they are doing better numbers at a better price. Remember the 13900K meets or beats the 7950X in almost all workloads for $110 less.

Now, I understand that a few of you might be feeling a bit sad with your already aging Ryzen 5 5600X, but remember these three things. At 1080p in most games, performance differences will be imperceptible because a lot of these games are already running faster than most monitors can refresh. And a lot of the performance differences in our charts are only exposed when the chips are run alongside a $1,600 GPU. As much as it feels bad, man, to see your three year old CPU getting bodied by these new chips, the year over year performance improvements that we are seeing, thanks to this renewed competition, they feel pretty damn good.
